Fiber Optical Testing Needs for the 5G Network Evolution

As network concepts and technologies develop for 5G, the corresponding test methods and processes will evolve to keep pace. Future 5G test methods will need to give operators high confidence that the technology and services are implemented according to specification and that the quality of service (QoS) matches the requirements of the application or service being delivered.

5G will be both an evolution and a revolution: an evolution as mobile evolves to support a wide range of new use cases, and a revolution as the architecture concept completely transforms to deliver the following:

- fast, highly efficient network infrastructure

- support for more device connections

- low latency and low power consumption

- data rates that exceed 10 Gbps.

5G includes the evolution of existing 4G networks that use technologies such as centralized radio access networks (C-RANs) and heterogeneous networks (HetNet) to increase the capacity of existing networks at an affordable cost. But a revolution in core architecture is on the way: full use of SDN/NFV and network "slicing," a new millimeter-wave-band air interface for higher capacity, and a new architecture and signaling for extremely low latency.

5G networks are expected to have speeds and bandwidth that match – or at least rival – wireline systems. To achieve that goal, new network design elements have been developed and there will be even greater integration of fiber optics into the fronthaul as well as the central unit (CU). Optical test systems have been developed to meet these expanded verification requirements and help optimize installation and operation of 5G networks so they meet specified key performance indicators (KPIs).

Fiber’s expanding role

Fiber has played a growing role in wireless networks, as expanding use cases have required greater network speed. That role will become even more prominent with 5G, which will operate unlike any other wireless network because of its stricter latency requirements, greater bandwidth, and higher transmission speeds. This evolution has meant new standards, designs, and testing requirements.

When it comes to 5G transport, 3GPP is developing standards based on identifying different types of traffic and dividing the traffic by application. 4G treated all traffic the same with respect to transport. In 5G, applications are prioritized based on bandwidth need. Streaming video for entertainment, Web surfing, and similar applications are given lower priority. For mission-critical applications, including industrial Internet, smart grids, remote surgery, and intelligent transportation systems, ultra-reliable low-latency communication (URLLC) is being used.

URLLC is part of 3GPP Release 15 and specifies 1-ms latency, which can only be attained when latency is kept low end to end. It suits mission-critical applications because of its security and its 99.999% reliability target. Because of these requirements, a different approach to system design and operations must be taken compared to 4G.
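To see why a 1-ms target constrains the transport network, it helps to estimate how much of the budget fiber propagation alone consumes. The sketch below assumes a group index of about 1.47 for standard single-mode fiber, which works out to roughly 4.9 µs per km one way. The function names and the 100-µs transport allowance are hypothetical values chosen for illustration, not figures from the 3GPP specifications.

```python
# Rough one-way propagation delay in optical fiber.
# Assumption: group index ~1.47 (typical single-mode fiber), not a spec value.
SPEED_OF_LIGHT_KM_PER_S = 299_792.458
FIBER_GROUP_INDEX = 1.47


def fiber_delay_us(length_km: float) -> float:
    """One-way propagation delay in microseconds for a fiber run."""
    return length_km * FIBER_GROUP_INDEX / SPEED_OF_LIGHT_KM_PER_S * 1e6


def max_fiber_km(budget_us: float) -> float:
    """Longest fiber run whose propagation delay fits within the budget."""
    return budget_us * SPEED_OF_LIGHT_KM_PER_S / (FIBER_GROUP_INDEX * 1e6)


if __name__ == "__main__":
    for km in (1, 10, 20):
        print(f"{km:>3} km -> {fiber_delay_us(km):6.1f} us one way")
    # Hypothetical allowance: 100 us of the end-to-end budget for transport.
    print(f"100 us budget -> {max_fiber_km(100):.1f} km of fiber")
```

Under these assumptions, a 100-µs allowance is used up by roughly 20 km of fiber before any switching or processing delay is counted, which is why one-way latency has to be measured rather than assumed.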

The physical layer design is daunting because URLLC must satisfy both low latency and ultra-high reliability. This combination creates a considerably different type of QoS goal compared to traditional mobile broadband applications. Therefore, enhanced mobile broadband (eMBB) services are integrated with URLLC to establish a single 5G air interface framework.

Open standards for agnostic design

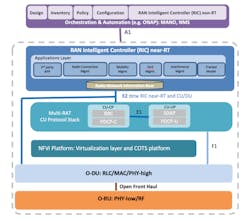

Mobile operators are driving an initiative to establish standards via the Open Radio Access Network Alliance (O-RAN) to evolve the fronthaul to satisfy the demands of 5G. O-RAN is an open standard designed to replace CPRI, which is a 4G specification developed by equipment manufacturers. Figure 1 shows the O-RAN architecture.

The O-RAN standard includes Open Fronthaul Specifications composed of control, user, synchronization, and management plane protocols. User planes will treat traffic based on the O-RAN protocol, so all the network equipment is compatible. Most operators and OEMs are committed to adopting the fronthaul specifications agreed to by the Alliance and are introducing O-RAN-compliant products in commercial 5G networks.

IEEE also developed two transport network standards for 5G networks. IEEE 1914.1 covers architecture and divides the fronthaul into two Next Generation Fronthaul Interface (NGFI), or xhaul, elements to prioritize traffic. The standard divides the 5G base station into two logical segments, the central unit (CU) and the distributed unit (DU). The DU performs the heavy processing of the data. Once that is complete, the data is sent to the radio unit (RU) for transmission, with priority given based on use case and mission-critical nature.

The other is IEEE 1914.3, which establishes the Radio over Ethernet (RoE) standard. It specifies a transport protocol and encapsulation format for carrying time-sensitive wireless data between two endpoints over Ethernet-based transport networks. RoE was developed so networks can make the necessary transition from CPRI to Ethernet. It is preferred over eCPRI, even though the latter provides a faster path for 5G deployment and utilization. eCPRI raises the same concern as CPRI in that it is a specification developed by equipment manufacturers, not an open industry standard. IEEE 1914.3 will be compliant with the O-RAN standard.

Optical testing in 5G networks

All these new standards and design considerations make verifying the performance of 5G networks a greater challenge. Testing takes on greater importance because of these variables, as well as the performance specifications associated with next-generation networks. Below are some important capabilities in field instruments as they relate to optical testing:

Bandwidth – Everyone knows 5G increases network bandwidth tremendously. With 4G, it was 10 Gbps, but it will be 25 Gbps per radio or sector with 5G. The transmission jump will increase aggregate data transmitted and received to a tower by 10X compared to 4G. For this reason, field transport testers need to measure high data rates to support this added bandwidth capability.

Data slicing – The functional split of 5G traffic by application adds another verification step field technicians must make on networks. Field instruments need slicing test capability to accurately measure the difference between low and high latencies.

Packet-based testing – Given the 5G standards and protocols that will split and prioritize data transmissions based upon their mission-critical nature, packet-based testing is a priority. Most field technicians are not experienced in this area, so simple profiles must be integrated into the test instrument. Such instruments should also let technicians repeat tests in the field and obtain repeatable measurements that can be checked against 3GPP-based latency and timing thresholds.
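The idea of a simple pass/fail profile can be sketched as follows. This is a minimal illustration, not how any particular instrument works: the class names, field names, and threshold values are all hypothetical, and a real profile would take its limits from the operator's requirements and the relevant 3GPP figures.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class LatencyProfile:
    """Hypothetical pass/fail limits for one traffic class."""
    name: str
    max_latency_us: float
    max_frame_loss_ratio: float


@dataclass(frozen=True)
class Measurement:
    """Hypothetical result of one packet-based test run."""
    latency_us: float
    frames_sent: int
    frames_lost: int


def evaluate(profile: LatencyProfile, result: Measurement) -> List[str]:
    """Return the list of failed checks; an empty list means the run passed."""
    # Treat a run with no frames sent as total loss rather than dividing by zero.
    loss = result.frames_lost / result.frames_sent if result.frames_sent else 1.0
    failures = []
    if result.latency_us > profile.max_latency_us:
        failures.append("latency")
    if loss > profile.max_frame_loss_ratio:
        failures.append("frame loss")
    return failures


if __name__ == "__main__":
    # Illustrative limits only: 100 us latency, 1e-5 loss (99.999% delivery).
    critical = LatencyProfile("mission-critical", 100.0, 1e-5)
    print(evaluate(critical, Measurement(85.0, 1_000_000, 5)))
    print(evaluate(critical, Measurement(130.0, 1_000_000, 20)))
```

Keeping the limits in a named profile means the technician selects a traffic class and reads a pass/fail result, and the same profile can be rerun later to confirm a measurement is repeatable.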

Network synchronization – 4G networks are synched via cost-effective GPS, because LTE has favorable line of sight. The 5G approach is to rely on Ethernet and the Precision Time Protocol (PTP) grandmaster clock, as defined by IEEE 1588, to distribute timing through the fiber to the nodes. Time-sensitive Ethernet networks will be used where GPS is not available or as a backup when GPS fails or is jammed.

There are two challenges associated with this approach. As a signal passes through each network element, the timing slips slightly. If there are too many elements, a timing error will occur. Phase error is another issue. Operators and network engineers need to determine the maximum number of elements that provides coverage without adversely affecting timing.
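A first-order way to reason about the maximum number of elements is a worst-case budget in which each element adds a fixed amount of time error. The sketch below assumes simple linear accumulation, which is pessimistic compared with real networks, and every number in it (the 1,500-ns end-to-end budget, the 100-ns grandmaster share, the 150-ns end-device share, and the per-element contribution) is an illustrative assumption rather than a value quoted from a standard.

```python
def max_network_elements(
    budget_ns: int,
    grandmaster_ns: int,
    end_device_ns: int,
    per_element_ns: int,
) -> int:
    """Largest number of elements that fits a worst-case time error budget.

    Assumes each element contributes the same fixed error and that the
    errors add linearly (a deliberately pessimistic, hypothetical model).
    """
    if per_element_ns <= 0:
        raise ValueError("per_element_ns must be positive")
    # Budget left for the chain after the fixed contributions at each end.
    remaining = budget_ns - grandmaster_ns - end_device_ns
    if remaining < 0:
        return 0
    return remaining // per_element_ns


if __name__ == "__main__":
    # All values below are illustrative assumptions.
    for per_element in (50, 70):
        hops = max_network_elements(1500, 100, 150, per_element)
        print(f"{per_element} ns per element -> up to {hops} elements")
```

With these assumed figures, 50 ns per element allows up to 25 elements and 70 ns allows 17, which shows how sensitive the permissible chain length is to the timing quality of each element.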

To address this issue, two profiles were developed. The PTP G.8275.2 enhanced profile meets the synchronization requirements of technologies such as LTE-TDD, LTE-A CoMP, and LTE-MBSFN. The G.8275.1 profile is used in networks where phase or time-of-day synchronization is required and where each network device participates in the PTP.

Because a boundary clock is used at every node in the chain between PTP grandmaster clock and PTP slave elements, there is a reduction in time error accumulation through the network.

Test instruments must measure the element threshold based upon these standards, or performance issues, including dropped calls and outages, will eventually occur. Many of these problems will not directly point to poor synchronization, which makes troubleshooting long, expensive, and frustrating. Therefore, field technicians need a PTP-aware test set that can connect to the grandmaster clock to determine the nodes that are causing slips and fails. To conduct the most accurate timing error analysis, the test system should be able to measure timing error within +/-20 ms.
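Locating the node that causes slips comes down to comparing the time error measured at each point in the chain against a limit. The following is a minimal sketch of that comparison, assuming time error samples in nanoseconds have already been collected at each node. The node names, sample values, and 100-ns limit are hypothetical, and the function names are not taken from any instrument's interface.

```python
from statistics import mean
from typing import Dict, List, Optional, Sequence, Tuple


def analyze_time_error(samples_ns: Sequence[float], limit_ns: float) -> Dict[str, object]:
    """Summarize time error samples: worst-case magnitude, average, pass/fail."""
    max_abs = max(abs(s) for s in samples_ns)
    return {
        "max_abs_te_ns": max_abs,
        "mean_te_ns": mean(samples_ns),
        "passed": max_abs <= limit_ns,
    }


def first_failing_node(
    chain: List[Tuple[str, Sequence[float]]], limit_ns: float
) -> Optional[str]:
    """Walk the chain from the grandmaster outward; return the first node over the limit."""
    for name, samples in chain:
        if not analyze_time_error(samples, limit_ns)["passed"]:
            return name
    return None


if __name__ == "__main__":
    # Hypothetical measurements, ordered from the grandmaster outward.
    chain = [
        ("node-1", [12, -8, 15]),
        ("node-2", [40, 55, -30]),
        ("node-3", [120, -140, 95]),
    ]
    print(first_failing_node(chain, limit_ns=100))
```

Because the chain is checked in order from the grandmaster, the first node reported is the earliest point where the accumulated error exceeds the limit, which narrows down where to start troubleshooting.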

eCPRI/IEEE 1914.3 (RoE) – For time and cost efficiencies, both eCPRI and RoE measurements should be made with a single instrument. Some instruments have a dual-port 25G eCPRI/RoE function that streamlines testing by combining efficient signal generation and analysis with precise one-way latency measurement of transport networks. This ability provides support for implementing URLLC.

Conclusion

Fiber networks and the testing of fiber optics will play significant roles in the evolution of 5G. 5G networks require multiple new design elements and standards to achieve the latency, speed, and bandwidth expected by the next-generation network, as well as meet the needs of many mission-critical use cases. Because of this, transport testing has become more robust and complicated. Field test tools must address the standards and measurement requirements in a simple and efficient manner for optimal deployment, installation, and operation of 5G networks to achieve KPIs.

Daniel Gonzalez is a business development manager for Anritsu Co. He possesses more than 17 years’ experience in digital and optical transport testing, development, training, and execution spanning technologies including TDM, SONET, OTN, ATM, Carrier Ethernet and physical layer signal integrity. In his current role, he is responsible for providing technical support to sales, marketing and customers in North and South America.

About the Author

Daniel Gonzalez

Business Development Manager

As a Business Development Manager for Anritsu Company, Daniel is responsible for providing technical support to sales, marketing and customers in North & South America. Daniel holds a B. S. in Telecommunications Management from DeVry University, is a member of OIF (Optical Internetworking Forum) Networking and Operations Working Group (WG), IEEE Communications Society (ComSoc) and Ethernet Alliance, and holds a Personal Certification for MEF (Metro Ethernet Forum) Carrier Ethernet 2.0. He also has several of his articles published in Lightwave, ISE Magazine, Mission Critical and Pipeline publications.