Applying 'fat pipes' for public-networking systems

Several trends will influence the best solution, depending on the point in time, to provide optimum cost, power, and density.

PETE WIDDOES, W.L. Gore & Associates

The widespread acceptance and extensive use of applications requiring significant data-networking capabilities have driven the development of many interesting enabling technologies over the past 10 years. Of particular interest is new technology for intrasystem cabling. Parallel optic pipes are already replacing parallel copper pipes in new system designs, and future systems will require larger and larger pipes.

The explosion of the Internet and easy money for the startup service providers led to the well-known public-network bandwidth race of the late 1990s. Prior to the mid-'90s, public-network traffic, driven primarily by voice circuit growth, was increasing at a predictable rate of 5-10% per year overall. Industry experts generally agree that Internet traffic equaled other public-network traffic sometime in the last two to three years.

From here on, any significant growth in Internet traffic will also mean significant growth in total public-network traffic. The traffic growth for the next few years is a hotly debated topic and will be closely watched, because it will define a notable part of the market opportunity for both equipment and component vendors.

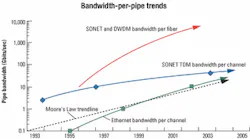

The capacity of optical pipes handling this traffic from system to system (and city to city) has increased significantly over the last decade, resulting in a considerable cost-per-bit decrease. Looking forward, the cost per bit must continue to drop dramatically, because service-provider revenues will grow far more slowly than the traffic required to generate them.

Figure 1 shows that the bandwidth per channel of the native SONET data streams has not grown as fast as Moore's Law, which states that the number of transistors on an IC will double about every 18 months. That occurred because it was more cost-effective to increase bandwidth by adding optical channels using DWDM technology than by increasing the data rate per channel. With the inclusion of DWDM capability, the combined capacity increase has more than doubled every 18 months.
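To put the Figure 1 comparison in numbers, the short sketch below converts an 18-month doubling period into an annual growth rate and a ten-year multiple, and shows how multiplying channels with DWDM outpaces raising the per-channel rate. The 2.5-to-10-Gbit/sec step is the OC-48 to OC-192 progression; the 40-wavelength DWDM count is a hypothetical figure chosen only for illustration.

```python
def growth_factor(years: float, doubling_months: float = 18.0) -> float:
    """Capacity multiple after `years` if capacity doubles every `doubling_months`."""
    return 2 ** (years * 12.0 / doubling_months)


def annual_growth_rate(doubling_months: float = 18.0) -> float:
    """Equivalent compound annual growth rate for a given doubling period."""
    return growth_factor(1.0, doubling_months) - 1.0


def fiber_capacity_gbps(rate_per_channel_gbps: float, wavelengths: int) -> float:
    """Total capacity of one fiber carrying `wavelengths` DWDM channels."""
    return rate_per_channel_gbps * wavelengths


print(f"18-month doubling ~ {annual_growth_rate():.0%} per year")
print(f"over 10 years: x{growth_factor(10):.0f}")

# Hypothetical comparison: one 2.5-Gbit/sec channel versus a fiber carrying
# 40 DWDM wavelengths at 10 Gbit/sec each (the 40 is an assumption).
single = fiber_capacity_gbps(2.5, 1)
dwdm = fiber_capacity_gbps(10.0, 40)
print(f"per-channel gain x{10.0 / 2.5:.0f}, per-fiber gain x{dwdm / single:.0f}")
```

The per-channel rate improves only fourfold in this example, while the per-fiber capacity grows by two orders of magnitude because the channel count multiplies it.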

Networking systems

The equipment needed to transport and manage the high-bandwidth traffic through the public network includes edge and core switch/routers, digital and optical crossconnects, SONET optical edge devices, and DWDM/optical add/drop multiplexer (OADM) systems. Figure 2 illustrates the growth in bandwidth of an input/output (I/O) shelf used in switch-routers designed for the core of the network from the early '90s to now. These systems should scale at roughly the same rate as the traffic growth in the network. If they don't, service providers must greatly increase the number of elements in their networks, which makes the networks increasingly difficult and expensive to manage.

Besides the obvious advantage of size, smaller generally means fewer components, which typically translates into better reliability, reduced cost per bit, and lower power. To accomplish that, designers need to use emerging next-generation components to get the maximum available bandwidth density.

The challenge designers face with one- to three-year system development cycles is to correctly guess which components and which vendors will actually possess production volume, quality, and reliability capabilities in time for system qualification. This challenge represents one of the most important issues in system development, because a large revenue stream for the equipment vendor could be delayed, or even lost, if reliable high-quality components aren't available in the required quantities when needed.

Network system architectures generally consist of multiple equipment shelves with a centralized or distributed switch-fabric topology. In either topology, fat pipes are needed to interconnect the shelves. Digital crossconnects, SONET ADMs, and early ATM switches have used copper cabling for years for this purpose.

However, the current bandwidths required severely limit the distances feasible with copper. More channels at lower data rates could be used, but this solution causes a density problem. The "fat pipes" (very-high-bandwidth pipes for very short distances) designed into these systems should offer the proper balance between the "latest technology" and an acceptable risk of availability, quality, and reliability.

Fat-pipe requirements

Although many features of fat pipes are important to specific applications, almost all system designers look for the following attributes: bandwidth density, power, cost, product reliability, multisourcing, link performance, maximum distance capability, and bandwidth granularity.

Choosing a data rate per channel that matches the most cost-effective semiconductor technology of the day is particularly important, since these critical electronic elements typically occur up to three times in the total fat-pipe link: in the basic application-specific ICs in the system, in the serializer/deserializer feeding the traffic to the optoelectronics, and in the driver and receiver circuits inside the optoelectronic modules.

Picking a data rate per channel lower than the current cost-effective rate will result in sub-optimal bandwidth density, power, and cost per bit. Picking a data rate higher than the cost-effective one will likely optimize bandwidth density at the expense of higher power and cost per bit.

Another way to visualize these issues is to recognize that the best solution for a fat pipe of any given bandwidth depends on the year in which it must deliver optimum cost, power, and density. Different solutions for a 10-Gbit/sec fat pipe illustrate the effect of time. For instance, in 1999-2001 the best solution was a 12 x 1.25-Gbit/sec parallel optical link; in 2002-04, the best solution will be a 4 + 4 x 2.5-Gbit/sec parallel optical transceiver-style link; and from about 2005 on, the best solution will probably be a 10-Gbit/sec serial link. Each of these solutions takes advantage of the lowest-cost-per-bit semiconductors available at its time.
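The three generations of 10-Gbit/sec fat pipe can be compared with simple lane arithmetic. The sketch below is a minimal illustration, assuming each option is described only by its lanes per direction and raw line rate per lane; it ignores line-coding overhead, which in practice may account for some of the headroom in the 12 x 1.25-Gbit/sec option.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FatPipe:
    name: str
    lanes_per_direction: int  # optical channels carrying traffic one way
    gbps_per_lane: float      # raw line rate per channel

    @property
    def aggregate_gbps(self) -> float:
        # Raw one-direction capacity; line-coding overhead is not modeled.
        return self.lanes_per_direction * self.gbps_per_lane


OPTIONS = [
    FatPipe("1999-2001: 12 x 1.25-Gbit/sec parallel", 12, 1.25),
    FatPipe("2002-04: 4 + 4 x 2.5-Gbit/sec transceiver", 4, 2.5),
    FatPipe("2005 on: 10-Gbit/sec serial", 1, 10.0),
]

TARGET_GBPS = 10.0
for p in OPTIONS:
    meets = p.aggregate_gbps >= TARGET_GBPS
    print(f"{p.name}: {p.lanes_per_direction} lane(s), "
          f"{p.aggregate_gbps:g} Gbit/sec, meets target: {meets}")
```

Every option reaches the same target; what changes from one period to the next is how many lanes the electronics and optics must provide, which is why the cost-effective per-lane rate drives the choice.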

Power, reliability, and cost are best evaluated on a "per bit" basis, because different approaches can accomplish the same fat-pipe requirements as discussed above (e.g., lower data rates and more channels versus "faster and narrower"). Bandwidth density is the primary product benefit provided by fat pipes. Would system designers always like more bandwidth density? Probably, but at a certain level, bandwidth density is adequate, and attaining more bandwidth density would adversely affect some other product attribute like power, reliability, or cost.
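A "per bit" evaluation simply normalizes each attribute by the capacity delivered. The sketch below compares two ways of building the same capacity; all power, cost, and FIT numbers are hypothetical values invented for illustration, not vendor data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LinkOption:
    name: str
    capacity_gbps: float  # aggregate capacity delivered by the option
    power_w: float        # total power of all modules in the option
    cost_usd: float       # total module cost
    fit: float            # summed failure rate, failures per 1e9 device-hours

    def per_gbps(self) -> dict:
        """Normalize each attribute by delivered capacity (the "per bit" view)."""
        return {
            "mW/Gbps": 1000 * self.power_w / self.capacity_gbps,
            "USD/Gbps": self.cost_usd / self.capacity_gbps,
            "FIT/Gbps": self.fit / self.capacity_gbps,
        }


# Hypothetical figures for illustration only.
wide_slow = LinkOption("more channels, lower rate", 30.0, 6.0, 1500.0, 600.0)
narrow_fast = LinkOption("fewer channels, higher rate", 30.0, 7.5, 1200.0, 450.0)

for opt in (wide_slow, narrow_fast):
    print(opt.name, {k: round(v, 1) for k, v in opt.per_gbps().items()})
```

With these made-up numbers, neither option wins on every metric, which is exactly why the trade-off has to be made explicitly rather than by comparing raw module specs.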

Public-networking equipment must support very high levels of network reliability. Guaranteed service-level agreements commonly specify five-nines (99.999%) availability, which means the circuit can be unavailable for only about five minutes each year. While designers make considerable investment in redundancy, both in the network and within each piece of equipment, they give component reliability an extremely high priority.

In optical fat pipes, the laser is generally the element with the highest FIT rate (failures in time, counted per billion device-hours). Because vertical-cavity surface-emitting-laser (VCSEL) technology is still in its early years of commercialization, considerable progress can still be expected. Not all VCSEL manufacturers have a comprehensive understanding of their laser's failure mechanisms and how their design affects these mechanisms.
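The five-nines figure and the FIT-rate discussion both reduce to short calculations. The sketch below converts availability into downtime per year and sums FIT rates for parts in series (1 FIT is one failure per 10^9 device-hours), assuming a constant failure rate so that MTBF is simply 10^9 divided by the total FIT. The per-part FIT values are hypothetical and merely chosen so the laser dominates, as the text describes.

```python
MINUTES_PER_YEAR = 365.25 * 24 * 60  # 525,960 minutes


def downtime_minutes_per_year(availability: float) -> float:
    """Expected unavailable minutes per year for a given availability."""
    return (1.0 - availability) * MINUTES_PER_YEAR


def series_fit(*fits: float) -> float:
    # Components in series: any single failure takes the link down,
    # so their failure rates (in FIT) add.
    return sum(fits)


def mtbf_hours(fit: float) -> float:
    # Constant-failure-rate assumption: 1 FIT = 1 failure per 1e9 device-hours.
    return 1e9 / fit


print(f"five nines: {downtime_minutes_per_year(0.99999):.2f} min/yr")

# Hypothetical FIT budget for one optical lane: laser, driver, receiver, other.
link_fit = series_fit(50.0, 10.0, 10.0, 5.0)
print(f"link: {link_fit:g} FIT, MTBF ~ {mtbf_hours(link_fit):,.0f} h")
```

Because series FIT rates add, the highest-FIT part (here the laser) sets most of the budget, which is why VCSEL reliability data deserves the closest scrutiny.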

Acceptable levels of reliability have been achieved through careful treatment of the VCSEL and thorough data analysis during the manufacturing processes. System development teams need to explore these issues along with the typical reliability data set provided by their vendors to ensure the components will meet reliability expectations.

Because OEMs must hit their target release dates, an adequate supply of components must be available from the system qualification phase and beyond. While designers and purchasers would like the components to be covered by a standard, intrasystem link requirements are usually too varied to be met by a single standardized product. Consequently, fat pipes used for intrasystem links have typically been proprietary, semi-custom designs that allow the designer to incorporate the very latest technology. This scheme worked reasonably well with copper-cable assemblies, because the technology and vendor base were fairly mature.

Parallel optics is a relatively new technology, so designers have adopted more "standard" configurations to minimize the risk associated with a vendor's ability to meet high-volume supply with sufficient quality and reliability. Component vendors have responded by joining together to develop multisource agreements (MSAs) quickly enough to provide designers with the latest technologies in a quasi-standardized format.

MSAs should represent "available" (or at least "soon to be available") products and should be supported by credible vendors with the capability, interest, and financing to ensure product availability through the life of the system. As with all new technologies, a vendor may experience manufacturing "hiccups" that temporarily interrupt the supply of acceptable product. For this reason, the system development team should test for interoperability between the MSA vendors' products, and the purchasing department should buy from at least two sources.

Although numerous metrics contribute to evaluating optical link performance, such as random and deterministic jitter, optical power, extinction ratio, receiver sensitivity, and many more, the ultimate performance goal is to achieve the required bit-error rate (BER) for the total link under real-life usage conditions. Optical fat-pipe links are typically specified to a 10^-12 BER because of testing constraints in manufacturing, but system designers frequently target much better BERs for these links.

In these cases, the link power budget is increased by 1-2 dB above what is needed to account for attenuation and other link power penalties. This additional margin supplies the extra power needed at the receiver to decrease the BER by several orders of magnitude, provided that the receiver does not have a noise floor above the desired BER level.
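Why a decibel or two buys so much can be seen from the standard Gaussian-noise relation BER = 0.5 erfc(Q/sqrt(2)). The sketch below finds the Q needed for a 10^-12 BER and then adds 1 and 2 dB of optical power, assuming a thermal-noise-limited receiver in which Q scales linearly with received optical power. It ignores error floors and signal-dependent noise, the very limitation the text flags.

```python
import math


def ber_from_q(q: float) -> float:
    # Gaussian-noise approximation for an on-off-keyed binary receiver.
    return 0.5 * math.erfc(q / math.sqrt(2.0))


def q_for_ber(target: float, lo: float = 0.0, hi: float = 20.0) -> float:
    # BER falls monotonically as Q rises, so bisection finds the required Q.
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if ber_from_q(mid) > target:
            lo = mid
        else:
            hi = mid
    return hi


q0 = q_for_ber(1e-12)
for extra_db in (0.0, 1.0, 2.0):
    # Assumption: Q proportional to received optical power (thermal-noise limit),
    # so each extra optical dB multiplies Q by 10**(1/10).
    q = q0 * 10 ** (extra_db / 10)
    print(f"+{extra_db:.0f} dB: Q = {q:.2f}, BER ~ {ber_from_q(q):.1e}")
```

Under these assumptions the error rate drops by several orders of magnitude per added decibel, consistent with the practice of padding the power budget by 1-2 dB.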

System designers generally determine the maximum cabled distance needed between shelves in their system in concert with their marketing organization. While the majority of the links will typically be less than 50 m, they still need to accommodate installations where the shelves reside on different floors and at different ends of the central office (CO) building.

This requirement often leads to a 300-m maximum length, based on the old AT&T 1,000-ft (about 305-m) standard. Designers are also interested in 600-m and 1-km length capabilities to handle very large COs and adjacent-building situations. Optical fat-pipe-length capabilities are addressed by managing link budget variables such as transmitter optical power, receiver sensitivity, and fiber type used.
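The link budget variables named above can be combined into a simple power-margin check at the candidate lengths. The sketch below is a minimal version under stated assumptions: every parameter value (transmitter power, receiver sensitivity, fiber loss per km, connector count and loss, lumped penalties, and the 1-dB required margin) is hypothetical, and the penalties are treated as fixed even though dispersion penalties grow with length. In multimode fiber, modal bandwidth often limits reach before optical power does, which this sketch does not model.

```python
def power_margin_db(tx_dbm: float, rx_sens_dbm: float, length_m: float,
                    fiber_db_per_km: float, connectors: int,
                    db_per_connector: float, penalties_db: float) -> float:
    """Remaining optical margin after fiber, connector, and penalty losses."""
    budget_db = tx_dbm - rx_sens_dbm  # total available power budget
    fiber_loss_db = (length_m / 1000.0) * fiber_db_per_km
    connector_loss_db = connectors * db_per_connector
    return budget_db - fiber_loss_db - connector_loss_db - penalties_db


REQUIRED_MARGIN_DB = 1.0  # hypothetical design margin

for length in (50, 300, 600, 1000):
    m = power_margin_db(tx_dbm=-6.0, rx_sens_dbm=-16.0, length_m=length,
                        fiber_db_per_km=3.5, connectors=4,
                        db_per_connector=0.75, penalties_db=3.0)
    status = "OK" if m >= REQUIRED_MARGIN_DB else "short"
    print(f"{length:>5} m: margin {m:.2f} dB ({status})")
```

With these particular numbers, 300 m and 600 m close comfortably while 1 km falls short, so reaching the longer lengths would mean a stronger transmitter, a more sensitive receiver, or a different fiber type.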

The bandwidth granularity of a system is the natural distribution of bandwidth in the system based on its topology, and it defines how much bandwidth needs to be transported by its intrasystem links from any one location to another. Aggregate link bandwidth capacity of the fat pipe should match the bandwidth granularity of the chosen system topology.

If the fat pipes are too small, multiple links will be needed, which probably will not provide the best possible bandwidth density or cost per bit. If the fat pipes are too large, they will suffer density and cost disadvantages because they will be underused. Figure 3 gives a historical perspective (for both copper and optical fat pipes) showing how this bandwidth granularity has changed with time. It's interesting to note the clear trend for bandwidth granularity and the implication for future fat-pipe requirements.
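Matching pipe size to bandwidth granularity comes down to two numbers: how many links a location needs and how fully they are used. The sketch below assumes a hypothetical 30-Gbit/sec granularity and compares several pipe sizes; the values are illustrative only.

```python
import math


def links_needed(granularity_gbps: float, pipe_gbps: float) -> int:
    """Number of fat pipes required to carry the granularity bandwidth."""
    return math.ceil(granularity_gbps / pipe_gbps)


def utilization(granularity_gbps: float, pipe_gbps: float) -> float:
    """Fraction of the installed fat-pipe capacity actually used."""
    n = links_needed(granularity_gbps, pipe_gbps)
    return granularity_gbps / (n * pipe_gbps)


GRANULARITY_GBPS = 30.0  # hypothetical system granularity

for pipe in (10.0, 20.0, 30.0, 120.0):
    n = links_needed(GRANULARITY_GBPS, pipe)
    u = utilization(GRANULARITY_GBPS, pipe)
    print(f"{pipe:g}-Gbit/sec pipe: {n} link(s), {u:.0%} utilized")
```

Small pipes force multiple links (hurting density and cost per bit), a 20-Gbit/sec pipe strands a quarter of its capacity, and an oversized 120-Gbit/sec pipe is only 25% used; the matched 30-Gbit/sec pipe needs one fully used link.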

In the late 1980s and early '90s, large crossconnect systems commonly used copper fat pipes with 16 channels operating at DS-3 or STS-1 data rates (45 to 52 Mbits/sec). In the early '90s, ATM switches were using 12 channels of copper operating at about 100 Mbits/sec each. OC-192 (10-Gbit/sec) SONET transport systems of the mid-to-late '90s used 4 x 622-Mbit/sec copper fat pipes.

Many systems that have just started production in the last year or two were designed with 12-channel parallel-optic links operating at 1.25 to 1.6 Gbits/sec per channel. Today, many systems going into qualification use 12-channel parallel-optic fat pipes operating at about 2.5 Gbits/sec per channel, which is compliant with the SNAP12 MSA.
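Multiplying channel count by per-channel rate for each generation described in the two paragraphs above gives the aggregate fat-pipe bandwidth behind the Figure 3 trend. The sketch uses the rates quoted in the text: the upper value (about 52 Mbits/sec, STS-1) for the crossconnect era and the low end of the 1.25-1.6-Gbit/sec range for recent systems.

```python
# (description, channels, Mbit/sec per channel), taken from the text
HISTORY = [
    ("late '80s/early '90s crossconnect (STS-1)", 16, 52),
    ("early '90s ATM switch", 12, 100),
    ("mid-to-late '90s OC-192 transport", 4, 622),
    ("recent production parallel optics", 12, 1250),
    ("SNAP12-class parallel optics", 12, 2500),
]

aggregates = []
for name, channels, mbps in HISTORY:
    total = channels * mbps  # aggregate fat-pipe bandwidth in Mbit/sec
    aggregates.append(total)
    print(f"{name}: {channels} x {mbps} Mbit/sec = {total / 1000:g} Gbit/sec")

# How far today's SNAP12-class pipe is from the 120-Gbit/sec trend point.
print(f"step to 120 Gbit/sec: x{120_000 / aggregates[-1]:g}")
```

The jump from roughly 0.8 Gbit/sec to 30 Gbit/sec, and the further fourfold step implied by a 120-Gbit/sec requirement, make the growth in bandwidth granularity concrete.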

Future fat-pipe requirements

The bandwidth granularity trend in Figure 3 would suggest that a 120-Gbit/sec fat pipe will be needed by about 2004-05. Which fat-pipe architecture will represent the best solution in that time frame: 12 channels x 10 Gbits/sec (12 fibers), 24 channels x 5 Gbits/sec (24 fibers), 48 channels x 2.5 Gbits/sec (48 fibers), or 4 x 2.5-Gbit/sec coarse WDM run on each of 12 fibers (48 total channels)?
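All four candidate architectures deliver the same 120 Gbits/sec; they differ in fiber count, wavelengths per fiber, and per-channel rate. The sketch below encodes them as listed in the question, with fiber counts taken as given.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    fibers: int
    wavelengths_per_fiber: int  # 1 for plain parallel optics, >1 for CWDM
    gbps_per_channel: float

    @property
    def channels(self) -> int:
        return self.fibers * self.wavelengths_per_fiber

    @property
    def aggregate_gbps(self) -> float:
        return self.channels * self.gbps_per_channel


CANDIDATES = [
    Candidate("12 x 10 Gbits/sec", 12, 1, 10.0),
    Candidate("24 x 5 Gbits/sec", 24, 1, 5.0),
    Candidate("48 x 2.5 Gbits/sec", 48, 1, 2.5),
    Candidate("12 fibers x 4-wavelength CWDM x 2.5 Gbits/sec", 12, 4, 2.5),
]

for c in CANDIDATES:
    print(f"{c.name}: {c.fibers} fibers, {c.channels} channels, "
          f"{c.aggregate_gbps:g} Gbits/sec")
```

Because the aggregate is fixed, the comparison shifts entirely to the cost, power, reliability, and density of the per-channel electronics, optics, and fiber count each option requires.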

The best solution will prioritize reliability, multisourcing, bandwidth density, distance, BER, power, and cost in a way that is compatible with the needs of the system development team. Fat-pipe vendors need to focus on the attributes their customers value the most. At the same time, they need to accommodate slight variations of these common attributes to provide a semi-custom product that can meet the needs of different applications.
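One way a development team might make its priorities explicit is a weighted scoring matrix over the attributes listed above. The sketch below is a generic decision-matrix illustration: the weights and the 1-5 scores are entirely hypothetical, and the point is only that the "best" architecture changes when the weights change.

```python
# Hypothetical weights (each set sums to 1.0) and 1-5 scores; illustration only.
BALANCED = {"reliability": 0.25, "multisourcing": 0.15, "density": 0.15,
            "distance": 0.10, "ber": 0.10, "power": 0.10, "cost": 0.15}
DENSITY_FIRST = {"reliability": 0.15, "multisourcing": 0.10, "density": 0.40,
                 "distance": 0.05, "ber": 0.10, "power": 0.10, "cost": 0.10}

CANDIDATES = {
    "12 x 10 Gbit/s": {"reliability": 3, "multisourcing": 2, "density": 5,
                       "distance": 3, "ber": 4, "power": 3, "cost": 3},
    "48 x 2.5 Gbit/s": {"reliability": 4, "multisourcing": 4, "density": 2,
                        "distance": 4, "ber": 4, "power": 3, "cost": 3},
}


def score(attrs: dict, weights: dict) -> float:
    """Weighted sum of attribute scores."""
    return sum(weights[k] * attrs[k] for k in weights)


def best(candidates: dict, weights: dict) -> str:
    """Name of the highest-scoring candidate under the given weights."""
    return max(candidates, key=lambda name: score(candidates[name], weights))


for label, weights in (("balanced", BALANCED), ("density-first", DENSITY_FIRST)):
    print(label, {n: round(score(a, weights), 2) for n, a in CANDIDATES.items()},
          "->", best(CANDIDATES, weights))
```

With these invented inputs, a reliability- and multisourcing-weighted team would favor the wider, slower option, while a density-first team would favor the faster one, mirroring the need for vendors to support semi-custom variations.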

Several trends will influence the best solution at any point in time. Moore's Law will continue to push the lowest cost per bit to higher and higher data rates. Greater capabilities will emerge in the optical-component space as the market, technologies, and vendors mature. Parallel optics is beginning to deliver on the great promise it holds for providing the "best solution" for cost-effective high-bandwidth interconnects.

Pete Widdoes is the optoelectronics marketing manager for W.L. Gore & Associates Inc. (Elkton, MD). He can be reached via the company's Website, www.wlgore.com.