Tech Trends: LAN test equipment gets subtle facelift

Optical time domain reflectometers (OTDRs), optical power meters, and optical loss test sets (OLTS) are widely used by today’s network engineers. While the LAN test market is neither the most visible nor dynamic industry segment, it is not entirely stagnant, either. Basic LAN test devices have been improved to accommodate new features, including 10-Gigabit Ethernet (10-GbE) testing.

“If you look at the local-area network, that has become the weak point because a lot of backbones and even metro networks are currently running at 10 Gbits/sec, whether it’s SONET or Gigabit Ethernet,” reports Stephen Colangelo, senior product manager at NetTest North America (Utica, NY). “The problem has been your access network or your LAN, trying to get that speed to the user. Up until this point, it’s been the bottleneck. Most of what you’ve seen recently are increases or improvements, trying to improve the bandwidth of these local-area networks.”

Today’s LAN technicians are focusing more and more on end-to-end certification, or measuring the loss of the entire link, notes Jerome Laferriere, OTDR product manager at Acterna (Germantown, MD). “The most important tool for LAN testing is really becoming the loss test set and power meters,” he says. “However, if the loss is too high compared to LAN standards, technicians will look at each splice and each connector along the link using an OTDR. But fewer people are doing that because the splices are better and more accurate. So the OTDR is less important than in the past.”

Nevertheless, an OTDR remains necessary to locate faults and provide a loss value for each event. In the past, OTDRs simply needed to transmit light from point A to point B, says Colangelo. Such devices didn’t have to provide high performance or great resolution because they were used mostly to check the continuity of the link. But OTDR resolution has become more critical as LANs migrate to higher transmission speeds like GbE and 10-GbE.

Dead zones are blind spots that occur because reflections from “events” like connectors and splices tend to saturate the OTDR’s receiver. “Sometimes, the dead zone can be 10-15 m,” reports Peter Schweiger, business development manager at Agilent Technologies (Palo Alto, CA). “If you have a link that’s only 20 or 30 m long, half of the link is obscured by the instrument’s blindness or dead zone. Consequently, the measurements you make aren’t always as accurate as you’d like them to be,” he says.

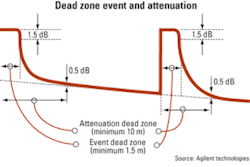

The trend in the industry is to reduce both event and attenuation dead zones. An event dead zone is defined as the distance from the start of the reflection to the point where the OTDR has recovered to 1.5 dB below the top of the reflection (see figure). Within the event dead zone, the OTDR cannot accurately measure loss and attenuation.
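As a rough illustration of that definition, the sketch below estimates an event dead zone from a sampled trace. The function name, the sample values, and the simple rule used to locate the start of the reflection are assumptions made for this example, not any vendor's algorithm.

```python
def event_dead_zone(distance_m, level_db, drop_db=1.5):
    """Estimate the event dead zone for a trace holding one reflective spike.

    distance_m and level_db are parallel lists of sample positions (m)
    and trace levels (dB). Hypothetical helper, for illustration only.
    """
    # The top of the reflection is the highest sample in the trace.
    peak_idx = max(range(len(level_db)), key=level_db.__getitem__)

    # Assumed rule: the reflection starts where the rising edge begins,
    # so walk left from the peak while the trace is still climbing.
    start_idx = peak_idx
    while start_idx > 0 and level_db[start_idx - 1] < level_db[start_idx]:
        start_idx -= 1

    # The dead zone ends at the first sample at least drop_db below the peak.
    threshold = level_db[peak_idx] - drop_db
    for i in range(peak_idx + 1, len(level_db)):
        if level_db[i] <= threshold:
            return distance_m[i] - distance_m[start_idx]
    raise ValueError("trace never recovers below the threshold")


# Made-up trace: gently sloping backscatter, then a connector spike at 1.5 m.
distance_m = [0.0, 0.5, 1.0, 1.5, 2.0, 2.5, 3.0, 3.5, 4.0]
level_db = [-10.0, -10.1, -10.2, 5.0, 4.8, 3.0, -2.0, -9.0, -10.8]

print(event_dead_zone(distance_m, level_db))
```

With this made-up trace, the reflection starts at 1.0 m and the trace has fallen 1.5 dB below the peak by 2.5 m, giving an event dead zone of 1.5 m.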

According to Laferriere, an event dead zone limits the user’s ability to see two closely spaced connectors. Connectors are the single biggest contributors to total link loss. On an OTDR trace, a connector appears as a spike. Splices, by contrast, appear on the OTDR trace as small events with low amplitude. “It’s very difficult to have a connector followed by a splice,” says Laferriere. “A connector is a big spike, and if you follow that with a very small event with very low amplitude, the splice may be hidden by the connector, by the reflective event.”

Splices cause attenuation dead zones, which are defined as the distance from the start of the reflection to the point where the receiver has recovered to within 0.5 dB of linear backscatter. At this point, the OTDR can measure attenuation and loss, since these measurements require backscatter.
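The 0.5-dB criterion can be sketched in the same spirit. This example assumes the expected linear backscatter level after the event is already known at each sample (for instance, from a line fitted to undisturbed fiber) and that the start of the reflection has been located; the function name and all sample values are hypothetical.

```python
def attenuation_dead_zone(distance_m, level_db, backscatter_db,
                          start_idx, tolerance_db=0.5):
    """Estimate the attenuation dead zone after a reflective event.

    backscatter_db holds the assumed linear backscatter level (dB) at each
    sample; start_idx marks the start of the reflection. Illustration only.
    """
    # Find the top of the reflection at or after its starting sample.
    peak_idx = max(range(start_idx, len(level_db)), key=level_db.__getitem__)

    # The dead zone ends at the first sample past the peak that sits
    # within tolerance_db of the linear backscatter level.
    for i in range(peak_idx + 1, len(level_db)):
        if abs(level_db[i] - backscatter_db[i]) <= tolerance_db:
            return distance_m[i] - distance_m[start_idx]
    raise ValueError("receiver never recovers to the backscatter level")


# Made-up trace sampled every metre, with the reflection starting at 1 m.
distance_m = [0.0, 1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
level_db = [-10.0, -10.1, 5.0, 2.0, -4.0, -8.0, -10.0, -10.9, -11.0]
backscatter_db = [-10.3, -10.4, -10.5, -10.6, -10.7, -10.8, -10.9, -11.0, -11.1]

print(attenuation_dead_zone(distance_m, level_db, backscatter_db, start_idx=1))
```

Here the receiver is still 0.9 dB above the backscatter line at 6 m and only comes within 0.5 dB at 7 m, so the sketch reports a 6-m attenuation dead zone.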

Today’s OTDRs have event dead zones of up to 1 m and attenuation dead zones of between 3 and 10 m. “This is really enough for LAN qualification,” says Laferriere.

Nicholas Gagnon, business development manager for EXFO’s access and premises markets (Quebec City), identifies a trend toward what he calls the use of certification OTDRs. In the past, end users used OTDRs to insert some threshold value on the loss. If the measured value exceeded those user-defined thresholds, the OTDR would fail the link.

With the migration to 10-GbE, it’s possible to fine-tune an OTDR’s pass/fail algorithms to include additional parameters, an approach called certification analysis. These parameters include the optical return loss of the connectors and limits on link length. For example, says Gagnon, “a 10-Gigabit Ethernet fiber link in the premises cannot exceed a certain length because you will not have the correct amount of bandwidth on the fiber. So a certification OTDR accounts for the loss of the link, the length of the link, and more and more people are asking about standards like the TIA-568A and other standardized protocol parameters.”
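A multi-parameter pass/fail check of this kind might look like the following sketch. The class names, field names, and limit values are all hypothetical placeholders chosen for the example; they are not taken from TIA-568A or any other standard, and a real certification OTDR may apply different or additional criteria.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class LinkLimits:
    """User-supplied limits (placeholder fields, not from any standard)."""
    max_loss_db: float
    max_length_m: float
    min_return_loss_db: float  # higher return loss means weaker reflections


@dataclass
class LinkMeasurement:
    loss_db: float
    length_m: float
    connector_return_loss_db: List[float]


def certify(measurement: LinkMeasurement, limits: LinkLimits) -> List[str]:
    """Return the names of the failed checks; an empty list means a pass."""
    failures = []
    if measurement.loss_db > limits.max_loss_db:
        failures.append("loss")
    if measurement.length_m > limits.max_length_m:
        failures.append("length")
    if any(rl < limits.min_return_loss_db
           for rl in measurement.connector_return_loss_db):
        failures.append("return loss")
    return failures


# Placeholder limits and measurements, invented purely for illustration.
limits = LinkLimits(max_loss_db=2.5, max_length_m=300.0, min_return_loss_db=35.0)
good_link = LinkMeasurement(loss_db=1.8, length_m=120.0,
                            connector_return_loss_db=[45.0, 40.0])
bad_link = LinkMeasurement(loss_db=1.8, length_m=350.0,
                           connector_return_loss_db=[45.0, 30.0])

print(certify(good_link, limits))
print(certify(bad_link, limits))
```

The second link passes a loss-only threshold test but fails on length and on one connector's return loss, which is the kind of case the added parameters are meant to catch.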

For his part, Agilent’s Schweiger isn’t fond of the term “certification.” “It makes me think of meat being certified,” he says. “Grade A meat passes all these criteria. In the LAN cabling world, that’s been the case for many years: Category 5, Category 6, and now augmented Category 6 and even Category 7. Now manufacturers are starting to say, ‘Wouldn’t you like to certify your fiber?’ ” Schweiger contends that certifying fiber for use in the LAN is “a little bit overblown. Really it’s all back to what are the reflectances and what are the losses?” That said, he believes certification is a more useful term when used to describe 10- and 40-Gbit/sec transmission, where other parameters, like dispersion, affect the fiber.

NetTest’s Colangelo agrees: “As speeds increase, you need to verify the fiber, but you also need to perform some type of certification or verification of throughput and latency. You want to make sure that your network can handle these speeds, both for your current plans and for upgrades going forward.”

As transmission speeds increase, network engineers expect the fiber to support exponentially more traffic, says Colangelo. “With the expectations on the fiber now, it’s not enough to just test the physical layer anymore,” he observes. “Now you actually have to start getting in and testing these Ethernet or new transport technologies.”

“Our customers know the physical layer testing pretty well,” adds Laferriere. “What is new for them is the Gigabit Ethernet and 10-Gigabit Ethernet services testing. The combination testing of the physical layer and the service layer is key for them.”

Meghan Fuller is the senior news editor at Lightwave.