Evolving metro cloud networks through virtualization and automation

We all know that Internet traffic continues to rise at a staggering rate, but some of the fundamentals are also changing. Last year, metro traffic surpassed long-haul traffic, and it will account for 66% of total traffic by 2019. Meanwhile, by 2018 the cloud will account for 76% of total data center traffic, and by then IP will represent almost 80% of all traffic as well. (Details are easily found in the 2015 Cisco Visual Networking Index [VNI] and the Cisco Global Cloud Index.)

So, how can network operators scale their networks to accommodate this ever-escalating traffic without reducing performance, reliability, and service quality? And, very importantly, how do they do this while driving a strong business case?

The metro value chain

If one were to build a simple value chain to address these issues (see Figure 1), it would start with meeting bandwidth demands: moving traffic from point A to point B as efficiently as possible. For many mega-content providers, simple high-capacity point-to-point connectivity between two data centers is sufficient, because they draw on a large pool of network resources used primarily for their own internal bandwidth needs, predominantly between their own data centers. They want very high capacity at the lowest cost per bit, a basic transport-only requirement.

Carriers and even colocation providers (both of which are service providers) require high-capacity connectivity between data centers too. But they also need networking equipment to interconnect local data centers and deliver services to end users, businesses, and partners: services such as mobile, wireless backhaul, video, Ethernet business services, content delivery, and cloud services. These metro data centers facilitate the distribution and aggregation of information and services across a broad range of suppliers and customers; for example, Salesforce.com as the supplier of a service, and enterprise users of Salesforce.com as consumers of that service.

Metro traffic is expanding twice as fast as long-haul traffic, fueled in large part by growing data center interconnect (DCI) traffic volumes for the cloud. This growth in DCI volume is driven by a combination of two factors: the number of data centers being built and the increasing bandwidth consumed per data center.
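As a simplifying assumption (not taken from the cited forecasts), aggregate DCI volume $V_{\text{DCI}}$ can be modeled as the number of data centers $N$ times the average bandwidth each one consumes, $\bar{B}$. Under that model the two drivers compound rather than add, where $g_N$ and $g_B$ are the per-period growth rates of each factor:

```latex
V_{\text{DCI}} = N \cdot \bar{B}
\quad\Longrightarrow\quad
\frac{V_{\text{DCI}}(t+1)}{V_{\text{DCI}}(t)} = (1 + g_N)(1 + g_B)
```

With purely hypothetical rates of $g_N = 10\%$ and $g_B = 20\%$, volume grows by a factor of $1.1 \times 1.2 = 1.32$, or 32%, slightly more than the 30% a simple sum would suggest.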

Connectivity comes next in the value chain and is typically provided by carriers and service providers. Fixed, or legacy, hardware-centric network equipment that connects multiple diverse sites usually requires redundant network links to ensure reliability and performance: if there's a problem, the redundant network takes over. A considerable downside to this strategy, though, is that it can leave expensive resources sitting unused, yet still managed, until they are needed, increasing hardware costs while adding space, power, and footprint overhead.
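To make the cost of idle redundancy concrete, here is a minimal sketch that models one working link paired with a dedicated standby link, roughly a 1+1 protection scheme. The class name, the Gb/s figures, and the assumption that the standby carries no traffic until a failure are illustrative only, not drawn from any particular network.

```python
from dataclasses import dataclass


@dataclass
class ProtectedLink:
    """Hypothetical 1+1-style protected link: a working link plus an idle standby.

    Capacities and traffic are in Gb/s; both links have the same capacity.
    """
    capacity_gbps: float  # capacity of each link (working and standby)
    traffic_gbps: float   # traffic carried on the working link

    def __post_init__(self) -> None:
        if not 0 <= self.traffic_gbps <= self.capacity_gbps:
            raise ValueError("traffic must fit on the working link")

    def provisioned_gbps(self) -> float:
        # Both links are installed, powered, and managed.
        return 2 * self.capacity_gbps

    def idle_gbps(self) -> float:
        # Headroom on the working link plus the whole standby link.
        return self.provisioned_gbps() - self.traffic_gbps

    def utilization(self) -> float:
        # Share of installed capacity that actually carries traffic.
        return self.traffic_gbps / self.provisioned_gbps()


link = ProtectedLink(capacity_gbps=100, traffic_gbps=70)
print(f"provisioned: {link.provisioned_gbps():.0f} Gb/s")  # provisioned: 200 Gb/s
print(f"idle: {link.idle_gbps():.0f} Gb/s")                # idle: 130 Gb/s
print(f"utilization: {link.utilization():.0%}")            # utilization: 35%
```

Even with a fairly busy working link, most of the installed capacity in this toy model sits idle, which is the overhead that pooled, software-controlled resources aim to reduce.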

Given traffic at unprecedented levels around the clock and intensifying competition among carriers and service providers, the most valuable links in the chain are services and virtualization. Colocation providers and carriers are, in essence, service providers to businesses and consumers, whose complex requirements can be addressed most efficiently and cost-effectively through software-based networks.

The move to software and the cloud

End users and businesses are moving to cloud-based service hosting models that provide services on demand; their service providers must respond by transitioning from legacy fixed networks to virtualized, flexible, and scalable infrastructures. Service providers can use cloud-based resources to build more resilient and more efficient service delivery networks.

With the emergence of innovative technologies (e.g., open switching, software-defined networking [SDN], and network functions virtualization [NFV]) and advanced DCI platforms, colocation providers and carriers are today transforming their network infrastructure from essentially hardware-based to software-based service delivery. Network design and implementation are shifting away from resources that are statically delivered on a per-customer basis, with dedicated backup resources for each customer. Moreover, because network demand is unpredictable (consider a sudden surge in viewing of a new streaming TV series), hardware-based infrastructure simply can't react quickly enough to meet such unforeseen demands.

Major service providers are studying the business benefits and impacts of moving to virtualized, cloud-based and resource-based service delivery models. Most vocal in this transformative thinking is AT&T (at least in North America), which recently disclosed that it is moving from a hardware-centric to a software-centric network. Underscoring this strategy, the company said that 75% of its network will be virtualized and under software control by 2020; as part of this effort, AT&T plans to virtualize 200 network functions by 2020. Against the backdrop of an expected 100,000% growth in demand on its wireless data network between 2014 and 2017 alone, AT&T convincingly argued that continuing down a hardware-centric path is simply unsustainable.
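For scale, a percentage growth figure $p$ converts to a multiplier of $1 + p/100$, so 100,000% growth means demand ending at roughly a thousand times its starting level ($D$ here simply denotes demand at the start and end of the period):

```latex
\frac{D_{\text{end}}}{D_{\text{start}}} = 1 + \frac{100{,}000}{100} = 1{,}001
```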

With this transition already underway, AT&T now offers its first SDN service. Called Network on Demand, it gives businesses an online portal through which they can increase the number of ports, add or change services, and adjust bandwidth as their needs change.

How to enable virtualization

So how does this shift to virtualization happen? Dedicated hardware must be replaced by virtualized network functions (VNFs) running in software on commercial hardware. To that end, service providers and carriers are embracing NFV architectures, which decouple network functions from proprietary hardware appliances. Concurrently, they're also adopting SDN, a concept that fosters network flexibility, automation, programmability, and virtualization.

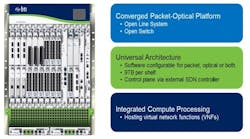

These open, software-based network infrastructures facilitate interoperability across platforms from different vendors (Figure 2). Open architectures built on x86 processors, merchant silicon, and Linux, with open software interfaces such as NETCONF, OpenFlow, and RESTful APIs, make it much easier to choose inexpensive, off-the-shelf hardware platforms. The value lies in the virtualized network functions. The objective of this approach is an open networking infrastructure that networks available resources in a highly programmable and elastic way, at lower capital expense (capex) and operating expense (opex), moving service provider operations to a cloud-computing model.
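To illustrate what a vendor-neutral software interface looks like in practice, here is a minimal sketch that builds a NETCONF `<edit-config>` request using only Python's standard library. The message structure and namespaces follow the NETCONF base protocol (RFC 6241) and the standard `ietf-interfaces` YANG module; the function name, interface name, and description are hypothetical, and the sketch assumes it is modifying an existing interface, so the module's mandatory `type` leaf is already set on the device.

```python
import xml.etree.ElementTree as ET

# Standard namespaces: NETCONF base protocol and the ietf-interfaces YANG module.
NC_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"
IF_NS = "urn:ietf:params:xml:ns:yang:ietf-interfaces"

# Serialize NETCONF elements without a prefix (note: this is a global registration).
ET.register_namespace("", NC_NS)


def build_edit_config(message_id: str, if_name: str,
                      description: str, enabled: bool) -> str:
    """Return a hypothetical <edit-config> RPC updating one existing interface
    in the device's running datastore."""
    rpc = ET.Element(f"{{{NC_NS}}}rpc", {"message-id": message_id})
    edit = ET.SubElement(rpc, f"{{{NC_NS}}}edit-config")
    target = ET.SubElement(edit, f"{{{NC_NS}}}target")
    ET.SubElement(target, f"{{{NC_NS}}}running")
    config = ET.SubElement(edit, f"{{{NC_NS}}}config")

    # Configuration payload modeled with ietf-interfaces, not a vendor schema.
    interfaces = ET.SubElement(config, f"{{{IF_NS}}}interfaces")
    iface = ET.SubElement(interfaces, f"{{{IF_NS}}}interface")
    ET.SubElement(iface, f"{{{IF_NS}}}name").text = if_name
    ET.SubElement(iface, f"{{{IF_NS}}}description").text = description
    ET.SubElement(iface, f"{{{IF_NS}}}enabled").text = "true" if enabled else "false"
    return ET.tostring(rpc, encoding="unicode")


print(build_edit_config("101", "eth0", "DCI uplink", True))
```

Because both the envelope and the data model are standardized, the same request could in principle be sent to any device that supports them; transport (NETCONF over SSH, typically on port 830) would be handled by a NETCONF client library and is left out here.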

Multi-layer convergence is key to this transformation. A recent Bell Labs study showed that converging routing and optical transport technologies allows operators to meet the same service availability requirements while using approximately 40% fewer networking resources. By converging the optical, routing, and application layers, this approach reduces network elements as well as space, power, and cooling requirements. That, in turn, dramatically reduces complexity and increases return on investment (ROI) by slashing expenses. SDN automation and programmability further reduce operational complexity and cost. Providers and their customers can manage the network seamlessly as a single pool of resources, rather than, in effect, having the network dictate to and manage the provider.

This transformation removes the separation between the network and the IT data center; network software upgrades align with a continuous software delivery (DevOps) model. Carriers and service providers can increase profits while turning up services faster. Unique, targeted features based on service chaining of virtualized functions can be offered from the orchestration layer simply by instantiating the appropriate virtual machines, with no need to deploy and provision dedicated hardware appliances. This substantially improves monetization of the network and lets services scale rapidly worldwide as networks grow.
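Service chaining can be pictured as an ordered list of functions that each packet passes through. The toy sketch below models each VNF as a plain Python function and "instantiation" as looking up functions by name and composing them; in a real NFV deployment the orchestrator would instantiate virtual machines, which this sketch does not attempt. The function names, the registry, and the firewall and NAT rules are all hypothetical.

```python
from typing import Callable, Dict, List, Optional

Packet = Dict[str, object]
# A VNF is modeled as a function returning the (possibly modified) packet,
# or None if the packet is dropped.
VNF = Callable[[Packet], Optional[Packet]]


def firewall(pkt: Packet) -> Optional[Packet]:
    # Hypothetical rule: drop Telnet traffic.
    return None if pkt.get("dst_port") == 23 else pkt


def nat(pkt: Packet) -> Optional[Packet]:
    # Hypothetical rule: rewrite a private source address to a public one.
    out = dict(pkt)
    if str(out.get("src_ip", "")).startswith("10."):
        out["src_ip"] = "203.0.113.10"
    return out


def make_chain(vnfs: List[VNF]) -> VNF:
    """Compose VNFs into a single service chain applied in order."""
    def chain(pkt: Packet) -> Optional[Packet]:
        for vnf in vnfs:
            result = vnf(pkt)
            if result is None:
                return None  # dropped by this function; stop the chain
            pkt = result
        return pkt
    return chain


# Hypothetical catalog the orchestration layer picks functions from.
REGISTRY: Dict[str, VNF] = {"firewall": firewall, "nat": nat}


def instantiate(service_spec: List[str]) -> VNF:
    # A new service is a new ordering of existing functions, not new hardware.
    return make_chain([REGISTRY[name] for name in service_spec])


secure_internet = instantiate(["firewall", "nat"])
print(secure_internet({"src_ip": "10.0.0.5", "dst_port": 443}))
# {'src_ip': '203.0.113.10', 'dst_port': 443}
print(secure_internet({"src_ip": "10.0.0.5", "dst_port": 23}))
# None
```

Offering a different service here means passing a different list to `instantiate`, which mirrors the article's point that new features come from the orchestration layer rather than from deploying appliances.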

The move to a cloud-computing model must start with carriers and service providers evolving their central office model into a data center model (Figure 3). Local data centers can then distribute services and content to service demarcation endpoints (the network edge) and host latency-sensitive services.

Taking the first steps

So the strategy is clear. Next comes the question of how best to implement the move to virtualized networks; specifically, how to transform the network from vendor-specific hardware into a new infrastructure based on merchant hardware and open software interfaces. Technology advances are fueling a new generation of packet-optical networking approaches, built on merchant hardware and open software, that can provide a smooth migration path to virtualized service delivery.

Integrating rich packet capabilities with optical transport on an open, SDN-enabled converged networking infrastructure reduces complexity by flattening the network, decreases costs, and increases service agility, reliability, and performance. Network management can be highly automated because the network is virtualized and its elements are software-based.

The rapid and unprecedented transformation of data center networks is changing the business models of carriers, colocation providers, and service providers. It is allowing them to move quickly to leverage new opportunities, be more competitive in increasingly crowded markets, and control operational costs. Concurrently, the shift to virtualized and automated metro networks is underway as the number of data centers escalates, traffic levels set new records, and business and consumer customers demand new services.

As infrastructure innovation accelerates and interoperability through open, software-based platforms becomes essential, providers must leverage these game-changing strategies, models, products, and services, or they'll be watching from the sidelines.

Robert Keys is chief technology officer of BTI Systems.