Segmenting the Data Center Interconnect Market

In 2014, when the first purpose-built data center interconnect (DCI) platform debuted, the DCI market was growing at 16% per year, only slightly faster than the overall data center infrastructure networking market, according to Ovum’s March 2016 "DCI Market Share Report." The new system addressed pent-up demand for a server-like optical platform designed specifically for DCI. Since then, sales of purpose-built, small form factor DCI products have taken off, growing at a 50-100% clip, depending on analysts’ forecasts, and are projected to outpace the overall data center growth rate for the next several years.

What drives this surge in spending? Multiple factors are at work, but two stand out:

- Data center to data center (server-to-server) traffic is growing much faster than data center to user traffic due to distributed application design and replication. In addition, for performance and cost reasons, large cloud and content providers need to locate data centers closer to end customers, which has resulted in the rapid development and growth of metro data centers. Unlike rural mega data centers, metro facilities are typically built as multiple smaller data centers because of the cost and availability of real estate and power in heavily populated areas. The result is a large number of metro data centers that require a high degree of interconnectivity.

- IT organizations have finally begun to adopt the cloud in an aggressive fashion. Whether the approach leverages software as a service (SaaS) applications such as Salesforce.com or Microsoft 365, or migration of some or all back office infrastructure into third-party data centers running infrastructure as a service (IaaS), the "chasm" has been crossed.

With this growth, the market has begun to segment, which means one size platform does not fit all (see Figure 1). To evaluate the technologies and platforms that play a role in DCI, an understanding of the customer segmentation is necessary.

(Source: ACG Research, "2H-2015 Worldwide Optical Data Center Interconnect Forecast," April 2016)

DCI Market Segmentation

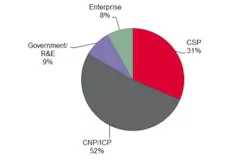

As Figure 2 illustrates, a small number of internet content providers (ICPs) compose the largest group of spenders on DCI. Also part of this DCI ecosystem, but to a much lesser degree, are carrier-neutral providers (CNPs). Together these two account for almost half of the total DCI spend worldwide, and over half in North America.

(Source: Ovum, "Market Share Report: 4Q15 and 2015 Data Center Interconnect (DCI)," March 2016)

The vast volume of DCI traffic the largest ICPs generate has made it economically favorable for them to build their own DCI networks in many locations rather than buy transport services from service providers. We have seen a large increase in ICP-owned/operated terrestrial networks; Google, Microsoft, and Facebook have opted to build their own subsea networks as well.

Another sub-segment of this DCI market is the smaller ICPs and CNPs, e.g., AOL and Equinix. CNPs are of increasing importance in the cloud ecosystem because they provide the meet-me points where ICPs gain direct connections to the multiplicity of enterprises as well as local internet service providers (ISPs) that provide last-mile broadband access for consumers and businesses.

Communication service providers (CSPs) form another large segment of the DCI market. This group comprises a number of different provider types, such as Tier 1s, Tier 2s, cable, wholesale carriers, and even some data center operators. In particular, wholesale carriers such as Level3 and Telia Carrier are seeing tremendous growth in traffic from ICP customers. In geographies or on routes where an ICP cannot access fiber or cannot cost-effectively build and operate its own DCI network, the ICP will purchase DCI from wholesale enterprise carriers. In these cases, ICPs still often want significant control over the operations and technologies used to carry their traffic. This is leading to interesting new business models between ICPs and CSPs:

- Managed fiber – where the CSP manages and operates a dedicated transport system (terminal gear, fiber, amplifiers, ROADMs) for the ICP. Either the CSP or the ICP can own the terminal equipment.

- Spectrum – the CSP provides a contiguous amount of spectrum (e.g., 250 GHz) as defined by filter tunings through a shared line system. The ICP can then light that spectrum using whatever optical technology it chooses.

- Wavelengths – the CSP provides a single wavelength or set of wavelengths for an ICP on a shared line system either through an add/drop multiplexer or a muxponder.
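As a rough illustration of what a spectrum block can carry, the short Python sketch below counts how many channels fit in a contiguous block such as the 250 GHz example above. The channel spacings and per-channel data rates are hypothetical values chosen for illustration, not figures from any provider, and guard bands at the block edges are ignored.

```python
def channels_in_block(spectrum_ghz: float, channel_spacing_ghz: float) -> int:
    """Return the number of whole channels that fit in a contiguous spectrum block.

    Simplification: guard bands and filter roll-off at the block edges are ignored.
    """
    if spectrum_ghz < 0 or channel_spacing_ghz <= 0:
        raise ValueError("spectrum must be non-negative and spacing must be positive")
    return int(spectrum_ghz // channel_spacing_ghz)


def block_capacity_gbps(spectrum_ghz: float,
                        channel_spacing_ghz: float,
                        rate_per_channel_gbps: float) -> float:
    """Return the aggregate capacity of the block for a given optical technology."""
    return channels_in_block(spectrum_ghz, channel_spacing_ghz) * rate_per_channel_gbps


# Hypothetical options an ICP might compare for lighting a 250 GHz block.
fixed_grid = block_capacity_gbps(250, 50, 100)    # assumed 50 GHz spacing, 100G per channel
flex_grid = block_capacity_gbps(250, 37.5, 200)   # assumed 37.5 GHz spacing, 200G per channel

print(f"50 GHz grid:   {channels_in_block(250, 50)} channels, {fixed_grid:.0f} Gbps")
print(f"37.5 GHz grid: {channels_in_block(250, 37.5)} channels, {flex_grid:.0f} Gbps")
```

The point of the comparison is that, under the spectrum model, the capacity obtained from the same block depends on the optical technology the ICP chooses to deploy.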

Data center operators (DCOs) include CSP subsidiaries that directly provide colocation and IaaS, such as Verizon (including assets from its Terremark acquisition) and CenturyLink (Savvis), as well as pure-play DCOs such as CyrusOne. These DCOs do not have the large volume of inter-data center traffic common in the higher tiers, but do have significant volume between their data centers and their customers’ private data centers. Most often this connectivity is provided by a CSP via MEF Carrier Ethernet services. We also see some internet protocol (IP)/multi-protocol label switching (MPLS) virtual private network (VPN) services used for this application.

Another segment of the DCI market comprises enterprise, government, and research and education (R&E) organizations. Although more of this group’s traffic will move to third-party clouds over time, at least some of these organizations continue to grow their own data center infrastructure. This growth occurs within a single metro area – such as an extensive university campus and its satellite offices – or across a large government agency such as the United States Postal Service or Internal Revenue Service that spans many locations across the country.

Because the traffic volume between these data centers is significantly smaller than that of ICPs (perhaps with the exception of certain government agencies), the economics of interconnection often favor purchasing CSP services rather than building dedicated DCI networks. But not always: the largest of these organizations can realize a strong return on investment by building their own DCI networks, and others may choose to do so based on other considerations such as security and control.

Differing Requirements

The market segmentation is critical to understand, as each segment has a different set of DCI requirements. Therefore, beyond cost, several features and capabilities may be prioritized differently across segments:

- Fiber capacity – the maximum bandwidth per fiber pair

- Power efficiency – measured as watts per gigabit per second

- Density – measured in gigabits per second per rack unit (RU)

- Simplicity and ease of use – a true appliance (single box) approach without the need for external devices (such as multiplexers, amplifiers, or dispersion compensation modules), and with simple plug-and-play configuration and operation

- Programmability and automation – the ability for the customer to use open application programming interfaces (APIs) to directly program the platforms and easily integrate them into its operations environment

- Multi-protocol clients and multiple network services – the ability to support a range of interface protocols (e.g., Ethernet, Fibre Channel, TDM, optical data unit) and/or networking features (e.g., VPNs or MEF Carrier Ethernet services).
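The two quantitative attributes in this list, power efficiency and density, are simple ratios. The Python sketch below shows how they might be computed from datasheet values when comparing platforms. The platform names and numbers are hypothetical and serve only to illustrate the arithmetic.

```python
from dataclasses import dataclass


@dataclass
class Platform:
    """Hypothetical DCI platform datasheet values (illustrative only)."""
    name: str
    capacity_gbps: float  # total capacity of the unit
    power_watts: float    # power draw at full load
    rack_units: float     # height of the unit in RU


def watts_per_gbps(p: Platform) -> float:
    """Power efficiency in watts per gigabit per second (lower is better)."""
    return p.power_watts / p.capacity_gbps


def gbps_per_ru(p: Platform) -> float:
    """Density in gigabits per second per rack unit (higher is better)."""
    return p.capacity_gbps / p.rack_units


# Made-up example values, not taken from any real product.
platforms = [
    Platform("small-form-factor", capacity_gbps=500, power_watts=250, rack_units=1),
    Platform("chassis-based", capacity_gbps=2000, power_watts=3000, rack_units=10),
]

for p in platforms:
    print(f"{p.name}: {watts_per_gbps(p):.2f} W/Gbps, {gbps_per_ru(p):.0f} Gbps/RU")
```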

Table 1 illustrates the relative importance of each attribute in platform selection for each customer segment.

The largest operational expense for an ICP is power, so power efficiency is typically the most critical DCI attribute following cost. Programmability is another critical attribute for the top ICPs, as they have painstakingly optimized and automated their abilities to manage their infrastructures and grow capacity through internally developed operations systems. Any new platform entering their environments must be quick (read: weeks, not years) and easy (read: a few developers, not a 100-person team) to integrate into their systems.

Fiber capacity and density are important to ICPs, as they drive space and dark fiber costs, but are not as critical as power efficiency and programmability. Services support is the least critical factor for ICP optical transport DCI platforms. All DCI client-side interfaces have migrated or are migrating to 100 Gigabit Ethernet. There are no additional services required at the transport layer, and all packet protocols and traffic management are handled at the application layers within the data centers.

CNPs are concerned with power and space, but unlike the large ICPs, CNPs require the support of a range of services that may place additional requirements on the DCI transport equipment. For example, some CNPs provide Ethernet services (e.g., Ethernet Virtual Private Line and multiplexed virtual LAN-based services) to enable their customers to interconnect colocation sites between metro data centers, interconnect to cloud and "x as a service" providers via cloud exchange services, and peer with Border Gateway Protocol (BGP)/IP via internet exchange services. Programmability is also important, as CNPs are striving for zero-touch provisioning and operations automation.

Fiber capacity is less important, as it is rare that the inter-data center traffic of the CNPs exceeds several terabits per span. Remember that much of the traffic in and out of a CNP data center is carried by the carriers/CSPs colocated in that data center.

CSPs often prefer a chassis-based system over the small form factor platform ICPs prefer. However, this may change as CSPs adopt more of a scale-out ICP infrastructure approach. CSPs are concerned with space, power, and density, but they absolutely need a rich set of protocols and interfaces to handle legacy services. CSPs are interested in ease of use, but they typically have large, skilled optical operations teams to handle configuration and operation and can deal with more complex products. For this same reason, while they desire programmability and automation, they are willing to adopt them more slowly and with greater patience than ICPs.

Enterprises share CNPs’ concern for power efficiency, but in their case it is because they must pay a monthly fee to the CNPs where they colocate, based on the maximum power per rack they can draw. It therefore behooves the enterprise customer to use the most power-efficient equipment on the market. Service support is also key to enterprises, as they are likely to still require native Fibre Channel support and potentially other variants such as TDM or InfiniBand.

Programmability is less important, as the scale of an enterprise cloud does not typically drive the level of operations automation required by the larger cloud providers. Fiber capacity is also not typically relevant, as the overall capacity per span is measured in a few hundred gigabits per second.

However, over the last year several newer features have bubbled up to become highly desirable and even must-have for an increasing number of customers across all market segments:

- In-flight data encryption at both Layer 1 and Layer 2 (MACsec)

- Link Layer Discovery Protocol (LLDP) for the automated detection of routers and switches

- Configuration/provisioning APIs based on YANG data models for automation and programmability.

The Need for Platform Diversity

Net-net, these differences in market drivers make it difficult to develop a single DCI platform that can serve all markets optimally.

One approach, which we refer to as "telco" or "general purpose," is a chassis-based system in which a customer can mix and match client interface cards and line cards. This approach serves the enterprise and CSP markets well, but the added cost, power, and size of a chassis system are not aligned with what the large ICPs need. Likewise, a pure Ethernet-over-DWDM pizza box can meet the requirements of the ICPs but may fail to serve the enterprise market in terms of service types and bandwidth granularity.

A developer of DCI equipment will either need a full portfolio of DCI products or will need to pick which segment of the market to address.

Stu Elby is senior vice president, Data Center Business Group, at Infinera.