Cloud Automation Enhances Optical Data Center Interconnect

The phenomenal growth of hyperscale internet content providers (ICPs) continues to drive innovation and change in network architectures, systems, and components. In optical networking, ICPs drove the development of purpose-built, highly optimized data center interconnect (DCI) platforms. ICPs are also driving innovation in software architectures, protocols, and systems that enable them to automate their operations and scale their infrastructures and services efficiently. These "cloud automation" techniques are now spreading from servers, storage, and data center networks to optical DCI networks.

Automation Trends in ICP and Service Provider Networks

ICPs have openly discussed the importance of automating their operations to scale capacity rapidly while limiting the growth in staff and costs. In describing the role of a Google Site Reliability Engineer (SRE), Vice President of Engineering Ben Treynor says the company is doing operations work "using engineers with software expertise" who can "substitute automation for human labor."

ICP automation can be characterized by three principles:

- Make everything open and programmable.

- Automate every task.

- Collect every data point and apply big data analytics.

Because ICPs have large software teams that can develop custom, higher layer automation applications, their requirements for network equipment suppliers focus on maximizing network programmability and visibility.

Increasing automation is also a goal for traditional communication service providers (CSPs), and capabilities driven by ICPs also apply to CSP networks. However, most CSPs do not have the software development capabilities typical of large ICPs, so many CSPs need their suppliers to provide automation software aligned to their network architecture and business model.

CSPs operate large mesh networks supporting a wide range of customers and services, from Layer 1 to Layer 7 and from megabits per second to hundreds of gigabits per second. Customer service requirements are dynamic and unpredictable, requiring CSPs to continuously improve agility from the application layer to the optical transport network, and from capacity planning to service fulfillment. For these CSPs, a critical enabler of agility is multi-layer automation using software-defined networking (SDN). Looking forward, CSPs will be able to extend multi-layer SDN automation all the way to the photonic layer with software-defined capacity (SDC), which automates optical capacity engineering and further increases network agility.

Returning our focus to ICPs, the following sections illustrate how the ICPs’ automation principles are driving improvements in optical DCI networking.

Everything Open and Programmable

ICPs have long preferred to control and manage devices using software tools and systems developed in-house and tailored to their needs. Initially, ICPs developed automation techniques for their networks using available tools such as the Simple Network Management Protocol (SNMP), Syslog, and Terminal Access Controller Access-Control System Plus (TACACS+), supplemented where necessary by command line interface (CLI) scripting.

To simplify automation and improve reliability and scalability, ICPs led the push for modern, open application programming interfaces (APIs) on networking equipment. A primary example is the combination of NETCONF and YANG. NETCONF is a protocol for communicating network configuration and operations data, and YANG is a modeling language for describing that data. One of the most important benefits of YANG is that it supports abstraction: a functional representation of a network element (or of an entire network or an end-to-end service) that is easy to understand and use. Well-defined abstractions are critical to programmability. YANG models are currently being standardized through various industry efforts, such as the OpenConfig working group. NETCONF and YANG were first deployed in compact optical DCI platforms in 2015 and are now considered fundamental requirements by many network operators.
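To make the division of labor concrete, the following minimal sketch builds a NETCONF `<edit-config>` request using only the Python standard library. The NETCONF base namespace is the standard one; the `urn:example:optical-dci` namespace and the `ports`/`port`/`name`/`enabled` elements are hypothetical stand-ins for whatever YANG model a real device publishes, and the choice of the running datastore as the target is likewise an assumption.

```python
import xml.etree.ElementTree as ET

# Standard NETCONF base namespace.
NETCONF_NS = "urn:ietf:params:xml:ns:netconf:base:1.0"
# Hypothetical namespace for an illustrative YANG module (not a real model).
EXAMPLE_NS = "urn:example:optical-dci"

ET.register_namespace("nc", NETCONF_NS)
ET.register_namespace("dci", EXAMPLE_NS)


def q(namespace: str, tag: str) -> str:
    """Return a namespace-qualified tag in ElementTree's {ns}tag form."""
    return f"{{{namespace}}}{tag}"


def build_edit_config(message_id: str, port_name: str, enabled: bool) -> str:
    """Build an <edit-config> RPC that sets one port's administrative state."""
    rpc = ET.Element(q(NETCONF_NS, "rpc"), {"message-id": message_id})
    edit = ET.SubElement(rpc, q(NETCONF_NS, "edit-config"))
    target = ET.SubElement(edit, q(NETCONF_NS, "target"))
    ET.SubElement(target, q(NETCONF_NS, "running"))  # assumed target datastore
    config = ET.SubElement(edit, q(NETCONF_NS, "config"))
    # Everything below <config> follows the (hypothetical) YANG model.
    ports = ET.SubElement(config, q(EXAMPLE_NS, "ports"))
    port = ET.SubElement(ports, q(EXAMPLE_NS, "port"))
    ET.SubElement(port, q(EXAMPLE_NS, "name")).text = port_name
    ET.SubElement(port, q(EXAMPLE_NS, "enabled")).text = "true" if enabled else "false"
    return ET.tostring(rpc, encoding="unicode")


if __name__ == "__main__":
    print(build_edit_config("101", "line-1", True))
```

The envelope (`rpc`, `edit-config`, `target`, `config`) belongs to NETCONF, while the payload inside `config` is shaped by the YANG model. That separation is what lets the same tooling drive devices that publish different models.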

Another emerging open protocol is gRPC, an open source remote procedure call framework that can be used to support streaming telemetry, as described below.

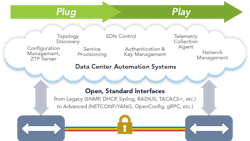

As optical DCI equipment incorporates support for open APIs and programmability, these tools can be used in numerous automation systems and tasks, from first installation ("plug") to ongoing operation ("play"), as illustrated in Figure 1.

Automate Every Task

Three examples of how automation has affected optical DCI systems are zero-touch provisioning, topology discovery, and encryption automation.

Zero-touch Provisioning. The concept of zero-touch provisioning (ZTP) is simple: Once a device has been physically installed and connected to a network, it automatically becomes fully operational. ZTP has been implemented successfully for servers and data center switches, and now ICPs are driving ZTP for optical DCI equipment.

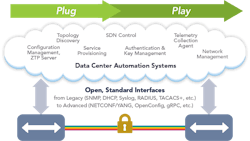

For initial equipment installation and turn-up, ZTP typically follows the steps shown in Figure 2.

The same ZTP and configuration management tools can also be used to push new configurations to network elements (NEs), reducing update time, cost, and error rates.
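The core idea of ZTP can be sketched in a few lines. The steps in Figure 2 are not reproduced here, so the flow below is an assumption based on one common pattern: a newly installed device identifies itself by serial number, a provisioning service looks up the intended configuration, and the device applies it without operator input. The `INVENTORY` table, the `Device` class, and all field names are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical inventory, prepared in advance and keyed by serial number.
INVENTORY = {
    "SN-0001": {"hostname": "dci-site-a-01", "mgmt_ip": "192.0.2.10"},
    "SN-0002": {"hostname": "dci-site-b-01", "mgmt_ip": "192.0.2.20"},
}


@dataclass
class Device:
    """A newly installed network element with no configuration yet."""
    serial: str
    config: dict = field(default_factory=dict)
    operational: bool = False


def lookup_config(serial: str) -> Optional[dict]:
    """Return the intended configuration for a serial number, if known."""
    return INVENTORY.get(serial)


def zero_touch_provision(device: Device) -> bool:
    """Fetch and apply the intended configuration without operator input."""
    intended = lookup_config(device.serial)
    if intended is None:
        return False  # unknown device: leave it unconfigured
    device.config = dict(intended)
    device.operational = True
    return True


if __name__ == "__main__":
    new_device = Device(serial="SN-0001")
    zero_touch_provision(new_device)
    print(new_device)
```

Because the intended configuration lives in the inventory rather than on the device, pushing an updated configuration later is the same operation with new inventory data.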

ZTP is extremely beneficial to ICPs that are deploying new optical DCI devices at a rapid rate into globally distributed data centers, colocation sites, and internet exchange (IX) sites where they have limited onsite staff or access. Physical installation can be contracted to remote-hands personnel, who do not need to know how to configure the equipment, and everything else is then automated remotely.

Topology Discovery. Once optical DCI devices are connected and configured, network operators need to be able to validate that they have been connected correctly and isolate faults quickly and accurately. Both tasks require an accurate view of the network topology, i.e., how every device is connected to other devices.

Topology discovery in Ethernet switching networks uses the Link Layer Discovery Protocol (LLDP), which enables switches to identify each neighbor on each Ethernet (Layer 2) link. But when switches are connected across an optical DCI network, that underlying (Layer 1) network is typically invisible to LLDP; the switches learn only that they are connected to each other but nothing about the optical devices in between, as illustrated in the top half of Figure 3.

To fill in that information gap, leading optical DCI equipment now incorporates a simple but effective feature called LLDP snooping. As LLDP packets are exchanged through a DCI link, the optical DCI system reads the packets without altering them, and then reports the contents of each packet to the topology management system, along with an identifier indicating which network port on the optical DCI system received it. The topology management system can then "connect the dots" to know exactly how the Layer 2 link traverses the optical DCI systems in the middle, as illustrated in the bottom half of Figure 3. LLDP snooping simplifies troubleshooting of cabling mistakes during initial installation and fault isolation over time.

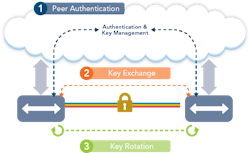
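The "connect the dots" step can be sketched as a simple lookup. This is an illustration rather than any vendor's implementation: it assumes each optical DCI system reports only the LLDP packets it receives on its client-facing port from the directly attached switch, and the `SnoopReport` fields and device names are hypothetical.

```python
from typing import List, NamedTuple, Tuple

Endpoint = Tuple[str, str]  # (device name, port name)


class SnoopReport(NamedTuple):
    """One snooped LLDP packet, as reported by an optical DCI system."""
    dci_system: str      # DCI system that saw the packet
    dci_port: str        # port on that system that received it
    sender_chassis: str  # switch identified inside the LLDP packet
    sender_port: str     # switch port identified inside the LLDP packet


def stitch_path(l2_link: Tuple[Endpoint, Endpoint],
                reports: List[SnoopReport]) -> List[Endpoint]:
    """Insert the DCI ports into a Layer 2 link learned by the switches."""
    # Map each switch port to the DCI port that received its LLDP packets.
    seen_at = {
        (r.sender_chassis, r.sender_port): (r.dci_system, r.dci_port)
        for r in reports
    }
    end_a, end_b = l2_link
    path = [end_a]
    if end_a in seen_at:
        path.append(seen_at[end_a])
    if end_b in seen_at:
        path.append(seen_at[end_b])
    path.append(end_b)
    return path


if __name__ == "__main__":
    link = (("switch-a", "eth1"), ("switch-b", "eth1"))
    reports = [
        SnoopReport("dci-1", "client-1", "switch-a", "eth1"),
        SnoopReport("dci-2", "client-1", "switch-b", "eth1"),
    ]
    for hop in stitch_path(link, reports):
        print(hop)
```

A cabling mistake shows up in the same data: if the reported DCI port for a switch port differs from the one in the installation plan, the mismatch points directly at the miswired connection.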

Automating Encryption. Leading compact DCI systems also have begun to incorporate built-in hardware support for line-rate encryption, enabling all data to be encrypted as it travels between data centers. To do this securely, each network element must be able to integrate seamlessly into an ICP’s global security infrastructure so that it can be authenticated before key exchange, data encryption, and ongoing key rotation (see Figure 4). Once encryption is operational, the network operator’s security management systems may need to enable encryption on additional ports, update security policies, and monitor encryption performance. All of these functions can be automated, for example via a NETCONF interface, enabling encrypted links to be set up quickly and managed efficiently.

Collect Everything, Apply Big Data Analytics

Automation is equally critical to ongoing network management. In today’s networks, performance management, fault management, and troubleshooting typically rely on highly skilled network engineers working with limited data, which requires a substantial amount of hands-on activity. Good network management systems (NMSs) make the process simpler and reduce the cost and time required for fault isolation and recovery. ICPs nonetheless see opportunities to improve on the current state of the art by applying their automation skills.

The new approach relies on two key principles: collect everything and apply big data analytics.

Collect Everything. With the falling cost of compute power and storage, there are few remaining barriers to collecting every bit of potentially relevant data about networks. Every network element can incorporate a powerful processor capable of collecting, aggregating, and transmitting all performance data, error logs, and anything else that might be useful. Massive storage in cloud data centers makes it possible for all that data to be collected and stored indefinitely, and ICPs have the expertise to synthesize and analyze the resulting large data sets.

However, collecting all available data efficiently requires new tools and new protocols. Looking forward, ICPs will expect all network elements, including optical DCI systems, to support continuous transmission of data to cloud-based data collection agents, a process known as streaming telemetry. As noted above, a preferred protocol to support streaming telemetry is gRPC.

As a result, leading optical DCI systems are now beginning to implement streaming telemetry with gRPC, and some of the network operators who use them are already setting target dates to stop using SNMP in day-to-day network management.
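The difference from polling is that the device pushes samples as it produces them and the collector simply consumes the stream. The sketch below illustrates that shape without using gRPC itself: a Python generator stands in for a server-streaming call, and the `Sample` fields, the path string, and the readings are all hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, NamedTuple, Tuple


class Sample(NamedTuple):
    """One telemetry data point pushed by a network element."""
    timestamp: int  # seconds since the start of the stream
    path: str       # which counter or gauge the value belongs to
    value: float


def telemetry_stream() -> Iterator[Sample]:
    """Stand-in for a server-streaming call: yields samples as produced."""
    readings = [(0, -2.1), (10, -2.3), (20, -2.2)]  # made-up receive power values
    for timestamp, rx_power in readings:
        yield Sample(timestamp, "/ports/line-1/rx-power-dbm", rx_power)


def collect(stream: Iterable[Sample]) -> Dict[str, List[Tuple[int, float]]]:
    """Append every sample to a per-path time series, discarding nothing."""
    series: Dict[str, List[Tuple[int, float]]] = defaultdict(list)
    for sample in stream:
        series[sample.path].append((sample.timestamp, sample.value))
    return dict(series)


if __name__ == "__main__":
    for path, points in collect(telemetry_stream()).items():
        print(path, points)
```

In a real deployment the generator would be replaced by a streaming subscription to the device, and the in-memory dictionary by a time series store.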

Apply Big Data Analytics. ICPs are working to automate the initial steps in trouble isolation and repair using smart software, data analytics, and machine learning. A smart software agent that has learned how engineers responded to problems in the past can assess alarms, collect and analyze relevant data points, and focus attention on the alarm most likely to be at the source of the problem. The agent may even be able to take initial actions such as shutting down a port with excessive errors. In an ideal scenario, before a human network engineer gets involved, the root cause of the problem has already been isolated and contained by a temporary workaround. The engineer can focus instead on the bigger issues of how to implement a permanent fix and how to reduce the probability of similar failures in the future.
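As a simplified illustration of the first automated action, the sketch below recommends shutting down any port whose recent error counts are excessive. A fixed threshold on a short moving average stands in here for the learned behavior described above; the function name, the window size, and the sample data are hypothetical.

```python
from statistics import mean
from typing import Dict, List


def triage(error_history: Dict[str, List[int]],
           threshold: float,
           window: int = 3) -> Dict[str, str]:
    """Recommend an action per port from its most recent error counts."""
    actions = {}
    for port, counts in error_history.items():
        recent = counts[-window:]
        # Act only when a full window of data shows sustained errors.
        if len(recent) == window and mean(recent) > threshold:
            actions[port] = "shutdown"
        else:
            actions[port] = "monitor"
    return actions


if __name__ == "__main__":
    history = {
        "client-1": [0, 1, 0, 2],         # background noise
        "client-2": [5, 120, 300, 250],   # sustained error burst
    }
    print(triage(history, threshold=100))
```

Averaging over a window rather than reacting to a single sample keeps one transient burst from taking a port out of service.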

Once streaming telemetry is widely deployed and massive amounts of historical data are available, big data analytics techniques can be applied to identify trends or opportunities for optimization. While the ICP network operators may not know exactly what the new applications and benefits of such big data analysis will be, they are excited about having the data readily available to answer new questions as they arise.

Summary

Continuous efficiency improvement through automation and big data analytics will deliver significant operational benefits to ICPs and other network operators. To support these goals, optical DCI systems need to be fully open and programmable and support automation tasks such as ZTP, topology discovery, encryption automation, and streaming telemetry. Looking forward, we can expect that new automation capabilities, including software-defined capacity, will bring ICP and CSP networks closer to a vision of fully autonomous cognitive networking.

Jay Gill is principal product marketing manager at Infinera.