Optical DCI Architecture: Point-to-Point versus ROADM

Chain reactions and snowball effects aren't just staple plot devices in movies where musclebound heroes and villains burn things and blow stuff up; the Internet revolution is a real-world chain reaction. The communications network industry talks in terms of "exploding" demand and "exponential" traffic growth with good reason. In essence, small phenomena such as sharing holiday snaps online eventually trigger much bigger results.

Burgeoning Internet traffic has in turn triggered another snowball effect in the data center world. This effect goes beyond growing demand for data center services: more and more traffic is passing among data centers, as well as to and from internet exchange points (IXPs). These applications are known as data center interconnect (DCI).

Service demands vary between data centers. When just two data centers are connected, a single fiber is all that's needed between them. But when multiple data centers are fully interconnected, each pair of sites needs its own fiber. With four data centers, six fibers are needed. The more data centers are interconnected, the more complex the web of interconnection becomes.
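The fiber count above follows from connecting every pair of sites directly. A minimal Python sketch, assuming a full mesh with one fiber link per pair of sites; the site names are hypothetical placeholders, not taken from the analysis:

```python
from itertools import combinations

def mesh_links(sites):
    """Return every unordered pair of sites; a full mesh needs one link per pair."""
    return list(combinations(sites, 2))

# Hypothetical site names, used only for illustration.
sites = ["DC-A", "DC-B", "DC-C", "DC-D"]
links = mesh_links(sites)
print(len(links))  # 6 links for four sites, i.e. n * (n - 1) / 2
```

Enumerating the pairs rather than only counting them also shows which sites each fiber joins, which is where the "web" of interconnection comes from.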

Virtually all data centers use dense wavelength-division multiplexing (DWDM) networks to meet their DCI needs. The ability to carry vast amounts of traffic at an economical price makes DWDM technology the obvious choice. Two competing DWDM architectures exist for providing DCI bandwidth:

- Point-to-point (P2P)

- Reconfigurable optical add/drop multiplexer (ROADM)

With a P2P design, each site has one DWDM multiplexer and one fiber pair for each site to which it is connected. With a ROADM design, each site has a single two-degree ROADM, and all sites connect to a single fiber ring. The ROADM can either drop traffic into its data center or pass it directly through to the next node on the ring.

In this analysis, we focus on the cost of implementing each scenario. With a disaggregated product portfolio, the equipment deployed can be sized to the scenario, leaving very few unused resources. A converged platform, by contrast, sometimes includes resources that go unused. This analysis is therefore based on disaggregated network equipment, with each node optimized so that we can isolate the impact of the architecture.

Let's consider three scenarios in which three, four, and five data center sites are connected.

Three-Site Scenario

A comparison of three-node P2P and ROADM architectures is shown in Figure 1. Note that there are two transponders and two DWDM multiplexers at each site for the P2P architecture, with a total of three fiber connections.

These equipment counts are similar for the ROADM architecture, with two transponders and a two-degree ROADM at each site, with a total of three fiber connections. Instead of the two DWDM multiplexers, this small ROADM configuration consists of one splitter-coupler optical muxponder and two wavelength-selective switch (WSS) devices.

Four-Site Scenario

A comparison of four-node P2P and ROADM architectures is shown in Figure 2. Note that there are three transponders and three DWDM multiplexers at each site for the P2P architecture, with a total of six fibers. Contrast this with the ROADM architecture, which needs just three transponders and a two-degree ROADM at each site, with a total of four fibers.

Five-Site Scenario

A comparison of five-node P2P and ROADM architectures is shown in Figure 3. Note that there are four transponders and four DWDM multiplexers at each site for the P2P architecture, with a total of 10 fibers. Contrast that with the ROADM architecture, which needs four transponders and a two-degree ROADM at each site, with a total of five fibers. These scenarios illustrate that as the number of interconnected sites in a DCI architecture increases, a ROADM network design provides a more efficient approach to the fiber plant than P2P connectivity.
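The counts quoted in the three scenarios follow a simple pattern. The sketch below reproduces them in Python, assuming a full mesh with a single wavelength between each pair of sites for P2P and a single ring for ROADM; the function and key names are illustrative, not part of any product or tool:

```python
def p2p_counts(n):
    """Per-site equipment and total fibers for a fully meshed P2P design."""
    return {
        "transponders_per_site": n - 1,
        "muxes_per_site": n - 1,          # one DWDM multiplexer per remote site
        "fibers_total": n * (n - 1) // 2, # one fiber link per pair of sites
    }

def roadm_counts(n):
    """Per-site equipment and total fibers for a single-ring ROADM design."""
    return {
        "transponders_per_site": n - 1,
        "roadms_per_site": 1,             # one two-degree ROADM per site
        "fibers_total": n,                # one ring span per site
    }

for n in (3, 4, 5):
    print(n, p2p_counts(n), roadm_counts(n))
```

For three, four, and five sites this gives 3, 6, and 10 fibers for P2P against 3, 4, and 5 for ROADM, matching the figures described above.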

Comparison

To evaluate these two optical architectures more closely, let's examine the three-year total cost of ownership (TCO) for each scenario. Three cost categories are used for this analysis: equipment; utilities/power and rent; and dark fiber. The graphs in Figures 4–6 plot the relative costs of each category against an increasing number of sites for both the P2P and ROADM architectures.

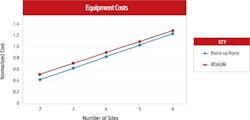

Figure 4 shows that equipment costs are fairly similar between the two architectures regardless of the number of sites included in the design. Even small ROADM configurations are more complex than the simple DWDM multiplexers used in the P2P architecture, so ROADM equipment costs are consistently slightly higher.

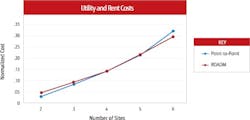

Figure 5 shows that utilities and rent cost slightly more for ROADM architectures up to four sites, after which P2P architectures become slightly more expensive. The DWDM multiplexers used in the P2P architecture are passive, whereas the ROADM equipment is active, so the P2P scenarios use less power and have lower utility costs. However, as the number of sites increases, the number of rack-unit devices at each P2P site grows, while the number of ROADMs at each site stays the same. The growing rent for these additional devices pushes the combined utilities and rent cost of the P2P architecture above that of the ROADM architecture.

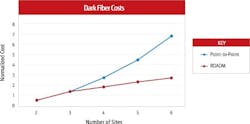

Figure 6 shows similar dark fiber costs for up to three sites; above three sites, the relative cost of the P2P architecture escalates. This trend was already visible in Figures 2 and 3, where fiber usage grows much faster with site count in the P2P architecture than in the ROADM architecture.
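The shape of the dark fiber curve can be approximated from the link counts alone. The following Python sketch assumes every fiber link is leased at the same rate regardless of span length, which is a simplification; the lease rate is a caller-supplied placeholder, not a figure from this analysis:

```python
def fiber_links(n, arch):
    """Number of fiber links: full mesh for P2P, one ring span per site for ROADM."""
    if arch == "p2p":
        return n * (n - 1) // 2
    if arch == "roadm":
        return n
    raise ValueError(f"unknown architecture: {arch}")

def fiber_lease_cost(n, arch, lease_per_link_per_year, years=3):
    """Dark fiber lease cost over the period, assuming a uniform rate per link."""
    return fiber_links(n, arch) * lease_per_link_per_year * years

def p2p_to_roadm_fiber_ratio(n):
    """Ratio of P2P to ROADM fiber links; the lease rate cancels out."""
    return fiber_links(n, "p2p") / fiber_links(n, "roadm")

for n in range(3, 7):
    print(n, p2p_to_roadm_fiber_ratio(n))
```

Under these assumptions the ratio is 1.0 at three sites and grows by 0.5 with each added site, which is consistent with the two curves coinciding at three sites and diverging beyond that.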

Total Cost of Ownership

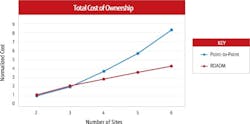

Using standard costs for required optical equipment outlays, as well as typical lease rates for facilities and fiber, a graph comparing the three-year total cost of ownership (TCO) of the P2P and ROADM architectures is shown in Figure 7. In this graph, the x-axis indicates the number of sites (with a single wavelength between each pair of sites) and the y-axis represents the normalized three-year TCO. While there is little difference between them when just a few sites are connected, the ROADM architecture holds an escalating cost advantage over P2P as the number of sites increases beyond three. This cost differential is attributable primarily to dark fiber costs and becomes more significant as architectures become more "meshy." For example, in a six-site network design, the cost of a P2P architecture is approximately twice that of ROADM.

Figure 7. TCO comparison.

Additional Considerations

The comparison of the two DCI architectures doesn't end with equipment, utilities/rent, and dark fiber costs. Other aspects like the amount of bandwidth and the cost of operation should be explored.

One argument against ROADM architecture is that it offers fewer wavelengths, and thus less bandwidth, between each pair of sites. A ROADM site will have 88, 96, or possibly 128 wavelengths of 100G/200G available, depending on the technology used. Thus, with 128 wavelengths, each site in a four-node scenario will have an average of about 42 (128/3, rounded down) wavelengths to each of the other nodes. That's a lot of bandwidth and, in most cases, will satisfy wavelength and bandwidth needs for years to come.
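The same estimate can be repeated for the other wavelength counts mentioned. This Python sketch follows the simple even-split approach used above, dividing a site's wavelengths equally among its peers and rounding down; it is not a ring wavelength-assignment calculation, and the function name is illustrative:

```python
def wavelengths_per_peer(wavelengths_per_site, sites):
    """Evenly split a site's wavelengths across its (sites - 1) peers, rounding down."""
    if sites < 2:
        raise ValueError("need at least two sites")
    return wavelengths_per_site // (sites - 1)

# Wavelength counts named in the text, evaluated for a four-node network.
for capacity in (88, 96, 128):
    print(capacity, wavelengths_per_peer(capacity, 4))
```

For a four-node network this yields 29, 32, and 42 wavelengths per peer for 88, 96, and 128 wavelengths respectively.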

Additionally, performing add-and-change operations in a P2P design requires fibers to be added or moved manually. More importantly, as demands are added, the power balancing of the network needs to be adjusted. Consequently, a skilled person must be dispatched to each site to adjust the network for proper power balance. This becomes more difficult and costly as the numbers of demands and nodes increase. In contrast, a ROADM network can be reconfigured remotely from a centralized site and adjusts power automatically as new demands are added, eliminating the costly truck roll. As the number of sites increases, these operational benefits become more apparent: the larger the network, the more significant the operational savings.

The use of disaggregated equipment platforms in the network design is not necessary, but it does simplify the transition from P2P to ROADM architectures. With an open, modular, and scalable approach, disaggregation enables efficient changes and upgrades as warranted by the bandwidth demand and growth of the network.

Compare Carefully

This paper has presented a cost comparison between P2P and ROADM architectures for DCI applications. Based on the site scenarios provided, a ROADM architecture has equipment costs that are slightly greater than those of a P2P design, and utility and rent costs that are fairly comparable. However, the fiber costs of a P2P architecture are significantly, and increasingly, greater as the number of sites increases. According to this TCO analysis, network design scenarios of four or more sites will benefit economically from using a ROADM architecture rather than P2P.

Additionally, the choice of architecture should take into account medium- and long-term plans over a reasonably predictable timeframe to balance short-term against long-term costs. If there is a high likelihood of needing a ROADM architecture in the future, it may be simpler and ultimately less costly to begin that migration today.

Jeff Babbitt is principal optical solutions architect in the Optical Business Unit at Fujitsu Network Communications. He has more than 20 years of experience in the telecommunications industry. He has been instrumental in the modeling of various aspects of a telecom network, network optimization, and technology.