Test vendors make play for services

Test equipment manufacturers say they have noticed in the last year a subtle shift in the industry from FTTX testing to triple play testing. While that may seem like a relatively minor distinction, it is nevertheless a critical one, say test vendors. Thanks to the recent FCC ruling on fiber to the curb (FTTC), customers can still receive triple play services even if they don’t have fiber all the way to their homes. Thus, many test vendors are now focusing their energies on triple play, which is much broader in scope.

Moreover, infrastructure testing is now just part of the game. It’s no longer enough to verify the functionality of the physical plant; you also must test the services it supports. Companies like Agilent Technologies, Acterna (recently acquired by JDS Uniphase), and Spirent Communications are now committed to measuring and monitoring the quality of the customer experience.

“We believe that the future is not going to be necessarily on the infrastructure test but a test of the customer’s experience,” explains Peter Schweiger, business development engineer for Agilent’s optical-network test products (Palo Alto, CA). “You need to do tests that provide quality of service from a customer point of view. You might look at the bit-error rates and all of that, but at the end of the day, a guy wants to invite his buddies over to watch the Super Bowl in high definition, and that picture had better be perfect.”

“People need to look past testing the physical pipe,” adds Acterna marketing vice president Jim Nershook. “You could have a great copper or fiber pipe, and still your applications (your IPTV, your VoIP, and your data) perform poorly. This is really a big paradigm shift. It’s no longer sufficient just to test the physical pipe and say, ‘Okay, it works.’”

The next generation of test equipment should enable technicians to correlate service degradations with impairments in the physical plant. Network congestion, for example, can affect IPTV services. “IP video and VoIP are very packet-sensitive applications, so latency and packet loss can ruin the customer experience,” says Nershook.
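To make Nershook's point about packet sensitivity concrete, the following is a minimal sketch of how a probe might derive packet loss and jitter from a captured media stream. It is not any vendor's implementation. The jitter update follows the running interarrival estimator defined for RTP in RFC 3550; the function names and the sample values are hypothetical, and sequence-number wraparound is ignored for brevity.

```python
from typing import Sequence


def loss_ratio(seq_numbers: Sequence[int]) -> float:
    """Fraction of packets missing between the lowest and highest sequence number seen.

    Assumption: sequence numbers do not wrap around within the capture.
    """
    if not seq_numbers:
        return 0.0
    expected = max(seq_numbers) - min(seq_numbers) + 1
    received = len(set(seq_numbers))  # duplicates count once
    return (expected - received) / expected


def interarrival_jitter(send_times: Sequence[float], recv_times: Sequence[float]) -> float:
    """Running jitter estimate, J += (|D| - J) / 16, as in RFC 3550.

    D is the change in transit time between consecutive packets.
    Both sequences must use the same time unit (for example, milliseconds).
    """
    jitter = 0.0
    for i in range(1, min(len(send_times), len(recv_times))):
        d = (recv_times[i] - recv_times[i - 1]) - (send_times[i] - send_times[i - 1])
        jitter += (abs(d) - jitter) / 16.0
    return jitter


if __name__ == "__main__":
    # Hypothetical capture: packet 3 never arrived, packet 2 arrived 16 ms late.
    print(loss_ratio([1, 2, 4, 5]))
    print(interarrival_jitter([0, 20], [100, 136]))
```

A service-assurance system could compare these figures against its own thresholds for voice and video; the thresholds themselves are a provider decision and are not specified here.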

Moreover, he says, a service provider wants to make sure technicians are dispatched in the field not to find problems but to fix them. “And you can only do that when you specifically say, ‘Okay, you have a problem here, and it correlates to this,’” he asserts.

A key challenge facing service providers entering the triple play arena is video. The RBOCs in particular face formidable competition from the cable multiple system operators (MSOs), who are already adept at delivering quality video services. To entice customers to select their services, the RBOCs must provide a video service that is at least as good as their competitors’, if not better.

That is not an insignificant challenge, say test vendors, because providers must take into account the psychology of the end customer, who is more apt to complain about poor video service than poor voice service. “We are all conditioned by our cell phones to accept mediocre quality,” admits Schweiger. “But video is really the killer app. Especially when you’re paying specifically for a high-definition signal, you won’t tolerate anything but perfection.”

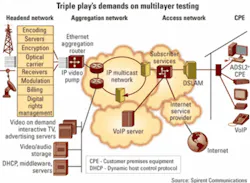

According to Jason Collins, senior marketing manager at Spirent (Rockville, MD), there are two types of video testing. The first is traditional infrastructure testing to measure the quality of the received video service. The second type is entirely new and stems from some service providers’ decision to deliver video traffic via IP multicast technology.

“What happens is, to change a channel in these video services, you’re going to be doing a multicast join to join the stream that you want to change the channel to,” says Collins. “There’s a lot of work going on right now to ensure that that multicast join happens very quickly. Today, we have the quality of service testing, and our direction is that we’re adding the multicast testing capabilities that would be needed for the video services.”
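The multicast join Collins describes can be illustrated with standard sockets. The sketch below is an assumption-laden illustration, not Spirent's test method: it joins an IPv4 multicast group with the `IP_ADD_MEMBERSHIP` socket option and times how long the first datagram takes to arrive. The group address, port, and function names are hypothetical.

```python
import socket
import struct
import time
from typing import Optional


def build_membership_request(group: str, interface: str = "0.0.0.0") -> bytes:
    """Pack the 8-byte ip_mreq structure: group address, then local interface address."""
    return struct.pack("4s4s", socket.inet_aton(group), socket.inet_aton(interface))


def measure_join_latency(group: str, port: int, timeout_s: float = 2.0) -> Optional[float]:
    """Join `group` and return seconds until the first datagram arrives, or None on timeout.

    This times only the network side of a channel change (join sent to first
    packet received); decoder buffering is outside its scope.
    """
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    try:
        sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
        sock.bind(("", port))
        sock.settimeout(timeout_s)
        start = time.monotonic()
        # The operating system sends the IGMP membership report when this option is set.
        sock.setsockopt(socket.IPPROTO_IP, socket.IP_ADD_MEMBERSHIP,
                        build_membership_request(group))
        try:
            sock.recvfrom(2048)
        except socket.timeout:
            return None
        return time.monotonic() - start
    finally:
        sock.close()


if __name__ == "__main__":
    # Hypothetical channel: group 239.1.1.1, UDP port 5004.
    print(measure_join_latency("239.1.1.1", 5004))
```

Repeating the measurement across many channels and at different load levels would give a distribution of join times rather than a single figure.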

An additional challenge is the implementation of a mean opinion score (MOS) for video services. Today’s service providers rely on MOSs to measure the customer’s experience on voice services, but now they have to develop the same technique for video. “It’s very complicated, even more complicated than trying to figure out what good voice quality is; there’s so much in human perception of the video,” admits Collins. “It gets into a lot of psychology.”
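For voice, one widely used way to estimate a MOS without a listening panel is the ITU-T G.107 E-model, which produces a transmission rating factor R and maps it to an estimated MOS. The article does not say which voice model the providers use, so the sketch below is offered only as an example of the kind of mapping that does not yet have a settled video counterpart. The function name is hypothetical; the conversion formula is the one given in G.107.

```python
def r_factor_to_mos(r: float) -> float:
    """Convert an E-model rating factor R to an estimated MOS (ITU-T G.107 mapping)."""
    if r <= 0:
        return 1.0
    if r >= 100:
        return 4.5
    return 1.0 + 0.035 * r + r * (r - 60.0) * (100.0 - r) * 7e-6


if __name__ == "__main__":
    # R of about 93.2 is the E-model result with all parameters at their default values.
    print(round(r_factor_to_mos(93.2), 2))  # 4.41
    print(round(r_factor_to_mos(70.0), 2))
```

Computing R itself requires delay, loss, and codec impairment inputs, which is where measurements such as packet loss and latency feed in.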

The need to test service quality has also heightened the need for multilayer testing, says Collins. “Poor voice quality could be the result of high bit-error rates on Layer 1. Or maybe packets are dropped because of a queuing misconfiguration on a router somewhere; that’s a Layer 3 problem. Or it could be that they have a mismatch in the codec from one end to the other, which is a Layer 7 problem,” he explains. “You really have to go through all of those layers of testing to figure out what exactly it is. And sometimes it could be multiple things, because problems tend to ripple up.”

Acterna also has been working on what it calls its application-aware test and service assurance strategy. “Most networks have an EMS [element-management-system] capability where each critical network element, you can see what it’s doing. In our opinion, that’s not enough anymore,” maintains Nershook. “We really need to see how the application performs through all the network elements. We like to say, ‘That’s actually a look-through strategy rather than just a look-down strategy.’ ”

Like Spirent, Acterna also has developed a service assurance portfolio. “Once your network is up and running, these probes will guarantee that it stays up and running,” Nershook notes. “In a VoIP system, they can actually determine the delay and latency. In terms of VoIP calls, how many calls are being processed? They can place calls in the network. You can do some proactive monitoring of your network rather than just being completely reactive all the time.”

Schweiger reports that Agilent is also working to develop equipment to better evaluate triple play services. In the last 12 months, the company has launched a variety of testers for the physical layer, including a low-cost FTTX OTDR. “Now going forward, you’re going to see a new [handheld] VoIP tester and then an increased focus on data, whether that be the computer connection or the IPTV.”

The key is to develop flexible equipment, adds Schweiger. “That’s a challenge because usually an instrument that is flexible costs a little bit more than an instrument that is designed specifically for one task,” he reasons.

Reducing customers’ test costs remains critical for test vendors. “We’re looking at decreasing the cost of deployment,” says Nershook, “and decreasing the cost of [customers] finding an issue within their network...once you can prove your value there, that’s where you will see the business.”

Despite carriers’ growing interest in testing services, testing the physical plant is still critical. With the increased deployment of PONs, even the basic handheld power meter must make more sophisticated measurements today.

Fiber to the home (FTTH) architectures employ three wavelengths: 1310 nm upstream and 1490 nm downstream for voice and packet transmission, and 1550 nm downstream for RF video. “More and more what we see in the field is that this video transmission wavelength is not going to be used or is not going to be used 100% of the time,” reports Benoit Masson, senior product manager at EXFO (Quebec City). “That means that in some systems, instead of using 1310 and 1550, they are using 1310 and 1490. It’s not a big shift in wavelength, but it changes the transmission pattern. In older fiber, at 1490, you are closer to what we call the water peak, where you have a big attenuation on the fiber.” Such testing requires the use of a tri-band power meter that covers all three wavelengths and enables the technician to see up to three measurements at the same time.

Moreover, typical power measurements are taken on a continuous wave, but in FTTX networks, the power meter must measure a modulated signal since modulation can affect power readings, says Masson. The optical-network terminal (ONT) that sits at the customer premises does not transmit continuously; it transmits only when the optical line terminal (OLT) at the central office (CO) tells it to transmit. “In a BPON, you may have as little as one ATM cell that is transmitted by the ONT every 100 msec. So if you use a standard power meter with a standard measuring technique, you will not be able to tell whether the ONT is working well or not,” he adds.
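A rough calculation shows why an averaging power meter struggles with Masson's one-cell-every-100-msec case. The sketch below assumes a 53-byte ATM cell, a 155.52-Mbit/sec upstream line rate (one of the BPON upstream rates; the article does not state which applies), and no light between bursts. It also ignores per-cell upstream overhead. The function names are hypothetical.

```python
import math

ATM_CELL_BITS = 53 * 8        # one ATM cell
UPSTREAM_BPS = 155.52e6       # assumed BPON upstream line rate
BURST_INTERVAL_S = 0.100      # one cell every 100 msec, per Masson's example


def duty_cycle(burst_bits: int, line_rate_bps: float, interval_s: float) -> float:
    """Fraction of time the ONT laser is on."""
    return (burst_bits / line_rate_bps) / interval_s


def average_power_offset_db(duty: float) -> float:
    """How far the time-averaged reading sits below the in-burst power, in dB."""
    return 10.0 * math.log10(duty)


def average_power_dbm(burst_power_dbm: float, duty: float) -> float:
    """Reading a continuous-wave (averaging) meter would show for a given in-burst power."""
    return burst_power_dbm + average_power_offset_db(duty)


if __name__ == "__main__":
    d = duty_cycle(ATM_CELL_BITS, UPSTREAM_BPS, BURST_INTERVAL_S)
    print(f"cell duration: {ATM_CELL_BITS / UPSTREAM_BPS * 1e6:.2f} microseconds")
    print(f"offset: {average_power_offset_db(d):.1f} dB")
    print(f"0 dBm burst reads as {average_power_dbm(0.0, d):.1f} dBm on average")
```

Under these assumptions the cell lasts under 3 microseconds, so the averaged reading falls roughly 45 dB below the true burst power, which is why the meter has to detect and measure the burst itself.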

Today’s power meters must also provide what Masson calls pass-through testing. “The only way we found to allow a technician to test all wavelengths, all signals, was doing a pass-through,” he says. “You have one connector that’s connected toward the CO and another that’s connected toward the house. If you open the link and you connect only to the ONT at the house, the ONT will stop transmitting because it only responds to the OLT at the CO.”

While EXFO and other test equipment vendors have made strides in the installation testing of FTTX networks, the troubleshooting phase will provide additional challenges, reports Etienne Gagnon, vice president of optical layer product management at EXFO. When the RBOC trio of Verizon, BellSouth, and SBC decided to move forward with FTTX networks, they did so because deploying fiber is less expensive than deploying high-bandwidth DSL from an opex point of view, he says.

“Now you have a completely different level of technician that has to be fiber-aware, that has to troubleshoot fiber, and I think that’s one of the biggest challenges carriers have today,” he maintains. “The installation part was done at least at the beginning by well-trained technicians, but the troubleshooting will be done by every technician in the field. We’re working with them to make our test equipment simple to use and not as expensive as construction tools,” says Gagnon, noting that EXFO plans to launch additional test tools in the near future.