Moving beyond 10-Tbps line capacity

By BERTRAND CLESCA AND WILLIAM SZETO

The latest update of Cisco’s Visual Networking Index confirms, once again, the skyrocketing need for bandwidth. Global IP traffic has increased eightfold over the past five years and will increase threefold over the next five years, the report predicts [1]. This traffic increase directly affects the capacity required of the optical backbone networks that must support the long-distance transmission of all these services. We’ll discuss how capacity demand is addressed today and explore the next steps to further increase backbone-network capacity, as well as the associated timeframe.

Network capacity offered by 100G technology

Network operators have just started to turn to 100G coherent technology to give them the capacity headroom they need. This technology meets the high-capacity networking needs of today and tomorrow more efficiently than traditional 10G technology. The main drivers for 100G transport include:

- Maximization of fiber use.

- Minimization of transport cost.

- Transport of 100G client signals (e.g., from 100G routers).

100G development appears to have avoided the “mistake” of 40G, where multiple modulation formats competed. This is especially true for long-haul applications, where polarization-multiplexed quadrature phase-shift keying (PM-QPSK) clearly prevails. This commonality of approach will ensure mass production and lower cost for the basic components required to build 100G interfaces.

The basic principle of PM-QPSK trades speed for parallelism at the expense of added complexity. The parallelism that PM-QPSK offers (two states of optical polarization, 2 bits per symbol) enables 100G wavelengths to ride on a 50-GHz frequency grid.
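The trade between speed and parallelism can be made concrete with a little arithmetic. The sketch below is illustrative only: the function name is hypothetical, and the 12% overhead for framing and FEC is an assumption chosen for the example, not a figure from this article.

```python
# Illustrative arithmetic only: PM-QPSK carries bits in parallel, so the
# symbol rate the electronics must handle is a fraction of the bit rate.

POLARIZATIONS = 2          # two states of optical polarization (from the text)
BITS_PER_QPSK_SYMBOL = 2   # QPSK encodes 2 bits per symbol (from the text)


def symbol_rate_gbaud(bit_rate_gbps, polarizations=POLARIZATIONS,
                      bits_per_symbol=BITS_PER_QPSK_SYMBOL):
    """Return the symbol rate (GBaud) needed to carry bit_rate_gbps."""
    bits_per_symbol_period = polarizations * bits_per_symbol
    return bit_rate_gbps / bits_per_symbol_period


payload_rate = symbol_rate_gbaud(100.0)  # payload only, no overhead

# ASSUMPTION: about 12% framing/FEC overhead, chosen for illustration only.
ASSUMED_OVERHEAD = 0.12
line_rate = symbol_rate_gbaud(100.0 * (1.0 + ASSUMED_OVERHEAD))

print(f"Payload-only symbol rate: {payload_rate:.1f} GBaud")
print(f"With assumed overhead:    {line_rate:.1f} GBaud")
```

Under these assumptions, the four parallel bits per symbol period bring the symbol rate down to a few tens of gigabaud, which is what lets a 100G wavelength fit on a 50-GHz grid.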

The number of transmitted 100G wavelengths thus depends on the optical bandwidth of the line equipment, i.e., the in-line optical amplifiers placed along the route to periodically boost the optical power of the signals. These are typically erbium-doped fiber amplifiers (EDFAs). Although an EDFA effectively amplifies many channels at once, its optical bandwidth is intrinsically limited to about 36 nm. Assuming 50-GHz channel spacing, the EDFA bandwidth therefore typically allows 88 optical channels, hence the 8.8-Tbps line capacity offered today by several equipment vendors.
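The channel count follows from converting the amplifier bandwidth from wavelength to frequency. The sketch below is a rough estimate, not a design calculation: the 1,550-nm center wavelength is an assumption (the article does not state one), and the function names are hypothetical.

```python
import math

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def bandwidth_nm_to_ghz(bandwidth_nm, center_nm):
    """Approximate a wavelength span (nm) as a frequency span (GHz).

    Uses delta_f ~= c * delta_lambda / lambda**2, which is reasonable
    when the span is small compared with the center wavelength.
    """
    delta_lambda_m = bandwidth_nm * 1e-9
    center_m = center_nm * 1e-9
    return C_M_PER_S * delta_lambda_m / center_m**2 / 1e9


def channel_slots(bandwidth_nm, spacing_ghz, center_nm=1550.0):
    """Count whole grid slots that fit in the amplifier bandwidth.

    ASSUMPTION: the 1550-nm default center wavelength is illustrative.
    """
    return math.floor(bandwidth_nm_to_ghz(bandwidth_nm, center_nm) / spacing_ghz)


span_ghz = bandwidth_nm_to_ghz(36.0, 1550.0)
slots = channel_slots(36.0, 50.0)

# Line capacity for the 88 channels quoted in the text, at 100 Gbps each.
line_capacity_tbps = 88 * 100 / 1000

print(f"36 nm is roughly {span_ghz / 1000:.2f} THz -> about {slots} slots at 50 GHz")
print(f"88 channels x 100G = {line_capacity_tbps:.1f} Tbps")
```

The estimate lands at roughly 90 slots, in line with the 88 channels quoted above; the small difference depends on the exact band edges a given amplifier design supports.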

Increasing line capacity

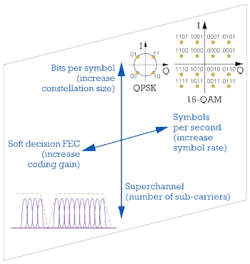

Given the spectral bottleneck EDFAs impose, new approaches are necessary to increase spectral efficiency at the terminal/interface levels. Figure 1 illustrates the most commonly explored axes of development to increase line capacity beyond 8.8 Tbps. Given the speed limitations of current electronics, increasing the symbol rate is not an option for the midterm. That’s why, in addition to improving the performance of forward error correction (FEC) by moving from hard to soft decision approaches, most vendors are pursuing the following two paths:

- Increasing the number of bits per symbol by developing higher-order modulation formats for higher channel capacity. But along with their higher complexity and cost compared to the current PM-QPSK option, multilevel modulation formats such as 16-QAM offer a reduced reach due to weaker tolerance to optical noise and nonlinearities.

- Building superchannels by multiplexing a number of sub-carriers with small channel spacing to maximize EDFA bandwidth use. The typical capacity gain obtained by building superchannels with today’s 100G technology is no more than 30% compared with 100G PM-QPSK wavelengths spaced 50 GHz apart.

By combining higher-order modulation formats and superchannels, vendors might offer 24-Tbps line capacity in the future via 400G wavelengths in a gridless approach. However, 400G 16-QAM transmission would have a reach limit of about 600 km. Furthermore, the gridless approach – where the carrier wavelengths are no longer allocated to specific spectral slots – might work well on a simple point-to-point link but not on meshed networks.
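A rough way to see how the two paths stack up is to scale the 8.8-Tbps baseline. The sketch below assumes that the modulation gain and the superchannel gain simply multiply, and that symbol rate and polarization multiplexing stay unchanged; both are simplifying assumptions for illustration, and the function name is hypothetical.

```python
BASELINE_TBPS = 8.8        # 88 x 100G PM-QPSK at 50-GHz spacing (from the text)
SUPERCHANNEL_GAIN = 0.30   # upper bound quoted in the text
QPSK_BITS = 2              # bits per symbol for QPSK
QAM16_BITS = 4             # 16-QAM: log2(16) = 4 bits per symbol


def scaled_capacity_tbps(baseline_tbps, bits_per_symbol, superchannel_gain=0.0):
    """Scale a PM-QPSK baseline by modulation order and tighter packing.

    ASSUMPTION: the two gains are independent and multiply; real systems
    may fall short of, or differ from, this simple product.
    """
    modulation_factor = bits_per_symbol / QPSK_BITS
    return baseline_tbps * modulation_factor * (1.0 + superchannel_gain)


superchannel_only = scaled_capacity_tbps(BASELINE_TBPS, QPSK_BITS, SUPERCHANNEL_GAIN)
qam16_only = scaled_capacity_tbps(BASELINE_TBPS, QAM16_BITS)
combined = scaled_capacity_tbps(BASELINE_TBPS, QAM16_BITS, SUPERCHANNEL_GAIN)

print(f"Superchannels only: {superchannel_only:.2f} Tbps")
print(f"16-QAM only:        {qam16_only:.2f} Tbps")
print(f"Both combined:      {combined:.2f} Tbps")
```

Under these assumptions, the combined figure comes out in the low 20s of terabits per second, the same range as the 24-Tbps projection, while the reach penalty of 16-QAM is not captured by this arithmetic at all.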

Today, in spite of some lab and field trials, there is no clear direction toward an optimal technical option for going beyond 100G in long-haul applications.

Standards for the next channel rate

In parallel with the exploration of different technical options, the development of future high-bit-rate systems awaits the resolution of several major standards-related issues. The most critical issue is to decide the bit rate.

On May 9, 2011, the IEEE 802.3 standards body announced the formation of the Ethernet Bandwidth Assessment Ad Hoc Group to assess future bandwidth requirements for Ethernet wireline applications. The IEEE is expected to make a recommendation on the bit rate and other key technical parameters, which will then kick-start standardization work for next-generation high-capacity networks. As of this summer, it is not clear when work on this recommendation will conclude.

Another critical piece of standards work is to define the future OTN ODU5 frame within ITU-T Study Group 15. Several contributions were made in ITU-T SG15 meetings on starting this process. The group decided to wait for the bit-rate recommendation from the IEEE to synchronize standards development.

A third part of the critical standards work rests with the Optical Internetworking Forum (OIF). The OIF was instrumental in developing the optical-line interface implementation agreements (de facto standards) for 100G and is expected to play a similar role for the next rate.

Additionally, the ITU-T SG15 (Q9/15) has begun work on shared mesh protection schemes. This effort may provide an important step toward the future implementation of higher-bit-rate systems.

Meanwhile, with the rapid growth in Internet traffic, large data centers will become one of the major applications for future high-bit-rate systems. These data centers will demand more efficient transport for server connectivity, core network switching, and other internal data-center requirements. So standards also must be developed with data centers in mind.

These standards-related items will take time to complete. As a reference, the IEEE recommendations on 40/100G were ratified in June 2010 after many months of hard work. We can expect that standards work on future high-bit-rate systems will take at least 24 months to complete.

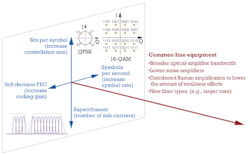

Enhanced line equipment for higher capacity

As discussed, most of the dimensions explored today to increase optical-network capacity focus on the interface level. There is, however, another dimension that heavily affects both capacity and reach. This dimension involves the line equipment, where improved fiber and enhanced amplifiers can significantly increase the Capacity x Reach metric (see Figure 2).
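The Capacity x Reach metric is simply the product of line capacity and unregenerated reach. The sketch below pairs figures quoted elsewhere in this article; treating each pair as a single system is an assumption made for comparison only, and the function name is hypothetical.

```python
def capacity_reach_tbps_km(capacity_tbps, reach_km):
    """Return the Capacity x Reach product in Tbps*km."""
    return capacity_tbps * reach_km


# Figures quoted in this article; pairing them is an illustrative assumption.
options = {
    "100G PM-QPSK, 88 channels, Raman-assisted line": (8.8, 3000.0),
    "400G 16-QAM, gridless, projected": (24.0, 600.0),
}

for name, (capacity_tbps, reach_km) in options.items():
    product = capacity_reach_tbps_km(capacity_tbps, reach_km)
    print(f"{name}: {product:,.0f} Tbps*km")
```

On these numbers, the shorter reach of the higher-order format more than offsets its capacity advantage, which is why improvements in the line equipment matter as much as improvements at the interface.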

The fiber in terrestrial networks can support an optical bandwidth much broader than the one EDFAs provide. Consequently, combining line equipment that can tap this wider optical bandwidth with 100G data rates is a natural way to increase line capacity today while offering decreasing cost per bit as 100G technology matures.

Meanwhile, two further parameters are key to enabling ultra-long reach. The first is improved noise performance, because optical signal-to-noise ratio (OSNR) requirements become more stringent as the channel rate increases. The second is distributed amplification within the line fiber, because excessive per-channel power causes nonlinear effects for which today’s receiver technology cannot properly compensate.

As the optical telecommunications community generally agrees, Raman amplification is an effective way to meet these three key requirements (wider optical bandwidth, better noise performance, and distributed amplification) for 100G and higher channel rates. Practically speaking, Raman amplification offers all-optical reach in excess of 3,000 km with field fiber attenuation, standard margin per span for repair, and non-uniform span lengths as found in real network environments.

The path and timeframe toward higher capacity

The history of high-speed optics teaches us that about three years separate the first field trials (2007 for 100G) from the early adopter phase (2010). Another three years separate the early adopter phase from the generalized deployment phase (which, for 100G, should begin in 2013) [2]. Meanwhile, the evolution of the average sales price ratio between 100G and 10G line cards indicates that parity will be reached, on average, in 2013–14. Such parity is just the beginning of the volume curve, where the technology is fully mature (with some incremental improvements in the future) and the price erodes. With a 10-fold capacity increase compared to 10G technology and significant price erosion to come, 100G will become the new 10G and the rate to deploy for the next several years.

From a standards perspective, general availability of the next channel-rate technology realistically is still several years away, mostly because the IEEE still has to make a recommendation on what this next channel rate will be. Other standards organizations have work to do as well (e.g., ITU-T has to provide definitions and standards for ODU5). Operators are unwilling to deploy non-standardized equipment in their backbone networks, so it is unlikely we’ll see 400G or 1-Tbps (1T) equipment in commercial service in such applications within the next three or four years.

Lastly, today’s 100G technology achieves excellent reach performance (more than 3,000 km in real network environments with Raman-supported amplification). By contrast, the reach demonstrated so far by 400G or 1T prototypes is significantly shorter. This factor would impose severe limitations and extra costs not only for long-haul links, but also for highly meshed network configurations.

Consequently, we believe 100G should dominate for the next five years before the commercial deployment of any higher rates that still need to be defined, standardized, and developed.

Interface-level work will continue, both through incremental improvements to 100G interface cards (e.g., space and power consumption) and through additional 400G/1T lab demos and field trials. In parallel, another path to higher capacity is the development and implementation of more advanced line equipment. A larger optical bandwidth can expand line capacity significantly beyond 10 Tbps. Better noise performance and distributed amplification within the line fiber can achieve a reach longer than 3,000 km with long spans for 100G and higher rates. The other good news is that higher-end line equipment can also reduce the relative cost of interface cards by relaxing their technical requirements and limiting the number required throughout the network, e.g., by eliminating regeneration sites.

References

1. Cisco Visual Networking Index: Forecast and Methodology, 2011–2016 (May 30, 2012).

2. Forecast and Methodology, 2011–2016, Ovum analyst Ron Kline’s presentation at Terabit Optical & Data Networking 2012 Conference (April 16–19, 2012, Cannes, France).

BERTRAND CLESCA is head of global marketing and WILLIAM SZETO is chief technology officer–terrestrial systems at Xtera Communications Inc.