Photonic switches in the worldwide National Research and Education Networks

Scientific applications such as supercomputing and bio-informatics can benefit from high-capacity transport between a limited number of locations. This need for high-capacity internetworking is driving the growth of National Research and Education Networks (NRENs) such as the National LambdaRail (NLR)1 initiative in the United States. The NLR serves as a national interconnect for regional optical research networks.

In many cases, next-generation IP/optical networks are deployed to support network research requiring a large amount of flexible bandwidth. Combining an intelligent optical core with an intelligent optical edge and flexible control-plane protocols, NRENs can truly scale bandwidth to meet the growing demands of network-based research. Particle physics, virtualization, and computing clusters are some of the key research areas driving this transition toward hybrid IP/optical networks. The Global Lambda Integrated Facility is an organization whose members support scientific research, middleware development, and the interconnection of the international LambdaGrid that will provide high-bandwidth capacity as needed to science and research organizations worldwide (see Figure 1).2

Photonic switches play a critical role in these new network initiatives. Powered with intelligent multilayer protocols such as GMPLS, photonic switches interoperate with routers and transport equipment to enable new and higher-capacity bandwidth-on-demand services. With smaller footprints and much lower costs than routers, photonic switches can provide virtually unlimited switching capacity: lightwave circuits (lambdas) can be switched, independent of bit rate, protocol, or format. Fundamentally, photonic switches extend the transparency and low cost structure per bit of optical fiber to Layer 1 switching.

Some of the first NRENs to deploy photonic switches in conjunction with transport and Layer 2/3 devices are SuperSINET3 in Japan and OptIPuter/TransLight4 in the United States and Europe. In particular, researchers are investigating different approaches to using lambdas to provide optimized network performance for demanding grid applications. At the same time, the Canadian Network for the Advancement of Research, Industry and Education (CANARIE) in Canada and other research-network consortia have led the way in utilizing available dark fibers to build cheaper, customer-owned networks5.

The dark fiber model is an important evolution away from managed services leased from carriers. In the future, it is possible that some NRENs may end up in a competitive situation with traditional service providers, providing services to high-end users such as scientific institutions and even large businesses, depending on regulations. But regardless of their competitive implications, NRENs also have important societal and economic impacts because they spearhead the emergence of new architectures and protocols to support new services. The best example is the development of the Internet in the 1970s as a result of U.S. government network initiatives.

NRENs typically need to offer a mix of production and experimental services that scale from Layer 1 to Layer 3. That requires a flexible architecture that can evolve and address integration challenges. Both photonic switches and GMPLS protocols help to scale the network to meet the needs of new demanding services and simplify system and network integration.

A representative IP/optical-node architecture that addresses the primary service and performance requirements is shown in Figure 2. Traditional IP services are first managed via a router, then connected to the optical backbone. By using an optical switch, high-capacity Gigabit Ethernet (GbE) or 10-GbE Layer 2 services are switched directly to the optical transport as an optical wavelength for very-large-capacity users and experiments.

This type of architecture also offers economic advantages in terms of lower capital investment and operational costs. GMPLS automates network provisioning and allows more efficient use of network resources. Photonic switches reduce the cost of transport by lessening the use of SONET/SDH framing for transmission and fully leveraging new DWDM transport capabilities. These platforms are also well adapted to fiber-rich environments where lower-cost 10-GbE long-range interfaces and CWDM technologies can be used to carry data. On the client side, high-capacity Layer 2 services no longer have to bear the cost of expensive router cards. Ultimately, remote devices such as supercomputers can now be connected at data speeds (e.g., 10 Gbits/sec) previously only viable for very-short-distance interconnection within a single computer.

Moreover, such an architecture achieves better performance because it does not suffer the throughput and latency limitations that pose such critical problems in advanced scientific applications. Ideally, Ethernet/optical would yield the most cost-effective architecture and the best performance for high-capacity services. However, IP Layer 3 devices are needed to scale the network beyond a few high-capacity users and provide the services to home and business users.

Photonic switches also address important challenges like the need to provide adequate quality of service and monitoring capabilities for the operation and maintenance of commercial networks. That is made possible through implementation of GMPLS protocols developed at the Internet Engineering Task Force and the standardization efforts of the International Telecommunication Union (ITU) and Optical Internetworking Forum. GMPLS generalizes MPLS to support multiple connection types, including timeslots, wavelengths, and fibers. One fundamental benefit of GMPLS is the establishment of an end-to-end label-switched path. The GMPLS suite of protocols also includes routing protocols with traffic engineering extensions (OSPF-TE or ISIS-TE), a signaling protocol for reservation and traffic engineering (RSVP-TE), and link management protocols (LMP and LMP-DWDM).
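The idea that one control plane can set up an end-to-end path across devices with different switching capabilities can be illustrated with a minimal sketch. It is not GMPLS signaling itself: the `SwitchingType` enum, the `Hop` record, the node names, and the `can_establish_lsp` check are all hypothetical, and the only rule modeled is that every node along the path must be able to switch the requested connection type.

```python
from dataclasses import dataclass
from enum import Enum


class SwitchingType(Enum):
    """Connection types mentioned in the text (illustrative enum, not a standard API)."""
    PACKET = "packet"
    TIMESLOT = "timeslot"
    WAVELENGTH = "wavelength"
    FIBER = "fiber"


@dataclass(frozen=True)
class Hop:
    node: str             # hypothetical node name
    supported: frozenset  # SwitchingType values this node can switch


def can_establish_lsp(path, switching_type):
    """Return True if every hop on the path can switch the requested type."""
    return all(switching_type in hop.supported for hop in path)


# Assumed example: two routers with optical interfaces around a photonic switch.
path = [
    Hop("router-A", frozenset({SwitchingType.PACKET, SwitchingType.WAVELENGTH})),
    Hop("pxc-1", frozenset({SwitchingType.WAVELENGTH, SwitchingType.FIBER})),
    Hop("router-B", frozenset({SwitchingType.PACKET, SwitchingType.WAVELENGTH})),
]

lambda_ok = can_establish_lsp(path, SwitchingType.WAVELENGTH)
packet_ok = can_establish_lsp(path, SwitchingType.PACKET)
```

In this toy path a wavelength LSP is feasible end to end, while a packet LSP is not, because the photonic switch in the middle does not inspect packets.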

Several nationwide field trials have been carried out in recent years in Japan6, and GMPLS-controlled networks with 3D MEMS-based photonic switches are now commercially deployed in Japanese carrier networks. GMPLS-powered networks provide more diverse and bandwidth-efficient protection services such as shared mesh protection. These architectures also allow service provisioning across multiple network domains with the choice of overlay or peer-to-peer models. Because of these field-proven efforts, photonic switches and GMPLS protocols are becoming mature technologies.
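Why shared mesh protection is bandwidth-efficient can be shown with a small calculation. The sketch below assumes a single-link-failure model and a made-up four-node topology; the link names and the two demands are hypothetical. With dedicated protection every backup path reserves its own wavelength, whereas with shared protection two backups may share a wavelength on a link if their working paths cannot fail at the same time.

```python
from collections import Counter, defaultdict


def dedicated_backup(demands):
    """Wavelengths reserved per link when each backup path gets its own capacity."""
    count = Counter()
    for demand in demands:
        for link in demand["backup"]:
            count[link] += 1
    return dict(count)


def shared_backup(demands):
    """Wavelengths reserved per link when backups share capacity.

    Assumes at most one link fails at a time: for each backup link, take the
    worst case over all single working-link failures.
    """
    load = defaultdict(Counter)  # backup link -> failed working link -> backups activated
    for demand in demands:
        for backup_link in demand["backup"]:
            for working_link in demand["working"]:
                load[backup_link][working_link] += 1
    return {link: max(per_failure.values()) for link, per_failure in load.items()}


# Hypothetical demands whose working paths are link-disjoint.
demands = [
    {"working": ["A-B"], "backup": ["A-D", "D-B"]},
    {"working": ["C-B"], "backup": ["C-D", "D-B"]},
]

dedicated = dedicated_backup(demands)
shared = shared_backup(demands)
```

Here both backups cross link D-B. Dedicated protection reserves two wavelengths on it, while shared protection reserves one, since a single failure activates only one of the two backups.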

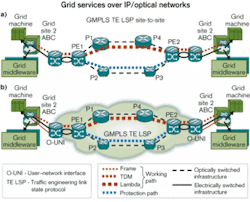

Photonic switches and GMPLS can also be used to implement new grid services. The term grid is widely used for distributed, parallel, and networked processing of open systems interconnection (OSI) Layer 7 applications and services. A grid network is an overlay atop a Layer 3/2/1 network (lower layers consisting of network, data link and physical layers).

The functioning, configuration, and behavior of Layer 3/2/1 networks are assumed to be independent of overlay Layer 7 grid networks. Even if the Layer 3/2/1 network remains a cloud to the grid, it is desirable for the grid to make use of a wide variety of network services provided by the Layer 3/2/1 network. The justifications for interfacing network services to the grid are manifold, and interfaces are already being actively developed by NRENs (e.g., the Poznan Supercomputing and Networking Center in Poland). With interfaces to network services, a grid middleware or application is able to perform network-aware grid functions such as scheduling and storage management.
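As a rough illustration of network-aware scheduling, the sketch below picks a compute site by estimating total completion time as transfer time plus compute time. The site names, link rates, compute times, and the simple additive cost model are all assumptions made for the example; a real grid middleware would obtain such figures through its interface to the network services.

```python
def completion_time_s(data_gbit, link_gbps, compute_s):
    """Estimated job time: move the data over the link, then compute."""
    return data_gbit / link_gbps + compute_s


def pick_site(data_gbit, sites):
    """Choose the site with the lowest estimated completion time."""
    return min(
        sites,
        key=lambda s: completion_time_s(data_gbit, s["link_gbps"], s["compute_s"]),
    )


# Hypothetical sites: one behind a 1-Gbit/sec path, one reachable over a 10-Gbit/sec lambda.
sites = [
    {"name": "site-gbe", "link_gbps": 1.0, "compute_s": 600.0},
    {"name": "site-lambda", "link_gbps": 10.0, "compute_s": 900.0},
]

data_gbit = 8000.0  # roughly 1 terabyte of input data
best = pick_site(data_gbit, sites)
```

With these assumed numbers the scheduler prefers the site on the 10-Gbit/sec lambda even though it computes more slowly, because the transfer dominates the total time.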

Figure 3 shows two versions of a grid over an optical transport network, supporting GMPLS protocols and conforming to ITU automatic-switched optical-network standards. The upper diagram shows a GMPLS network owned by the Grid organization that provisions site-to-site connections using GMPLS traffic engineering label-switched paths (TE LSPs). That corresponds to a peer model with internal network-network interfaces (I-NNIs).

Figure 3. In a peer model (a), a GMPLS network owned by the Grid organization provisions site-to-site, using GMPLS traffic engineering label-switched paths (TE LSPs) and internal network-network interfaces (I-NNIs). In the overlay model (b), grid services are established across multiple networks, and user-network interfaces are implemented between the grid network sites and underlying network. Unlike the peer-to-peer model with I-NNIs, overlay model subnetworks do not share critical information.

Figure 3b shows a network architecture where user-network interfaces (UNIs) are implemented between the grid network sites and the underlying network. That corresponds to an overlay model, and the underlying network may or may not be owned by the Grid organization.

In this case, grid services are established across multiple networks, and the TE LSP can still be established using an external NNI (E-NNI). As opposed to the peer-to-peer model using I-NNI, overlay-model subnetworks do not share critical information. The advantage of the GMPLS protocols lies in their ability to support an overlay, a peer, or a hybrid version and their adaptability to the operational constraints of the network.
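The practical difference between the two models is how much of the transport topology a grid site can see. The following sketch is a simplification under stated assumptions: the topology, the node names, and the `visible_links` helper are hypothetical, and "visibility" is reduced to whether a link appears in the grid site's view.

```python
def visible_links(links, grid_sites, model):
    """Return the links a grid site can see under the given interconnection model.

    peer:    topology is shared over I-NNIs, so every link is visible.
    overlay: only the access links at the UNI are visible; the core stays opaque.
    """
    if model == "peer":
        return set(links)
    if model == "overlay":
        return {(a, b) for a, b in links if a in grid_sites or b in grid_sites}
    raise ValueError(f"unknown model: {model}")


# Hypothetical topology: two grid sites attached to a two-switch optical core.
links = {("site-1", "pxc-1"), ("pxc-1", "pxc-2"), ("pxc-2", "site-2")}
grid_sites = {"site-1", "site-2"}

peer_view = visible_links(links, grid_sites, "peer")
overlay_view = visible_links(links, grid_sites, "overlay")
```

In the overlay view the core link between the two photonic switches is hidden from the grid, which requests connectivity without knowing how the underlying network routes it.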

Emerging NRENs are taking full advantage of the benefits of an intelligent optical-switched core, an intelligent optical edge, and a flexible suite of control-plane protocols to scale network bandwidth and meet the growing demands of network-based research. These networks are blazing performance trails that can be leveraged by large-scale commercial-carrier networks worldwide.

Olivier Jerphagnon is a product marketing manager and David Altstaetter is a senior system engineer at Calient Networks (San Jose, CA). Graca Carvalho is a consulting engineer with Cisco Systems EMEA. Chris McGugan is senior manager for academic research and technologies initiatives at Cisco (San Jose, CA). The authors can be reached via their companies’ Websites, www.calient.net and www.cisco.com.

1. National LambdaRail (NLR), www.nlr.net.

2. Global Lambda Integrated Facility (GLIF), www.glif.is.

3. S. Asano, “SuperSINET,” www.dante.net/conference/globalsummit2002/html/2-6asano/, Global Research Networking Summit, May 2002.

4. T. DeFanti et al., “TransLight: a global-scale LambdaGrid for e-science,” Communications of the ACM, Vol. 46, Issue 11 (November 2003), pp. 34-41.

5. B. St. Arnaud, “Disowning the network,” http://currentissue.telephonyonline.com/ar/telecom_disowning_network/, Telephony, September 2002.

6. T. Otani et al., “Field trial of GMPLS controlled PXCs and IP/MPLS routers,” Paper Tu3.4.3, proceedings of ECOC-IOOC, September 2003.