Optical performance monitors continue to chase market

The market for embedded optical performance monitors (OPMs) has been rocky since its inception. Conflicting requirements, significant technical hurdles, and lack of a standard interface have kept significant deployments "within the next six months." The forecasted market has been reduced significantly since the technology first appeared.

Embedded OPMs (or "optical-channel monitors," as they are sometimes called) perform the task of the benchtop optical spectrum analyzer (OSA) at the rack level in a WDM network. The job of these mini-OSAs is to continuously monitor key optical parameters of the optical signal to provide feedback on the signal's health. The OPM market has evolved considerably in the last few years, leaving a few vendors vying for a piece of a slowly growing market.

Work on embedded monitors started in the mid-1990s, yet none were commercially deployable until around 1999. Interestingly, all of the early development came from startup

In 1997, there were about five companies seriously developing OPMs. Contrast that with about 15 companies in 2001 and about six today.

Why were these early efforts so commercially unsuccessful? Three factors tell most of the tale:

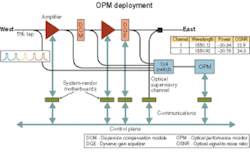

1. The market was very slow to develop. DWDM systems manufacturers were never quite sure if the OPM was a necessity or just a nice feature to have as a marketing tool. That left the OPM in the lab for much longer than typical modules at that time. Additionally, confusion existed as to whether the OPM should control a particular network element (primarily an amplifier, although there was interest in using it to close the feedback loop to a dynamic gain equalizer) or be used as a higher-level device that would report the health of a particular node to higher control layers. To a reduced degree, this dual personality still exists. OPMs thus missed the first few design cycles of DWDM systems.

2. The technology was immature. All OPM designs incorporate either a detector array or narrowband tunable filter. Detector arrays were prohibitively expensive, and the early devices suffered from pixel dropout or fading. For designs based on tunable filters...well, to this day the market is ripe for a cheap tunable filter with high reliability. Finally, immaturity plagued the firmware side as well. Startup suppliers were focused on getting the hardware running with little attention paid to software. Robust software development is something that even the larger component manufacturers continue to pursue.

3. Since there was no large incumbent supplier of OPMs, the industry did not quickly coalesce around a single standard interface (hardware or firmware). Instead, a core design team of, say, 15 engineers at each company had to support up to five different firmware and hardware interfaces. The end result was that very few customers were served adequately.

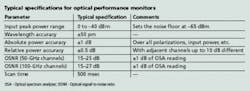

Typical specifications for OPMs are shown in the Table. From that list, several challenges become apparent.

Power. The OPM is a single-fiber device, so it obviously does not have an insertion loss (IL) specification. However, the IL is the most critical parameter to the designer. It determines the dynamic range of the instrument, but more important, the loss of the entire optical path must remain highly stable over the instrument's lifetime. That means the losses of all the optical components, from the input to the detector, must stay nearly constant over life. Whether the design incorporates free-space or all in-fiber components (couplers, isolators, etc.), the cumulative IL drift over life must be on the order of 0.5 dB or less. That leaves 0.5 dB for temperature effects and measurement-to-measurement noise.

Adding to the difficulty is that the really important measurement is the relative power measurement (for example, power difference between adjacent channels), where tolerances are even tighter. That means the wavelength-dependent loss of the optical path must essentially remain constant over life—a very difficult task.
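The distinction between absolute and relative power error can be illustrated with a small sketch. It assumes a deliberately simple, hypothetical drift model (a flat loss offset plus a linear tilt across channel index); the function names and sample powers are illustrative only and are not taken from any vendor's design.

```python
def adjacent_differences_db(powers_dbm):
    """Power difference (dB) between each channel and its next neighbor."""
    return [b - a for a, b in zip(powers_dbm, powers_dbm[1:])]


def apply_path_drift(powers_dbm, flat_drift_db=0.0, tilt_db_per_channel=0.0):
    """Powers the OPM would report after its internal optical path has drifted.

    flat_drift_db: extra loss common to every channel (hypothetical value).
    tilt_db_per_channel: extra loss that grows linearly with channel index,
        a crude stand-in for a change in wavelength-dependent loss.
    """
    return [
        p - flat_drift_db - i * tilt_db_per_channel
        for i, p in enumerate(powers_dbm)
    ]


# Illustrative channel powers in dBm (made-up numbers).
true_powers = [-20.0, -20.5, -19.8, -20.2]

flat = apply_path_drift(true_powers, flat_drift_db=0.5)
tilted = apply_path_drift(true_powers, tilt_db_per_channel=0.1)

print(adjacent_differences_db(true_powers))
print(adjacent_differences_db(flat))    # same differences as the true powers
print(adjacent_differences_db(tilted))  # every difference shifted by the tilt
```

In this model a flat loss change corrupts the absolute readings but leaves adjacent-channel differences untouched, while any change in the wavelength dependence of the loss shows up directly in the relative measurement.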

Since the OPM is primarily a power measurement instrument, it is unfortunate that no standard multichannel power reference exists (at a reasonable price, anyway) that could be incorporated into a design. Such an element would greatly increase the reliability of monitors and to some degree simplify their design.

Optical signal-to-noise ratio (OSNR). OSNR is typically the most difficult measurement to achieve. Since OSNR is a relative measurement of two powers, the OPM must again try to emulate the bench OSA set at some agreed resolution bandwidth (typically 100 pm). Vendors and customers must agree on a specific OSA and, just as important, on that OSA's settings for OSNR measurements, or the specification will be very hard to achieve. All OPMs must perform a lot of signal processing to yield an accurate result given the wide variety of possible input spectra that need to be measured. Finally, some debate exists over the utility of the OSNR measurement itself. Certainly, the OSNR value yields a measure of the health of a DWDM signal as it traverses a node. However, it is more of a symptom than an indication of the root cause of some impairment.
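As a rough illustration of why OSNR is "a relative measurement of two powers," the sketch below uses the widely used out-of-band interpolation approach: noise is sampled on either side of the channel, averaged, subtracted from the peak reading, and the ratio is normalized to a 0.1-nm (100-pm) reference bandwidth. The function names and sample values are hypothetical, and a real OPM would need considerably more signal processing than this to handle arbitrary input spectra.

```python
import math


def dbm_to_mw(p_dbm):
    """Convert a power in dBm to milliwatts."""
    return 10 ** (p_dbm / 10)


def osnr_db(peak_dbm, noise_left_dbm, noise_right_dbm,
            meas_bw_nm=0.1, ref_bw_nm=0.1):
    """Estimate OSNR by interpolating the noise floor under a channel.

    peak_dbm: power read at the channel peak (signal plus noise).
    noise_left_dbm, noise_right_dbm: noise read midway to the neighboring
        channels, in the same measurement bandwidth.
    meas_bw_nm: bandwidth in which the noise was measured (assumed known).
    ref_bw_nm: reference bandwidth the result is quoted in (0.1 nm here).
    """
    noise_mw = (dbm_to_mw(noise_left_dbm) + dbm_to_mw(noise_right_dbm)) / 2
    signal_mw = dbm_to_mw(peak_dbm) - noise_mw  # remove noise under the peak
    if signal_mw <= 0:
        raise ValueError("peak reading does not exceed the interpolated noise")
    ratio_db = 10 * math.log10(signal_mw / noise_mw)
    # Scale the noise from the measurement bandwidth to the reference one.
    return ratio_db + 10 * math.log10(meas_bw_nm / ref_bw_nm)


# Made-up readings: 0-dBm peak with a -30-dBm noise floor on both sides.
print(round(osnr_db(0.0, -30.0, -30.0), 2))
```

The bandwidth term is where the instrument settings matter: the same spectrum read with a different effective noise bandwidth yields a different raw ratio, which is why both parties have to agree on the reference settings.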

Wavelength. Debate also exists over whether a monitor should measure wavelength. In reality, the OPM must be able to reliably identify the channels present in a DWDM signal. Since DWDM systems were designed before monitors were practically available, system developers included wavelength drift tolerance in their boxes. In fact, for 50-GHz systems, the lasers employ lockers that ensure the lifetime drift of the laser is typically less than the standard OPM's wavelength measurement accuracy. In addition, most monitors based on tunable-filter technology employ an onboard wavelength reference, so while the wavelength measurement is accurate, whether it is useful is highly debatable.

Scan time/number of scans. Scan time has been another contentious specification. Early developers believed the monitor should be able to complement SONET/SDH alarming for fast restoration. It quickly became apparent that achieving the necessary spectral scans in tens of milliseconds was not practical.

Once this criterion was discarded, it became apparent scan time was less important. OPMs designed with detector arrays that take a snapshot of the entire spectrum with each measurement are capable of fast readout times. But once the post-processing is included, the fastest scan time available is on the order of 100 msec. Tunable-filter designs are slower since they actually scan the spectrum. Interestingly, the scan speed limitation is typically due to the speed of the detector's transimpedance circuit that must swing up and down through three orders of magnitude across many channels as it scans from noise floor to channel peak.

In practice, OPMs can generate a lot of data (80 channels of wavelength, power, and OSNR data) that needs to be continuously analyzed by the motherboard to which the monitor is mated. On the motherboard, which is a component of the system vendor's architecture, decisions are made based on that information. To control a dynamic gain equalizer (DGE), for instance, the information needs to travel along the backplane to the card that interfaces with the DGE and ultimately on to the DGE itself. Information destined out of the node must travel along the optical supervisory channel. For a system with many nodes, that can mean a lot of data flowing to the network operating center. Thus, there is likely a significant delay between when OPM data is generated and when it is analyzed, so scan time is in reality not as critical as would be initially thought.

Furthermore, what is the OPM really measuring? Long-term drifts in the optical path, degradation of active components, and channel power balancing during the commissioning of new channels represent the primary measurement requirements of a deployed OPM (especially for long-haul systems). None of these measurements requires rapid scan times. One option system manufacturers use to amortize the cost of the OPM is to install a 1×N switch in front of the OPM so that multiple DWDM fibers (or multiple places in a node) can be monitored sequentially. Needless to say, that again relaxes the scan time requirement.
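To see how sharing one monitor behind a 1×N switch stretches the time between looks at any one fiber, here is a minimal sketch. It assumes simple round-robin polling, and the switch settling time is a hypothetical parameter, not a figure from any datasheet.

```python
def revisit_time_s(n_ports, scan_time_s, switch_settle_s=0.0):
    """Time between successive measurements of the same switch port.

    Assumes round-robin polling: each port costs one full scan plus one
    switch settling interval (switch_settle_s is an assumed value).
    """
    if n_ports < 1:
        raise ValueError("n_ports must be at least 1")
    return n_ports * (scan_time_s + switch_settle_s)


# Example: 8 monitored points, 400-msec scans, an assumed 50-msec settle.
print(revisit_time_s(8, 0.4, 0.05))
```

With these example numbers each fiber is revisited only every few seconds, which is acceptable for the slow drifts and commissioning tasks described above.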

Finally, continuously scanning at a rapid rate of one scan every 400 msec (state of the art for a tunable-filter-based OPM) over a life of 15 years will result in a requirement of ~1.2 billion cycles over life, a very tall order for a tunable filter.
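The cycle count is straightforward to check. The short calculation below assumes uninterrupted scanning, one filter cycle per scan, and a 365.25-day year; the function name is illustrative.

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600


def lifetime_scan_cycles(scan_period_s, life_years):
    """Number of scans completed when scanning nonstop for life_years."""
    return life_years * SECONDS_PER_YEAR / scan_period_s


cycles = lifetime_scan_cycles(scan_period_s=0.4, life_years=15)
print(f"{cycles:.3g} cycles")  # about 1.18e+09, i.e. roughly 1.2 billion
```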

Effectively all the major system vendors have an OPM designed into their long-haul offerings. While the collapse of demand has kept volumes low, the total market for embedded OPMs in 2004 is on the order of $10–$20 million. Unfortunately for the vendors, price pressure has continued unabated to the point where a 100-GHz-capable OPM in moderate volume (hundreds of units) is selling for about $3,000. A 50-GHz OPM with decent OSNR performance would command $5,000–$7,000 depending on some specifications. In contrast, the first OPMs in 1999 sold for $15,000. A positive development is that de facto standardized sizes and interfaces have emerged so that many form factors and software revisions need not be supported.

OPM penetration into the metro market is almost nonexistent, although demand exists for a monitor that could measure power at a price point of about $1,000. Ultimately that could be an important market, since metro networks will likely be the first place to see the deployment of architectures capable of dynamic reconfigurability.

Considerable work was done in the past on designing a monitor that could measure—in addition to power, OSNR, and wavelength—other parameters such as dispersion, Q, or even bit-error rate. These are daunting challenges and, as in 1997, the component infrastructure is not mature enough (especially the tunable filter) to realize a commercial product in the near future.

Today, reliable, qualified monitors are available and range from simple, power-only devices using low-resolution arrays to high-performance units that can measure 50-GHz channel spectra for both the C- and L-bands. Ultimately, monitors will be deployed in two slightly different applications in the network. Foremost, they will be buried, unseen, in the more advanced network modules, where they will provide direct feedback to the module itself. Examples of this application include DGEs and wavelength switches (reconfigurable optical add/drop multiplexers). The second application is for the system vendor to have access to the data as a tool for channel commissioning, topology discovery in wavelength-agile networks, fault localization, and traditional long-term drift monitoring. In this application OPMs can move away from the embedded architecture and into a pizza-box form factor with a direct Ethernet interface.

Optical performance monitors have had to travel a rough road as the technology has matured, but there remains little doubt that they are here to stay and will see increased use as the optical layer evolves to a more dynamic architecture.

Keith Beckley is director of product management at NOVX Systems (Markham, Ontario).