Standardizing optical-layer control and signaling

There are several standards organizations pursuing multiple ways of controlling the optical layer. What will be the outcome?

Krishna Bala

Tellium

Developing signaling protocols for dynamic control of the optical layer is one of the most critical challenges facing makers of optical switches today and is perhaps the most critical standards issue now being resolved. The Optical Internetworking Forum (OIF), the Internet Engineering Task Force (IETF), the Optical Domain Service Interconnect (ODSI), and more recently the T1.X1 subcommittee and the International Telecommunication Union (ITU) are all working to rapidly establish methodologies that will determine how Internet Protocol (IP) and optical networks interoperate. At the core of the debate is whether to use the "client-server" model, which would place optical-domain-specific control intelligence entirely at the optical layer, or the "peer" model, which requires optical-domain-aware intelligence in IP routers.

The outcome of these standardization efforts, expected by year-end, will have significant long-term ramifications that are being weighed in the decision process. First and foremost is how quickly interoperability can be achieved in the optical arena. Interoperability between optical devices is critical to the overall success of this market segment. With a variety of players emerging, each targeting a different part of the optical market, from edge devices and metro switches to core switches and ultra-long-haul equipment, carriers will have a wide choice of devices for their next-generation networks.

Carriers have already made it clear that they expect to integrate best-of-breed devices from a variety of vendors. To enable carriers to build their networks in this fashion, equipment providers must adopt protocols and architectures that interoperate seamlessly, which includes deciding which interworking models and protocols to use. These decisions directly affect how applications on the optical layer are developed. Such applications currently include dynamic lambdas, instantaneous bandwidth provisioning, and mesh restoration, and others may emerge in the future. Given each model's foundation, the choice will determine which paradigm, optical or router-based intelligence, drives application development and how quickly operators will be able to adopt new applications.

As stated previously, two primary models are emerging in the industry for interoperability between the IP and optical layers. The peer model is based on the premise that optical-layer control intelligence can be transferred to the IP layer, which may assume end-to-end control. The client-server model is based on the premise that the optical layer is independently intelligent and serves as an open platform for the dynamic interconnection of multiple client layers, including IP.

Both models assume the development of a next-generation optical mesh network with a simplified IP-based control plane built on Multiprotocol Label Switching (MPLS). The MPLS control architecture provides a simple and mature set of protocols, compatible with IP networks, on which to base next-generation communications. Unified control across the data and optical layers simplifies cross-layer network management and improves resource utilization through cross-layer traffic engineering.

In this scenario, IP routing protocols are leveraged for topology discovery, and MPLS signaling protocols are used for automatic provisioning. These protocols are robust and scalable and have already been successfully field-tested. Additionally, using these established standards for optical-layer control helps equipment manufacturers ensure interoperability. The IP-based optical-layer control stack will be standardized as efforts move forward and a model is adopted.

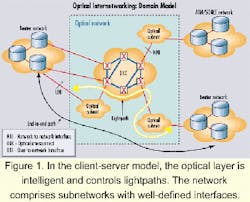

Although both models use an IP-centric control architecture, they would manage applications differently. Consider dynamic lambda provisioning in a network with routers at the edge and interconnected optical subnetworks (see Figure 1), where the optical control plane handles the provisioning. When a router experiences congestion, the network-management system (NMS) or the router itself will request that a dynamic lambda be provisioned. The optical switches will then create new or enhanced service channels (OC-48 or OC-192) on the optical layer to meet the router's needs. In this way, dynamic lambda provisioning lets capacity adapt to traffic flows.
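A minimal sketch of that trigger, assuming the router (or NMS) watches utilization on a trunk and asks the optical layer for another channel once it crosses a fixed threshold. The 80% threshold, the rate-selection rule, and the names `LambdaRequest`, `OpticalNetwork`, and `request_lambda` are hypothetical and stand in for whatever interface the standards eventually define.

```python
from dataclasses import dataclass, field

# Nominal SONET line rates in Mb/s.
OC48_MBPS = 2488.32
OC192_MBPS = 9953.28

@dataclass
class LambdaRequest:
    src_router: str
    dst_router: str
    rate: str  # "OC-48" or "OC-192"

@dataclass
class OpticalNetwork:
    """Hypothetical stand-in for the optical control plane."""
    provisioned: list = field(default_factory=list)

    def request_lambda(self, req: LambdaRequest) -> bool:
        # A real control plane would compute a route and signal the
        # crossconnects; this sketch only records the new channel.
        self.provisioned.append(req)
        return True

def check_congestion(src, dst, offered_mbps, capacity_mbps, net, threshold=0.8):
    """Request an extra lambda when utilization exceeds `threshold`.

    Returns the request that was issued, or None if the trunk is not congested.
    """
    limit = threshold * capacity_mbps
    if offered_mbps <= limit:
        return None
    shortfall = offered_mbps - limit
    # Hypothetical sizing policy: smallest channel that covers the shortfall.
    rate = "OC-48" if shortfall <= OC48_MBPS else "OC-192"
    req = LambdaRequest(src, dst, rate)
    return req if net.request_lambda(req) else None

net = OpticalNetwork()
check_congestion("R1", "R2", offered_mbps=9000, capacity_mbps=OC192_MBPS, net=net)
```

Sizing the request by the shortfall is only one possible policy; an operator might just as well always request OC-192 or leave the decision to the NMS.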

The client-server model would let each router talk directly to the optical network using a well-defined user-to-network interface (UNI). Interconnection between subnetworks would occur through a network-to-network interface (NNI). In essence, the client-server model allows each subnetwork to evolve independently, so innovation can proceed rapidly within each one. A carrier can introduce new technologies into the network without the burden of evolving older ones, and it can continue to leverage its existing legacy infrastructure.

More importantly, from the standpoint of carriers seeking to build multivendor optical networks, the client-server model enables near-term interoperability through well-defined UNIs and NNIs. Vendors of optical systems will likely be able to build standardized interoperability into their systems by the first quarter of 2001.

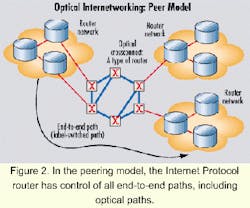

The peer model would support dynamic provisioning by using an end-to-end path (a label-switched path, or LSP) that spans the optical network, with the routers managing it remotely (see Figure 2). The peer model assumes that the IP router controls the optical layer. Under this model, a significant amount of state and control information flows between the IP and optical layers. As a result, interoperability will take much longer to achieve under this model than under the client-server model.

Each model has its advantages, but the client-server model appears to have one significant advantage over the peer model, among others: a faster path to interoperability.

Architecturally, the client-server model is more direct and simpler. It allows both in-band and out-of-band control of lightpaths, in a fashion similar to the paradigm used in intelligent networks and SS7 technology. Peer-model architectures require additional communication between the IP and optical layers to manage end-to-end paths that traverse lightpaths. This large volume of state and control information includes direct communication with routers at the opposite edge of the optical network, in addition to the communication required with the optical network itself.
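To make the scaling concern concrete, the sketch below counts control relationships for n edge routers under two simplifying assumptions that are illustrative only: in the client-server case each router holds a single UNI session with the optical network, while in the peer case each router also exchanges end-to-end path state with every router at the far edge (a full mesh). The function name is hypothetical.

```python
from typing import Tuple

def control_sessions(n_routers: int) -> Tuple[int, int]:
    """Count control relationships for n edge routers (illustrative model).

    Client-server: one UNI session per router.
    Peer: one session per router with the optical layer, plus one
    end-to-end session for every pair of edge routers.
    """
    if n_routers < 0:
        raise ValueError("n_routers must be non-negative")
    client_server = n_routers
    router_pairs = n_routers * (n_routers - 1) // 2
    peer = n_routers + router_pairs
    return client_server, peer

for n in (4, 16, 64):
    cs, peer = control_sessions(n)
    print(f"{n:3d} routers: client-server={cs:4d}  peer={peer:5d}")
```

Under these assumptions the client-server count grows linearly with the number of routers, while the peer count grows with the square of it, which is one way to read the interoperability burden described above.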

It would be unwise for network operators to create an infrastructure for IP over optical networks that is constrained by technology at the IP layer. Optical wavelength-routing technology has been quadrupling in capacity every year. IP router technology, on the other hand, is limited by Moore's law, which holds that silicon devices double in capacity only about every 18 months to two years. The client-server model allows innovation at the optical layer to continue independently of the IP layer while providing the interoperability required for fast service creation.
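A quick worked comparison, using the text's figure of fourfold annual growth in optical capacity, measuring t in years, normalizing both capacities to 1 at t = 0, and writing T for the doubling period of router silicon. T is an assumed parameter here: T = 1 corresponds to doubling every year, while Moore's law is more often quoted with T between 18 months and two years.

```latex
\[
  \frac{C_{\mathrm{opt}}(t)}{C_{\mathrm{rtr}}(t)}
  = \frac{4^{t}}{2^{t/T}}
  = 2^{\,t\,(2 - 1/T)}
\]
```

With T = 1 the gap grows as 2^t, so after three years optical capacity has outgrown router capacity by a factor of 8. With T = 1.5 the exponent becomes 4t/3, and the factor after three years is 2^4 = 16.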

Regardless of which model is adopted by standards bodies, there are several critical issues to be resolved for a successful standard to emerge. First, IP transport over optical networks must be standardized. That includes determining the requirements for the IP-optical interface, the information exchange over this interface, and traffic engineering.

A major area that needs standardization is the MPLS-based control plane for optical networks consisting of multiple, interconnected subnetworks. That includes dynamic provisioning and fast restoration across optical subnetworks as well as the routing and signaling protocols. Specific work items include the development of a common global addressing scheme for optical-path endpoints, the propagation of reachability information across subnetworks, end-to-end path provisioning using standardized signaling, policy support (accounting, security, etc.), and support for subnet-specific provisioning and restoration algorithms. Within a single subnetwork, the issues are automated provisioning of lightpaths using signaling, automated topology discovery, capacity optimization algorithms, protection of established paths along each segment, and end-to-end restoration of paths using shared protection.

Local topology discovery has its own set of control-plane requirements. These issues are less settled and include determining what information must be exchanged, what configuration information is needed, which link and port parameters must be defined, and which protocol to use for auto-discovery of local topology.
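Shared protection, listed above among the single-subnetwork issues, lets backup paths reuse the same spare capacity as long as their working paths cannot fail together. A minimal sketch of the resulting spare-capacity calculation, assuming at most one link fails at a time and that each demand's working and backup paths are link-disjoint. The function and data layout are hypothetical, and a real restoration algorithm would also have to route the backups.

```python
from collections import defaultdict

def spare_capacity(demands):
    """Return (shared, dedicated) spare capacity needed on each link.

    demands: list of (bandwidth, working_links, backup_links), where each
    link is a hashable ID. Assumes single-link failures only.
    """
    dedicated = defaultdict(int)
    # need[l][f]: backup bandwidth that lands on link l when link f fails
    need = defaultdict(lambda: defaultdict(int))
    for bw, working, backup in demands:
        for link in backup:
            dedicated[link] += bw          # dedicated: every backup reserved
            for failed in working:
                need[link][failed] += bw   # shared: only what one failure needs
    shared = {link: max(per_failure.values()) for link, per_failure in need.items()}
    return shared, dict(dedicated)

# Two demands whose working paths are disjoint but whose backups share link s2.
demands = [
    (1, ["w1"], ["s1", "s2"]),
    (1, ["w2"], ["s2", "s3"]),
]
shared, dedicated = spare_capacity(demands)
print(shared["s2"], dedicated["s2"])  # prints: 1 2
```

Because no single failure activates both backups at once, shared protection reserves one unit on s2 where dedicated protection would reserve two.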

With regard to the standards bodies, the OIF has a signaling working group that is working with the architecture and OAM&P (operations, administration, maintenance, and provisioning) groups to create the UNI 1.0 specification. The IETF has a special-interest group for IP over optics that is developing an architecture framework document. Both groups are actively involved in specifying the details of the IP-over-optical signaling infrastructure.

Many companies participating in these groups are also working on the ODSI initiative. Recently, there has been activity in the T1.X1 committee on the Automatic Switched Optical Network. The ITU is expected to carry this standards activity forward.

Several trends are emerging in the standardization process. Lightpath provisioning is reusing the MPLS traffic-engineering framework and will likely leverage CR-LDP (Constraint-based Routing Label Distribution Protocol) or RSVP (Resource Reservation Protocol) for signaling lightpath establishment and teardown. Automated topology discovery is moving toward a link management protocol (LMP) for link and port states and attributes. Additionally, routing protocols with traffic-engineering (TE) extensions for topology discovery are being developed; Open Shortest Path First (OSPF) and Intermediate System to Intermediate System (IS-IS) are the two protocols currently under consideration. With TE extensions, these protocols can advertise additional link-state information, including resource availability and diverse physical routes.
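The TE link attributes that OSPF or IS-IS would advertise are what make constraint-based routing possible. A common way to use them, sketched here as an illustration rather than anything the drafts mandate, is constrained shortest path first (CSPF): prune every link whose advertised unreserved bandwidth is below the request, then run Dijkstra's algorithm on what remains. The tuple layout and the `cspf` name are hypothetical.

```python
import heapq

def cspf(links, src, dst, min_bw):
    """Constrained shortest path: prune, then Dijkstra.

    links: iterable of (node_a, node_b, cost, unreserved_bw), treated as
    bidirectional TE links. Returns (total_cost, [nodes]) or None.
    """
    adj = {}
    for a, b, cost, bw in links:
        if bw < min_bw:
            continue  # link cannot carry the request: drop it from the graph
        adj.setdefault(a, []).append((b, cost))
        adj.setdefault(b, []).append((a, cost))

    heap = [(0, src, [src])]
    visited = set()
    while heap:
        dist, node, path = heapq.heappop(heap)
        if node == dst:
            return dist, path
        if node in visited:
            continue
        visited.add(node)
        for nxt, cost in adj.get(node, []):
            if nxt not in visited:
                heapq.heappush(heap, (dist + cost, nxt, path + [nxt]))
    return None

# (node, node, admin cost, unreserved bandwidth in arbitrary units)
links = [("A", "B", 1, 10), ("B", "D", 1, 2), ("A", "C", 2, 10), ("C", "D", 2, 10)]
print(cspf(links, "A", "D", min_bw=5))
```

Raising `min_bw` above a link's advertised unreserved bandwidth removes that link from consideration, so the same topology can yield a longer but feasible path, or no path at all.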

LMP for neighbor discovery can be used between two adjacent optical switches. That will permit auto-discovery of peer optical crossconnects, the corresponding port IDs, link speed and type, and port and channel state. LMP will utilize unused SONET overhead bytes for its operation.
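A toy model of this neighbor discovery, assuming each switch sends a probe out of a port carrying its node ID, port ID, and configured line rate, and the switch at the far end records what it hears. The message format and class names are hypothetical; LMP's actual messages differ, and the transport (SONET overhead bytes, per the text) is abstracted away here.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ProbeMessage:
    """Hypothetical probe carried in-band on a link."""
    node_id: str  # sender's switch ID
    port_id: int  # sender's outgoing port
    rate: str     # sender's configured line rate, e.g. "OC-192"

class Switch:
    def __init__(self, node_id, port_rates):
        self.node_id = node_id
        self.port_rates = port_rates  # local port -> configured line rate
        self.neighbors = {}           # local port -> (peer node, peer port, peer rate)

    def probe(self, port_id):
        """Build the probe this switch sends out of `port_id`."""
        return ProbeMessage(self.node_id, port_id, self.port_rates[port_id])

    def receive(self, local_port, msg):
        """Record which peer crossconnect and port sit at the far end."""
        self.neighbors[local_port] = (msg.node_id, msg.port_id, msg.rate)

    def mismatched_ports(self):
        """Local ports whose peer reports a different line rate."""
        return [p for p, (_, _, rate) in self.neighbors.items()
                if rate != self.port_rates[p]]

def connect(a, a_port, b, b_port):
    """Simulate a fiber between a:a_port and b:b_port; each end probes the other."""
    b.receive(b_port, a.probe(a_port))
    a.receive(a_port, b.probe(b_port))

oxc1 = Switch("OXC-1", {3: "OC-192"})
oxc2 = Switch("OXC-2", {7: "OC-48"})
connect(oxc1, 3, oxc2, 7)
print(oxc1.neighbors)           # {3: ('OXC-2', 7, 'OC-48')}
print(oxc1.mismatched_ports())  # [3]
```

Comparing what each end hears against its own configuration is what turns discovery into a consistency check: here the mismatched line rates flag a likely miscabling on port 3.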

Lastly, signaling protocols based on both CR-LDP and RSVP have been proposed. These proposals allow the physical-plant hierarchy to be mapped into a label structure and define type-length-value (TLV) objects for TE parameters applied to lightpaths. Extensions to RSVP and CR-LDP are also under development to facilitate dynamic and partial restoration and to create controlled interaction between IP routers and optical switches.
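A sketch of both ideas, under assumptions that are illustrative only: a 32-bit label that packs a hypothetical fiber/port, wavelength, and time-slot hierarchy into 16, 8, and 8 bits, and a generic TLV layout with 16-bit type and length fields in network byte order. The field widths and type codes are invented for illustration; CR-LDP and RSVP each define their own object formats.

```python
import struct

# Hypothetical label layout: 16-bit fiber/port | 8-bit wavelength | 8-bit time slot.
def encode_label(port, wavelength, timeslot):
    if not (0 <= port < 1 << 16 and 0 <= wavelength < 1 << 8 and 0 <= timeslot < 1 << 8):
        raise ValueError("label field out of range")
    return (port << 16) | (wavelength << 8) | timeslot

def decode_label(label):
    return label >> 16, (label >> 8) & 0xFF, label & 0xFF

# Hypothetical TLV type codes for lightpath TE parameters.
TLV_BANDWIDTH = 1   # value: 32-bit IEEE float, bytes per second
TLV_PROTECTION = 2  # value: one byte of protection flags

def encode_tlv(tlv_type, value):
    """Type (16 bits), length of value (16 bits), then the value, network byte order."""
    return struct.pack("!HH", tlv_type, len(value)) + value

def decode_tlvs(data):
    """Parse a run of TLVs into {type: value bytes}."""
    tlvs, offset = {}, 0
    while offset + 4 <= len(data):
        tlv_type, length = struct.unpack_from("!HH", data, offset)
        tlvs[tlv_type] = data[offset + 4 : offset + 4 + length]
        offset += 4 + length
    return tlvs

label = encode_label(port=5, wavelength=12, timeslot=3)
params = (encode_tlv(TLV_BANDWIDTH, struct.pack("!f", 1.25e9))
          + encode_tlv(TLV_PROTECTION, b"\x01"))
print(decode_label(label), decode_tlvs(params))
```

Carrying the hierarchy inside the label lets a switch map a label directly to a physical resource, while the length field in each TLV lets a receiver skip parameters it does not understand.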

There is much hard work ahead. But the news is good, thanks to the commitment that standardization participants have made to resolving the optical-layer control issues in the near term. Interoperability is at the top of every participant's list, and IP-centric control can make it a reality. Now, we as an industry must demonstrate it. Given everyone's efforts, I believe we will see unified standards emerge later this year or early next year.

Krishna Bala is chief technical officer at Tellium (Oceanport, NJ).