Quality of service in resilient packet transport rings

How quality of service is implemented in the network is becoming a critical issue for telecommunications service providers.

JAY SHULER, Luminous Networks

The term "quality-of-service" (QoS) is used to describe any combination of performance attributes that can be applied to telecommunications traffic. To be useful, these service attributes must be provisionable, manageable, verifiable, and billable. They must be applied consistently and predictably (some would even say deterministically) to user traffic based on one or more identifying attributes of that service. Service providers create services by manipulating these attributes through a combination of traffic engineering and QoS mechanisms. They then offer these services to end users through service-level agreements (SLAs), essentially contracts between the provider and the user that set expectations and pricing while establishing penalties for noncompliance.

QoS has one set of implications for end users and another for carriers. Users don't care how QoS is implemented. In fact, they don't want to deal with it at all. They just want their applications to perform. Carriers, on the other hand, are directly impacted by QoS implementation issues.

End-user traffic is highly diverse. Most solutions are designed to be highly efficient and cost-effective for one type of service. SONET solutions are optimized for high-quality voice and circuit (private-line) services which, until recently, have constituted the majority of network traffic. Even now, these services account for the majority of carrier revenues and often more than 100% of their profits, i.e., they are subsidizing the build-out of the data network. So it is essential that metropolitan-area-network (MAN) solutions have the ability to provide circuit services with an appropriate QoS.

ATM was invented to provide multiple services, such as high-bandwidth data, over a single infrastructure. As such, it has a highly developed, mature, and standardized set of QoS mechanisms for the provision of even the most finicky services. But since the invention of ATM, the Internet has exploded, and with it, demand for IP-based services. It has become apparent that for a variety of reasons, including complexity, cost, and efficiency, ATM is not well adapted to networking IP data. However, many of the mechanisms developed for ATM are applicable to IP networking. Once these mechanisms are employed, stand back! A new wave of demand will come from the many new applications that are now possible over this new Internet, including many that have not yet been envisioned.

Thus there is a compelling reason to build an infrastructure that is optimized for IP, yet provides a rich set of QoS mechanisms to allow carriers to define service classes that meet the requirements of many different user applications. A sample of these applications includes circuit services, high-priority data services, voice telephony, and interactive and broadcast video. Each application makes a different set of demands on the network. It is instructive to use them as examples to explore the need for QoS in a resilient packet-transport network.

There are basically five dimensions to QoS that impact end users: availability, throughput, delay (latency), delay variation (jitter and wander), and loss (bit loss or packet loss).

Availability is the percentage of time the network is there when the user needs it. The traditional benchmark is 99.999%, or "five-nines," which translates into approximately 5.25 minutes of downtime per year. This level is still unattainable in traditional data-communications equipment, most of which was designed for the enterprise network.
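The five-nines figure follows from simple arithmetic. The sketch below reproduces it, assuming a 365-day year; the function and constant names are illustrative only and do not come from any particular tool.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600 minutes, assuming a 365-day year


def downtime_minutes_per_year(availability_percent: float) -> float:
    """Return the yearly downtime implied by an availability percentage."""
    unavailable_fraction = 1.0 - availability_percent / 100.0
    return unavailable_fraction * MINUTES_PER_YEAR


if __name__ == "__main__":
    # Compare three, four, and five nines.
    for pct in (99.9, 99.99, 99.999):
        print(f"{pct}% -> {downtime_minutes_per_year(pct):.2f} minutes/year")
```

Each additional nine cuts the allowed downtime by a factor of ten, which is why the step from enterprise-grade to carrier-grade equipment is so demanding.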

Availability is achieved through a combination of equipment reliability and network survivability. Other factors also play a role, including software stability (which can take down a network as surely as a central-office fire), and the ability to evolve or upgrade the network without taking the network out of service. To meet the high expectations of carrier-class networks, optical MAN equipment must be highly redundant and capable of operating continuously under heavy loads and extreme temperatures.

Switch fabric and system controller cards must be redundant and hot-standby as well as hot-swappable, with rapid fail-over mechanisms in case of equipment failure. Heat is an enemy of electronic equipment. Power supplies should be distributed to each card, spreading the heat profile across the shelf. At the very least, power supplies should be highly redundant and load-sharing. Backplanes, which are not easily replaced, should be passive to increase reliability. Cards should be arranged vertically, with bottom-to-top, front-to-back airflow to prevent heat from accumulating from one shelf or bay to another. Even the placement of components on the card should be designed to prevent hot spots that cause local failure. Together, these features combine to provide very high mean-time-between-failures numbers.

Furthermore, the network must restore itself quickly in case of events that are outside the carrier's control, such as fiber cuts. Alternate paths must be provisioned and available to traffic that might be impacted by a node or link failure. Provisioning rules must be implemented in the management system to ensure that protected services are not over-provisioned in such a way that they impact each other in case of a failure. When a failure does occur, the system must restore user services quickly enough so the failure is essentially transparent to the users. The "Gold Standard" for service restoration in case of a fiber cut, less than 50 msec, is set by SONET. To be classified as "carrier class" and meet the needs of users and user services, resilient packet-transport (RPT) equipment must meet these high standards. Fortunately, it can.

Throughput is the basic measurement of the amount of traffic, or bandwidth, delivered over a given period of time. Generally speaking, more is better. Traditionally, users have obtained bandwidth measured in kilobits per second, and a single T1 (1.544 Mbits/sec) was considered a high-speed pipe. With the advent of native Ethernet services delivered by next-generation MAN equipment, bandwidth is now measured in megabits, or even gigabits, per second.

Resilient packet ring devices are now available to provide Gigabit Ethernet services to every user at breakthrough price points. For those users who do not need (and are not willing to pay for) gigabit bandwidth, it is possible to bandwidth-limit (or "police") a physical Gigabit Ethernet pipe to any increment of service, providing "fractional Ethernet" services that can be remotely and dynamically managed. Policing ensures that users receive no more than their provisioned service bandwidth. Shaping and priority queuing, along with careful traffic engineering, ensure they get at least what they have been guaranteed.
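Policing of this kind is commonly built on a token bucket. The following is a minimal sketch of that general technique, not a description of any vendor's implementation; the class name, the parameters, and the 10-Mbit/sec example rate are all assumptions chosen for illustration.

```python
class TokenBucketPolicer:
    """Single-rate token-bucket policer (illustrative sketch only)."""

    def __init__(self, rate_bps: float, burst_bits: float) -> None:
        self.rate_bps = rate_bps      # provisioned service rate, bits per second
        self.burst_bits = burst_bits  # bucket depth: the largest tolerated burst
        self.tokens = burst_bits      # start with a full bucket
        self.last_time = 0.0          # time of the previous packet, in seconds

    def allow(self, packet_bytes: int, now: float) -> bool:
        """Return True if the packet conforms, False if it should be dropped."""
        elapsed = now - self.last_time
        self.last_time = now
        # Refill tokens for the elapsed time, capped at the bucket depth.
        self.tokens = min(self.burst_bits, self.tokens + elapsed * self.rate_bps)
        needed = packet_bytes * 8
        if needed <= self.tokens:
            self.tokens -= needed
            return True
        return False


if __name__ == "__main__":
    # Hypothetical "fractional Ethernet" service: 10 Mbit/sec on a gigabit port,
    # with a bucket that holds exactly one 1,500-byte packet.
    policer = TokenBucketPolicer(rate_bps=10e6, burst_bits=12_000)
    print(policer.allow(1500, now=0.0))    # conforms: the bucket starts full
    print(policer.allow(1500, now=0.0))    # back-to-back packet exceeds the rate
    print(policer.allow(1500, now=0.002))  # conforms again after the bucket refills
```

Because the rate and burst size are plain parameters, a service like this could in principle be re-provisioned remotely without touching the physical port.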

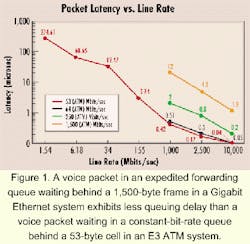

Delay, or latency, is the average transit time of a service from the ingress to the egress point of the network. Many services, especially real-time services like voice and video communications, are highly intolerant of delay. Echo-cancelers are necessary in voice circuits that exhibit more than 6.5 msec of roundtrip delay, and interactive conversation becomes very cumbersome when delay exceeds about 200-250 msec (or a quarter-second). To provide high-quality voice and videoconferencing, MAN equipment must be capable of guaranteeing low latency.

There are many components of delay, including packetization delay, queuing delay, switching delay, and propagation delay. Propagation delay is the amount of time it takes information to traverse a copper, fiber, or wireless link and is a function of the speed of light. It is therefore a factor in any system, including SONET, ATM, and RPT. Queuing delay is imposed on a packet at congestion points when it waits in line for its turn, while other packets are sent through a switch or wire.

ATM mitigates queuing delay by chopping packets into small pieces, packing them into cells and putting them into absolute priority queues. Because the cells are small, the highest-priority queue can be serviced more often, reducing the wait time for packets in this queue to deterministic levels. At gigabit speeds, however, the waiting time for high-priority traffic is very small, even under the worst conditions. The worst-case wait time for a voice packet in an absolute priority queue in a Gigabit Ethernet system is about 12 microsec (see Figure 1), better than for a voice cell waiting in an ATM constant-bit-rate (CBR) queue at E3 (34-Mbit/sec) speeds.
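The 12-microsec figure can be checked with a short calculation. The sketch below assumes the worst case is a high-priority packet arriving just as one maximum-size unit has started transmission: a 1,500-byte packet on Gigabit Ethernet versus a 53-byte cell on a nominal 34.368-Mbit/sec E3 link. Framing overhead is ignored, and the names are illustrative.

```python
def serialization_delay_us(unit_bytes: int, link_bps: float) -> float:
    """Time, in microseconds, to clock one packet or cell onto the link."""
    return unit_bytes * 8 / link_bps * 1e6


GIGABIT_ETHERNET_BPS = 1e9
E3_BPS = 34.368e6          # nominal E3 line rate
MAX_PACKET_BYTES = 1500    # maximum-size packet assumed ahead of the voice packet
ATM_CELL_BYTES = 53        # one ATM cell: 5-byte header plus 48-byte payload

gbe_wait_us = serialization_delay_us(MAX_PACKET_BYTES, GIGABIT_ETHERNET_BPS)
e3_wait_us = serialization_delay_us(ATM_CELL_BYTES, E3_BPS)

if __name__ == "__main__":
    print(f"Gigabit Ethernet, 1,500-byte packet: {gbe_wait_us:.2f} microsec")
    print(f"E3 ATM, 53-byte cell: {e3_wait_us:.2f} microsec")
```

Under these assumptions the full-size packet at gigabit speed clears the wire slightly faster than a single cell does at E3 speed, which is the point of the comparison.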

Since ATM voice is considered fully toll-quality at speeds considerably less than that (T1), it follows that low latency voice can also be achieved over Gigabit Ethernet through the application of simple class-based priority queuing. This approach avoids implementing complex queuing mechanisms when networking variable-sized packets.
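Class-based strict priority queuing is simple enough to sketch in a few lines. The example below is a generic illustration under assumed names (`StrictPriorityScheduler`, class 0 as the highest priority) and is not taken from any product: the scheduler always serves the highest-priority non-empty queue first.

```python
from collections import deque
from typing import Any, Optional


class StrictPriorityScheduler:
    """Serve queues in strict priority order; class 0 is the highest priority."""

    def __init__(self, num_classes: int) -> None:
        self.queues = [deque() for _ in range(num_classes)]

    def enqueue(self, priority: int, packet: Any) -> None:
        self.queues[priority].append(packet)

    def dequeue(self) -> Optional[Any]:
        """Return the next packet to transmit, or None if all queues are empty."""
        for queue in self.queues:
            if queue:
                return queue.popleft()
        return None


if __name__ == "__main__":
    scheduler = StrictPriorityScheduler(num_classes=2)
    scheduler.enqueue(1, "data-1")   # best-effort data arrives first
    scheduler.enqueue(1, "data-2")
    scheduler.enqueue(0, "voice-1")  # voice arrives last but is served first
    while (packet := scheduler.dequeue()) is not None:
        print(packet)
```

A voice packet never waits behind queued data; at most it waits for the one packet already being transmitted, which is the worst case discussed above.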

Delay variation is the difference in delay exhibited by different packets that are part of the same traffic flow. High-frequency delay variation is known as jitter, while low-frequency delay variation is called wander. Jitter is caused primarily by differences in queue wait times for consecutive packets in a flow and is the most significant issue for QoS. Certain traffic types, especially real-time traffic, are very intolerant of jitter. Differences in packet arrival times cause choppiness in the voice or video. All transport systems exhibit some jitter. As long as jitter falls within defined tolerances, it does not impact service quality. Excessive jitter can be overcome by buffering, but that increases delay and can cause other problems.
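Delay variation can be quantified in more than one way. The sketch below shows two simple measures over a list of per-packet one-way delays: the peak-to-peak spread and the mean difference between consecutive packets. Both function names and the sample values are assumptions for illustration, not standardized metrics from the text.

```python
from statistics import mean
from typing import Sequence


def peak_to_peak_delay_variation(delays_ms: Sequence[float]) -> float:
    """Largest minus smallest delay observed in the flow."""
    return max(delays_ms) - min(delays_ms)


def mean_consecutive_delay_variation(delays_ms: Sequence[float]) -> float:
    """Average absolute change in delay between consecutive packets."""
    if len(delays_ms) < 2:
        return 0.0
    return mean(abs(b - a) for a, b in zip(delays_ms, delays_ms[1:]))


if __name__ == "__main__":
    sample_delays_ms = [10.0, 12.0, 11.0, 15.0]  # made-up measurements
    print(peak_to_peak_delay_variation(sample_delays_ms))
    print(mean_consecutive_delay_variation(sample_delays_ms))
```

The peak-to-peak value indicates how deep a playout buffer would need to be to absorb the variation, which is also the amount of extra delay that buffering adds.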

One of the attributes of RPT is the ability to provide synchronous circuit services such as T1 over the asynchronous packet-transport network. That enables the carrier to use a single platform for both data and private-line services, increasing network efficiency and significantly reducing both equipment and management costs. To provide high-quality private lines, the Luminous Networks RPT implementation is capable of accepting an external stratum timing source, distributing it to all the nodes on the RPT ring and then to external devices hanging off the ring. This way, both ends of the T1/E1 or DS-3/E3 circuit remain in perfect sync, just as they would over a SONET network.

Wander is a problem with any synchronous transmission system. SONET overcomes wander by maintaining strict stratum timing across the network. In an asynchronous system, wander is not generally a problem, except in the case of RPT when synchronous circuit services are being maintained over the packet network. In either case, wander can cause T1 frame drops and violate the QoS requirements of the circuit. Luminous RPT overcomes wander the same way that SONET does: with strict stratum timing. This timing is applied not to Ethernet packets, but only to synchronous circuit-emulated services.

Loss, whether bit errors or packet drops, has a bigger impact on packet data services than on real-time services. During a voice conversation, loss of a single bit or packet of information is often not even noticed by the users. During a video broadcast, it may cause a momentary glitch on the screen, but then all proceeds as before. Even a transmission control protocol (TCP) data transfer can handle drops, because TCP allows for retransmission of lost information.

In fact, one major congestion control mechanism called random early discard (RED) actually drops packets intentionally when traffic reaches a configured threshold to throttle back the rate of TCP transmissions and reduce congestion. It also desynchronizes TCP flows to prevent oscillation of throughput due to opening and closing rate windows. However, if packet drops become epidemic, then the quality of all transmissions is impacted. Usually, packet drops are a symptom of a dying link or equipment card or a symptom of congestion. An RPT system must be able to keep management statistics on dropped traffic and provide alarms to the network manager when they pass expected thresholds. Table 1 shows the sensitivity of different applications to these QoS attributes.

Technically, an RPT network provides complete flexibility in defining service classes that can be sold to end users. That means the element management system provides access to the mechanisms (described later) that allow a service provider to create customized service classes for each user. From a practical standpoint, however, it is far more likely that general-service templates will be defined that can be efficiently marketed to all end users in a service territory, with some minor tweaks available to special accounts.

It is expected that the service provider will create expedited forwarding (EF), assured forwarding (AF) and best-effort (BE) services. The industry has created specific definitions for these services, which were created specifically to address different application requirements. Adherence to these definitions facilitates consistent service delivery across multiple IP carriers.

These service definitions have roughly the following characteristics with respect to the dimensions of QoS (excluding availability, which, as defined, is a characteristic of hardware and RPT). Note in Table 2 that the (roughly) equivalent ATM service class is also shown.

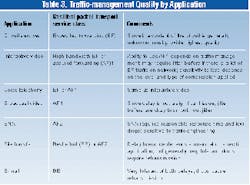

Of these service classes, the hardest to manage is AF2, since it is oversubscribed yet implies some proportional bandwidth guarantees. Note that these QoS levels map neatly to the requirements of the key applications discussed earlier (see Table 3). As shown, it is not only the QoS mechanisms in the nodes that guarantee service quality, but also the way in which the network is engineered and managed. Good traffic management makes QoS mechanisms much simpler and more effective. On the other hand, good QoS makes traffic management easier and enables much more efficient use of bandwidth.

There are several ways in which QoS can be achieved. They fall into two general categories: brute force methods and intelligent solutions. There is no silver bullet (no "right" way) to achieve QoS under all circumstances, but there are certainly more and less expensive ways. Which is the most cost-effective solution depends on the cost of the different resources available to implement QoS, such as manpower, bandwidth, and equipment (processing power). Usually the "right" solution is a balance of all three.

Overlay networks. Obviously, one way to achieve QoS is to assign different traffic types to different networks, which qualifies as a brute force method. It is expensive in terms of equipment, fiber utilization, and management, particularly in the access network. However, it is the way most service providers build their networks today.

Bandwidth over-provisioning. Another brute force method is to over-provision bandwidth. One school of thought argues that adding enough bandwidth to the links interconnecting switches or routers eliminates the need for QoS mechanisms to control delay and jitter on time-critical packets. It is true that increasing bandwidth on these links without proportionately increasing usage will result in reduction of packet jitter. However, significant over-provisioning of network bandwidth is not sufficient by itself and does not eliminate the need for simple QoS mechanisms such as strict prioritization of time-critical traffic (e.g., real-time voice).

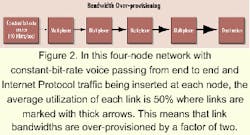

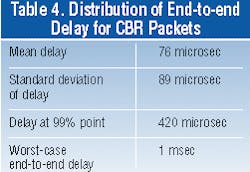

Figure 2 depicts a four-node network with CBR voice passing from end to end and IP traffic being inserted at each node. The average utilization of each link is 50% where links are marked with thick arrows, meaning that link bandwidths are over-provisioned by a factor of two. The IP sources are bursty sources with a Weibull burstiness factor of 0.33 on packet inter-arrivals, which is representative of TCP arrivals on the Internet. The maximum transfer unit assumed on the network is 1,500 bytes.

Data on the distribution of end-to-end delay for CBR packets is shown in Table 4. It shows that the voice packets in the worst case suffer a one-way, end-to-end delay of 1 msec. The worst case is important for voice because of the stringent Telcordia Technologies requirements on allowable frame slips for DS-1 interfaces (less than one frame slip per week, or less than one out of 4.8 billion frames).
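The "one out of 4.8 billion" figure follows from the DS-1 frame rate of 8,000 frames per second (one frame every 125 microsec). A quick check, with illustrative names:

```python
DS1_FRAMES_PER_SECOND = 8_000        # one DS-1 frame every 125 microseconds
SECONDS_PER_WEEK = 7 * 24 * 60 * 60  # 604,800 seconds


def frames_per_week(frames_per_second: int = DS1_FRAMES_PER_SECOND) -> int:
    """Number of frames carried by one circuit in a week."""
    return frames_per_second * SECONDS_PER_WEEK


if __name__ == "__main__":
    print(f"{frames_per_week():,} frames per week")
```

The result, a little over 4.8 billion frames, shows why the tail of the delay distribution matters more than the average: a single frame delayed past tolerance in a week is enough to miss the requirement.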

Strict prioritization of voice packets ensures that the absolute worst-case, end-to-end delay for any voice packet is approximately 180 microsec. This worst case occurs when a voice packet is delayed behind an entire 1,500-byte packet at every multiplex point. Therefore, even for a 2X over-provisioned network, the use of just one additional level of strict prioritization improves the worst-case jitter by more than a factor of five.

The above result is important for several reasons. It shows that the simplest type of QoS mechanism, two-level strict prioritization, significantly improves worst-case jitter for voice packets traversing four nodes, even in an over-provisioned network. That's important in access networks, because it controls equipment cost by avoiding echo cancellation for synchronous voice. Though the worst-case roundtrip delay of 2 msec in this example is less than the generic 6.5-msec Telcordia limit before echo cancellation becomes a requirement for synchronous voice, this example does not take into account the other significant sources of delay, such as packetization delay and propagation delay.

A small increase in the number of nodes in the cascade or a small increase in average network utilization results in a rapid increase in worst-case end-to-end delay. In addition, over-provisioning by any significant factor is inherently inefficient. Strict prioritization of voice traffic yields consistent worst-case delays, regardless of the level of over-subscription of lower-priority IP data, assuming that voice is a relatively small percentage (less than 60%) of overall traffic. QoS enables service providers to meet service guarantees while using more of their bandwidth. More usable bandwidth means more revenue.

Per-flow queuing and signaling. ATM is one protocol that establishes a separate logical queue for each virtual circuit. On the one hand, it is a great way to guarantee treatment of traffic; on the other hand, it is complex (many states to track), expensive (queues take memory), and not particularly scalable, considering the billions of flows that occur on the Internet each day. That is somewhat moderated by the fact that doing a good job of QoS management at the edge of the carrier network can take the load off the core. Even so, unless these flows are aggregated, the core still has the issue of maintaining queues and states for billions of flows.

IP QoS mechanisms. IP QoS (probably more accurately referred to as class-of-service) was developed to counter the complexity of ATM and per-flow QoS in a Layer 3-centric network. An earlier integrated-services IP QoS model depended on RSVP as an overlay signaling layer for resource reservation. Current IP differentiated-services implementations make use of class-based queuing (CBQ) of aggregated flows, with RED and "penalty-box" algorithms to control congestion and ensure fair allocation of available bandwidth.

With CBQ, if all flows of a given traffic type are aggregated into one big queue, it will still be possible to provide some level of QoS without the cost and complexity associated with ATM. In fact, this mechanism works reasonably well, as long as other congestion management mechanisms are in place and the network is properly engineered.

RED takes advantage of the backoff mechanism in TCP by dropping packets at random when traffic exceeds a predetermined threshold. That causes TCP to back off, reducing the congestion. UDP traffic is connectionless and nonadaptive, so it does not respond to RED. A relatively new class of algorithms, known as penalty box, performs a similar function for UDP traffic.
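The random-drop behavior can be illustrated with the linear drop-probability ramp used in the classic form of RED. This is a simplified sketch under stated assumptions: it takes an already-averaged queue length as input, omits the moving-average and inter-drop-count refinements of full RED, and uses threshold values and names chosen only for illustration.

```python
import random


def red_drop_probability(avg_queue: float, min_th: float,
                         max_th: float, max_p: float) -> float:
    """Drop probability as a function of the average queue length."""
    if avg_queue < min_th:
        return 0.0   # below the lower threshold: never drop
    if avg_queue >= max_th:
        return 1.0   # at or above the upper threshold: drop everything
    # Between the thresholds the probability rises linearly from 0 to max_p.
    return max_p * (avg_queue - min_th) / (max_th - min_th)


def should_drop(avg_queue: float, min_th: float, max_th: float,
                max_p: float, rng: random.Random) -> bool:
    """Randomly decide whether to drop an arriving packet."""
    return rng.random() < red_drop_probability(avg_queue, min_th, max_th, max_p)


if __name__ == "__main__":
    rng = random.Random(1)  # fixed seed so that runs are repeatable
    for avg in (10, 30, 50, 70):
        p = red_drop_probability(avg, min_th=20, max_th=60, max_p=0.1)
        print(f"avg queue {avg}: drop probability {p:.3f}, "
              f"drop this packet: {should_drop(avg, 20, 60, 0.1, rng)}")
```

Because drops are spread at random across arriving packets, different TCP flows tend to back off at different times rather than all at once.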

Hybrid schemes. Obviously, each of the above schemes involves tradeoffs between cost, complexity, and functionality. It's important to recognize how the network will be used and make appropriate tradeoffs. There is no doubt that the trend in IP QoS is toward greater complexity, which is made necessary by the greater demands placed on the network by user applications and the need of service providers to capture greater value (i.e., revenues and earnings) out of their IP networks. It is being made possible by continual advances in processing power, bandwidth availability, networking algorithms for traffic management, and our understanding of the behavior of networks under realistic conditions.

Luminous is taking advantage of the latest differentiated-services mechanisms and incorporating some additional functionality that adds significantly to QoS without adding significantly to the cost. For example, Luminous works with a concept of a "logical port," which is defined as a given service class for a given customer. A logical port can be one or more physical ports, or there can be many logical ports per physical port. That allows maximum flexibility in defining service classes and assigning bandwidth per service class per customer. Currently, the Luminous PacketWave is capable of handling over 9,000 logical ports per shelf.

The primary challenges in providing QoS over a resilient packet-transport ring involve ensuring fairness (or rather, unfairness) for provisioned oversubscribed services. We have discovered that it is relatively easy to provide best-effort traffic and one or more guaranteed-bandwidth services.

What is more challenging is to provide an oversubscribed version of AF traffic and statistically guarantee that the user will receive bandwidth in proportion to the user's SLA. The challenge is to provide provisioned unfairness, or to throttle the users in proportion to the bandwidth promised in their SLAs. The bottom line is that carrier-class QoS and SLA provisioning is possible and manageable in a network that provides the robustness and circuit capabilities of SONET, the scalability of DWDM, and the data-networking capabilities of Ethernet and IP solutions.
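As a rough illustration of provisioned unfairness, the sketch below scales each user's share in proportion to the bandwidth in that user's SLA whenever the total provisioned bandwidth exceeds the available capacity. It is a static calculation under assumed names and example figures, not the distributed ring algorithm an actual RPT product would use.

```python
from typing import Dict


def proportional_shares(sla_mbps: Dict[str, float],
                        capacity_mbps: float) -> Dict[str, float]:
    """Throttle users in proportion to their SLA bandwidth when oversubscribed."""
    total_provisioned = sum(sla_mbps.values())
    if total_provisioned <= capacity_mbps:
        return dict(sla_mbps)  # no congestion: everyone gets the full SLA rate
    scale = capacity_mbps / total_provisioned
    return {user: rate * scale for user, rate in sla_mbps.items()}


if __name__ == "__main__":
    # Hypothetical customers whose SLAs total 1,200 Mbit/sec on a 1,000-Mbit/sec ring.
    slas = {"customer_a": 600.0, "customer_b": 300.0, "customer_c": 300.0}
    for user, share in proportional_shares(slas, capacity_mbps=1000.0).items():
        print(f"{user}: {share:.1f} Mbit/sec")
```

In this example the customer paying for twice the bandwidth still receives twice the share under congestion, which is the proportionality the SLA implies.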

Jay Shuler is vice president of marketing and business development for Luminous Networks (Cupertino, CA). The company's Website is www.luminousnetworks.com.