Video-optimized fiber is all about the bends

by John George and David Mazzarese

There is no longer any ambiguity about the killer application: It’s personalized, on-demand, multifaceted, symmetrical, ever-higher-definition, bandwidth-gobbling video. And it’s not just one application: a suite of video-reliant applications is already here, with more on the near horizon. With cost-effective high-definition, super high-definition, and eventually 3D video enabled by a continuing exponential decline in storage and processing costs, we are clearly headed toward data rates greater than 1 Gbit/sec dedicated to each subscriber.

Yet what is the most effective and efficient optical path to maximize return on network investment while reliably supporting video? Optical fiber cable, splitters, connectors, jumpers, and patch panels make up a small part of the network cost, yet they will have to support many bandwidth upgrades over many decades to avoid premature replacement. One 20th-century issue with the optical path has been solved: With vastly improved electronics, it’s clear that high-power fiber nonlinearities such as stimulated Brillouin scattering (SBS) and stimulated Raman scattering (SRS) are sufficiently controlled and are no longer a practical barrier for the optical path designer. This fact and the continuing transition from analog to lower-power digital video have made extraordinary measures to suppress SBS in high-power systems unnecessary.

But other optical path challenges remain. With video-driven data rates quadrupling every four years and optical power budgets tight, the constraints for today’s forward-looking network are primarily bandwidth and loss. The bandwidth of the optical path can be maximized using available full-spectrum G.652.D singlemode optical fiber, splitters, and low-loss connections. However, service providers have recently become concerned about added loss caused by fiber bends, particularly at the bend-sensitive video wavelengths used in FTTX, enterprise, and hybrid fiber/coax (HFC) networks. The combination of tight power budgets and conventional singlemode fiber can shut down video services in today’s compact, bend-challenged networks (see Fig. 1).
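To make the power-budget argument concrete, here is a minimal Python sketch of a link-margin calculation. Every number in it (the 25-dB budget, 0.22 dB/km attenuation, the splitter, connector, and splice losses, and 0.8 dB per tight bend) is a hypothetical placeholder, not a value from this article or from any datasheet, and the `LinkBudget` class is invented for illustration. The point is only that a handful of bends can turn a positive margin negative.

```python
"""Hypothetical link-margin check: how a few tight bends erode a tight budget.

Every number here is an illustrative placeholder, not a value from the
article or from any particular system or fiber datasheet.
"""

from dataclasses import dataclass, field


@dataclass
class LinkBudget:
    budget_db: float         # launch power minus receiver sensitivity (dB)
    fiber_km: float          # fiber length (km)
    atten_db_per_km: float   # fiber attenuation at the video wavelength (dB/km)
    fixed_losses_db: list[float] = field(default_factory=list)  # splitter, connectors, splices
    bend_losses_db: list[float] = field(default_factory=list)   # one entry per tight bend

    def total_loss_db(self) -> float:
        """Sum of fiber, fixed-component, and bend losses (dB)."""
        return (self.fiber_km * self.atten_db_per_km
                + sum(self.fixed_losses_db)
                + sum(self.bend_losses_db))

    def margin_db(self) -> float:
        """Remaining margin; negative means the link no longer closes."""
        return self.budget_db - self.total_loss_db()


if __name__ == "__main__":
    link = LinkBudget(budget_db=25.0, fiber_km=20.0, atten_db_per_km=0.22,
                      fixed_losses_db=[17.5, 0.5, 0.5, 0.1, 0.1])
    print(f"margin without bends: {link.margin_db():+.1f} dB")
    link.bend_losses_db = [0.8, 0.8, 0.8]  # three hypothetical tight bends
    print(f"margin with three bends: {link.margin_db():+.1f} dB")
```

With these placeholder values the margin drops from +1.9 dB to -0.5 dB once three bends are added.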

Nearly all video applications, whether point-to-point or PON, operate between 1,480 and 1,625 nm. The latest IEEE 802.3 Ethernet standards now use the bend-sensitive 1,490-nm wavelength. FTTX, HFC, and WDM systems operate at very bend-sensitive wavelengths around 1,550 nm, often with very tight power budgets. Table 1 summarizes how video services are transported today and how systems might evolve in the future. It is apparent that nearly all video applications will operate at a wavelength sensitive to bending loss.

For FTTH, the task of managing minimum fiber bend radius grows by orders of magnitude as networks leap from a single fiber supporting hundreds of users to a single fiber per user. As a result, the twists and turns of today’s compact fiber pathways could disrupt revenue-rich video services and the business cases they support. The industry has responded to these challenges by offering a new class of fibers with improved bend performance, documented in the ITU-T G.657 standard. With these standards in place, network designers and component manufacturers can now develop smaller enclosures with compact packaging and avoid service disruptions from inadvertent bends caused by technician handling and installation errors.

The G.657 standard describes two types of bend-improved optical fibers. The first, referred to as Class A, is singlemode fiber that is fully compliant with the embedded base of low-water-peak fiber and has improved geometry and bending performance. Some of the improvements over low-water-peak singlemode fiber are highlighted in Table 2. The second fiber type, referred to as Class B, was included in the standard to target the niche application of wiring within residences, though some Class A fibers can also support this application. The Class B recommendation encompasses existing and proposed bend-insensitive fibers that may not be compliant with the embedded base, such as hole-assisted fibers and fibers with very small mode-field diameters.

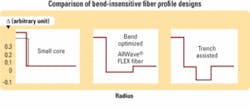

Several fiber manufacturers offer products that meet the G.657 Class A standard. Figure 2 compares the different fiber core profile designs used to improve singlemode bend performance.

Small core design: Commercial examples of the small core design are simply fibers selected from standard singlemode fiber production, and their macrobend performance is only marginally better than that of the best conventional singlemode fiber. The small core design also provides only a marginal improvement in microbend performance compared with standard singlemode products.

Small core designs typically have a nominal mode field diameter (MFD) of 8.6 µm versus the 9.2-µm nominal MFD of the installed base of G.652 fibers. The unfortunate result is that when joined to the installed base of G.652 fibers, the small core design can exhibit additional splicing and connector loss of 0.1 dB or more per joint, affecting network performance, and also show a large proportion of “gainers” that complicate one-way OTDR testing.
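The mode-field mismatch arithmetic can be sketched in Python using the common Gaussian-mode approximation, in which the coupling loss between two perfectly aligned fibers depends only on the ratio of their MFDs. Under that approximation the nominal 8.6/9.2-µm mismatch alone costs only about 0.02 dB; the ±0.4-µm offsets used below are hypothetical, chosen to show how quickly the loss grows toward the 0.1-dB level once MFD tolerances stack. The OTDR part assumes backscatter capture scales as 1/MFD², a standard simplification; the function names are illustrative.

```python
import math


def mfd_mismatch_loss_db(mfd_a_um: float, mfd_b_um: float) -> float:
    """Splice loss (dB) from mode-field mismatch alone, Gaussian-mode approximation.

    Assumes perfect alignment (no lateral offset or angle), so only the
    MFD difference contributes.
    """
    ratio = 2.0 * mfd_a_um * mfd_b_um / (mfd_a_um**2 + mfd_b_um**2)
    return -20.0 * math.log10(ratio)


def otdr_apparent_loss_db(true_loss_db: float, mfd_from_um: float, mfd_to_um: float) -> float:
    """One-way OTDR reading for a splice, shot from fiber 'from' into fiber 'to'.

    Assumes backscatter capture scales as 1/MFD**2, so the trace steps by an
    extra 10*log10(mfd_to/mfd_from) dB on top of the true splice loss.
    """
    return true_loss_db + 10.0 * math.log10(mfd_to_um / mfd_from_um)


if __name__ == "__main__":
    small, g652 = 8.6, 9.2  # nominal MFDs (um) quoted in the text
    loss = mfd_mismatch_loss_db(small, g652)
    print(f"nominal mismatch loss: {loss:.3f} dB")  # 0.020 dB
    # Hypothetical stacked tolerances: each fiber 0.4 um off nominal, in opposite directions.
    worst = mfd_mismatch_loss_db(small - 0.4, g652 + 0.4)
    print(f"stacked-tolerance loss: {worst:.3f} dB")  # 0.107 dB
    fwd = otdr_apparent_loss_db(loss, g652, small)   # shot from the G.652 side
    rev = otdr_apparent_loss_db(loss, small, g652)   # shot from the small-core side
    print(f"one-way readings: {fwd:+.3f} dB / {rev:+.3f} dB")  # negative reading = "gainer"
    print(f"bidirectional average: {(fwd + rev) / 2:.3f} dB")  # recovers the true loss
```

Shot from the G.652 side, the splice reads as a gain of roughly 0.27 dB even though it actually loses about 0.02 dB. Averaging the two one-way readings cancels the backscatter term, which is why bidirectional OTDR testing is the usual remedy for gainers, at the cost of a second measurement.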

Trench-assisted design: The trench-assisted design places a deep, low-index trench in the refractive-index profile near the core to help keep optical energy confined to the core under bending. It has very good macrobend performance but does not splice easily because of the high level of fluorine used to create the trench. Fusion splicers are often fooled by the profile, and special recipes are required to get low-loss splices. This can become a serious liability, particularly for large service providers that want a simple splicing protocol for field technicians.

In addition, while their macrobend loss is very low, trench-assisted designs can provide insufficient warning of risky tight bends (<7.5-mm radius) during OTDR testing. Such fiber can therefore be a risky choice for reliability-conscious deployments.

Bend-optimized design: The bend-optimized design provides optimized performance by balancing low bending loss, compatibility with the installed networks, splice performance, and fiber reliability under bending stress. The design combines a full-spectrum zero-water-peak core with a low index of refraction cladding feature.

The design reduces macrobending loss at sustainable bend radii to levels tolerable within the margins of current and future power budgets. It also exhibits half the microbending loss of standard singlemode fiber, reducing attenuation from cabling stress and thus enabling more compact cable designs, lower cabled-fiber attenuation, or both. While splicing has been difficult with some bend-insensitive fibers, the bend-optimized design splices easily: it closely matches the MFD of the installed base to minimize splice loss and OTDR gainers, and it can be spliced to itself or to installed G.652 fibers with standard G.652 splicing programs.

Reliability is a key concern with tight bends. While the glass in optical fiber has 5× the tensile strength of steel, it can still break. Fiber is screened by proof testing, typically to 100,000 psi. The corresponding strain, roughly 1%, is reached at the fiber surface in a bend of about 6-mm radius. As the bend radius approaches this value, the chance of a failure during the lifetime of the network increases exponentially.
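The link between proof stress and bend radius can be checked with a linear-elastic estimate: the peak surface strain of a bent fiber is roughly the cladding radius divided by the bend radius, and stress is strain times Young’s modulus. The sketch below assumes a 125-µm cladding and E ≈ 72 GPa for silica, and it ignores the coating and the nonlinear elasticity of glass at high strain, so it only approximates the figures in the text. The constant and function names are illustrative.

```python
"""Rough bend-stress estimate for a 125-um silica fiber (linear-elastic sketch)."""

PSI_PER_PA = 1.0 / 6894.757   # 1 psi = 6894.757 Pa
E_SILICA_PA = 72e9            # assumed Young's modulus of silica (~72 GPa)
CLAD_RADIUS_M = 62.5e-6       # 125-um cladding diameter
PROOF_PSI = 100_000           # proof-test level quoted in the text


def bend_strain(radius_m: float) -> float:
    """Peak tensile strain at the outer glass surface, approximated as a/R."""
    return CLAD_RADIUS_M / radius_m


def bend_stress_psi(radius_m: float) -> float:
    """Surface stress (psi) for a bend of the given radius, linear elasticity."""
    return E_SILICA_PA * bend_strain(radius_m) * PSI_PER_PA


def radius_at_stress_m(stress_psi: float) -> float:
    """Bend radius (m) at which the surface stress reaches stress_psi."""
    stress_pa = stress_psi / PSI_PER_PA
    return CLAD_RADIUS_M * E_SILICA_PA / stress_pa


if __name__ == "__main__":
    for r_mm in (15.0, 10.0, 7.5, 6.0):
        r = r_mm * 1e-3
        print(f"R = {r_mm:4.1f} mm: strain {bend_strain(r):.2%}, "
              f"stress {bend_stress_psi(r) / 1000:.0f} kpsi")
    print(f"proof-stress radius: {radius_at_stress_m(PROOF_PSI) * 1e3:.1f} mm")
```

Under these assumptions the proof-stress radius comes out near 6.5 mm, in line with the roughly 6-mm figure above, and a 10-mm bend still loads the glass to about two-thirds of the proof stress.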

More detailed reliability analysis shows that the practical minimum bend radius is actually greater than 6 mm. For added reliability, intentional bends smaller than 10-mm radius should be avoided in modern networks, and bends smaller than 7.5-mm radius should be identifiable during network OTDR testing, which requires them to produce at least 0.2 dB of loss, the minimum that can be reliably detected. The bend-optimized fiber design has an added benefit: its macrobend attenuation is very low for small bends, but it rises to OTDR-detectable levels for very tight bends where 40-year mechanical reliability is at risk.
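The bend-radius guidelines above translate naturally into a simple design-rule check. This is a hypothetical sketch: the thresholds (10 mm, 7.5 mm, 0.2 dB) come from the text, but the function names are invented, and the bend-loss values in the usage loop are placeholders. In practice the loss for a given radius must come from your own OTDR or power-meter data for the fiber in use.

```python
"""Hypothetical bend-radius design-rule check using the thresholds quoted in the text."""

from enum import Enum

AVOID_BELOW_MM = 10.0          # avoid intentional bends tighter than this radius
DETECT_BELOW_MM = 7.5          # bends tighter than this should be OTDR-visible
MIN_DETECTABLE_LOSS_DB = 0.2   # minimum reliably detectable OTDR loss


class BendZone(Enum):
    OK = "acceptable"
    AVOID = "avoid for added reliability"
    AT_RISK = "40-year reliability at risk"


def classify_bend(radius_mm: float) -> BendZone:
    """Map a bend radius (mm) to a design-rule zone."""
    if radius_mm < DETECT_BELOW_MM:
        return BendZone.AT_RISK
    if radius_mm < AVOID_BELOW_MM:
        return BendZone.AVOID
    return BendZone.OK


def otdr_will_flag(bend_loss_db: float) -> bool:
    """True if the bend's loss reaches the reliably detectable OTDR threshold."""
    return bend_loss_db >= MIN_DETECTABLE_LOSS_DB


def is_hidden_risk(radius_mm: float, bend_loss_db: float) -> bool:
    """An at-risk bend whose loss is too small for OTDR testing to catch."""
    return classify_bend(radius_mm) is BendZone.AT_RISK and not otdr_will_flag(bend_loss_db)


if __name__ == "__main__":
    # Hypothetical (radius_mm, measured_loss_db) pairs, not measured data.
    for radius, loss in [(12.0, 0.01), (8.0, 0.05), (6.0, 0.05), (6.0, 0.5)]:
        zone = classify_bend(radius)
        note = " (hidden: below OTDR threshold)" if is_hidden_risk(radius, loss) else ""
        print(f"{radius:4.1f} mm, {loss:.2f} dB -> {zone.value}{note}")
```

The third case is the scenario the text warns about: a bend tight enough to threaten reliability whose loss is too small for OTDR testing to flag.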

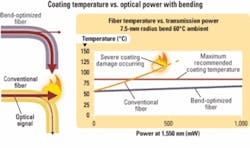

In digital systems, high power levels often occur as the number of wavelengths or channels in a DWDM system increases. For example, consider a digital system in which each channel is launched at up to 6 dBm (about 4 mW). While this is low power per channel, the total power in the fiber grows as more wavelengths are added: a fiber loaded with 160 wavelengths, as some systems can support, would carry about 28 dBm (640 mW). High power levels can also exist on PON fibers, where RF video and OLT power combine on feeder fiber jumpers and cables. Certain precautions must be taken to prevent the reliability issues that can arise from such high power density.

As a demonstration of reliability at very high optical powers, up to 3 W, the bend-optimized fiber design was compared with standard singlemode fiber in a 7.5-mm-radius bend. The surface temperature of the coating changed little for the bend-optimized fiber even after several hours of testing at 3 W, while the standard singlemode fibers heated well above 85°C, and coating degradation was clearly observed at about 600 mW (0.6 W) of input power, demonstrating the high-power reliability advantage of bend-optimized fibers. This testing was performed at 20°C ambient temperature. At a 60°C ambient temperature, one would expect the same test to show standard singlemode coating degradation at 200 to 250 mW.
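The DWDM channel-power arithmetic works in decibels: N equal-power channels add 10·log10(N) dB to the per-channel power. A minimal Python sketch of that conversion, with illustrative function names:

```python
import math


def mw_to_dbm(p_mw: float) -> float:
    """Convert optical power from milliwatts to dBm."""
    return 10.0 * math.log10(p_mw)


def dbm_to_mw(p_dbm: float) -> float:
    """Convert optical power from dBm to milliwatts."""
    return 10.0 ** (p_dbm / 10.0)


def total_power_dbm(per_channel_dbm: float, n_channels: int) -> float:
    """Total launched power for n equal-power channels: add 10*log10(n) dB."""
    return per_channel_dbm + 10.0 * math.log10(n_channels)


if __name__ == "__main__":
    per_ch = 6.0  # dBm per channel, from the DWDM example in the text
    for n in (1, 8, 40, 160):
        total = total_power_dbm(per_ch, n)
        print(f"{n:3d} channels: {total:5.1f} dBm = {dbm_to_mw(total):6.0f} mW")
```

With 6 dBm per channel, 160 channels come to about 28 dBm, or roughly 640 mW, which is already past the roughly 600-mW level at which coating degradation was observed in standard singlemode fiber in the bend test.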

Video applications with increasing definition and personalization will drive both bandwidth growth and successful business cases in FTTX and ultimately most optical networks. A video-dependent network needs video-optimized fibers, and for video applications, which primarily operate above 1,480 nm, it’s mostly about the bends.

John George is director, FTTX solutions, and David Mazzarese is technical marketing manager at OFS (www.ofsoptics.com).