Fiber remains medium of choice for data center applications

by Meghan Fuller

Fiber cabling shipments are expected to experience a 26.3% compound annual growth rate to net $4 billion by 2010, according to new research from FTM Consulting (www.igigroup.com). In fact, FTM analysts forecast that fiber cabling shipments will exceed copper UTP cabling shipments by 2008. And the highest growth application is expected to be the data center. Though fiber has always been a competitive option, new developments in optics and emerging applications have further strengthened the business case for fiber in the data center.

Today’s data center designers are discovering that traditional building LAN equipment just isn’t sufficient for use in the current data center environment. Traditional LAN equipment simply will not allow designers to achieve the requisite density, scalability, manageability, and flexibility, says Alan Ugolini, data center specialist at Corning Cable Systems (www.corningcablesystems.com).

Hutch Coburn, senior product manager of enterprise fiber infrastructure solutions at ADC (www.adc.com), agrees, noting that LAN traffic may not always be mission critical, but data centers often face stringent uptime requirements. Even a few minutes of downtime could be costly for enterprises in the financial sector. “Tomorrow’s data center is not your father’s Oldsmobile,” he asserts.

Today’s data centers are constantly growing; new equipment is added while old equipment is cycled out, on average every 2 to 3 years, to make way for higher-density, more feature-rich equipment. According to Ugolini, churn and scaling together represent “a killer combination for the data center.” Traditional LAN equipment cannot enable the frequent moves, adds, and changes required.

The data center’s requirements in terms of flexibility, scalability, density, and manageability today are met, in part, by MTP (also called MPO or multifiber push-on) connectors, which enable designers to create a cabling infrastructure that meets the challenging density requirements but is flexible enough to handle moves, adds, and changes.

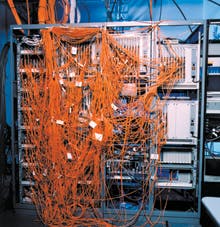

For example, says Ugolini, consider a system box that may have been deployed a year ago, say a SAN Switch Director. The density was likely around 144 ports in a 14-U package. But today’s system vendors are supplying the same box with 512 ports or 1,024 fibers. Densities have increased in the same amount of space or with only a minimal increase in space. Traditional optics components are not sufficient in such a case, he says (see Photo 1).

Using traditional fiber optics, you can get, at best, around 96 fibers in a 1-U LC panel, Ugolini explains. Delivering 1,024 fibers to the SAN switch using an LC interface will consume valuable real estate inside the cabinet. But using MTP connectors in that same 1-U configuration, you can achieve densities of 432 fibers, he says, “getting you close to supplying enough connectivity to handle one of these new SAN switches at 512 ports.”
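To put those panel densities in terms of cabinet space, the short Python sketch below estimates how many rack units of patch panel a single 512-port switch would need at each density. It is a rough illustration, not a design rule: it uses only the figures quoted above (96 fibers per 1-U LC panel, 432 fibers per 1-U MTP panel, two fibers per port) and assumes every fiber is terminated on a panel. The function and constant names are hypothetical.

```python
import math

# Figures quoted in the article.
FIBERS_PER_PORT = 2        # 512 ports correspond to 1,024 fibers
LC_FIBERS_PER_U = 96       # best case for a 1-U LC panel
MTP_FIBERS_PER_U = 432     # 1-U panel using MTP connectors


def panel_units_needed(fibers: int, fibers_per_unit: int) -> int:
    """Rack units of panel needed to terminate `fibers` (assumes full panels, rounded up)."""
    return math.ceil(fibers / fibers_per_unit)


switch_fibers = 512 * FIBERS_PER_PORT
lc_units = panel_units_needed(switch_fibers, LC_FIBERS_PER_U)
mtp_units = panel_units_needed(switch_fibers, MTP_FIBERS_PER_U)

print(f"{switch_fibers} fibers: {lc_units} U with LC panels, {mtp_units} U with MTP panels")
```

Under these assumptions, the LC approach needs roughly four times the panel space of the MTP approach for the same switch.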

But what if the data center designer decides to house two SAN switches in the same cabinet, resulting in more than 2,000 fibers to the same location? (See Photo 2a.) Again, the quantity of fibers is simply unmanageable. To alleviate the congestion, today’s data center designers are turning to ribbon cables, known as harnesses, with MTP connectors on the end (see Photo 3). They can route one 12-fiber ribbon cable through the cabinet rather than 12 jumper cables; the ribbon cable is then broken out into LC or SFP duplex connectors just before the line card, thereby simplifying the cabling infrastructure (see Photo 2b).
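The reduction in cable count can be estimated the same way. The sketch below follows the article's comparison of one 12-fiber ribbon cable against 12 jumper cables, which treats each jumper as carrying a single fiber; that is an assumption here, and duplex jumpers would halve the jumper count. The two-switch total of 2,048 fibers comes from the 1,024 fibers per switch quoted earlier, and the function name is hypothetical.

```python
import math

FIBERS_PER_SWITCH = 1024   # 512 ports, as quoted in the article
FIBERS_PER_HARNESS = 12    # one 12-fiber ribbon cable with an MTP connector


def cables_to_route(fibers: int, fibers_per_cable: int) -> int:
    """Cables needed to carry `fibers`, rounding up to whole cables."""
    return math.ceil(fibers / fibers_per_cable)


total_fibers = 2 * FIBERS_PER_SWITCH                     # two switches in one cabinet
jumpers = cables_to_route(total_fibers, 1)               # assumption: one fiber per jumper
harnesses = cables_to_route(total_fibers, FIBERS_PER_HARNESS)

print(f"{total_fibers} fibers: {jumpers} jumpers versus {harnesses} ribbon harnesses")
```

On these numbers, roughly 2,000 individual cables collapse to fewer than 200 harnesses routed through the cabinet.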

Both Ugolini and Coburn agree that laser-optimized, 50-µm multimode fiber (OM3) remains the medium of choice for data center applications, in part because it is less expensive when the higher-priced optics associated with singlemode are factored in. Moreover, they say, high-data-rate SAN networks and Ethernet networks operate in the 850-nm window, and laser-optimized, 50-µm multimode fiber provides the best bandwidth performance in that window.

Not everyone is sold on this argument, however. Jim Gerrity, director of enterprise storage at ADVA Optical Networking (www.advaoptical.com), reports seeing a prevalence of singlemode fiber deployments in data center environments. You can get longer distances out of singlemode fiber, he says, and it features less dispersion over distance than its multimode counterpart. But the key reason he believes most data centers should be fibered with singlemode, if they aren’t already, is futureproofing.

“The main driver for fiber, looking at the data center, is storage,” he notes. “Ultimately, what people want to do is extend that fiber. So if I have a singlemode infrastructure and I need to connect to a disaster recovery site, I can run that fiber from a switch out to another building, another site, another facility,” Gerrity explains.

Fiber in the data center is not new, he says. What is new is running fiber between data centers, facilities, corporate backbones, and even third-party disaster recovery vendors. Moreover, enterprises are now starting to leverage that infrastructure to support other types of traffic along with their storage traffic, says Gerrity.

“You could have your storage traffic coming in, your Ethernet and voice. You could run IP through it,” he says of the data center network. “And video conferencing and high-definition TV are becoming more and more prevalent among corporations that are trying to minimize travel.”

For this reason, he says, more and more enterprises are turning to WDM to transport multiple protocols at their native speeds using as little fiber infrastructure as possible.

“Fiber has certainly served its purpose and will continue to serve its purpose in the data center,” he muses. “But I think most are already wired. Now, we are seeing fiber extension out between sites.”

Both Corning Cable Systems and ADC say they have yet to see an all-fiber data center; however, as speeds continue to increase, the limitations of copper make the economics of fiber more attractive, even for short distances. Historically, high data rates have been used for long-distance applications, but higher data rates are now migrating to the campus backbone, the building backbone, and into the data center. Moreover, the industry has begun to mull the possibility of high-data-rate transmission from frame-to-frame, board-to-board, and even chip-to-chip. As speeds increase, technology challenges proliferate.

As Ugolini notes, the IEEE recently “put its stake in the ground” and is moving forward with a standard for 100-Gigabit Ethernet transmission. At 100G, the problems inherent in 10G copper transport will be a hundred times greater, he says. “There are a lot of limitations that [copper] has to overcome that fiber doesn’t. As speeds continue to increase, fiber technology continues to evolve,” he says.