Maximizing bandwidth utilization in access, aggregation, short-haul

As capital spending picks up again, significant investment is going into technology that can help service providers meet the challenge of aggregating and transporting heterogeneous traffic within short-haul networks. For access and aggregation portions of the network, the key to cost-effective network operation lies in maximizing the use of bandwidth; that's important for any networked environment, but especially for MANs, where a changing mix of different-sized access pipes and protocols is terminated, aggregated, and transported.

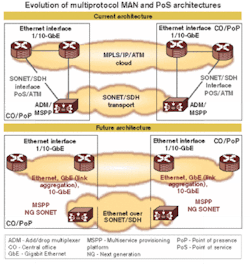

Traditionally, Ethernet has been used for internal links between point-of-service aggregation systems within the central office or point-of-presence, while SONET/SDH has been a dominant factor in transport over public infrastructures. The rise of IP-based infrastructures using MPLS and the emergence of Ethernet over SONET/SDH are making it practical to map native Ethernet traffic directly over the cloud, setting the stage for seamless transitions between end-user traffic aggregation and backbone transport (see Figure 1).

As they transition to converged IP/MPLS networks, service providers will need flexible mechanisms to support the migration of an increasingly complex variety of services without forklift abandonment of their existing SONET/SDH investments. Many incumbent carriers, wary of a wholesale shift in their profitable legacy services onto a converged IP/MPLS network, will run voice circuits and leased lines directly over their optical transport networks. As such, new-generation systems such as multiservice provisioning platforms must seamlessly map user-level traffic into SONET/SDH and DWDM transport networks, while maintaining granularity, flexibility, and service-level agreements.

System and line-card architectures that can handle any protocol on any port at any time and dynamically "right size" the access pipes to match real world user requirements may represent the ultimate answer. Not only is port-by-port, card-by-card flexibility enhanced, but also overall system utilization is greatly improved. Instead of having to juggle line cards dedicated to a particular LAN or SAN protocol such as Gigabit Ethernet (GbE), Fibre Channel (FC), or Escon, carriers can soft-configure ports to meet current user demands.

Multifunctional programmable line cards offer major advantages for vendors and carriers. Line-card vendors can better leverage their development resources, shorten time-to-market, and streamline inventory management by designing and supporting fewer designs. Carriers can also reduce their overall spare inventories as well as significantly trim operational expenses by eliminating unnecessary truck rolls and field-technician time previously needed for swapping out different line cards in remotely deployed systems.

In addition, as they migrate to IP-based network infrastructures while maintaining their legacy TDM environments, carriers can appropriately match SONET/SDH payloads on the access side to individual user bandwidth requirements, regardless of the back-end transport methods.

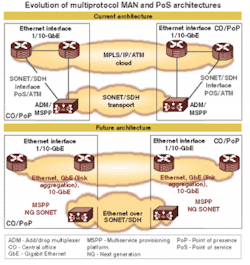

Achievement of the "any protocol on any port" objective requires a whole ecosystem of interrelated technologies, including network-processor units, traffic managers, switch fabric, and serial multiplexers, all of which need to work seamlessly together. One pivotal technology for maximizing bandwidth utilization in multiservice networks is the use of virtual concatenation (VC) integrated within multiprotocol mapper devices that provide adaptable bridges between small-pipe-line interfaces and big-pipe transport links.

The integration of multiprotocol VC mapper functionality into all of the line interfaces on every card allows carriers to flexibly provision end-user services with a high degree of granularity while leveraging the full bandwidth of higher-bandwidth pipes for transport. For example, many individual end-user STS-1 (52-Mbit/sec) or STS-3 (156-Mbit/sec) channels can be virtually concatenated for transport over OC-48 (2.5 Gbits/sec) or OC-192 (10 Gbits/sec), without wasting any of the available bandwidth.
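The bandwidth argument can be illustrated with a little arithmetic. The sketch below compares carrying a Gigabit Ethernet client in a virtually concatenated group against a single contiguous STS-48c pipe. The payload capacities are the commonly quoted SONET figures rather than numbers from this article, and the function names are purely illustrative.

```python
import math

# Approximate payload capacities in Mbit/s (standard SONET figures,
# assumed here; the article quotes only rounded channel rates).
STS1_PAYLOAD = 48.384
STS3C_PAYLOAD = 149.76
STS48C_PAYLOAD = 2396.16

def vcg_size(client_mbps, member_mbps):
    """Smallest number of members whose combined payload covers the client rate."""
    return math.ceil(client_mbps / member_mbps)

def efficiency(client_mbps, member_mbps, members=1):
    """Fraction of the provisioned payload actually used by the client."""
    return client_mbps / (member_mbps * members)

GBE = 1000.0  # Gigabit Ethernet client rate in Mbit/s

n_sts1 = vcg_size(GBE, STS1_PAYLOAD)    # 21 members
n_sts3c = vcg_size(GBE, STS3C_PAYLOAD)  # 7 members

print(f"STS-1-{n_sts1}v:  {efficiency(GBE, STS1_PAYLOAD, n_sts1):.0%} used")
print(f"STS-3c-{n_sts3c}v: {efficiency(GBE, STS3C_PAYLOAD, n_sts3c):.0%} used")
print(f"STS-48c:    {efficiency(GBE, STS48C_PAYLOAD):.0%} used")
```

Under these assumptions, the virtually concatenated groups use well over 90% of the capacity they reserve, while the contiguous STS-48c pipe leaves more than half of its payload idle.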

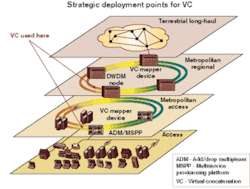

The use of VC technology can provide a seamless bridge from the MAN edge to aggregate a wide range of end-user access traffic across residential and enterprise environments (see Figure 2). VC also can be strategically deployed within short-haul transport rings in the MAN backbone to map individual user channels throughout entire metro regions, without paying the overhead of intervening protocol conversions.

Two of the key challenges for successful implementation of VC involve minimizing latency and managing skew. De-skew is critical because of the need to compensate for differential delay among multiple received paths belonging to a common virtual concatenation group (VCG). Managing latency is also vital for achieving performance expectations, especially if heterogeneous transport networks mix latency-sensitive traffic such as FC, Escon, or voice over IP with less latency-susceptible services such as GbE or digital video broadcast (DVB).

The member paths of each VCG invariably require a certain amount of de-skew. The amount of memory required for the de-skew compensation will vary, however, based on whether the paths are co-routed or differentially routed. Co-routed paths typically require a significantly smaller amount of memory than differential routing, but mandating that all VCGs use co-routing can potentially limit VC deployment flexibility. Designers need to offer VC capabilities that can efficiently support either co-routing or differential routing. Therefore, the optimal chip-level approach to VC de-skew is to use a small amount of internal memory with the option for interfacing to additional off-chip de-skew memory in the form of inexpensive double data rate (DDR) synchronous dynamic random access memory (SDRAM).

For co-routed paths, delay differences are primarily incurred in the pointer processors within each SONET/SDH network element, such as add/drop multiplexers or synchronous crossconnects, while the fiber itself has a negligible contribution to delay. Analysis available through the European Telecommunications Standards Institute has shown a worst case of 10 bytes of delay at each pointer processor. In relatively large rings, an equal distribution of negative and positive adjustments will result in a typical profile with approximately 5 bytes of delay between co-routed paths. That means co-routed VC traffic can be effectively deployed over metro SONET/SDH or DWDM rings that span as much as 2,000–3,000 km, with as little as 90 bytes of on-chip de-skew memory for each VC mapper device. Even for networks with a large number of differentially routed paths, experience has shown that a relatively small amount of off-chip memory is sufficient for de-skew. For instance, 15 msec of de-skew memory (4 × 32 DDR SDRAM devices) can compensate for as much as 3,000 km of difference in length between diversely routed paths.
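The figures above can be cross-checked with a back-of-the-envelope calculation. The sketch below assumes a fiber propagation delay of roughly 5 µsec per kilometer and a 10-Gbit/sec aggregate; both are round-number assumptions, not values stated in the article. Reading the 90-byte budget as 18 pointer processors at the typical 5 bytes each is likewise only one plausible interpretation.

```python
FIBER_DELAY_US_PER_KM = 5.0  # assumed typical propagation delay in fiber

def corouted_skew_bytes(pointer_processors, bytes_per_node=5):
    """Accumulated skew for co-routed paths, using the typical per-node figure."""
    return pointer_processors * bytes_per_node

def diverse_route_skew_ms(length_difference_km):
    """Skew in milliseconds caused purely by a difference in fiber length."""
    return length_difference_km * FIBER_DELAY_US_PER_KM / 1000.0

def buffer_megabytes(skew_ms, line_rate_gbps):
    """Memory to hold skew_ms of traffic; ms * Gbit/s gives Mbit, / 8 gives MB."""
    return skew_ms * line_rate_gbps / 8

print(corouted_skew_bytes(18))          # 90 bytes
print(diverse_route_skew_ms(3000))      # 15.0 msec
print(buffer_megabytes(15.0, 10))       # 18.75 Mbytes
```

Under these assumptions, a 3,000-km length difference corresponds to the 15 msec quoted above, which at 10 Gbits/sec works out to under 20 Mbytes of buffering.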

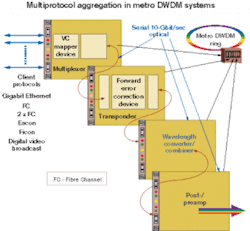

For most efficient implementation, advanced multiprotocol VC mapper devices are designed to interface directly with optics or external forward error correction devices using standards such as STS-192/STM-64 or 4 × STS-48/STM-16 mode.

A VC mapper works in conjunction with transponder and wavelength converter/combiner functions to interface diverse client protocols (GbE, FC, Escon, Ficon, or DVB) directly into 10-Gbit/sec optical transport streams via DWDM (see Figure 3).

By integrating multiprotocol service availability into every port on each line card and leveraging VC for channel granularity, service providers can achieve optimal bandwidth utilization on the access side and transport side of the aggregation point. Incremental TDM time slots can be provisioned to meet the real-world payload requirements of individual users without reconfiguring or provisioning new physical circuits. By using the link capacity adjustment scheme (LCAS), providers can dynamically increase the amount of bandwidth to accommodate a user's growing requirements simply by adding more TDM time slots. On the transport side, carriers can make full use of SONET/SDH or DWDM bandwidth throughout the MAN, without paying a penalty for variations in the traffic mix or having to reconfigure and re-balance the network as the protocol mix grows and changes.
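The idea of growing a user's pipe by adding time slots can be shown with a toy model. The class below is a hypothetical illustration of resizing a virtual concatenation group in whole-member steps. It does not model the signaling that a real link capacity adjustment implementation exchanges between the two ends, and the STS-1 payload figure is the commonly quoted value rather than one taken from the article.

```python
import math

class VirtualConcatenationGroup:
    """Toy model of a VCG whose capacity changes in whole-member steps."""

    def __init__(self, member_mbps, members=1):
        self.member_mbps = member_mbps
        self.members = members

    @property
    def capacity_mbps(self):
        return self.member_mbps * self.members

    def resize_for(self, demand_mbps):
        """Set the member count to cover demand; return members added (+) or removed (-)."""
        needed = max(1, math.ceil(demand_mbps / self.member_mbps))
        delta = needed - self.members
        self.members = needed
        return delta

STS1_PAYLOAD = 48.384  # Mbit/s, assumed standard figure

group = VirtualConcatenationGroup(STS1_PAYLOAD, members=3)
added = group.resize_for(300.0)    # user grows to 300 Mbit/s
print(added, group.members)        # 4 members added, 7 in total
removed = group.resize_for(100.0)  # demand falls back to 100 Mbit/s
print(removed, group.members)      # 4 members released, 3 remain
```

The physical port and circuit stay the same throughout; only the number of time slots assigned to the group changes.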

Armin Schulz is senior product marketing manager and Bob Brand is senior solutions architect at Applied Micro Circuits Corp. (AMCC—San Diego).