The marriage of optical switching and electronic routing

Optical switching enables centralized routers to handle traffic at the terabit level with speed and efficiency.

TOM McDERMOTT, Chiaro Networks Ltd.

The continuing growth of IP traffic and the decreasing cost per bit-mile of optical transmission have created bottlenecks in the network. In the core, just as large optical-switching elements have started to appear to handle optical circuits, large, centralized IP routers will appear because they're an extremely efficient solution to IP routing. A variety of technologies and issues influence the architecture of these network elements.

To transport terabits around the country and around the world, new optical technologies, including DWDM and high-speed TDM, have emerged to enable the economical transport of enormous bandwidth over singlemode optical fibers. As a result, individual optical links can sustain the traffic needed to support the continuing growth of IP data. High-power, low-noise optical amplifiers, namely erbium-doped fiber amplifiers (EDFAs), together with pulse-shaping technologies, mean that high-bit-rate optical signals do not require electronic regeneration except on the very longest fiber spans. New fibers with larger effective core areas, which reduce nonlinear effects, allow a large number of high-bit-rate signals to be wavelength-multiplexed onto a single fiber. Thus, it is becoming affordable to construct links that can support terabits of capacity between routing and switching centers.

Network problems

The bottleneck at the core of the growing network is at the junction points of these fiber bundles: the switching and routing centers. With terabit links, a tremendous amount of data will be converging into a single central office (CO) (see Figure 1).

Scalability of the infrastructure (crossconnect or router) within the office continues to pose problems. New routers emerge only to be swamped with traffic within months, requiring the router to be completely changed out, sometimes within one year of service. A band-aid solution is to cluster several routers (or crossconnects) together. However, clustering is not a good long-term solution to the scalability problem, because a cluster of crossconnects, for example, requires interconnecting links between the crossconnects. As the number of switches in the cluster grows beyond about four or five, the interconnecting links consume most of the ports. Clustered routers have the same problem.
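
The port-consumption claim can be checked with a toy port budget. The sketch below is a hypothetical model, not a description of any product: every switch in a full-mesh cluster has the same number of ports and reserves mesh links to each peer, sized either for uniform traffic or for a worst-case hot spot in which all of its customer traffic heads to a single peer. The function name and the 64-port figure are illustrative assumptions.

```python
import math


def external_ports_per_switch(total_ports: int, n_switches: int, worst_case: bool) -> int:
    """Largest number of customer-facing ports E each switch in a cluster can keep.

    Hypothetical full-mesh model: each switch dedicates L ports to each of its
    (n_switches - 1) peers, so E + (n_switches - 1) * L <= total_ports must hold.
      - uniform traffic: each peer receives about 1/n of the load, so L = ceil(E / n)
      - worst-case hot spot: all external traffic may head to one peer, so L = E
    """
    best = 0
    for e in range(total_ports + 1):
        per_peer = e if worst_case else math.ceil(e / n_switches)
        if e + (n_switches - 1) * per_peer <= total_ports:
            best = e
    return best


if __name__ == "__main__":
    ports = 64  # hypothetical ports per switch
    for n in range(1, 9):
        e = external_ports_per_switch(ports, n, worst_case=True)
        lost = 1 - e / ports  # share of each switch's ports not available to customers
        print(f"{n} switches: {n * e} usable ports of {n * ports} ({lost:.0%} not customer-facing)")
```

Under the worst-case assumption a four-switch cluster keeps only a quarter of its ports for customers, and a five-switch cluster less than a fifth. With perfectly uniform traffic the loss is smaller, but it still approaches half of all ports as the cluster grows.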

Additionally, as more routers are clustered, IP traffic must transit more and more devices, so the latency (the delay of IP packets) and jitter (delay variance) of the cluster grow quickly. Clustered solutions are also prone to the hot-spot problem, in which one of the small routers in a cluster is overwhelmed by temporary traffic dynamics in the network even though the total load does not exceed the combined node capacity. This swamping effect also increases the delay through the saturated router. The extra delay is not very noticeable with best-effort traffic, but it is very detrimental to real-time traffic. Ultimately, a cluster of routers becomes difficult to manage because the number of routes, alternate routes, and protection routes within the network grows quickly. That becomes an administrative problem, as the route tables are no longer simple to administer.
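
The hot-spot effect can be illustrated with the textbook M/M/1 queue, whose mean time in system is the service time divided by (1 - utilization). Treating each router hop as an independent M/M/1 queue is a strong simplification (real IP traffic is burstier), and the loads below are hypothetical; the point is only that delay adds up hop by hop and explodes at a saturated router.

```python
def mm1_delay(service_time: float, utilization: float) -> float:
    """Mean time in an M/M/1 queue (waiting plus service)."""
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be in [0, 1)")
    return service_time / (1 - utilization)


def path_delay(service_time: float, utilizations: list[float]) -> float:
    """Sum of per-hop M/M/1 delays, treating every hop as an independent queue."""
    return sum(mm1_delay(service_time, u) for u in utilizations)


if __name__ == "__main__":
    t = 1.0  # delays measured in units of one packet service time
    print("one hop at 50% load:", path_delay(t, [0.5]))
    print("three hops at 50% load:", path_delay(t, [0.5, 0.5, 0.5]))
    print("three hops, one hot spot at 95%:", path_delay(t, [0.5, 0.95, 0.5]))
```

With these assumptions, three hops at 50% load cost three times the delay of one hop, and a single router running at 95% makes the three-hop path four times slower than the all-50% path, even though the average load across the cluster is modest.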

Large, centralized router

The trend in crossconnects is toward large optical crossconnects based on micro-electromechanical systems (MEMS) for core node protection and grooming of DWDM traffic. Similarly, large, centralized routers are an efficient way to solve the bottleneck problem: they avoid the hot-spot problems of distributed routers, eliminate clustering problems, and permit global scheduling. A centralized (single-hop, globally arbitrated), synchronous, large nonblocking switch fabric has the best latency and throughput performance of all router topologies. It also scales better than a clustered system, and it results in less complicated system software for the network element.
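
Global scheduling in a single-stage fabric amounts to choosing, every timeslot, a set of input-to-output connections in which no input and no output is used twice. The sketch below uses a simple greedy maximal matching with a rotating starting input; it is an illustrative stand-in, not the arbitration algorithm of any particular router, and the request format is an assumption.

```python
def greedy_match(requests: dict[int, list[int]], n_inputs: int, start: int = 0) -> dict[int, int]:
    """One timeslot of centralized arbitration for a single-stage crossbar.

    requests maps each input port to the output ports it has packets queued for
    (for example, one virtual output queue per output). Inputs are visited in
    round-robin order beginning at `start`; each takes its first requested output
    that is still free. The result is a maximal (not necessarily maximum)
    matching: no input gets two outputs and no output hears from two inputs.
    """
    taken: set[int] = set()
    grants: dict[int, int] = {}
    for offset in range(n_inputs):
        i = (start + offset) % n_inputs
        for out in requests.get(i, []):
            if out not in taken:
                grants[i] = out
                taken.add(out)
                break
    return grants


if __name__ == "__main__":
    requests = {0: [1, 2], 1: [1], 2: [0]}
    for slot in range(3):
        print(slot, greedy_match(requests, n_inputs=3, start=slot % 3))
```

Rotating `start` from slot to slot keeps any one input from always winning. Published schedulers such as iSLIP refine the same idea with several request/grant/accept iterations to find larger matchings.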

In designing a practical architecture for eliminating the bottleneck at the core, it's important to consider the factors that affect a large, centralized, synchronous, nonblocking switch fabric. The central problem is interconnecting all the fibers into a single fabric.

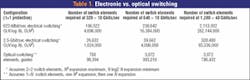

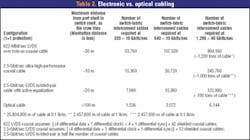

Traditionally, the outside plant fibers terminate on some type of electronic processing card (one card per wavelength per fiber). These cards are, in turn, connected to the switch fabric. Before the advent of optical-switch matrices, nonblocking electrical fabrics were not practical for terabits of capacity. Distance, loss, weight, crosstalk, reliability, and the large number of connections are some of the basic problems with electrical-switch interconnects. Tables 1 and 2 show the magnitude of the weight, distance, and interconnect scaling problems.

The trouble with copper

One key issue with electrically connecting terabits of traffic into a single switch fabric is the short reach of copper cables, caused by excessive electrical loss at high bit rates. Because of this loss, the line cards have to sit physically close to the switch fabric to keep the cables short. But it's difficult or impossible to fit enough line cards close enough to the fabric to actually connect terabits of capacity electrically. Weight is a problem too: the copper cables needed to connect a 50-Tbit router, even at their short loss-limited lengths, would weigh more than 2 million lb! It's simply not cost-effective to build a CO floor strong enough to hold this weight, even if it were possible to get enough line cards within range of the central fabric with current copper-cable technology.
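
The scale of the copper problem follows from simple arithmetic. Assuming 2.5-Gbit/sec serial lanes (the rate discussed later in this article), a 50-Tbit/sec fabric needs 20,000 lanes in each direction. The cable length and mass per meter in the sketch are placeholders chosen only to show how the total scales; they are not the data behind the 2-million-lb figure.

```python
import math


def serial_lanes(capacity_bps: float, lane_rate_bps: float) -> int:
    """Number of serial lanes needed to carry capacity_bps in one direction."""
    return math.ceil(capacity_bps / lane_rate_bps)


def cable_mass_kg(lanes: int, length_m: float, kg_per_lane_m: float) -> float:
    """Total copper mass when every lane is a cable run of the same length."""
    return lanes * length_m * kg_per_lane_m


if __name__ == "__main__":
    lanes = serial_lanes(50e12, 2.5e9)  # 50-Tbit/sec fabric fed by 2.5-Gbit/sec lanes
    # Both directions, line card to fabric. Length and mass per meter are
    # placeholder values, not measured data.
    mass = cable_mass_kg(2 * lanes, length_m=20.0, kg_per_lane_m=0.1)
    print(lanes, "lanes each way;", round(mass), "kg of cable under these assumptions")
```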

Another problem with copper is that crosstalk of all the high-speed electrical signals in such a small space becomes insurmountable. As the data rate goes up and the copper cables are brought closer and closer together, the problem rapidly becomes completely intractable.

The number of interconnects needed to tie together speed-limited electronic switching devices also creates reliability problems. As an electronic switch scales, the number of copper interconnects required for a nonblocking fabric becomes huge. The most reliable interconnection is on a single silicon die, but silicon power dissipation limits how many high-speed devices fit on one die. Interconnecting multiple dies lowers the data rate, increases power, and imposes distance limits, and the interboard connectors then become the limiting reliability factor. This problem grows rapidly with switch size.
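
One way to see why the interconnect count grows so quickly is to tile an N x N crosspoint switch from smaller k x k chips. In this simplified model (an assumption that ignores buses, daisy chains, and multistage alternatives), each input must reach every chip in its row and each output must collect from every chip in its column, so chip-boundary connections grow as 2N²/k. If any one connection failing takes down the fabric, the failure rates simply add; the per-connection FIT value below is a placeholder.

```python
def crosspoint_array(n_ports: int, chip_ports: int) -> tuple[int, int]:
    """Chips and chip-boundary connections for an N x N crossbar tiled from k x k chips.

    The array is (N/k) x (N/k) chips. Each of the N inputs fans out to the N/k
    chips in its row and each of the N outputs collects from the N/k chips in
    its column, so boundary connections total 2 * N * (N/k).
    """
    if n_ports % chip_ports:
        raise ValueError("n_ports must be a multiple of chip_ports")
    tiles = n_ports // chip_ports
    return tiles * tiles, 2 * n_ports * tiles


def series_fit(connections: int, fit_per_connection: float) -> float:
    """Failure rate in FIT (failures per 1e9 hours) if any single connection failing is fatal."""
    return connections * fit_per_connection


if __name__ == "__main__":
    for n in (64, 128, 256, 512):
        chips, links = crosspoint_array(n, chip_ports=16)
        # 1 FIT per connection is a placeholder, not a measured value.
        print(n, "ports:", chips, "chips,", links, "connections,",
              series_fit(links, fit_per_connection=1.0), "FIT")
```

Doubling the port count with the same chip quadruples both the chip count and the number of boundary connections, which is the rapid growth with switch size described above.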

It's possible to solve the density problem by paralleling a number of switch elements, but as they're spread apart, the distance limits of copper start to become a problem again. As the switch gets physically spread out, it's difficult to retain single-stage switching due to the inability to keep all the widely spaced elements in synchronization. Thus, copper interconnect has fundamental scalability limits. As the switch fabric scales, the copper links are simply not long enough to work.

Interconnecting fiber

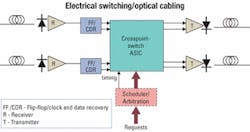

One approach is to interconnect the line cards to the electrical switch fabric optically. Fiber has very low loss, which solves the distance problem and allows the line cards to be located a significant distance from the fabric, although other limits, such as the speed of light, still bound the practical switch-to-line-card separation for time-sensitive applications like IP routing. In addition, photons are tightly confined to the core of an optical fiber and do not easily couple from one fiber to the next (otherwise the fiber would be lossy), so fiber interconnect also solves the crosstalk problem.
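
The speed-of-light limit is easy to quantify. Light in silica fiber travels at roughly c/1.47 (the group index used below is a typical value, not a specification), or about 4.9 ns per meter. If each packet must wait for a request to reach a central arbiter and a grant to come back, that round trip consumes fabric timeslots; the 100-ns slot is a hypothetical value.

```python
C_VACUUM_M_PER_S = 299_792_458.0


def fiber_delay_s(length_m: float, group_index: float = 1.47) -> float:
    """One-way propagation delay through fiber; 1.47 is a typical silica group index."""
    return length_m * group_index / C_VACUUM_M_PER_S


def round_trip_slots(length_m: float, slot_s: float, group_index: float = 1.47) -> float:
    """Request-to-arbiter plus grant-back delay, expressed in fabric timeslots."""
    return 2 * fiber_delay_s(length_m, group_index) / slot_s


if __name__ == "__main__":
    slot = 100e-9  # hypothetical 100-ns fabric timeslot
    for d in (10, 100, 1000):
        print(f"{d} m: {fiber_delay_s(d) * 1e9:.0f} ns one way, "
              f"{round_trip_slots(d, slot):.1f} slots round trip")
```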

If the switch fabric is spread out (a parallel architecture), the problem of synchronizing all the widely separated switch elements remains. This approach adds considerable power dissipation, but it solves the weight and distance limitations of electrical cables. Large, scalable switching systems are now converting to fiber intrasystem interconnects.

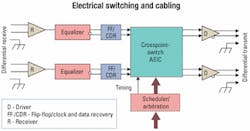

Advances in electronics

Recent advances permit electronic cable equalization at gigahertz rates, correcting for one impairment: copper skin-effect loss, which is proportional to the square root of frequency. As a result, low-voltage differential signaling (LVDS) twisted-pair cable designed for 622 Mbits/sec can be used at 2.5 Gbits/sec over distances of 15 to 30 m, depending on cable construction. While that extends the usable data rate of such cable by a factor of four and significantly reduces cable weight per unit of capacity, it still does not solve the crosstalk and distance problems.
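
Because skin-effect loss in dB scales with the square root of frequency, quadrupling the bit rate from 622 Mbits/sec to 2.5 Gbits/sec doubles the per-meter loss at the signal's Nyquist frequency. The sketch applies that scaling; it ignores dielectric loss, which grows linearly with frequency, and the 0.5-dB/m and 10-dB budget figures are hypothetical.

```python
import math


def nyquist_hz(bit_rate_bps: float) -> float:
    """Fundamental frequency of an alternating 1010... NRZ pattern."""
    return bit_rate_bps / 2


def skin_loss_db(loss_db_at_ref: float, ref_hz: float, f_hz: float) -> float:
    """Scale a loss value to a new frequency assuming pure skin-effect loss (~ sqrt(f))."""
    return loss_db_at_ref * math.sqrt(f_hz / ref_hz)


def reach_m(budget_db: float, loss_db_per_m: float) -> float:
    """Longest cable whose loss fits within the receiver (or equalizer) budget."""
    return budget_db / loss_db_per_m


if __name__ == "__main__":
    ref = nyquist_hz(622.08e6)   # OC-12 line rate
    new = nyquist_hz(2488.32e6)  # OC-48 line rate
    loss_ref = 0.5               # hypothetical dB per meter at the OC-12 Nyquist frequency
    loss_new = skin_loss_db(loss_ref, ref, new)
    print(f"{loss_new:.2f} dB/m at 2.5 Gbits/sec")
    print(f"reach with a 10-dB budget: {reach_m(10, loss_ref):.0f} m -> {reach_m(10, loss_new):.0f} m")
```

Without equalization the reach halves; an equalizer that compensates the extra square-root-of-frequency loss is what lets the slower-rated cable carry the faster signal.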

Skew between 2.5-Gbit/sec channels is an issue in parallel interconnection because of differences in cable length, cable propagation velocity, and printed-circuit trace length. The general solution is a de-skewing buffer that holds plus or minus several bytes on each channel. To do that successfully, the system must recognize channel-alignment symbols and operate the links at a rate higher than the payload data rate. Even if skew can be managed or overcome, cable distance limitations severely restrict the configuration and scalability needed to meet traffic demands and avoid bottlenecks.
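
A de-skewing buffer can be sketched as follows, assuming data is striped round-robin across lanes and each lane carries a recognizable alignment symbol. The symbol `"A"`, the idle filler `"x"`, and the buffer depth are hypothetical; a real link would typically use reserved control characters of its line code.

```python
def deskew(lanes: list[list[str]], align: str = "A", depth: int = 4) -> list[list[str]]:
    """Drop everything up to and including each lane's first alignment symbol.

    `depth` models the de-skewing buffer: lanes may disagree on where the
    alignment symbol arrives by at most `depth` symbols.
    """
    positions = [lane.index(align) for lane in lanes]  # ValueError if a lane never saw it
    if max(positions) - min(positions) > depth:
        raise ValueError("skew exceeds de-skewing buffer depth")
    return [lane[pos + 1:] for lane, pos in zip(lanes, positions)]


def reassemble(aligned: list[list[str]]) -> list[str]:
    """Undo round-robin striping: byte k travelled on lane k % number_of_lanes."""
    width = min(len(lane) for lane in aligned)
    return [aligned[i][t] for t in range(width) for i in range(len(aligned))]


if __name__ == "__main__":
    # Three lanes, each arriving with a different delay ("x" = idle filler).
    lanes = [["x", "A", "d0", "d3"],
             ["A", "d1", "d4"],
             ["x", "x", "A", "d2", "d5"]]
    print(reassemble(deskew(lanes)))  # -> ['d0', 'd1', 'd2', 'd3', 'd4', 'd5']
```

The alignment symbols occupy slots that carry no payload, which is one reason the links must run faster than the data rate.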

Optical-fabric characteristics

Using an optical fabric for switching overcomes many of the problems encountered with electrical fabrics. An optical fabric allows serial transmission, is essentially "analog," requires high-speed receivers, and does not incur significant loss in very-short-reach intra-office applications. Serial transmission eliminates skew and its associated problems.

In addition, an optical fabric dramatically reduces the number of connectors and cables required while significantly reducing the power consumption of the switch-fabric input/output electronics. It also eliminates the extra set of optical-electrical and electrical-optical converters that connect the optics into and out of an electronic fabric. The "analog" nature of an optical fabric raises concerns about loss and crosstalk; however, it also means the fabric is independent of bit rate and format.

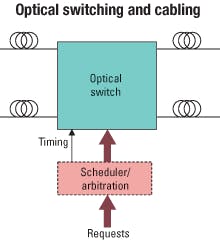

For IP routers, an optical fabric also requires high-speed optical receivers with special properties suited to switched traffic, such as amplitude adaptation and fast clock recovery. Also, while cable loss is not significant in intra-office applications, dispersion must be considered in some cases. To evaluate the use of an optical fabric, it's important to compare it with the alternatives, including electrical switching with electrical cabling and electrical switching with optical cabling (see Figures 2, 3, and 4).
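
Amplitude adaptation matters because consecutive packets through an optical fabric can come from different line cards over different optical paths, so they may arrive at different power levels. One simple approach, sketched here under that assumption, sets each packet's decision threshold halfway between the lowest and highest samples of its own preamble. Clock recovery is not modeled, and the sample values are made up.

```python
def adaptive_threshold(preamble: list[float]) -> float:
    """Decision threshold halfway between the lowest and highest preamble samples."""
    return (min(preamble) + max(preamble)) / 2


def slice_bits(samples: list[float], threshold: float) -> list[int]:
    """Decide 1 for samples above the threshold, 0 otherwise."""
    return [1 if s > threshold else 0 for s in samples]


def receive_packet(samples: list[float], preamble_len: int) -> list[int]:
    """Set the threshold from this packet's own preamble, then decode the payload."""
    threshold = adaptive_threshold(samples[:preamble_len])
    return slice_bits(samples[preamble_len:], threshold)


if __name__ == "__main__":
    strong = [0.1, 1.0, 0.1, 1.0] + [1.0, 0.1, 1.0]      # preamble + payload
    weak = [0.02, 0.2, 0.02, 0.2] + [0.2, 0.2, 0.02]
    print(receive_packet(strong, 4), receive_packet(weak, 4))
    print(slice_bits(weak[4:], 0.55))  # fixed threshold tuned for the strong packet
```

A fixed threshold tuned for the strong packet (0.55) reads every bit of the weak packet as 0, which is why the threshold has to adapt packet by packet.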

MEMS has solved the problem of large optical switching well in the crossconnect domain, but the challenge in IP routing has been to find an optical-switching technology one million times faster than MEMS: one that can switch in nanoseconds. A large nanosecond optical switch, based on an arrayed-waveguide/free-space optical technology, has been developed that provides both the required size and the required speed within a large IP router. Because no optical-electrical-optical conversion is required in the fabric itself, the system is simpler, requires less power, is format-independent, and is very scalable.
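
The need for nanosecond switching follows from the ratio of reconfiguration time to packet time. A 1,500-byte packet lasts 1.2 µsec at 10 Gbits/sec; if the fabric must reconfigure once per packet, a millisecond-class MEMS switch would leave the path idle almost all the time. The packet size, line rate, and switching times below are illustrative assumptions.

```python
def packet_time_s(packet_bytes: int, line_rate_bps: float) -> float:
    """Time to serialize one packet at the given line rate."""
    return packet_bytes * 8 / line_rate_bps


def fabric_efficiency(slot_s: float, reconfig_s: float) -> float:
    """Share of time a fabric path carries data if every slot pays one reconfiguration."""
    return slot_s / (slot_s + reconfig_s)


if __name__ == "__main__":
    slot = packet_time_s(1500, 10e9)  # 1,500-byte packet at 10 Gbits/sec
    for name, t in (("nanosecond switch (10 ns)", 10e-9), ("MEMS-class switch (10 ms)", 10e-3)):
        print(f"{name}: {fabric_efficiency(slot, t):.6f}")
```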

Electronic routing

Standard Layer 3 IP routing provides many benefits compared with circuit switching when the network carries mostly IP packets. Layer 3 IP routers that use industry-standard packet-over-SONET interfaces and standardized Open Shortest Path First (OSPF), Intermediate System to Intermediate System (IS-IS), Border Gateway Protocol 4 (BGP-4), MPLS, and Generalized MPLS routing fit easily into existing carrier networks without wholesale change-out of existing technology.

An IP router is extremely efficient at packing a lot of best-effort IP traffic onto transmission facilities because transmission control protocol (TCP) sessions dynamically increase their sending rates until the transmission links are loaded to nearly 100% of capacity. Circuit-switched links simply cannot be rearranged fast enough to keep up with these dynamic network flows.
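
The way TCP fills a link can be illustrated with a single additive-increase/multiplicative-decrease (AIMD) flow, stepped once per round trip. This is a deliberately simplified model: one flow, loss only when the window exceeds the link's pipe plus its buffer, and no slow start or timeouts. The pipe and buffer sizes (in packets) are hypothetical.

```python
def aimd_utilization(pipe_pkts: int, buffer_pkts: int, rtts: int = 10_000) -> float:
    """Simulate one TCP-like AIMD flow on a bottleneck link, one step per round trip.

    cwnd grows by one packet per RTT (additive increase) and halves when it
    exceeds what the link plus its buffer can hold (multiplicative decrease).
    Returns the mean fraction of link capacity used.
    """
    cwnd = 1.0
    carried = 0.0
    for _ in range(rtts):
        carried += min(cwnd, pipe_pkts)  # the link cannot carry more than its pipe
        if cwnd > pipe_pkts + buffer_pkts:
            cwnd /= 2  # loss detected: back off
        else:
            cwnd += 1  # no loss: probe for more bandwidth
    return carried / (rtts * pipe_pkts)


if __name__ == "__main__":
    print("buffer = pipe:", round(aimd_utilization(100, 100), 3))
    print("no buffer:   ", round(aimd_utilization(100, 0), 3))
```

With a buffer equal to the pipe, the window never falls below the pipe size once it has ramped up, so the link stays essentially full; with no buffer, the sawtooth averages about 75% utilization. Many desynchronized flows smooth the sawtooth, which is how aggregate TCP traffic approaches full link loading.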

Large, scalable, nonblocking IP routers, when designed correctly, also minimize the number of router hops (compared with clustered router solutions). Thus, they provide good IP packet latency, can support excellent delay and jitter performance (as real-time traffic will require), and can restore traffic reasonably rapidly after loss events. The 1+1 and 1:N protected SONET interfaces on a router can provide sub-50-msec protection switching if needed, fully compatible with existing SONET equipment.
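
The capacity cost of the two SONET protection schemes, and the basic decision a 1:N controller makes, can be sketched as follows. The lowest-numbered-line-wins rule is a hypothetical simplification; real SONET automatic protection switching also ranks requests by type (for example, signal fail over signal degrade) and coordinates with the far end.

```python
from typing import Optional


def spare_fraction(scheme: str, n_working: int = 1) -> float:
    """Protection capacity as a fraction of working capacity."""
    if scheme == "1+1":
        return 1.0  # every working line has its own dedicated, bridged copy
    if scheme == "1:N":
        return 1.0 / n_working  # one protection line shared by N working lines
    raise ValueError(f"unknown scheme {scheme!r}")


def one_to_n_select(failed: set[int], n_working: int) -> Optional[int]:
    """Choose which failed working line is switched onto the single protection line.

    Hypothetical rule for illustration: the lowest-numbered failed line wins;
    other failed lines stay down until the protection line is free again.
    """
    candidates = sorted(ch for ch in failed if 0 <= ch < n_working)
    return candidates[0] if candidates else None


if __name__ == "__main__":
    print(spare_fraction("1+1"), spare_fraction("1:N", n_working=4))
    print(one_to_n_select({3, 1}, n_working=4))
```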

Just as electrically based broadband crossconnects have migrated to optical crossconnects with the advent of MEMS optical-switching technology, electronic IP routers will migrate to IP core optical routers with the recent advent of fast, large optical-switching technology.

Switching/routing

A large, centralized router that combines optical switching and electronic routing can help eliminate the bottleneck caused by the increasing convergence of IP, data, and voice traffic in the network. Optical interconnects overcome the physical problems of connecting line cards to a central electrical fabric by removing the weight and distance limitations of copper. Optical switching enables the centralized router to handle traffic at the terabit level with speed and efficiency.

Electronic processing allows for efficient and standards-compliant IPv4 (and IPv6) routing. With electronic routing, headers can be easily updated, packets can be managed in a sophisticated manner, and IP links can be efficiently loaded using TCP.
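
Header updates at every hop include decrementing the IPv4 TTL, which changes the header checksum. RFC 1624 gives an incremental update, HC' = ~(~HC + ~m + m'), that avoids re-summing the whole header. The sketch applies it to the 16-bit word holding TTL and protocol; the function names and the sample header (whose checksum is 0xB1E6) are illustrative.

```python
import struct


def ones_complement_sum16(data: bytes) -> int:
    """16-bit ones'-complement sum of big-endian words (data length must be even)."""
    total = 0
    for (word,) in struct.iter_unpack("!H", data):
        total += word
        total = (total & 0xFFFF) + (total >> 16)  # end-around carry
    return total


def header_checksum(header: bytes) -> int:
    """Full IPv4 header checksum, computed with the checksum field zeroed."""
    zeroed = header[:10] + b"\x00\x00" + header[12:]
    return ~ones_complement_sum16(zeroed) & 0xFFFF


def decrement_ttl(header: bytes) -> bytes:
    """Decrement TTL and patch the checksum incrementally (RFC 1624, Eqn. 3)."""
    ttl, proto = header[8], header[9]
    if ttl == 0:
        raise ValueError("TTL already zero")
    old_word = (ttl << 8) | proto        # m: old 16-bit word (TTL, protocol)
    new_word = ((ttl - 1) << 8) | proto  # m': new word
    old_csum = struct.unpack("!H", header[10:12])[0]
    s = (~old_csum & 0xFFFF) + (~old_word & 0xFFFF) + new_word  # ~HC + ~m + m'
    s = (s & 0xFFFF) + (s >> 16)
    s = (s & 0xFFFF) + (s >> 16)
    new_csum = ~s & 0xFFFF
    return header[:8] + bytes([ttl - 1]) + header[9:10] + struct.pack("!H", new_csum) + header[12:]


if __name__ == "__main__":
    hdr = bytearray(struct.pack("!BBHHHBBH4s4s", 0x45, 0, 60, 0x1C46, 0x4000, 64, 6, 0,
                                bytes([172, 16, 10, 99]), bytes([172, 16, 10, 12])))
    hdr[10:12] = struct.pack("!H", header_checksum(bytes(hdr)))
    out = decrement_ttl(bytes(hdr))
    # The incrementally patched checksum should match a full recomputation.
    print(out[8], hex(struct.unpack("!H", out[10:12])[0]), hex(header_checksum(out)))
```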

Tom McDermott is vice president for the office of technology at Chiaro Networks Ltd. (Richardson, TX). He can be reached via the company's Website, www.chiaro.com.