The impact of IP traffic patterns on today's backbone networks

The successful search for the right architecture for data-centric networks may rely on new technologies currently on the horizon.

Internet applications are the fastest-growing segment of a service provider's network traffic. This growth is expected to continue well into the next century. According to a 1999 report by industry analyst firm Ryan Hankin Kent (RHK Inc.--South San Francisco), "The growth of IP [Internet protocol] creates the prospect of a public network with 30 times the traffic of today's public network...in just four years." More importantly, the bandwidth required to service this traffic is expected to grow at an even faster pace.

Service providers and vendors are proposing a variety of new bandwidth-hungry services and applications based on IP, including virtual private networks, voice over IP, and robust new e-commerce applications. In addition, higher-speed access to the Internet is becoming increasingly popular, thanks to consumer availability of such broadband technologies as digital subscriber line and cable modems, which demand considerably more bandwidth than a 56K modem.

The explosive growth in the Internet's popularity, in turn, significantly affects the origins and destinations of long-haul network traffic. Internet users trigger information flow between nodes thousands of miles apart with a simple click of a mouse. Distance is not a restriction or even a consideration for most people using the Internet, but it introduces a great deal of unpredictability into the network, which makes forecasting growth all the more difficult and scalability a critical concern.

All of these examples serve to heighten the impact that IP is having and will continue to have on long-haul backbone networks. When faced with the "double-jeopardy" of tremendous growth in both the amount of traffic and its unpredictability, network planners will find themselves looking for architectural alternatives more suitable for data.

Traditionally, voice and private-line traffic patterns were relatively stable, with a predictable annual growth pattern, so it was far easier to plan and grow network capacity. Networks carrying large amounts of IP traffic do not share these characteristics. For example, Figure 1 illustrates the differences in traffic patterns between a traditional, circuit-switched network and an IP-based network. Note that the number of hops between ingress and egress points of traffic is three times greater for IP networks than it is for traditional voice networks. The rise in the number of hops greatly increases latency and reduces throughput.

This same scenario is being played out with little variation on long-haul networks worldwide. Currently, all claims made for optimized and/or widely used network architectures (such as bidirectional line-switched ring or crossconnect-based architectures) are based on a target network. Growth for this stable target network is forecast, and changes are made based on these forecasts. However, if the actual growth differs from the predicted growth (in volume and/or pattern), as often happens with IP traffic, all advantages claimed for this network are quickly neutralized.

Another issue with IP-centric traffic patterns is that of network churn. Similar to the issue of scalability, but better quantified, churn is defined as the movement of network traffic from one part of the network to another. If the forecasted traffic differs widely from the actual movement of traffic, it greatly affects network planning. For example, if Span-A is predicted to have an overbuild of 5x and Span-B 10x and, in reality, the opposite is true, the net effect is traffic from Span-B "moving" to Span-A. Again, tremendous strain is put on the network's resources to cope with this unforeseen consequence.
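The Span-A/Span-B example can be made concrete with a few lines of arithmetic. The sketch below is only an illustration: the 5x and 10x multipliers come from the example above, while the base load of 10 traffic units per span and the helper name `capacity_gap` are assumptions introduced here.

```python
# Illustration of network churn: forecast vs. actual growth per span.
# The 5x and 10x multipliers are from the article's example; BASE_LOAD is an
# assumed figure chosen only to make the arithmetic concrete.

def capacity_gap(base_load, forecast_multiplier, actual_multiplier):
    """Return built capacity minus actual demand (negative means a shortfall)."""
    built = base_load * forecast_multiplier
    demand = base_load * actual_multiplier
    return built - demand

BASE_LOAD = 10  # assumed traffic units carried on each span today

forecast = {"Span-A": 5, "Span-B": 10}
actual = {"Span-A": 10, "Span-B": 5}  # the opposite of the forecast

gaps = {
    span: capacity_gap(BASE_LOAD, forecast[span], actual[span])
    for span in forecast
}

for span, gap in gaps.items():
    status = "shortfall" if gap < 0 else "stranded surplus"
    print(f"{span}: {abs(gap)} units of {status}")
```

Under these assumptions the total capacity built equals the total demand, yet Span-A is short by exactly the amount that sits idle on Span-B. The forecast was right in aggregate and wrong in placement, which is the effect churn describes.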

The most common architecture used today, the Synchronous Optical Network (SONET) ring, is well suited for predictable voice traffic. But when faced with a large influx of data traffic, with all the problems just discussed, this model is no longer a viable solution. Clearly, as increasing amounts of data traffic begin to run across networks, service providers will need to deploy a new architecture that can handle these demands.

As might be expected, multiple architectures have been proposed, each with its own set of advantages and disadvantages. Surprisingly, the most appropriate architecture for an IP-centric backbone is one that is simplified, rather than more complex--a point-to-point, diverse routing architecture. While this architecture is not widely used for long-haul backbone networks because of the tremendous line costs involved, new photonic technologies will make it a preferred option in the near term. Its ability to scale based solely on the actual traffic growth from point to point makes it immune to unpredictability and thus more desirable for current backbone networks.

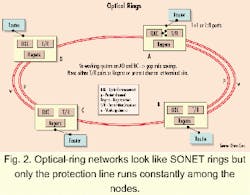

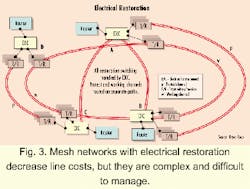

Although there are many different architectures for long-haul backbones, there are four models considered to be the primary alternatives to the traditional SONET ring today--optical rings, electrical restoration, Layer 3 restoration, and SONET 1+1 restoration. Each has its merits and drawbacks, depending on the nature of the traffic on the network. We will examine these architectures assuming that the majority of service providers' backbone networks will be data-centric and thus susceptible to unpredictable growth and network churn.

Figure 3 depicts a mesh-type architecture based on electrical restoration. Notice that each node has multiple paths leading to and from each separate node. This structure allows line costs to be reduced significantly, since many of the redundant protection lines (that don't generate revenue) can be eliminated. Despite this benefit, this architecture is extremely complex to design and difficult to manage. In addition, cost per node is raised significantly, as electrical crossconnects (EXCs) and optoelectronic conversions are required at all nodes. This model also doesn't handle unexpected growth as well as some other alternatives, as this architecture requires network-wide capacity planning.

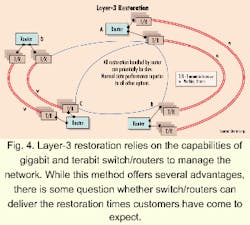

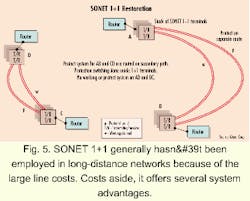

The third architecture, illustrated in Figure 4, is similar to that of electrical restoration but relies on new Layer 3 technologies to handle restoration. This architecture has all the benefits associated with electrical restoration, including the elimination of redundant protection. In addition, this architecture avoids some of the complexity of electrical restoration, since there is no need for EXCs. In this case, the router positioned at each node handles all restoration.

That brings us to the last of the more conventional architectures, as well as one that is usually not considered for use in long-haul backbones--SONET 1+1 (see Fig. 5). This architecture has multiple benefits. Because the node is greatly simplified, cost per node is very low. In addition, restoration is extremely fast. Most importantly, this architecture provides immunity from unpredictable traffic growth, as network planners are able to scale the network on a point-to-point basis based on actual traffic patterns.

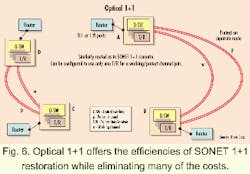

However, SONET 1+1 also incurs very high line costs, especially when considering the immense distances that a long-haul backbone network would be required to cross. These line costs are due to the large number of expensive optoelectronic regenerators that must be added to the network to make delivery of this data possible. Therefore, this model hasn't generally been considered for the extremely long-haul, data-centric backbone networks being deployed today.

Each of these architectures has associated drawbacks that would make any network planner hesitate. But new technologies that have recently been developed make a new type of architecture possible (see Fig. 6). Optical 1+1 provides all of the benefits associated with SONET 1+1, but, through a combination of terabit routers and advanced photonic networking equipment, eliminates the huge line costs associated with a SONET 1+1 architecture. In this scenario, new photonic networking equipment allows for the delivery of data over extremely long distances, which practically eliminates the need for regenerators to be placed in the backbone. These regenerators often make up as much as 40% of the cost of a backbone network. By providing such ultra-long-reach delivery at such a low price point, the optical 1+1 architecture provides the best of all worlds. The fastest restoration, significantly reduced node costs, and the ability to scale are all available, provided the system has the reach necessary to eliminate the need for regenerators.
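The link between optical reach and regenerator count can be sketched with simple arithmetic. The example below is a rough illustration, not a planning tool: the 3,000-km route length, the 600-km and 3,000-km reach figures, the assumption that both diverse 1+1 paths are the same length, and the function name `regenerators_needed` are all hypothetical values introduced here.

```python
import math

def regenerators_needed(route_km, reach_km):
    """Regenerator sites on one path: one each time the signal hits its
    reach limit before arriving at the far-end terminal."""
    return max(0, math.ceil(route_km / reach_km) - 1)

# All figures below are assumptions for illustration only.
ROUTE_KM = 3000               # assumed length of each diverse path
CONVENTIONAL_REACH_KM = 600   # assumed reach of a conventional system
ULTRA_LONG_REACH_KM = 3000    # assumed reach of an ultra-long-reach system
PATHS = 2                     # 1+1 protection uses two diverse paths

conventional = PATHS * regenerators_needed(ROUTE_KM, CONVENTIONAL_REACH_KM)
ultra_long = PATHS * regenerators_needed(ROUTE_KM, ULTRA_LONG_REACH_KM)

print(f"Conventional reach: {conventional} regenerator sites per wavelength")
print(f"Ultra-long reach:   {ultra_long} regenerator sites per wavelength")
```

With these assumed numbers, the conventional system needs four regenerator sites on each path, or eight per protected wavelength, while the ultra-long-reach system needs none. Because a 1+1 design doubles every path, any regenerator saved is saved twice.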

To make this architecture a viable option, service providers will have to carefully consider their choice of optical-networking equipment. Today, there are no systems on the market that can provide the capacity and reach needed to sustain the optical 1+1 architecture--at least not at a price point that service providers will be able to swallow. Today's OC-192 systems have the capacity, but not the reach. The opposite is true for long-haul OC-48 systems. While it may be tempting to use OC-48 systems as an interim solution, 10-Gbit/sec wavelength rates have been shown to achieve the lowest cost per bit while maximizing fiber capacity. To make a long-haul optical 1+1 architecture a reality, 10-Gbit/sec-based dense wavelength-division multiplexing systems with ultra-long-reach transport capability will be necessary. Systems that provide the reach necessary for enabling this type of architecture will soon be commercially available.

Provided that both systems have the same reach, service providers ultimately will eschew OC-48 or 2.5-Gbit/sec systems in favor of OC-192 systems because of the cost and capacity benefits associated with the latter choice. To support the same capacity as 10-Gbit/sec-based systems, systems based on 2.5 Gbits/sec require four times the number of wavelengths. Assuming that a vendor wanted to design such a system without going to more costly amplifier designs (which would support more wavelengths), the channel spacing of the 2.5-Gbit/sec-based system would need to be one-quarter as wide. This greatly increases the cost of filtering in the transmission line and also increases nonlinear effects such as four-wave mixing and cross-phase modulation.
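The wavelength-count argument is straightforward arithmetic, sketched below. The 2.5- and 10-Gbit/sec line rates come from the text; the 400-Gbit/sec target capacity, the 4,000-GHz usable amplifier band, and the function names are assumptions chosen only to produce round numbers.

```python
import math

def wavelengths_needed(capacity_gbps, rate_gbps):
    """Number of wavelengths required to carry the target capacity."""
    return math.ceil(capacity_gbps / rate_gbps)

def channel_spacing_ghz(amplifier_band_ghz, n_wavelengths):
    """Spacing available per channel if all wavelengths share one amplifier band."""
    return amplifier_band_ghz / n_wavelengths

# Assumed figures for illustration only.
TARGET_CAPACITY_GBPS = 400
AMPLIFIER_BAND_GHZ = 4000

OC48_RATE_GBPS = 2.5   # line rates from the article
OC192_RATE_GBPS = 10

n_oc48 = wavelengths_needed(TARGET_CAPACITY_GBPS, OC48_RATE_GBPS)
n_oc192 = wavelengths_needed(TARGET_CAPACITY_GBPS, OC192_RATE_GBPS)

spacing_oc48 = channel_spacing_ghz(AMPLIFIER_BAND_GHZ, n_oc48)
spacing_oc192 = channel_spacing_ghz(AMPLIFIER_BAND_GHZ, n_oc192)

print(f"OC-48:  {n_oc48} wavelengths at {spacing_oc48:g} GHz spacing")
print(f"OC-192: {n_oc192} wavelengths at {spacing_oc192:g} GHz spacing")
```

Whatever capacity and band are assumed, the ratios stay the same: four times as many wavelengths at 2.5 Gbits/sec, each confined to one-quarter of the spacing, as long as the amplifier band is held fixed.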

The cost advantages are clear. Higher-speed wavelengths are necessary to maximize the fiber throughput, minimize the floor space and power requirements, and offer the highest overall network availability. In addition, electronics are cheaper, especially when considering that four OC-48 transponders/regenerators are needed as opposed to just one for an OC-192 system. There are secondary issues that also need to be taken into account, such as having to dedicate resources to manage four times the number of wavelengths and to spare four times as many transmitters. Furthermore, new services such as 10-Gbit/sec Ethernet and OC-192c services can only be economically transported over 10-Gbit/sec-based backbone networks.

All network planning contains some element of risk, so it is understandable when an organization seeks to minimize that risk as much as possible with forecasts and stable, easy-to-predict architectures. In the coming years, however, the only thing that will be predictable is the fact that data traffic will continue to grow exponentially, introducing more unpredictability into the backbone.

To adapt to this new paradigm, service providers will be best served by planning a data-centric backbone that can cope with the demands of future applications and bursty traffic. This will be made possible through advanced optical-networking equipment, deployed in an architecture that can grow point to point based on actual traffic patterns. Not only is this possible, it is necessary. As IP traffic continues to proliferate over networks worldwide, this necessity will become readily apparent all too soon.

Sri Nathan is vice president of network architecture for Qtera Corp. (Boca Raton, FL), with primary responsibility for defining the long-term architecture of Qtera's next-generation photonic networking systems.