Strengthening the ASP business model

Auto-provisioning routers for optical networks based on channelized reserved services offer new opportunities for ASPs to capitalize on customer demands for mission-critical services.

Per Lembre

Dynarc

Carriers and Internet service providers (ISPs) are faced with new business opportunities as fiber deployment removes the major bandwidth obstacles in metropolitan areas. Fiber-optic architectures and open Internet Protocol (IP) platforms, combined with cost-effective storage, enable carriers to go after the application-service-provider (ASP) business model and target corporate customers with new revenue-generating services.

To capitalize on these opportunities, the service provider must take advantage of new advancements in optical IP networking architectures. By deploying auto-provisioning routers based on the channelized reserved-services (CRS) architecture, ISPs can speed up installation and guarantee broadband connections between clients and the ASP site. The ASP model, which allows clients to rent all kinds of computer and communications applications from the service provider, requires customized and flexible service-level agreements that guarantee very high uptime for these mission-critical services.

Although the overall infrastructure for ASP networks has yet to be defined, there are two major trends currently being established. First, optical fiber will be the physical infrastructure and, second, IP will be the dominant networking technology. Optical fiber is preferred due to the enormous bandwidth potential that makes it inherently futureproof. IP will be the networking technology of the future, with most of the communication services using it today and virtually all of tomorrow's new services adapting to it. In addition to delivering IP-over-fiber to a customer, service providers must also deliver guaranteed services in just the right quantity and quality, along with proper billing capability.

There are four important requirements for ASP networks to be most effective:

- The network should be developed with an IP-centric view. IP will evolve as the prevailing network layer, and the network must be optimized to realize current and future IP services.

- The network should offer high-speed manageability, enable traffic engineering, and efficiently bill for differentiated services. Today's Internet technology was not designed to carry streaming services such as telephony and videoconferencing. Introduction of these applications will require support of the IP layer and means of service differentiation.

- The network must have the ability to scale in terms of capacity, releasing the full potential of fiber-optic technology. Also, the network should be simple to design and forecast.

- The network must provide 24/7 availability as customers move more mission-critical information to the ASP site. Availability translates into resilience and ultra-fast restoration on one or more network layers.

Channels are portions of bandwidth from a sender to one or several receivers, built from two levels of multiplexing. First, different IP flows may be multiplexed on the same channel. Second, channels are multiplexed on the fiber or lambda when DWDM equipment is used. Channels are implemented via different link-layer technologies: some have been designed to encompass channels up front (DTM, ATM), while others need extensions to feature channel properties (SONET, Ethernet).

The first commercial CRS implementation uses a time-division multiplexing (TDM) scheme on a shared link with four main features of the channel mechanism (see Figure 1):

- Multirate. During the lifetime of a channel, the capacity can be changed. A channel is a dynamic resource that can be set up with bandwidth ranging from 512 kbits/sec, in quantum steps of 512 kbits/sec, up to the full capacity of the link. The number of time slots constituting a channel can be changed dynamically during its lifetime.

- Fast establishment. The channelized link layer is designed to create channels fast. Setting up a channel, or changing its capacity, happens quickly, on the order of a millisecond. This speed is due to the optimized signaling scheme in conjunction with the distributed architecture where resources are locally available at all nodes.

- Multicast. A channel can have multiple receivers, enabling true multicast operations (as well as unicast and broadcast), meaning any channel at a given time can have one sender and any number of receivers. Over the network, any number of IP multicast groups can be active simultaneously, which is important when distributing video or other multicast services.

- Simplex. Channels are simplex, making it possible to guarantee resources both upstream and downstream. Channels also support asymmetric traffic patterns with high bandwidth utilization.
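The multirate property described above can be illustrated with a minimal Python sketch. The 512-kbit/sec quantum comes from the text; the `Channel` class, the `slots_for` helper, and the link capacity used in the usage note are hypothetical names and values chosen for illustration, not part of any CRS product interface.

```python
import math

SLOT_KBPS = 512  # capacity quantum stated in the article


def slots_for(requested_kbps: int, link_kbps: int) -> int:
    """Number of 512-kbit/sec slots needed, capped at the link capacity."""
    if requested_kbps <= 0:
        raise ValueError("requested bandwidth must be positive")
    max_slots = link_kbps // SLOT_KBPS
    needed = math.ceil(requested_kbps / SLOT_KBPS)
    return min(needed, max_slots)


class Channel:
    """Hypothetical simplex channel: one sender, any number of receivers."""

    def __init__(self, sender, receivers, requested_kbps, link_kbps):
        self.sender = sender
        self.receivers = set(receivers)  # several receivers model multicast
        self.link_kbps = link_kbps
        self.slots = slots_for(requested_kbps, link_kbps)

    def resize(self, requested_kbps):
        """Change capacity during the channel's lifetime (multirate)."""
        self.slots = slots_for(requested_kbps, self.link_kbps)

    @property
    def capacity_kbps(self):
        return self.slots * SLOT_KBPS
```

For example, `Channel("A", ["B", "C"], 2000, 2_488_320)` would round a 2,000-kbit/sec request up to four slots (2,048 kbits/sec), and a later `resize` call changes the slot count without creating a new channel.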

Channels provide links between routers and hosts in an IP network. Since a router doesn't need to terminate the full link capacity, the CRS distributed architecture can offload the routers, only routing the traffic that has to go through the network. Furthermore, the links can be dynamically configured for different traffic types. For example, it can multiplex "best effort" traffic (file transfers) over a single channel and concurrently set up dedicated channels for delay-sensitive traffic (voice and video).

Providing strict QoS is a key issue when extending the network to carry delay-sensitive services such as voice and real-time video. When designing IP networks for these services, problems derived from network congestion, packet losses, and jitter are the most important technology challenges to be addressed.

The most common way to deal with the quality problem is to over-provision the network. Even though it provides a quality level suitable for most best-effort services available on the Internet today, over-provisioning results in poor bandwidth utilization. However, as business-critical services and user data are stored on the Internet, the service provider needs to guarantee the accessibility and availability of the network connection for each customer. Furthermore, when real-time-sensitive services such as voice and video are introduced, the provider must take better control of the QoS.

When the CRS architecture, or IP over a channelized link layer, is used, traffic on a specific channel is isolated from other traffic on the link. Once a channel is set up, there is no contention for network resources. QoS therefore does not depend on over-provisioning the network, because a channel already provides a continuous, jitter-free transfer of the packets.

Those taking an IP-centric view on future networks believe IP should not only be the access technology for future services, but also the network building tool of the future. IP addressing and routing should be used to forward packets and manage the link layer to set up, manage, and tear down channels.

Establishment and routing of channels are decided solely by the presence of an IP packet in a node. This event triggers a channel setup to the IP next-hop node. Resource management maps IP flows to channels with desirable properties. This capability, referred to as "auto-provisioning," requires no human intervention to manage the channelized medium. The optical bandwidth is made accessible for the IP layer, and each service has a dedicated channel to perfectly fit its needs.
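The trigger described here, where the arrival of a packet causes a channel to be set up toward the IP next hop, can be sketched roughly as follows. This is an illustrative model only: the `AutoProvisioningNode` class, its route table format, and the channel identifiers are assumptions made for the example, and real signaling is reduced to a counter.

```python
import ipaddress


class AutoProvisioningNode:
    """Hypothetical node that sets up a channel when a packet needs one."""

    def __init__(self, routes):
        # routes: list of (prefix, next_hop) pairs, e.g. ("10.0.0.0/8", "B")
        self.routes = [(ipaddress.ip_network(p), nh) for p, nh in routes]
        self.channels = {}  # next hop -> channel identifier
        self.setups = 0     # number of channel setups triggered so far

    def next_hop(self, dst):
        """Ordinary longest-prefix-match IP routing."""
        addr = ipaddress.ip_address(dst)
        matches = [(net, nh) for net, nh in self.routes if addr in net]
        if not matches:
            return None
        return max(matches, key=lambda m: m[0].prefixlen)[1]

    def forward(self, dst):
        """Return the channel for this packet, creating it on first use."""
        nh = self.next_hop(dst)
        if nh is None:
            return None  # no route: nothing to provision
        if nh not in self.channels:
            # The presence of the packet is the only trigger for setup.
            self.setups += 1
            self.channels[nh] = f"ch-{self.setups}"
        return self.channels[nh]
```

In this sketch, a second packet toward the same next hop reuses the existing channel, so no operator action is involved at any point.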

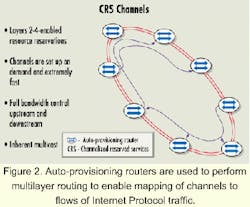

A CRS node is basically an auto-provisioning router combined with channel allocation capabilities. The outgoing packets are not only supplied to physical interfaces, but also to channels set up automatically. An auto-provisioning router performs multilayer routing when forwarding IP traffic. The routing decision is made based on information in Layer 3 and/or 4, enabling mapping of channels to flows of IP traffic (see Figure 2).

When packets arrive, they're first routed to the outgoing interface using ordinary IP routing. Next, they're identified by traffic filters and routed to a channel leading to the IP next-hop node. The identified channel possesses the resource characteristics described in a channel specification associated with the filter. Channels can be established on demand when the first packet of an IP flow arrives or they can be established for permanent connectivity.

An IP flow is identified using information carried in the IP and next layer headers such as source and destination address, type of service field, protocol field, and port numbers. The packets of the identified flow are then mapped onto a channel with properties specified in the channel specification. The properties include capacity, channel holding time, and account/priority.
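A traffic filter of the kind described above can be sketched as a match on a few header fields. The field names, the `Filter` and `Packet` classes, and the example port and type-of-service values are hypothetical; the article names the header fields but does not specify a filter syntax.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Packet:
    src: str
    dst: str
    protocol: int                   # IP protocol number: 6 = TCP, 17 = UDP
    tos: int                        # type-of-service byte
    dst_port: Optional[int] = None  # transport-layer destination port


@dataclass(frozen=True)
class Filter:
    """Hypothetical traffic filter; a field left as None matches anything."""
    name: str
    protocol: Optional[int] = None
    tos: Optional[int] = None
    dst_port: Optional[int] = None

    def matches(self, pkt: Packet) -> bool:
        pairs = (
            (self.protocol, pkt.protocol),
            (self.tos, pkt.tos),
            (self.dst_port, pkt.dst_port),
        )
        return all(want is None or want == got for want, got in pairs)


def classify(pkt, filters, default="best-effort"):
    """Return the name of the first matching filter, else the default."""
    for f in filters:
        if f.matches(pkt):
            return f.name
    return default
```

With filters such as `Filter("voice", protocol=17, dst_port=5004)` and `Filter("video", tos=0x88)`, unmatched traffic falls through to a shared best-effort class, while matched flows would be handed to a channel with their own specification.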

The capacity can be set in quantum steps of 512 kbits/sec. Minimum and maximum capacity can be specified, along with dynamic control parameters that determine how the channel size changes to follow shifts in traffic intensity. Channel holding time, or idle time before disconnection, is specified in milliseconds (an infinite holding time results in a permanent connection). Channels can be grouped into accounts, for example one per customer of the service provider. Accounts may have different resource policies, enabling prioritized channels to preempt best-effort channels belonging to other accounts. This feature ensures high bandwidth utilization and strict QoS in parallel.
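The account and preemption idea can be sketched as a simple slot allocator. This is a rough illustration under stated assumptions: the numeric priority convention (higher wins), the `Link` and `Allocation` classes, and the policy of shrinking the lowest-priority allocations first are all hypothetical, since the article does not describe the actual preemption algorithm.

```python
from dataclasses import dataclass


@dataclass
class Allocation:
    account: str
    priority: int  # higher number wins (assumed convention)
    slots: int     # number of 512-kbit/sec slots held


class Link:
    """Hypothetical shared link with a fixed number of slots."""

    def __init__(self, total_slots):
        self.total_slots = total_slots
        self.allocations = []

    def free_slots(self):
        return self.total_slots - sum(a.slots for a in self.allocations)

    def request(self, account, priority, slots):
        """Allocate slots, preempting lower-priority allocations if needed."""
        victims = sorted(
            (a for a in self.allocations if a.priority < priority),
            key=lambda a: a.priority,
        )
        reclaimable = sum(a.slots for a in victims)
        if self.free_slots() + reclaimable < slots:
            return False  # not enough capacity even with preemption
        for v in victims:
            if self.free_slots() >= slots:
                break
            # Shrink the victim only as far as the request requires.
            v.slots -= min(v.slots, slots - self.free_slots())
        self.allocations = [a for a in self.allocations if a.slots > 0]
        self.allocations.append(Allocation(account, priority, slots))
        return True
```

In this model a best-effort account can use idle capacity freely, which keeps utilization high, yet a prioritized account can always reclaim the slots it needs.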

Channels can be switched at the link layer, providing end-to-end transmission paths with short and fixed delays and guaranteed capacity. The CRS approach to establishing and switching channels is truly IP centric. IP addressing and routing is used to decide when and to where channels should be established. Properties of the IP packets (derived from network-, transport-, and application-layer information of the packets) are used to select channels with suitable properties for the traffic. The approach constitutes a convergence of switching and transmission by using the IP intelligence to administrate the resources of the optical layer.

An IP network using CRS constitutes a distributed routing architecture in the sense that traffic is transported between the routers via channels. These channels build a dynamic link-layer infrastructure on top of the fiber or DWDM. With the distributed routing architecture, a router will process only traffic that is actually being routed by the node. That means the routers connected to the network will be offloaded as compared to packet-over-SONET/SDH networks, for example, where the full capacity of the link is terminated in the router.

With the rapid increase of optical transmission capacity, active components such as routers and switches will become bottlenecks in future networks. Thus, the distributed switching architecture becomes a very important feature in order to scale to OC-192 and higher speeds.

When the forwarding capacity needs to be increased in an optical network using CRS, there is no need to exchange existing routers. New ones can be added to the network to increase packet-forwarding capacity and the number of connected subnetworks. This can be done without upgrading the capacity of any existing node or extending the fiber infrastructure, as long as the total capacity of the fiber or DWDM ring is sufficient.

That is in contrast to networks built as mesh or point-to-point links. In such networks, either new network connections are added to an existing router or new routers need to be installed. The CRS architecture also enables multiple lambdas to be managed as one channelized medium. Multiple rings further increase the bandwidth, which, for example, enables OC-192 speeds using multiple OC-48 (2.5-Gbit/sec) lambdas.

The distributed architecture not only impacts future network enhancements, but it also simplifies network planning. Networks built with the CRS architecture can be planned on an aggregated level, since only the amount of traffic passing through a node onto the shared medium needs to be calculated. The bandwidth between the routers is automatically changed according to the actual traffic pattern. New services, therefore, can be provisioned with reduced risks of network congestion caused by a sudden increase in bandwidth demands. Furthermore, in a distributed architecture, there are no costly centralized nodes that need to be upgraded as the network grows geographically with new users.

Typically, networks based on CRS technology are deployed in dual, counter-rotating fiber rings. Both fibers are used concurrently, doubling the available bandwidth. In addition, a spatial reuse scheme allows for better utilization of the available bandwidth. Spatial reuse means allocated resources for channels are reused on other segments of the ring to increase the available bandwidth, depending on load distribution. For example, bandwidth segments can be occupied between nodes 1 and 3 on the ring, and the same portion of the bandwidth may then be reused for communication between nodes 4 and 7 on the same ring. In the general case, the total throughput of dual 2.5-Gbit/sec CRS rings can be as high as 20 Gbits/sec.
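The spatial-reuse example with nodes 1 to 3 and 4 to 7 can be checked with a small sketch. It assumes a single ring whose nodes are numbered 1 to n, with traffic flowing in one direction and each segment identified by its upstream node; the function names are hypothetical.

```python
def segments(src, dst, n_nodes):
    """Ring segments used going from src to dst in the ring's direction.

    Each segment is identified by its upstream node; nodes are 1..n_nodes.
    """
    if src == dst:
        raise ValueError("source and destination must differ")
    used = set()
    node = src
    while node != dst:
        used.add(node)
        node = node % n_nodes + 1  # step to the next node, wrapping n -> 1
    return used


def can_share_slot(chan_a, chan_b, n_nodes):
    """Two channels can reuse the same slot if they share no segment."""
    return segments(*chan_a, n_nodes).isdisjoint(segments(*chan_b, n_nodes))
```

On an eight-node ring, `can_share_slot((1, 3), (4, 7), 8)` holds because the two channels occupy different segments, whereas a channel from node 2 to node 5 would overlap the first one and need its own slots.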

This increased throughput is derived in two steps. Using both rings concurrently adds up to 5 Gbits/sec. Because the average distance of transported traffic measures a quarter of a ring, spatial reuse then multiplies that figure by four, for a total of 20 Gbits/sec, or eight times the capacity of a single link. Substantially higher gains can be derived in applications with more traffic locality. Finally, statistical multiplexing of packets can easily be mapped onto channels, allowing for oversubscribed links and creation of cost-effective, best-effort services.
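The arithmetic behind the 20-Gbit/sec figure can be written out as a short calculation. It is an idealized estimate that assumes evenly distributed traffic; the function name and parameters are hypothetical.

```python
def ring_throughput_gbps(link_gbps, rings=2, avg_span_fraction=0.25):
    """Idealized aggregate throughput of a ring network with spatial reuse.

    avg_span_fraction is the average share of the ring a channel traverses;
    a quarter of the ring lets each slot be reused four times around it.
    """
    reuse_factor = 1 / avg_span_fraction
    return link_gbps * rings * reuse_factor


total = ring_throughput_gbps(2.5)  # dual 2.5-Gbit/sec rings
multiple_of_link = total / 2.5     # aggregate expressed in link capacities
```

With the default values, the two rings contribute a factor of two and spatial reuse a factor of four, which is where the figure of eight times the link capacity comes from. A smaller `avg_span_fraction`, meaning more local traffic, raises the estimate further.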

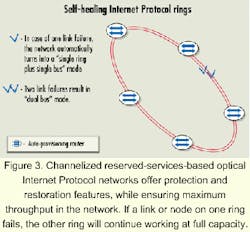

Network availability is becoming increasingly important. Basically, the network should not go down, and in the event of a node or link failure, the network needs to degrade in a way that allows the service provider to easily detect and immediately repair the failure. Optical IP networks based on CRS offer protection and restoration features, while ensuring maximum throughput in the network.

If a link or node on one ring fails, the other ring will continue working at full capacity. The broken ring will be automatically reconfigured into bus mode, and all undamaged segments of the ring will still be used (see Figure 3). Furthermore, overall throughput will go down from eight times the link capacity to somewhere between two and eight times, depending on the load distribution.

In the case of a dual link failure, the rings will automatically reconfigure into a dual bus. All nodes will still be able to communicate with each other, and the overall network throughput will drop from eight times to two times the link capacity. The self-healing mechanisms in CRS offer protection in less than 50 msec. When optical power goes down, the receiving node detects the ring failure, and an auto-configuration protocol provides continuous probing between CRS nodes.

The bandwidth bottleneck in metropolitan areas is about to be released by the use of fiber and IP as the prevailing protocol for future services. As carriers take on the ASP model to deliver revenue-generating services to corporate customers, there is a need for an improved and simplified optical IP infrastructure.

The CRS architecture strengthens the competitive position of service providers by leveraging the optical layer to provide services on demand. CRS is IP centric in that it is optimized to carry IP traffic. The channelized link layer includes functions not commonly handled by IP, such as QoS mechanisms, bandwidth management, and protection switching. Global addressing and routing can also be handled by IP.

CRS scales with advances in optical transport technologies due to its distributed architecture. It enables simple upgrading of the forwarding capacity of a network without necessarily replacing routers or upgrading the fiber infrastructure. Furthermore, a spatial-reuse mechanism will provide high utilization of the available transmission capacity.

The introduction of CRS will launch new business opportunities for service providers by simplifying the planning and provisioning of new revenue-generating services. Ultimately, the network will become transparent and the end users will receive services without concern about processing locally or at the ASP site.

Per Lembre is director of marketing for Dynarc, based in Sunnyvale, CA. The company's Website address is www.dynarc.com.