New optical edge switch, access devices to deliver next-generation services

Richard Barnett and Jim Mooney

Sirocco Systems Inc.

Dramatic advances in the optical-networking arena and the phenomenal worldwide demand for bandwidth are leading to revolutionary changes in the traditional concepts of how networks are constructed. This fundamental paradigm shift offers carriers a unique opportunity to re-architect their entire networks to gain significant competitive advantage. New optical edge switches and access devices will serve as key components in next-generation service delivery, transforming bandwidth from the optical core into revenue-generating services.

First-generation optical networks began with a simple purpose: to maximize the information-carrying capacity of the long-haul fiber-optic infrastructure. That was achieved by utilizing point-to-point dense wavelength-division multiplexers (DWDMs) to provide fiber gain between two sites. All service-level processing and intelligence resided in existing electronic components such as Synchronous Optical Network/Synchronous Digital Hierarchy (SONET/SDH) devices, Asynchronous Transfer Mode (ATM) switches, and Internet-protocol (IP) routers. This solution served its intended purpose and, in fact, most optical networks still operate this way today.

Second-generation optical networks were introduced to overcome basic connectivity hurdles. These devices expanded on the capabilities of first-generation systems by incorporating the concept of basic topologies, including rings and simple meshes. This type of architecture is finding favor in metro solutions where fiber is usually deployed in rings and DWDM enables multiple OC-48/STM-16 (2.5-Gbit/sec) streams to be routed on individual wavelengths.

However, similar to first-generation devices, technology constraints relegate these elements to rudimentary transport functions. Second-generation optical networks also rely on existing electronic devices to perform all the complex service-level processing.

The industry is now entering a new phase: the building of third-generation optical networks. In this generation, optical core switches are beginning to be deployed, leading to a fundamental shift in the way networks are built. Optical cores need to be fast but also tend to be relatively transparent, offering limited capabilities to switch or process information streams. Devices at the edge of the network usually provide a greater level of electronic processing (see Figure 1).

The optical core currently consists of optical switches that terminate and convert the optical signal back to the electronic domain, perform electronic processing, and then regenerate an outgoing optical signal. The advantage of this optical-electrical-optical (OEO) system is that traditional networking techniques can be employed.

But to achieve very high operating speeds, today's optical-core switches have limited electronic-processing capabilities. Typically, core optical-network switches are protocol transparent and cannot look at the cells and packets flowing on the optical channels and make routing decisions on that basis. Instead, these devices establish the fixed assignment of an incoming optical channel (for example, a wavelength on a DWDM link) to an outgoing channel; all data on one channel is routed to the other channel using a crosspoint switch.

While this diminished higher-layer processing has allowed optical networking to achieve very high speeds, it does not satisfy many of the requirements of today's networks. What is needed is technology that can take the high-speed pipes provided by the optical-core networks and derive revenue-generating services (voice, data, and video) that can be delivered to customers. A new class of networking devices known as optical access devices and optical edge switches is emerging to provision these services.
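The fixed channel-to-channel assignment made by a protocol-transparent core switch can be illustrated with a small model. This is only a sketch under stated assumptions: the `Crosspoint` class, its method names, and the port and wavelength labels are hypothetical and do not describe any particular product.

```python
class Crosspoint:
    """Toy model of a protocol-transparent crosspoint (hypothetical names)."""

    def __init__(self):
        # (in_port, wavelength) -> (out_port, wavelength)
        self._connections = {}

    def connect(self, incoming, outgoing):
        """Create a fixed assignment of one incoming channel to one outgoing channel."""
        if incoming in self._connections:
            raise ValueError(f"incoming channel {incoming} is already assigned")
        if outgoing in self._connections.values():
            raise ValueError(f"outgoing channel {outgoing} is already in use")
        self._connections[incoming] = outgoing

    def forward(self, incoming, payload):
        """Send everything arriving on a channel to its assigned output.

        The payload is never inspected: cells, packets, and framing are
        opaque to the switch, which is what makes it protocol transparent.
        """
        return self._connections[incoming], payload


xc = Crosspoint()
xc.connect(("west", 1), ("east", 3))
print(xc.forward(("west", 1), b"any protocol"))
```

Because `forward` depends only on the incoming channel, two packets with different destinations that arrive on the same wavelength always leave on the same output; any finer-grained decision has to be made by devices at the edge.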

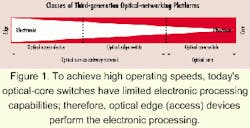

The future optical network will combine high-speed optical cores with the new optical access devices and optical edge switches. Together these devices can provide a new service-delivery network. As shown in Figure 2, the optical access devices are typically located in the metro or access layer of the network, providing a suite of multiservice interfaces that feed voice, data, and video streams to end users or to other service providers. The optical trunks of the access devices may feed optical edge switches by aggregating traffic from a potentially large community of devices. Optical edge switches act as service aggregators, combining, grooming, and switching network traffic while presenting the streams into the optical core.

In the past, multiservice switches have attempted to map a suite of services into a single converged internal technology. An example is ATM, which is often used as a converged transport layer for carrying multiple services across a network. Today, many vendors are looking at IP and Multiprotocol Label Switching (MPLS)-based technology to provide a similar, converged network transport. Each attempt to unify the transport infrastructure has usually been met with a significant set of technical challenges.

A policy of avoiding service conversion has many advantages. Each service type is transported in its native form, allowing the support for that service to be delivered in a well-understood manner. The need for interworking functions is virtually eliminated unless specifically required as part of the delivered service. In addition, management of the service provisioning can be performed in a way that is optimized for the specific service presentation.

The implication for the optical edge switch is that it must be able to support multiple service types, including optical-networking services. Therefore, the optical edge switch serves as a multiservice, multiwavelength platform. The best way to do that is to implement multiple switching infrastructures within a single system, one to support packet and cell applications and another to support optical applications.

If the goal is to use a single network infrastructure for all the various types of traffic, including voice, video, and data, the unified network must be able to satisfy the quality-of-service (QoS) requirements for each of the traffic types. For example, voice and video traffic is sensitive to absolute delay and delay variation, while these factors do not matter with most data traffic. There are at least two ways of providing this traffic classification: hard differentiation and soft differentiation.

Hard differentiation is the purest form of traffic classification; traffic types are sent in completely different communications channels. The bandwidth in the high-speed pipes across the optical core is structured to provide completely noninteracting channels. One channel is allocated to voice traffic, one to delay-sensitive data such as video, one to best-effort data, etc. The advantage is that its implementation can be extended to almost any communications link, provided SONET/SDH structuring is supported.

A second major advantage is that this approach is fully interoperable in a multivendor environment or a multiservice-provider environment. A disadvantage is that the bandwidth allocated to each of the structured channels must be predetermined unless the network is able to dynamically resize the structured channels in response to offered load. Dynamic resizing is an approach that will be used more and more in the future to extend the utility of this technique.
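The trade-off of predetermined channel sizes can be shown with a short calculation. The sketch below assumes an OC-48 pipe divided into 48 STS-1 slots at the 51.84-Mbit/sec STS-1 line rate (overhead is ignored for simplicity); the function name, the class names, and the example allocation are illustrative assumptions only.

```python
STS1_MBPS = 51.84   # SONET STS-1 line rate; payload overhead ignored here
OC48_SLOTS = 48     # an OC-48 carries 48 STS-1 slots


def hard_differentiation(alloc_sts1, offered_mbps, total_slots=OC48_SLOTS):
    """Carry each traffic class in its own fixed, noninteracting channel.

    alloc_sts1:   class name -> number of STS-1 slots reserved (assumed plan)
    offered_mbps: class name -> offered load in Mbit/s
    """
    if sum(alloc_sts1.values()) > total_slots:
        raise ValueError("allocation exceeds the slots available on the pipe")
    report = {}
    for traffic_class, slots in alloc_sts1.items():
        capacity = slots * STS1_MBPS
        offered = offered_mbps.get(traffic_class, 0.0)
        carried = min(offered, capacity)
        # Excess load cannot borrow idle capacity from another channel.
        report[traffic_class] = {"carried": carried, "excess": offered - carried}
    return report


plan = {"voice": 12, "video": 12, "best_effort": 24}
load = {"voice": 100.0, "video": 700.0, "best_effort": 500.0}
for name, result in hard_differentiation(plan, load).items():
    print(name, result)
```

In this example the video channel is oversubscribed by roughly 78 Mbits/sec while more than half of the best-effort channel sits idle, which is exactly the situation that dynamic resizing of the structured channels is meant to relieve.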

In the case of soft differentiation, all of the traffic types have to share a common communications channel. That means each network element in the path of the traffic that implements queuing has to perform some form of classification at a very fast rate. Typically, the access-layer devices will perform the traffic classification processing and then use a tag (typically an MPLS tag) that indicates to the service-delivery layer how the traffic is to be treated. The optical edge switches then know how to queue and prioritize traffic when congestion occurs.

An important advantage of this technique is that decisions do not have to be made regarding how much bandwidth to allocate to each traffic type. Instead, the bandwidth is dynamically shared between the traffic types. A disadvantage is the extra processing required in the optical edge switch to make the determination of the traffic class and to perform scheduling of the different classes when congestion occurs. Soft differentiation is ideal where data rates permit its use, but at very fast channel speeds, it can be difficult and expensive to implement effectively. In addition, the task of mapping service-level agreements to actual queuing parameters can present a challenge.
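A minimal sketch of soft differentiation follows, assuming that the access layer has already attached a class tag to each packet and that the edge switch applies strict-priority scheduling. The tag names, the priority ordering, and the `SoftScheduler` class are hypothetical; real equipment would typically use more elaborate schedulers and queue limits.

```python
import heapq
from itertools import count

# Assumed mapping from tag to priority (lower number is served first).
PRIORITY = {"voice": 0, "video": 1, "best_effort": 2}


class SoftScheduler:
    """Strict-priority queue shared by all traffic classes on one channel."""

    def __init__(self):
        self._heap = []
        self._arrival = count()  # preserves arrival order within a class

    def enqueue(self, tag, packet):
        heapq.heappush(self._heap, (PRIORITY[tag], next(self._arrival), packet))

    def dequeue(self):
        """Return the highest-priority packet, oldest first within a class."""
        return heapq.heappop(self._heap)[2]

    def __len__(self):
        return len(self._heap)


sched = SoftScheduler()
for tag, packet in [("best_effort", "d1"), ("voice", "v1"),
                    ("video", "m1"), ("voice", "v2")]:
    sched.enqueue(tag, packet)
print([sched.dequeue() for _ in range(len(sched))])
```

No bandwidth is reserved per class here; all classes draw on the same channel, and the classification and ordering work on every packet is the extra processing cost noted above.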

The optical edge switch should be able to use both modes of traffic differentiation, providing the best of both worlds: hard differentiation based on SONET/SDH standards for compatibility and soft differentiation for efficiency.

One problem with DWDM is the coarse nature of the multiplexing at the wavelength level, typically OC-48 or OC-192 (10 Gbits/sec). Many applications for DWDM use different wavelengths (lambdas) to separate classes of traffic between two network elements (an extreme example of hard differentiation). The problem arises when there is too little traffic to use a wavelength efficiently, for example, when an entire OC-48 carries only 100 Mbits/sec of traffic.

A solution to this DWDM limitation is for the optical edge switch to use its infrastructure to multiplex different traffic types onto one wavelength. This technique is called sub-lambda multiplexing (see Figure 3). Sub-lambda switching then allows each sub-lambda to be switched at intermediate switching nodes (much like an IP router can route packets at each node). Using either hard differentiation techniques such as SONET/SDH or soft differentiation based on ATM or MPLS (using a thin layer of SONET/SDH overhead) allows sufficient flexibility to configure sub-lambda channel bandwidths with a high level of granularity and efficiency. Sub-lambda switching combines the best features of hard and soft differentiation, and each port or wavelength can be individually configured as required.
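The benefit of sharing a wavelength can be put in numbers. The sketch below assumes OC-48 wavelengths at the 2,488.32-Mbit/sec line rate and uses a simple first-fit packing rule; the stream names and rates are invented for illustration, and an actual switch would also account for framing overhead and channel granularity.

```python
OC48_MBPS = 2488.32  # OC-48 line rate


def utilization(load_mbps, capacity_mbps=OC48_MBPS):
    """Fraction of a wavelength's capacity used by a given load."""
    return load_mbps / capacity_mbps


def pack_first_fit(streams, capacity_mbps=OC48_MBPS):
    """Place (name, rate) streams onto as few wavelengths as first-fit allows."""
    wavelengths = []  # each entry is the list of streams sharing one lambda
    for name, rate in streams:
        if rate > capacity_mbps:
            raise ValueError(f"{name} does not fit on a single wavelength")
        for shared in wavelengths:
            if sum(r for _, r in shared) + rate <= capacity_mbps:
                shared.append((name, rate))
                break
        else:
            wavelengths.append([(name, rate)])
    return wavelengths


# One wavelength dedicated to 100 Mbit/s of traffic is about 4% utilized.
print(f"{utilization(100.0):.1%}")

streams = [("voice", 100.0), ("video", 600.0), ("data", 1500.0), ("backup", 400.0)]
for index, shared in enumerate(pack_first_fit(streams), start=1):
    print(index, shared)
```

With one wavelength per traffic type, these four example streams would occupy four lambdas; packed as sub-lambdas they fit on two.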

One of the issues in building any network is where to place service-level intelligence, such as routers and ATM switches, and where to put simpler devices that just allow data to be "back-hauled" to a concentration point where service-level processing takes place. For maximum flexibility, the optical edge switch should support a distributed service-processing architecture.

A distributed architecture allows network planners to situate the service intelligence where it is required, technically and financially. The advantage of utilizing a back-haul strategy in the access layer is that the devices that live in that layer generally far outnumber the rest of the devices in the network; the network can then be kept relatively simple, lowering the overall cost. Network management is also easier because a smaller number of elements are involved.

This process is known as stream merging. It sets up a hierarchy of bandwidth granularities. For example, the optical core utilizes only high-speed pipes; the access devices require thin pipes suitable for connection to integrated access devices, add/drop multiplexers, digital-subscriber-line access multiplexers, and optical access devices; and the optical edge switch performs the mapping between the two.

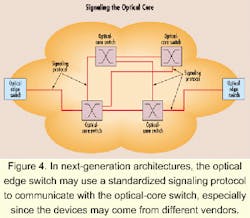

In many cases, the optical core is supplied by a different vendor or managed by a different organization than the service-delivery and switching layers of the network, raising the issue of how high-speed pipes are provisioned and how their existence is communicated from the optical edge switch to the core. One potential solution is to use a signaling protocol to cross the divide between the optical edge switch and the optical core (see Figure 4). Several groups are working toward an open set of standardized protocols for this purpose.

With mechanisms that allow the optical edge switches to signal for connections to the optical core, a very flexible and dynamic architecture results. Optical edge switches can set up and tear down high-speed connections across the optical core as required by the offered load under the policy control of the managers of the service-delivery network and the optical core. For example, certain pipes may only be established during working hours to satisfy peak demand on a programmed basis, while other pipes are only introduced when there is sufficient traffic flow along a particular route to warrant the use of extra capacity.

Typically, the optical edge switches do not have to consider the structure or topology of the optical core. Instead, these devices are aware of the possible termination points for fat pipes across the optical core and signal those to which they wish to be connected. Also included in the request are the bandwidth requirements of the pipe and any other QoS parameters that the optical core might support, such as delay, protection level, failure recovery time, etc.
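The kind of request described above can be sketched as a simple data structure plus a toy admission check. Nothing here represents a real signaling protocol: `PipeRequest`, `ToyCore`, the field names, and the single pooled capacity figure are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PipeRequest:
    """What an edge switch asks of the core (hypothetical fields)."""
    source: str                     # termination point at the requesting edge
    destination: str                # termination point at the far edge
    bandwidth_mbps: float
    protection: str = "unprotected"
    max_delay_ms: Optional[float] = None


class ToyCore:
    """Stand-in for the optical core; its topology is hidden from the edge."""

    def __init__(self, termination_points, capacity_mbps):
        self.termination_points = set(termination_points)
        self.free_mbps = capacity_mbps
        self.pipes = {}
        self._next_id = 1

    def setup(self, request):
        """Return a pipe id if the request is accepted, otherwise None."""
        ends = {request.source, request.destination}
        if not ends <= self.termination_points:
            return None
        if request.bandwidth_mbps > self.free_mbps:
            return None
        pipe_id = self._next_id
        self._next_id += 1
        self.pipes[pipe_id] = request
        self.free_mbps -= request.bandwidth_mbps
        return pipe_id

    def teardown(self, pipe_id):
        """Release a pipe and return its bandwidth to the pool."""
        request = self.pipes.pop(pipe_id)
        self.free_mbps += request.bandwidth_mbps


core = ToyCore({"edge-A", "edge-B"}, capacity_mbps=5000.0)
pipe = core.setup(PipeRequest("edge-A", "edge-B", 2488.32, protection="1+1"))
print(pipe, core.free_mbps)
core.teardown(pipe)
```

The edge switch names only the two termination points and the properties it wants; how the core routes the pipe internally never appears in the request, which matches the separation of concerns described above.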

As with any evolution to new technology, the introduction of third-generation optical networks creates a degree of uncertainty. At the same time, however, it presents a tremendous opportunity for carriers to gain competitive advantage.

The optical core has incredible bandwidth-carrying capacity, but that bandwidth must be transformed to deliver revenue-generating services, requiring a new type of optical service-delivery infrastructure. The optical edge switch can perform the essential service-delivery functions; therefore, it is critical to the success of next-generation optical networks.

But as with any edge device, the optical edge switch is subject to a wide range of requirements. These devices must support a multitude of physical interface types and a number of distinct routing and signaling protocols. While that presents considerable challenges for optical edge switch vendors, the combination of these functions within a single managed platform allows entirely new solutions to complex network problems. The whole is truly greater than the sum of the parts.

Richard Barnett is vice president, system architecture at Sirocco Systems (Wallingford, CT). He can be reached at [email protected]. Jim Mooney is product-management director at Sirocco Systems. He can be reached at [email protected].