Roles different protocols will play in the cloud

By Todd Bundy

Overview

IT managers have multiple protocol options for supporting cloud computing. Settling on one may prove difficult in the near term, so they should plan accordingly.

The concept of flexible sharing for more efficient use of hardware resources is nothing new in enterprise networking—but cloud computing is different. For some, the cloud computing trend sounds nebulous, but it’s not so confusing when you view it from the perspective of IT professionals. For them, it is a way to quickly increase capacity without investing in new infrastructure, training more people, or licensing additional software.

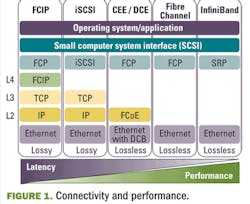

Decoupling services from hardware poses a key question that must be addressed as enterprises consider cloud computing’s intriguing possibilities: Which data center interconnect protocol is best suited for linking servers and storage in and among cloud centers? Enterprise IT managers and carrier service planners must weigh the benefits and limitations of a host of technologies, including Fibre Channel over Ethernet (FCoE), InfiniBand, and 8-Gbps Fibre Channel, when enabling the many virtual machines that compose the cloud.

Understanding the cloud craze

The ability to flexibly and cost-effectively mix and match hardware resources to adapt to new needs and opportunities is extremely enticing to any enterprise that has tried to ride the waves of constant IT change: higher-speed connectivity, higher-density computing, e-commerce, Web 2.0, mobility, and business continuity/disaster recovery capabilities. The need for a more flexible IT environment, one built for inevitable, incessant change, has become obvious, and it has created an enterprise marketplace that believes in the possibilities of cloud computing.

Cloud computing has successfully enabled Internet search engines, social media sites, and, more recently, traditional business services (for example, Google Docs and Salesforce.com). Today, enterprises can implement their own private cloud environment via end-to-end vendor offerings or contract for public desktop services, in which applications and data are accessed from network-attached devices.

Both the private and public cloud-computing approaches promise significant reductions in capital and operating expenditures (capex and opex). The capex savings arise through more efficient use of servers and storage. Opex improvements derive from the automated, integrated management of data-center infrastructure.

Ramifications for the data center

One of the most dramatic changes that cloud computing brings to the data center is in the interconnection of servers and storage.

Links among server resources traditionally offered lower bandwidth, which was acceptable because few virtual machines were in use. The data center has been populated mostly with lightly utilized, application-dedicated, x86-architecture servers running either one bare-metal operating system or multiple operating systems via a hypervisor.

In the dynamic model emerging today, many more virtual machines are created through the clustering of highly utilized servers. Large and small businesses that use this type of service will want the ability to place “instances” in multiple locations, each engineered to be insulated from failures elsewhere, and to move them around dynamically. This desire leads to intense scrutiny of the protocols used to interconnect servers and storage among these locations.

Bandwidth and latency requirements vary depending on the particular cloud application. A latency of 50 ms or more, for example, might be tolerable for the emergent public desktop service. Ultralow latency, near 1 ms, is needed for some high-end services such as grid computing or synchronous backup. Ensuring that each application receives its necessary performance characteristics over required distances is a prerequisite to cloud success.
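To make the link between latency budgets and distance concrete, the following is a minimal sketch that estimates fiber propagation delay and checks it against a budget. It assumes the common rule of thumb of roughly 5 µs of one-way delay per kilometer of fiber, which is not a figure from this article, and it ignores equipment and protocol latency entirely. The function names are hypothetical, and whether a given budget applies to one-way or round-trip delay is left as a parameter because the article does not say.

```python
# Assumption (not from the article): light in optical fiber travels at
# roughly c / 1.47, which works out to about 5 microseconds per kilometer.
FIBER_DELAY_US_PER_KM = 5.0


def one_way_delay_ms(distance_km: float) -> float:
    """Propagation delay over a fiber span, ignoring equipment latency."""
    return distance_km * FIBER_DELAY_US_PER_KM / 1000.0


def fits_budget(distance_km: float, budget_ms: float, round_trip: bool = True) -> bool:
    """Return True if propagation delay alone stays within the budget."""
    delay_ms = one_way_delay_ms(distance_km)
    if round_trip:
        delay_ms *= 2
    return delay_ms <= budget_ms


def max_span_km(budget_ms: float, round_trip: bool = True) -> float:
    """Longest span whose propagation delay alone fits the budget."""
    trips = 2 if round_trip else 1
    return budget_ms * 1000.0 / (FIBER_DELAY_US_PER_KM * trips)


if __name__ == "__main__":
    # Span lengths are the 135 km and 600 km figures cited in this article;
    # budgets are the 1 ms and 50 ms examples from the paragraph above.
    for span_km in (135, 600):
        for budget_ms in (1.0, 50.0):
            ok = fits_budget(span_km, budget_ms)
            print(f"{span_km} km, {budget_ms} ms round-trip budget: fits={ok}")
```

Under these assumptions, distance by itself consumes a large share of a 1 ms budget well before any switching or protocol overhead is counted, while a 50 ms budget leaves ample room for long spans.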

At the same time, enterprises have long sought to cost-effectively collapse LAN and SAN traffic onto a single interconnection fabric with virtualization. While IT managers cannot sacrifice the performance requirements of mission-critical applications, they also must seek to cut cloud-bandwidth costs. Some form of Ethernet and InfiniBand are the most likely candidates to eventually serve as that single, unifying interconnect; both are built to keep pace with a Moore’s Law-like growth curve in which the amount of data doubles every year with no end in sight. In fact, none of these protocols, Fibre Channel included, figures to exit the cloud center soon (see Fig. 1).

FCoE

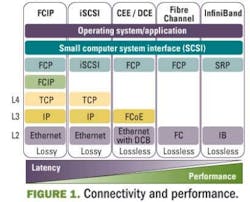

FCoE/Data Center Bridging (DCB)—which proposes to converge Fibre Channel and Ethernet, the two most prevalent enterprise-networking protocols—is generating a great deal of interest. Its primary value is I/O consolidation, aggregating and distributing data traffic to existing LAN and SAN equipment from atop server racks. FCoE/DCB promises low latency and plenty of bandwidth (10 to 40 Gbps), but the emergent protocol is unproven in large-scale deployments (see Fig. 2).

Also, there are significant problems related to pathing, routing, and distance support. It is essential that the FCoE approach support the Ethernet and IP standards alongside the Fibre Channel standards for switching, path selection, and routing.

In short, several issues must be overcome before there is a truly lossless enhanced Ethernet that delivers link-level shortest-path-first routing over distance. The aim is to ensure zero loss due to congestion in and between data centers.

The absence of standards defining inter-switch links (ISLs) among distributed FCoE switches, a lack of multihop support, and a shortage of native FCoE interfaces on storage equipment must be addressed. FCoE must prove itself in areas such as latency, synchronous recovery, and continuous availability over distances before it gains much of a role in the most demanding cloud-computing applications.

This means that 8G, 10G, and eventually 16G Fibre Channel ISLs will be required to back up FCoE blade servers and storage for many years to come.

InfiniBand

The number of InfiniBand-connected central processing unit (CPU) cores on the Top 500 list grew 63.4% last year, from 859,090 in November 2008 to 1,404,164 in November 2009. Ethernet-connected systems declined 8% in the same period.

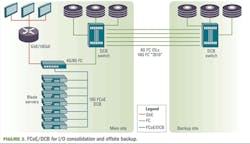

InfiniBand is frequently the choice for the most demanding applications. For example, it’s the protocol interconnecting remotely located data centers for IBM’s Geographically Dispersed Parallel Sysplex (GDPS) PSIFB business-continuity and disaster-recovery offering (see Fig. 3).

The highest-performance, transaction-sensitive business services that might be offloaded to the cloud demand the unmatched combination of bandwidth (up to 40 Gbps) and latency (as little as 1 µs) that an InfiniBand port delivers. An Ethernet port, by comparison, offers only 10 Gbps of bandwidth and a TCP protocol latency of at least 6 µs. Ethernet latencies can reach 40 to 50 µs, because networks interconnected via Ethernet ports often need several tiers of hierarchical switching to overcome oversubscription. Finally, InfiniBand, like Fibre Channel, does not drop packets the way traditional Ethernet does.
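The port figures above can be combined into a rough per-message comparison. The sketch below uses a simple first-order model, assumed here for illustration only: transfer time equals port latency plus serialization time (message bits divided by line rate). It ignores encoding overhead, protocol headers, switch hops, and congestion. The function name and the 4,096-byte message size are hypothetical choices, not values from the article.

```python
def transfer_time_us(message_bytes: int, bandwidth_gbps: float, latency_us: float) -> float:
    """First-order estimate: port latency plus time to clock the bits out.

    1 Gbps equals 1,000 bits per microsecond, so serialization time in
    microseconds is bits / (Gbps * 1000).
    """
    serialization_us = message_bytes * 8 / (bandwidth_gbps * 1000.0)
    return latency_us + serialization_us


if __name__ == "__main__":
    message_bytes = 4096  # hypothetical message size

    # Port figures quoted in the article.
    infiniband_us = transfer_time_us(message_bytes, bandwidth_gbps=40, latency_us=1)
    ethernet_us = transfer_time_us(message_bytes, bandwidth_gbps=10, latency_us=6)

    print(f"InfiniBand (40 Gbps, 1 us): {infiniband_us:.2f} us")
    print(f"Ethernet   (10 Gbps, 6 us): {ethernet_us:.2f} us")
    print(f"Ratio: {ethernet_us / infiniband_us:.1f}x")
```

For small messages the fixed latency term dominates, which is why the latency gap matters more than raw bandwidth for transaction-sensitive traffic in this simplified model.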

8G Fibre Channel

8G Fibre Channel, transported at native speed via DWDM, dominates for rapid backup and recovery SAN services that must not experience degradation over distance. Early in 2009, in fact, COLT announced an 8G Fibre Channel storage service deployment including fiber spans of more than 135 km.

Some carriers see 8G Fibre Channel as a dependable enabler for public cloud-computing services to high-end Fortune 500 customers today, and certainly it’s a protocol that must be supported in cloud centers moving forward. For this reason, 16G Fibre Channel will be welcomed as a way to potentially bridge and back up 10G FCoE blade servers over distance.

Other considerations

Latency and distance aren’t the only factors in determining which interconnect protocols will enable cloud computing. Protocol maturity and dependability also figure into the decision.

With mission-critical applications being entrusted to clustered virtual machines, the stakes are high. Enterprise IT managers and carrier service planners are likely to employ proven implementations of InfiniBand, 8G Fibre Channel, and potentially FCoE and/or some other form of Ethernet in cloud centers for some years because they trust them. Low-latency grid computing—dependably enabled by proven 40G InfiniBand—is a perfect example of an application with particular performance requirements that must not be sacrificed for the sake of one-size-fits-all convergence.

This is one of the chief reasons that real-world cloud centers likely will remain multiprotocol environments—despite the desire to converge on a single interconnect fabric for benefits of cost and operational simplicity, despite the hype around promising, but emergent, protocols such as FCoE/DCB.

Other issues, such as organization and behavior, cannot be ignored. Collapsing an enterprise networking group’s LAN traffic and storage group’s SAN traffic on the same protocol would entail significant political and technical ramifications. Convergence on a single fabric, however attractive in theory, implies nothing less than an organizational transformation in addition to a significant forklift upgrade to new enhanced (low-latency) DCB Ethernet switches.

Maintaining flexibility

Given this host of factors, cloud operators should be prepared to support multiple interconnect protocols with the unifying role shouldered by DWDM, delivering protocol-agnostic, native-speed, low-latency transport across the cloud over fiber spans up to 600 km long. Today’s services can be commonly deployed and managed across existing optical networks via DWDM, and operators retain the flexibility to elegantly bring on new services and protocols as needed.

The unprecedented cost efficiencies and capabilities offered by cloud computing have garnered attention from large and small enterprises across industries. When interconnecting servers to enable a cloud’s virtual machines, enterprise IT managers and carrier service planners must take care to ensure that the varied performance requirements of all LAN and SAN services are reliably met.

Todd Bundy is director, global alliances, with ADVA Optical Networking (www.advaoptical.com).

Links to more information

LIGHTWAVE: Clouds on the Horizon

LIGHTWAVE: The Growth of Fibre Channel over Ethernet

LIGHTWAVE ONLINE: ADVA and COLT Deploy 8-Gbps Fibre Channel over 135 km