By Jim Theodoras

The last decade has witnessed the rise of social networks, over-the-top media distribution, mobile overtaking fixed broadband, and many other trends – all of which have led to the construction of hundreds of mega data centers around the world. Within these data centers, content is held on servers with virtualized storage and compute resources, and software-defined networking (SDN) is already making good on its promise to virtualize the networks interconnecting them. Once everything inside the walls is virtualized, network managers will realize new levels of efficiency and resource utilization.

But as data centers have continued to balloon in size, the applications they run have outgrown their walls, becoming global databases in the process. SDN must now be extended to the optical transport networks that interconnect these data centers to enable true end-to-end multilayer path provisioning of data flows.

Service providers also will benefit from this achievement since efficiency gains help all. Faced with the burden of over-the-top media traffic – yet not necessarily privy to the resulting revenue windfall – service providers are also looking to optimize their end-to-end flows and better respond to traffic volatility. It's only through the efficiency gains of centralized control of fully virtualized resources that the Internet will be able to keep pace with exploding bandwidth demands.

More flexible plumbing needed

One of the strengths of the Open Systems Interconnection (OSI) networking model is that it separates everything into layers. Of course, one of its weaknesses is that it separates everything into layers.

A data storm in the application layers at the top of the protocol stack is invisible to the transport layer at the bottom. That means the transport layer must be overprovisioned; transport connections are "nailed up" – brought online and left there to accommodate moments of peak traffic, though such conditions might occur very infrequently. The result is that today's networks are very inefficient. And that's why making the transport layer more dynamic and flexible is the goal of most of today's real-world use cases of transport SDN.

To make matters worse, the type of traffic being carried and the bandwidth consumption profiles have changed dramatically as end-user habits and favorite applications have changed. Consumers have shifted from email, chat, and static information applications to social media, video streaming, and real-time information. The majority of packets are now grouped into higher-layer flows such as over-the-top video, database backups, and virtual machine (VM) movements.

To optimize a multilayer flow of packets across an entire end-to-end link, intelligence is needed on all layers and at each network node. From that gathered intelligence, a centralized controller that sees the big picture is needed to make the appropriate decisions. Most of the use cases for transport SDN also depend on the smart interaction of all the layers of the network under the command of a controller that has full visibility into the end-to-end multilayer flows.

Multilayer orchestration

At first glance, the answer would appear to be simply adding transport nodes to an existing SDN controller domain. Unfortunately, the problem is a bit more involved, as each transport vendor has its own way of dealing with optical impairments and blocking constraints. The very analog nature of fiber-optic communications has created a tall hurdle to commonality. Consequently, it makes more sense to abstract each vendor's transport approach into a commonly defined model of connectivity. The centralized controller then sees this virtualized representation of the transport layer – a sort of Reader's Digest version.
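The abstraction described above might be sketched as follows. This is a minimal illustration, not any vendor's or controller's actual interface: the names `AbstractLink`, `VendorAdapter`, `VendorA`, and `controller_view`, the 2,000 km reach limit, and the 100 Gbps capacity are all hypothetical. The point is only that vendor-specific impairment logic stays behind the adapter while the controller sees a common model.

```python
from dataclasses import dataclass
from typing import Dict, List, Protocol, Tuple


@dataclass(frozen=True)
class AbstractLink:
    """Vendor-neutral view of one transport connection (hypothetical model)."""
    src: str
    dst: str
    capacity_gbps: float


class VendorAdapter(Protocol):
    """Each vendor hides its own impairment and blocking logic behind this."""

    def feasible_links(self) -> List[AbstractLink]:
        ...


class VendorA:
    """Hypothetical vendor that checks optical reach internally."""

    MAX_REACH_KM = 2000.0  # assumed reach limit, for illustration only

    def __init__(self, spans_km: Dict[Tuple[str, str], float]) -> None:
        self.spans_km = spans_km

    def feasible_links(self) -> List[AbstractLink]:
        # Only spans within reach are exposed; the controller never sees
        # the analog details that ruled the others out.
        return [
            AbstractLink(src, dst, 100.0)
            for (src, dst), km in self.spans_km.items()
            if km <= self.MAX_REACH_KM
        ]


def controller_view(adapters: List[VendorAdapter]) -> List[AbstractLink]:
    """The virtualized transport layer as the central controller sees it."""
    return [link for adapter in adapters for link in adapter.feasible_links()]
```

A second vendor with entirely different constraints would implement the same `feasible_links` method, and the controller code would not change.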

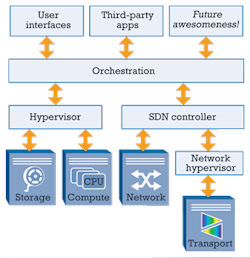

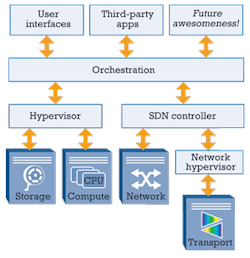

While it's tempting to think of all the elements in a network (including the abstracted transport layer) as residing under a single SDN controller, the reality is more complicated. All the resources of a network typically consist of different elements under different controllers. However, one of the beauties of virtualized elements – especially open ones – is that layering, paralleling, and coordination are easily achieved. Fortunately, a lot of this complexity has already been worked out for storage, compute, and, more recently, networking. All that's left is adding the abstracted transport.

Where transport fits in the SDN puzzle

The various controllers are integrated at an orchestration layer. For example, a "hypervisor" may run the compute and storage resources inside a data center. A separate SDN controller (e.g., an OpenDaylight controller) would run the networking resources. These two controllers are joined by an orchestration layer above them. The transport layer would not directly report to the orchestration layer. Rather, a network hypervisor would virtualize the transport layer and report into the existing network SDN controller (see Figure).

If we look at a detailed implementation whereby OpenStack provides the orchestration, storage would be controlled by Cinder and compute by Nova. Networking was added to OpenStack with the Neutron plug-in, which uses a REST interface to talk to an SDN controller like OpenDaylight, which, in turn, uses OpenFlow to communicate with the network. The abstracted transport layer is appended to this OpenDaylight controller via an OpenFlow link to a network hypervisor.
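The reporting chain in this example can be written down as a small tree, which makes it easy to see that transport reaches the orchestrator only through the SDN controller. The sketch below is a toy data structure built from the names in the text, not OpenStack or OpenDaylight code; `HIERARCHY`, `control_path`, and the "packet network" label are assumptions made for illustration.

```python
from typing import List, Optional

# Each key controls the entries nested beneath it, per the description above.
HIERARCHY = {
    "OpenStack": {
        "Cinder": {"storage": {}},
        "Nova": {"compute": {}},
        "Neutron": {
            "OpenDaylight": {
                "packet network": {},
                "network hypervisor": {"transport layer": {}},
            }
        },
    }
}


def control_path(tree: dict, target: str) -> Optional[List[str]]:
    """Return the chain of command from the top of the tree down to target."""
    for name, children in tree.items():
        if name == target:
            return [name]
        below = control_path(children, target)
        if below is not None:
            return [name] + below
    return None


print(control_path(HIERARCHY, "transport layer"))
```

The printed path runs from OpenStack through Neutron, OpenDaylight, and the network hypervisor before it reaches the transport layer.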

The entire network now falls within the realm of OpenStack orchestration. Applications may then be written on top of OpenStack using RESTful application programming interfaces. Other non-OpenStack implementations are possible as well, of course.

Real-world use cases

Given that this scenario amounts to such a substantial leap for SDN technology, it's understandable that potential adopters want to see bona fide business justification before making the transition to transport SDN. Here are some real-world use cases that illustrate transport SDN's considerable potential business benefits.

Bandwidth calendaring. Daytime, evening, and overnight traffic patterns are distinct enough from one another that bandwidth consumption cycles can be identified and characterized. And just as cities have learned to reverse traffic flows on some highway lanes to accommodate morning and evening rush hour commutes, the configuration of optical transport networks can be optimized with SDN to most efficiently handle predictable traffic patterns (e.g., transport connections can be reconfigured to deliver maximum capacity between midnight and 4 AM for regular data backups).

Since the cycle is known and well understood, this technique is often called "proactive" bandwidth calendaring. The need for peak provisioning on every link is eliminated, and various costs (capital expenditures, power, etc.) are substantially reduced. And we are not talking about a few percentage points here. Early modeling at a Tier 1 service provider is showing a 35% reduction in provisioned circuits.
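To make the idea concrete, here is a minimal sketch of a proactive calendar for a single link, compared against static peak provisioning. Only the midnight-to-4 AM backup window comes from the example above; the other windows, every capacity value, and the names `CALENDAR`, `capacity_for_hour`, `calendared_gbps_hours`, and `static_gbps_hours` are assumptions for illustration.

```python
# Hypothetical daily calendar: (start_hour, end_hour, capacity_gbps).
CALENDAR = [
    (0, 4, 400),    # backup window from the text; capacity is assumed
    (4, 18, 100),   # assumed daytime level
    (18, 24, 200),  # assumed evening level
]


def capacity_for_hour(hour: int) -> int:
    """Capacity the controller would configure for a given hour of the day."""
    for start, end, gbps in CALENDAR:
        if start <= hour < end:
            return gbps
    raise ValueError(f"hour out of range: {hour}")


def calendared_gbps_hours() -> int:
    """Capacity-hours provisioned per day when following the calendar."""
    return sum((end - start) * gbps for start, end, gbps in CALENDAR)


def static_gbps_hours() -> int:
    """Capacity-hours per day when the link is nailed up at its peak."""
    peak = max(gbps for _, _, gbps in CALENDAR)
    return 24 * peak
```

With these assumed numbers the calendar provisions 4,200 Gbps-hours per day against 9,600 for a link held at peak. The figures are purely illustrative and are unrelated to the 35% result cited above.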

Follow the sun. Transport SDN also can help networks more efficiently shoulder traffic demands as they predictably swell and shrink from region to region over the course of a day. Traffic surges between Europe and the east coast of the United States in the early mornings of the North American business day and from east to west across the United States as the North American business day wears on. Then, just as over-the-top video streaming picks up on home TVs in the US, Asia's business day is beginning and transpacific traffic spikes.

To accommodate such variations without transport SDN, all static optical circuits must be provisioned all the time to handle the moments of peak traffic, even though these peaks can be predicted. To make matters worse, these international gateway connections are often the most expensive, with even Tier 1 service providers forced to lease these shared undersea physical links. Transport SDN enables a service provider to lease the bare minimum capacity that's needed at any given moment.

IP offload management. Then there are the unexpected and unpredictable spikes in network bandwidth. Responding accordingly is often called "reactive" bandwidth calendaring. In this case, an IP offload manager is used to gauge the volume of traffic flowing through a router port. With transport SDN, the network can be configured so another end-to-end link is brought up and provisioned to offload the first when a preset threshold is exceeded; the second link can be de-provisioned and traffic directed back to the first link when volume falls back below the threshold.

Routers have a basic ability to respond to spikes; with SDN controlling an abstracted optical layer, the capability to provision optical circuits and additional switching capacity is added for a valuable IP offload approach. In early testbed trials, circuit provisioning has been demonstrated in less than a minute, with de-provisioning occurring in less than 5 sec. When the time to bring up and tear down a circuit changes from weeks to minutes, a new paradigm in transport efficiency arrives.
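The threshold behavior described above could look something like the following sketch. `OffloadManager` and its `provision` and `deprovision` callbacks are hypothetical stand-ins for whatever a real controller would expose, and the 80 Gbps threshold is an arbitrary example value.

```python
from typing import Callable


class OffloadManager:
    """Single-threshold reactive offload, following the description above."""

    def __init__(self, threshold_gbps: float,
                 provision: Callable[[], None],
                 deprovision: Callable[[], None]) -> None:
        self.threshold_gbps = threshold_gbps
        self.provision = provision
        self.deprovision = deprovision
        self.offload_active = False

    def observe(self, port_gbps: float) -> bool:
        """Process one traffic sample; return whether the offload link is up."""
        if port_gbps > self.threshold_gbps and not self.offload_active:
            self.provision()      # bring up the second end-to-end link
            self.offload_active = True
        elif port_gbps < self.threshold_gbps and self.offload_active:
            self.deprovision()    # tear it down and return traffic to the first
            self.offload_active = False
        return self.offload_active


# Usage: record the actions taken for a short series of samples.
events = []
manager = OffloadManager(80.0,
                         lambda: events.append("up"),
                         lambda: events.append("down"))
for sample in (60.0, 85.0, 90.0, 70.0):
    manager.observe(sample)
# events is now ["up", "down"]
```

A production design might use separate up and down thresholds to avoid flapping when traffic hovers near the limit; that refinement is not part of the description above and is left out here.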

Data-center load balancing. Load balancing already occurs within data centers, with virtual resources shifted around in blocks among clusters as activity spikes. But what happens when an entire data center is overwhelmed? Transport SDN allows efficient load balancing among geographically dispersed data centers via a centralized controller with full visibility of all layers of the network. For example, a new end-to-end flow path could be configured to shift load to available clusters in remote data centers if CPU loads in a given cluster of VMs exceed 80%. With transport SDN, such a shift can happen almost immediately; without it, weeks or maybe even months would be required to accomplish such an undertaking.
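The 80% trigger in this example might be expressed as below. It is a sketch under stated assumptions: the function name `pick_remote_cluster`, the cluster names, and the rule of choosing the least-loaded remote cluster are all hypothetical, and a real controller would also weigh path cost and available transport capacity.

```python
from typing import Dict, Optional

CPU_THRESHOLD = 0.80  # the 80% trigger from the example above


def pick_remote_cluster(loads: Dict[str, float], local: str) -> Optional[str]:
    """Choose where to shift load, or None if no shift is needed or possible.

    loads maps cluster name to CPU load as a fraction between 0 and 1.
    """
    if loads[local] <= CPU_THRESHOLD:
        return None  # local cluster is within limits
    candidates = {
        name: load
        for name, load in loads.items()
        if name != local and load < CPU_THRESHOLD
    }
    if not candidates:
        return None  # every other cluster is also overloaded
    # Assumed policy: send load to the least-loaded remote cluster.
    return min(candidates, key=candidates.get)
```

For instance, with loads of 0.92 in an "east" cluster, 0.40 in "west", and 0.55 in "eu", a call for "east" would select "west", after which the controller would set up the new end-to-end flow path.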

The majority of bandwidth used between data centers today consists of load balancing, replication, and local caching. With the intelligence of transport SDN, less bandwidth is needed between sites, and the available bandwidth is used more wisely.

Trust but verify

Virtualization has come to storage, compute, and networking; transport is the next logical step. And that future is not so far off. Various development testbeds around the world are demonstrating the valuable and dynamic flexibility that SDN can bring to transport as well as the interoperability of the technology's open-sourced enabling software and hardware.

Imagine what may happen once higher-layer applications being written today for storage and compute clouds suddenly have the transport networks at their command as well. The possibilities are mind-boggling.

JIM THEODORAS is senior director of technical marketing at ADVA Optical Networking.

About the Author

Jim Theodoras

Jim Theodoras is vice president, R&D at HG Genuine USA.