Reducing the cost of broadband by lowering data-center footprint

By CHERI BERANEK

Much has been written about "carbon footprint." But for data-center designers and managers, reducing footprint is not just about going green. Let's review the opportunities associated with "going lean" and reducing data-center space requirements.

The media barrage us with messages about how to lose weight. Regardless of the method, the overriding message is that carrying around excess fat stresses our skeletal systems. Our broadband networks are no different. As increasing bandwidth and performance requirements have resulted in an explosion of deployed fiber, more and more data is being pushed through these networks for dynamic storage and retrieval. The resulting expansion of data centers has been rapid, and real estate is being quickly consumed.

The strain this expansion has put on data centers extends beyond the capacity and redundancy of servers and switches to the physical media - the highway that carries all of that data. Multimode fiber has replaced copper. Newer 50-µm laser-optimized fiber is now replacing conventional multimode - and in turn is being replaced by singlemode fiber, with its virtually limitless bandwidth potential.

Effective data-center design is crucial to the long-term viability of cost-effective broadband management. To ensure a "lean" footprint today and into many tomorrows, forethought is required.

Data-center architectures and requirements


Today's data centers are complex facilities. Most consume more power than a small town and have auxiliary generators in case they lose utility power. Because all that power ends up as heat, cooling the data center consumes still more power. Some companies are building data centers in traditionally "cold" locations (such as Duluth, MN, and Finland) to use the cold air to cool the data center more economically.

The wiring for a data center can be a work of art or a bird's nest. Most data centers have at least three different types of cabling (copper, fiber, and power). In addition, to provide increased resiliency, data centers have two sets of each type of cable, referred to as the A side and the B side. Systems housed in the data center connect to both the A-side and B-side cabling, which gives them redundant power, network, and other connections. Keeping the copper data cables separated from the power cords is important because the power cords can induce interference in the signals on the copper. Fiber, of course, is not affected by that type of interference.

There are two options for running the cabling: under the floor and above the rack. Some data centers use a combination of the two options, but the general trend is to run the cables through troughs mounted above the racks. This approach makes the cable much easier to run, locate, and maintain.

All these cables connect equipment in racks. Servers, storage arrays, and network switches are all rack-mounted with rack depths approaching 3.5 feet. Unfortunately for Hollywood, reel-to-reel tape has been retired, and what tape is in use today looks more like VHS. As noted earlier, most equipment can accept redundant connections for power, networking, and storage, and today's racks can be configured with in-rack power distribution units (PDUs), dual PDUs, temperature sensors, and remote power control.

Current cost considerations for size and space

With all the power, cooling, and cabling requirements, data centers are expensive. Current studies indicate that building a data center can cost $1,500 to $2,000 per square foot of data-center space, not including the costs for racks, servers, etc. Renting square footage within someone else's data center (normally referred to as co-location) can run $30 to $50/square foot/month, depending on the type of data center.

The Uptime Institute has developed a classification system for data centers based on the redundancy and resiliency built into the facility. Four tiers categorize data centers from least resilient (Tier 1) to most resilient (Tier 4). Redundant hardware and cross connections can add more resiliency. The more resilient the data center, the more expensive it will be.

With the increased use of the Internet and customer demands for systems that are available 24/7, companies are beginning to move their applications from traditional in-house Tier 1 or, at best, Tier 2 data centers to professionally managed Tier 3 or 4 co-location facilities. These facilities cost more to use, so companies are expending considerable resources to reduce their footprint and the amount of wasted space (aisles, etc.).

Technologies such as blade servers and virtualization have enabled companies to reduce their footprint. But the amount of wasted space is still high because current racks require access aisles on both sides: one to reach the front of the server, switch, or other equipment and the other to reach the cabling on the back. Traditional designs, which require 3- to 4-foot-wide aisles on each side of the racks, waste a significant amount of space. New fiber management technology and cassette connections eliminate the back aisle because all of the functionality and cables can be accessed from the front.

Scalability crucial to cost-effective growth

For today's data centers, traditional fiber management methods are just overkill - they have too many needless components that drive up costs. Rather than "changing the lipstick," today's fiber management must be designed from conception for high-density environments.

Fiber management today must be flexible and configurable so it can be scaled to suit a variety of environments. In addition, today's economic environment forces all data-center providers to align capital equipment spending as closely as possible with data-center utilization rates. As a result, it makes economic sense to take fiber management down to the lowest common denominator that aligns with fiber constructions of the day - 12 fibers at a time. Fiber management that scales in 12-port increments lets data-center designers add ports as demand grows. And the upgrade must be done without loss of space at full configuration.
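The effect of the increment size on unused capacity can be sketched in a few lines of Python. This is only an illustration: the 12-port increment comes from the text above, while the 72-port block and the 100-port requirement used for comparison are hypothetical figures chosen to show the arithmetic.

```python
import math

PORTS_PER_MODULE = 12  # increment named in the article


def modules_needed(ports_required: int, ports_per_module: int = PORTS_PER_MODULE) -> int:
    """Smallest number of modules that covers the required port count."""
    if ports_required < 0 or ports_per_module <= 0:
        raise ValueError("port counts must be non-negative and module size positive")
    return math.ceil(ports_required / ports_per_module)


def stranded_ports(ports_required: int, ports_per_module: int = PORTS_PER_MODULE) -> int:
    """Ports that are installed (and paid for) but not yet needed."""
    installed = modules_needed(ports_required, ports_per_module) * ports_per_module
    return installed - ports_required


# Hypothetical requirement of 100 ports, compared across two increment sizes.
for size in (12, 72):  # 72 is an assumed coarser increment, not from the article
    print(size, modules_needed(100, size), stranded_ports(100, size))
```

With these assumed inputs, the 12-port increment leaves 8 ports idle, while the coarser block leaves 44.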

But careful attention must be paid to every element of the fiber. One area of concern is the protection of buffer tubes. Most traditional approaches are inadequate because the buffer tube is stored in a common route path and area. Alternatives that address this challenge with in-device buffer-tube storage reduce the footprint of the overall device and enhance the protection of the fiber sub-unit.

Superior density reduces real estate costs

Fiber management design has long promoted the need for density. We push to increase the number of ports per rack-unit space while balancing the need for fiber access.

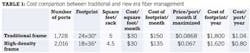

Whether you own your central office, rent, or co-locate, real estate is a cost of doing business. To create a cost metric for the space savings that fiber management can provide, let's use the cost per square foot of renting space in a co-location environment ("a cage") from a third party. While some locations will cost significantly more, Table 1 uses $30/square foot/month.

Assuming every port is in use, dividing the annual cost of the footprint by the number of ports on the frame gives a cost of 80 cents per port per year for the high-density approach. That's 24¢ per port per year less than the $1.04 cost of the traditional approach, a savings of 23%.
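The arithmetic behind these figures can be sketched as follows. The function names are illustrative, not from the article. The footprints and port counts behind Table 1 are not reproduced in the text, so only the published per-port results are plugged in here.

```python
def annual_cost_per_port(footprint_sqft: float, ports: int,
                         rate_per_sqft_month: float = 30.0) -> float:
    """Annual rent for the footprint divided by the ports it houses."""
    return footprint_sqft * rate_per_sqft_month * 12 / ports


def savings(traditional: float, high_density: float) -> tuple[float, float]:
    """Return (absolute saving, fractional saving) relative to the traditional cost."""
    saved = traditional - high_density
    return saved, saved / traditional


# Per-port costs quoted in the article, in dollars per port per year.
saved, fraction = savings(traditional=1.04, high_density=0.80)
print(f"${saved:.2f} per port per year, a {fraction:.0%} saving")
```

Run as written, this reproduces the 24¢ and 23% figures quoted above.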

Front/rear access implications

The real estate savings go beyond density. Fiber management approaches that provide full access to the fibers from the front of the frame alone make better use of the cage or the full data center. While many data centers require up to 4 feet for aisle access, some environments may use as little as 30 inches. Taking that conservative 30-inch figure, a front access design needs 30 inches of aisle space on the front of the frame and none in the rear, whereas a standard platform needs 30 inches on both the front and the rear. As a result, the high-density front access system requires dramatically less square footage than the alternatives.
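A rough sketch of that geometry is shown below. The 30-inch aisle comes from the text, but the frame dimensions (30 inches wide by 24 inches deep) are assumed purely for illustration and are not taken from the article or its tables. The sketch also assumes each frame gets its own aisle space rather than sharing it with a neighboring row.

```python
AISLE_IN = 30  # aisle depth cited in the article, in inches


def footprint_sqft(frame_width_in: float, frame_depth_in: float,
                   aisles: int, aisle_in: float = AISLE_IN) -> float:
    """Floor area for one frame plus its access aisles.

    aisles = 1 means front access only; aisles = 2 means front and rear.
    """
    total_depth_in = frame_depth_in + aisles * aisle_in
    return frame_width_in * total_depth_in / 144  # 144 square inches per square foot


# Hypothetical frame dimensions, not taken from the article.
front_only = footprint_sqft(30, 24, aisles=1)
front_and_rear = footprint_sqft(30, 24, aisles=2)
reduction = 1 - front_only / front_and_rear

print(f"front only: {front_only} sq ft, front and rear: {front_and_rear} sq ft")
print(f"floor space saved by dropping the rear aisle: {reduction:.0%}")
```

For this assumed frame, removing the rear aisle cuts the floor area from 17.5 to 11.25 square feet, before any gain in port density is counted.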

To establish the cost savings of these designs, Table 2 outlines the cost of each approach on a per-port, per-year basis, accounting for both the footprint of the two racks and the required aisle space. The 24 square feet of the high-density front access frame will house 4,032 ports, while the 35 square feet of the traditional method houses 3,456 ports. Assuming every port is in use in this two-frame example, the annual cost per port for the high-density front access frame is $2.04 - $1.61/port/year less than the $3.65 cost of the traditional frame, a savings of 44%.

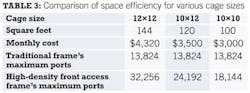

Full cage implications

The full impact of reducing the real estate requirements for fiber management shows up when the floor plan of an entire cage is optimized. High-density front access designs can be deployed either in a back-to-back layout or against the wall (see Figure). As Table 3 details, 32,256 ports could be housed in a 12×12 cage with the high-density front access system, while only 13,824 could be housed using the traditional platform. The high-density front access design thus provides 2.33 times as many ports per cage as the traditional approach (133% more) without jeopardizing ease of use.
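The cage-level comparison can be checked with a short calculation. This is a sketch under two assumptions that the text does not state outright: that the 12×12 cage is measured in feet, and that the same $30/square foot/month rate used earlier applies to the whole cage.

```python
CAGE_SQFT = 12 * 12          # assumes the 12x12 cage is measured in feet
RATE_PER_SQFT_MONTH = 30.0   # co-location rate used earlier in the article

# Port counts per cage quoted in the article.
PORTS = {
    "high-density front access": 32_256,
    "traditional": 13_824,
}

annual_rent = CAGE_SQFT * RATE_PER_SQFT_MONTH * 12  # dollars per year for the cage


def cost_per_port(ports: int) -> float:
    """Annual cage rent spread across every port in the cage."""
    return annual_rent / ports


ratio = PORTS["high-density front access"] / PORTS["traditional"]

for name, ports in PORTS.items():
    print(f"{name}: ${cost_per_port(ports):.2f} per port per year")
print(f"port ratio: {ratio:.2f}x")
```

Under those assumptions, the cage rents for $51,840 a year, which works out to about $1.61 per port for the high-density layout and $3.75 per port for the traditional one, with 2.33 times as many ports in the same space.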

Investing in fiber management is not a necessary evil. Protecting fiber with management devices that scale to your capacity requirements keeps real estate costs in check as the network grows. Unlike a standard resolution to lose weight, this commitment to establishing a lean infrastructure for your data center will pay dividends long into the future.
