Will dispersion kill next-generation 40-Gbit/sec systems?

Despite the new dispersion-compensating fiber, demand for dispersion-management solutions is expected to grow at a healthy pace.

JAMES JUNGJOHANN, RICK SCHAFER, ALAN BEZOZA, and KEVIN GALLAGHER, CIBC World Markets

Since 1982, the transmission speed of optical networks has doubled almost every two years. By the mid-'90s, the de facto standard for SONET transmission and the first WDM systems was 2.5 Gbits/sec. Nortel began shipping true 10-Gbit/sec WDM transmission gear in early 2000. Laboratory or "hero" experiments in the late '90s were already looking at 40 Gbits/sec. Today, some carriers such as AT&T and Qwest are testing 40-Gbit/sec transmissions; Qwest is doing so on one wavelength between Denver and Colorado Springs.

When 10-Gbit/sec optical networks were debated in the mid-'90s, many engineers predicted that dispersion (the natural broadening of light pulses as they travel down the fiber) would cripple the evolution to higher-speed network transmissions on existing fiber cable. Notably, the problem of signal loss, or attenuation, was solved with erbium-doped fiber amplifiers (EDFAs). For designers, still very frugal back then, the biggest hurdle was probably justifying the incremental cost of 10-Gbit/sec hardware compared to 2.5-Gbit/sec systems.

That didn't stop Nortel from pushing early concatenated 10-Gbit/sec (OC-192c) systems, ultimately squashing the competition and enjoying a debatable two-year market advantage over competitors such as Lucent, NEC, Alcatel, and Ciena in 1998. Similar debates now exist as original equipment manufacturers (OEMs) and carriers look to migrate to higher speed 40-Gbit/sec networks in the near future.

Today, network engineers must juggle many different technical issues when designing next-generation networks, including chromatic and polarization-mode dispersion (PMD), attenuation, optical signal-to-noise ratio, and fiber non-linearities. All of these technical issues require tradeoffs with some of the others. The OEMs debate which issue is the limiting factor. Corvis, Ciena, Nortel, and startups Innovance, Solinet, and PhotonEx view PMD as the major issue in 40-Gbit/sec networks. Polarization refers to the electric field orientation of a light signal. PMD arises because a light pulse travels down the fiber with its electric field in two planes at right angles to one another. Under normal conditions, the two components travel at the same speed over the same distance. If one of them is faster or slower than the other, a frequent occurrence when the core of the fiber is not perfectly round, the pulse broadens and may overlap with neighboring pulses, causing bit errors at the receiver.

These same vendors, however, also address chromatic dispersion, which is of particular importance at higher channel counts. The longer the pulse travels down the fiber, the wider it becomes. When the pulse broadens, it can overlap with neighboring pulses, resulting in intersymbol interference. As with PMD, the greater the interference, the greater the likelihood of higher bit-error rates or of the receiver's inability to distinguish between "0s" and "1s."

Believe it or not, many people at the service-provider level are not aware of the two different types of dispersion.

The initial 10-Gbit/sec addressable market for dispersion-compensating devices will surround carriers with older installed fiber. This group includes AT&T, Sprint, Worldcom, and the majority of international carriers. Even in today's capital-constrained service-provider market, older-generation fiber (33% of the installed base) will be in use for some time. Figure 1 indicates that roughly one-third of all installed fiber in the U.S. is in long-haul networks.

In 2000, it was estimated that 80% of the installed fiber was commodity-like standard singlemode, whereas 20% represented high-end long-haul nonzero dispersion-shifted fiber (NZDSF) and metro NZDSF. Growth in long-haul NZDSF was supposed to approach 33% per year through 2004, but the fallout among long-haul carriers will likely show flat NZDSF growth through 2003 with the likelihood of a new cycle beginning in 2003/2004 in conjunction with 40-Gbit/sec networks. Metro NZDSF sales look healthy, but Corning's MetroCor fiber still does not compensate for slope over 100% of the transmission window like Lucent's AllWave.

Can service providers squeeze more out of their existing installed fiber plant? Yes. Therefore, the initial dispersion-management market will first surround carriers using 10-Gbit/sec "old" fiber and then ultimately 40-Gbit/sec deployments for all carriers regardless of installed fiber type. Sales into old fiber networks can still be lucrative. Ciena reports roughly 50% of its sales are still in old 2.5-Gbit/sec systems. The biggest issue is whether chromatic and PMD devices will see a large enough market develop at 10 Gbits/sec to carry vendors until the sizable growth opportunities emerge with 40-Gbit/sec deployments in three years. Due to the relatively small size of the opportunity, the market is likely to be addressed by nimble, niche equipment vendors.

Today, it is greatly debated whether or not Internet traffic will experience the sizable gains witnessed in the last several years. Some believe scaling networks due to the fundamental laws of graph theory may not be needed. But even conservative estimates, now at 50% annual growth, down from 200% to 400% in the late 1990s, would still require immense bandwidth growth. UUNET tells us if the network is to handle the 50% increase in Gbit/sec offered load over 12 months, the Gbit/sec-route-mi capacity of the network must increase 100% about every four months. Hence, the cost of keeping up with this N² phenomenon is still a real issue in network design.

On almost every quarterly conference call, service providers are reporting strong demand for new services and bandwidth, yet equipment expenditures have faltered significantly. This disconnect reflects the fact that carriers are migrating to just-in-time capacity adds rather than building out capacity in advance of demand.

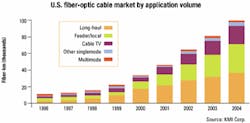

In the short term, most carriers are less inclined to build out new fiber networks. Instead, they are taking advantage of plummeting dark fiber sales, focusing on greater utilization of existing fiber, and maximizing the use of existing WDM equipment (i.e., adding new transponders to each end of the network). When the capital markets were favorable for the carriers, they would build out capacity 18 months ahead of bandwidth demand. But now that 18-month "buffer" has diminished and carrier capacity is added on an as-needed basis. Figure 2 illustrates that carriers are filling up old 40-wavelength systems to 90% of capacity before ordering next-generation gear capable of 160 wavelengths. This "pay as you grow" attitude increases capacity by increasing the number of utilized wavelengths rather than moving to the complexity of faster speed 40-Gbit/sec networks. The migration from 10-Gbit/sec to 40-Gbit/sec transmission speeds results in a fourfold capacity increase. Comparably, the carrier could add 60 more wavelengths to the 20 already in use for a similar capacity increase.
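The comparison between the two upgrade paths can be checked with simple arithmetic. The following is a minimal sketch; the function name `link_capacity_gbps` is ours, and it assumes every lit wavelength runs at the same rate.

```python
def link_capacity_gbps(wavelengths: int, rate_gbps: float) -> float:
    """Aggregate capacity of one fiber: wavelength count times per-wavelength rate."""
    return wavelengths * rate_gbps


# Figures taken from the paragraph above: 20 wavelengths already in use at 10 Gbit/sec.
baseline = link_capacity_gbps(20, 10)
faster = link_capacity_gbps(20, 40)   # same 20 wavelengths upgraded to 40 Gbit/sec
denser = link_capacity_gbps(80, 10)   # 60 more wavelengths added at 10 Gbit/sec

print(f"baseline:               {baseline:.0f} Gbit/sec")
print(f"upgrade to 40 Gbit/sec: {faster:.0f} Gbit/sec ({faster / baseline:.0f}x)")
print(f"add 60 wavelengths:     {denser:.0f} Gbit/sec ({denser / baseline:.0f}x)")
```

Both paths arrive at the same aggregate capacity, which is why the choice comes down to economics rather than raw throughput.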

If you attended the March 2001 Optical Fiber Communication Conference in Anaheim, CA, or the June 2001 Supercomm trade show in Atlanta, you saw all the major OEM vendors claiming an early leadership position in 40-Gbit/sec WDM networks. However, carriers and investors need to separate hype from reality. The simple evolution to 40-Gbit/sec networks is very unclear in today's crippled capital markets. Carriers seem to have slashed their capital spending plans for new long-haul network builds. Instead, carriers have become much more efficient with the $10 left in their back pockets.

Currently, as the cost of components plummets, the economics of adding incremental 10-Gbit/sec wavelengths may be far cheaper than the jump to faster 40-Gbit/sec transmission speeds. But then again, similar economics existed when designers contemplated 10-Gbit/sec systems in 1998. While the near-term economics of 40-Gbit/sec networks are unfavorable, we continue to believe the 40-Gbit/sec migration will happen eventually, albeit at a much slower pace. However, we do see significant 40-Gbit/sec opportunities in short-haul terabit-router interoffice applications where fiber cabling densities must improve.

Additionally, carriers with little new NZDSF may find it very uneconomical to deploy this fiber to accommodate the higher-speed transmission. We suspect several long-haul carriers such as AT&T, Sprint, and Worldcom will migrate to a leased long-haul strategy this year rather than continue building their own networks. Many of these carriers simply have not deployed the needed amount of next-generation fiber. Most conventional singlemode fiber (SMF) is capable of handling 10-Gbit/sec speeds but may be unsuitable for 40-Gbit/sec speeds. That is debated, however, and many OEMs, including Siemens, are designing their 40-Gbit/sec systems around SMF. It seems possible that standard SMF can support 40-Gbit/sec transmission, provided dispersion is managed effectively.

Carriers have two choices when adding capacity: keep adding incremental wavelengths with existing systems, or migrate to next-generation systems with additional wavelength capacity or higher 40-Gbit/sec speeds. The prohibitive economics of 40-Gbit/sec networks will likely push meaningful 40-Gbit/sec network deployments out until 2003. In the meantime, technical challenges for these high-speed 40-Gbit/sec optical networks will surround:

- Chromatic and polarization-mode dispersion management.

- External modulators to reduce laser chirp and dispersion.

- Special modulation schemes like duo-binary, chirped return-to-zero, quasi solitons, or inverse multiplexing.

- New dispersion-managed fibers such as Corning's LEAF or Lucent's TrueWave.

- Optical time-division multiplexing bypassing the limitations of electronics.

The commercial availability of 40-Gbit/sec optical and electrical components is well behind what the OEMs would lead the public to believe. Although 40-Gbit/sec know-how litters the microwave industry, the higher speeds in optics are very difficult. Source lasers may be attainable in volume, but lithium niobate (LiNbO3) modulators and electronic modulator drivers are in very short supply. Generally, transmitter dispersion compensation is easily implemented, but provides only a limited amount of compensation. At the receiving end, 40-Gbit/sec PIN photodiodes and avalanche photodiodes lack sufficient yields to make them commercially available this year. At the physical layer, clock and data recovery integrated circuits and decision circuits are also in poor supply. Maybe only AMCC and Nortel are doing 40-Gbit/sec clock and data recovery while other OEMs will rely on multiplexing TDM signals.

One of the biggest issues is the development of cost-effective dispersion-management solutions that can be implemented commercially. Simple chromatic-dispersion solutions are available today using dispersion-compensating fibers (DCF) supplied by Lucent and Corning. Dispersion-compensation devices are currently restricted to chromatic-dispersion compensators and dispersion-slope compensators. Typically, chromatic dispersion is solved with a tray of DCF used after amplification. Today, PMD is alleviated by deploying new fibers designed to limit this type of dispersion, but new continuous solutions are coming to market. DCF has been the mainstay for resolving dispersion in existing 2.5-Gbit/sec networks and early 10-Gbit/sec networks.

CIBC estimated the DCF market in 2000 to be near $300 million with Corning holding more than one-half the market share. That is a very high-margin business for both Lucent and Corning, so next-generation subsystems must compete on much lower pricing. New dispersion vendors must come to market with semi-custom differentiated products offering tunability and dynamic management features.

Most OEMs and component vendors agree: the high costs of dispersion management currently will inhibit 40-Gbit/sec deployments. For example, if a PMD compensator costs about $18,000, it is likely a simple 40-Gbit/sec, 40-wavelength DWDM hub link would cost a sizable $1 million. Yafo believes even at $30,000 per wavelength, the cost is one-half that of electronic regeneration.

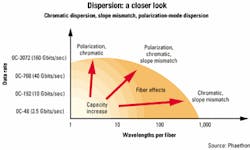

The economics of managing chromatic dispersion are also costly. For instance, if CIBC were to use LaserComm's broadband slope-matching dispersion-compensation module, it might cost $10,000-$15,000 for the same 40-wavelength network to handle chromatic dispersion. Tunable banded solutions for chromatic dispersion offered in four or eight wavelengths, such as the solution offered by Phaethon, may go for under $10,000 or closer to $1,000 per wavelength. There is also a real estate or "footprint" cost associated with some PMD and chromatic solutions where a 40-wavelength compensation solution may take six racks of equipment when carriers are already cramped for central office space.
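To put the chromatic-dispersion prices quoted above on a common footing, it helps to divide each module price by the number of wavelengths it covers. This is a rough sketch using only the figures in the paragraph; the function name is ours, and the banded price is treated as an upper bound because the text says "under $10,000."

```python
def cost_per_wavelength(module_cost_usd: float, wavelengths: int) -> float:
    """Spread the price of one compensation module across the wavelengths it serves."""
    return module_cost_usd / wavelengths


# Broadband slope-matching module covering a 40-wavelength network ($10,000-$15,000).
broadband_low = cost_per_wavelength(10_000, 40)
broadband_high = cost_per_wavelength(15_000, 40)

# Tunable banded module (upper-bound price of $10,000) covering eight or four wavelengths.
banded_8 = cost_per_wavelength(10_000, 8)
banded_4 = cost_per_wavelength(10_000, 4)

print(f"broadband: ${broadband_low:,.0f} to ${broadband_high:,.0f} per wavelength")
print(f"banded:    ${banded_8:,.0f} (8 wavelengths) to ${banded_4:,.0f} (4 wavelengths) per wavelength")
```

On these numbers the broadband module is far cheaper per wavelength, while the banded device buys tunability at a higher per-wavelength price.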

The big question for network planners is whether dispersion management is still too expensive. Hence, is it less costly to go ahead and drop in optical-electrical-optical regenerators, especially when the current component market is witnessing strong price pressure now that supply is meeting demand? In today's harsh component environment, there will also be a willingness for vendors to forward price, aiding material costs for next-generation subsystem developers.

Therefore, dispersion suppliers will be forced to comply with falling regeneration costs, which today we estimate at $40,000 per wavelength in 10-Gbit/sec networks. Our market forecast assumes some hefty price declines over the next several years. CIBC believes the market will have strong elasticity, so as prices drop, more and more OEMs will fully utilize the benefits of next-generation dispersion-management devices.

Modern DWDM transmission systems convert electrical signals (phone calls, images, sound, video, and e-mails) to optical pulses with a defined repetition rate, also known as bit rate. Each signal is transmitted on a separate wavelength or "channel" and multiple channels can be transmitted simultaneously over a single fiber by multiplexing, or combining, the individual channels together.

Today, many vendors claim transmission in up to 160 different wavelengths. Each wavelength is packed closely together at 50-GHz spacings (0.4 nm). The hardest part is to get all 160 wavelengths to propagate inside a single strand of optical fiber without speeding up or slowing down too much and interfering with one another. However, both the optical power and quality of these signals degrade as they travel through the fiber. While power can be added to the signals with EDFA and Raman optical amplifiers to maintain signal strength, at a certain point in the network the signals must be regenerated due to dispersion and noise. Therefore, regeneration is one approach to dispersion compensation. With optical amplification greatly mitigating the effects of loss or attenuation, dispersion becomes even more important as optical signals travel further.
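The equivalence between a 50-GHz channel spacing and roughly 0.4 nm follows from the standard relation between frequency and wavelength spacing, Δλ ≈ λ² · Δf / c. The sketch below assumes a center wavelength of 1550 nm (the C-band center cited elsewhere in this article); the function name is ours.

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def channel_spacing_nm(center_wavelength_nm: float, spacing_ghz: float) -> float:
    """Convert a frequency spacing to the equivalent wavelength spacing near a center wavelength."""
    center_m = center_wavelength_nm * 1e-9
    spacing_hz = spacing_ghz * 1e9
    spacing_m = center_m ** 2 * spacing_hz / SPEED_OF_LIGHT_M_PER_S
    return spacing_m * 1e9  # back to nanometers


print(f"50 GHz  at 1550 nm: {channel_spacing_nm(1550, 50):.2f} nm")
print(f"100 GHz at 1550 nm: {channel_spacing_nm(1550, 100):.2f} nm")
```

The conversion is an approximation that holds when the spacing is small compared with the carrier frequency, which is the case for DWDM grids.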

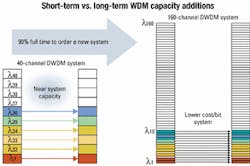

New NZDSFs have helped but are more effective in improving power and reducing non-linear effects. These fibers fail to reduce dispersion in each separate channel, since dispersion is wavelength dependent and accumulates at different rates. Figure 3 shows the dispersion values at different bands of various fiber types. The new fiber does not alleviate the dynamic nature of PMD created when fiber is bent, changes over temperature, sways in the wind, or is jiggled by a passing train. Conventional methods of dispersion compensation have technical drawbacks and lack an ability to serve as cost-effective solutions. Dispersion-compensating fiber designed for negative dispersion can be periodically added to the network to undo or reverse some of the effects of dispersion. It cannot, however, provide the precise dispersion correction needed per wavelength (termed slope compensation) and cannot tolerate the high powers required for fast bit-rate multiwavelength systems.
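How much negative-dispersion fiber is needed to "undo" a span can be estimated by balancing accumulated dispersion at a single wavelength. The numbers below are illustrative assumptions, not figures from this article: about 17 psec/nm/km is a commonly quoted value for standard singlemode fiber near 1550 nm, and the -100 psec/nm/km DCF coefficient and 80-km span are hypothetical. The sketch ignores dispersion slope, which is exactly the limitation described above.

```python
def accumulated_dispersion_ps_nm(coefficient_ps_nm_km: float, length_km: float) -> float:
    """Chromatic dispersion accumulated over a span at one wavelength."""
    return coefficient_ps_nm_km * length_km


def dcf_length_km(span_dispersion_ps_nm: float, dcf_coefficient_ps_nm_km: float) -> float:
    """Length of DCF whose negative dispersion cancels the span's accumulated dispersion."""
    if dcf_coefficient_ps_nm_km >= 0:
        raise ValueError("DCF coefficient must be negative to compensate positive dispersion")
    return span_dispersion_ps_nm / -dcf_coefficient_ps_nm_km


# Assumed values for illustration only (see lead-in).
span = accumulated_dispersion_ps_nm(17.0, 80.0)   # 80 km of standard SMF
dcf = dcf_length_km(span, -100.0)                  # hypothetical DCF coefficient

print(f"span dispersion: {span:.0f} psec/nm")
print(f"DCF needed:      {dcf:.1f} km")
```

Because the cancellation is exact only at the chosen wavelength, channels at the edges of the band are left with residual dispersion, which is why slope compensation becomes necessary at high channel counts.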

Dispersion "management" is multifaceted. Dispersion management is actively correcting the effects of PMD and maintaining the balance between chromatic dispersion and dispersion plus precise slope correction in an optical network. Dispersion results not only from the fiber, but also from numerous network elements such as EDFAs.

Coming up with an economical solution to resolving both chromatic and polarization-mode dispersion is a key issue in lowering 10-Gbit/sec and 40-Gbit/sec network costs for commercialization. Current systems experience significant signal degradation after 400- to 600-km transmission due to noise and dispersion. After the signal quality has deteriorated to that point, the signal is near the limit of a receiver's ability to distinguish pulses, and regenerators must be used to convert the signal to electrical pulses, regenerate the signal electronically, and convert it back to an optical signal.

Regeneration is a costly process sometimes accounting for up to 50% of the cost of the entire network. That is the main reason why network designers are now struggling to expand the regeneration length beyond 600 km to up to 3,000 km for ultra-long-haul systems. Ultra-long-haul systems are now in commercial rollouts from OEMs such as Corvis and Nortel. Importantly, the amount of signal interference due to chromatic and polarization-mode dispersion increases as the square of the increase in bit rate. That is also proportional to distance and to the number of wavelengths in use. So as network capacity expands to meet bandwidth demand with increased channel density and higher data speeds, dispersion management becomes one of the most critical bottlenecks in advanced optical networks as Figure 4 illustrates.
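The scaling rule stated above (interference growing with the square of the bit-rate increase, and proportionally with distance and wavelength count) can be turned into a quick relative estimate. This is only a sketch of the article's rule of thumb, not a physical model; the function and parameter names are ours.

```python
def relative_dispersion_impact(
    bit_rate_ratio: float,
    distance_ratio: float = 1.0,
    wavelength_ratio: float = 1.0,
) -> float:
    """Relative growth in dispersion-induced interference under the rule of thumb above."""
    return bit_rate_ratio ** 2 * distance_ratio * wavelength_ratio


# 10 Gbit/sec -> 40 Gbit/sec on the same link.
speed_only = relative_dispersion_impact(40 / 10)

# Same speed jump while also stretching regeneration spacing from 600 km to 3,000 km.
speed_and_reach = relative_dispersion_impact(40 / 10, distance_ratio=3000 / 600)

print(f"4x bit rate:              {speed_only:.0f}x")
print(f"4x bit rate and 5x reach: {speed_and_reach:.0f}x")
```

The factor of 16 for the speed jump alone shows why a fourfold increase in bit rate tightens dispersion requirements far more than a fourfold increase in distance would.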

There are several factors driving the demand for next-generation dispersion-management solutions:

Higher channel counts. The number of optical channels per fiber has risen from early 16-channel systems to systems with more than 160 channels. These systems transmit in both the C-band and the L-band and require dispersion management across both bands. This expansion in wavelengths means that there are large differences in the rate of dispersion accumulation between the shortest and longest wavelengths. The more wavelengths in use, the greater the need for dispersion management. In high channel count systems, channel spacing is very close and requires continuous broadband management of dispersion and dispersion slope. Management of dispersion slope is challenging and entails adjusting for higher dispersion at some wavelengths. This wavelength dependence means that there may be large differences in dispersion slope between the shortest and longest wavelengths. The preferred dispersion-slope management in today's high-channel systems is "continuous," correcting for all wavelengths simultaneously, rather than "channelized." But it can also be managed or fine tuned with banded tunable devices operating in just a small group of four to eight wavelengths.

Faster transmission speeds. Next-generation 40-Gbit/sec systems require 16 times more accuracy in dispersion management than 10-Gbit/sec systems on the same fiber. Because of this requirement, full-band continuous dispersion management is extremely difficult for 40 Gbits/sec. For 40-Gbit/sec systems to be effective, chromatic-dispersion correction must be 99% accurate across the entire operating band; that is, the correction must be within 1% of the accumulated dispersion. On an absolute basis, regardless of the length of the span, the correction should be near 15 to 20 psec/km. As an example, dispersion on a 100-km segment of Corning's ELEAF fiber is 420 psec/nm at 1550-nm wavelength (the center of the C-band). It's different at every wavelength due to dispersion slope. For a 40-Gbit/sec system to work on ELEAF fiber, the correction must land within 416-424 psec/nm at 1550 nm, which is a very tight tolerance.

Longer distances between regeneration. Dispersion is a physical effect of any type of optical fiber and is proportional to the fiber length. Continuous dispersion management over large bands becomes even more indispensable as the spacing between regenerators increases. Ultra-long-haul transmission can now occur over 5,000 km before regeneration. Regenerators are costly devices that account for a significant portion of the entire network cost. Operators are pushing toward solutions with greater regeneration spacing, particularly when using systems with more than 40 channels. Extending the optical link (distance between regenerators) actually used in the system has progressively required more and more precise dispersion management for each of the spans between amplifiers. Next-generation return-to-zero chirped or "quasi" soliton transmissions will require more evenly spaced dispersion-compensation modules.
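The 1% tolerance quoted above for 40-Gbit/sec systems can be reproduced from the ELEAF example: 420 psec/nm accumulated over 100 km, with the correction allowed to miss by 1% either way. A minimal sketch, with function and variable names of our own choosing:

```python
def correction_window_ps_nm(accumulated_ps_nm: float, tolerance: float = 0.01) -> tuple[float, float]:
    """Range within which the applied correction must fall, given a fractional tolerance."""
    margin = accumulated_ps_nm * tolerance
    return accumulated_ps_nm - margin, accumulated_ps_nm + margin


# 100 km of ELEAF fiber at 1550 nm, per the figures in the text.
accumulated = 420.0
low, high = correction_window_ps_nm(accumulated)

print(f"per-km dispersion: {accumulated / 100:.1f} psec/nm/km")
print(f"correction window: {low:.1f} to {high:.1f} psec/nm")
```

The window rounds to the 416-424 psec/nm range given in the text, and because dispersion accumulates with length, the same 1% rule has to hold span after span as the distance between regenerators grows.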

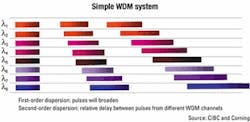

To set the record straight, some dispersion is actually good. A certain amount of manageable chromatic dispersion in the transmission of signals is needed to prevent impeding non-linear effects such as four-wave mixing (FWM). Notably, closer channel spacings of 50 GHz and 100 GHz between each wavelength are more susceptible to non-linear effects than to dispersion. Figure 5 illustrates the first- and second-order dispersion (slope). Pulses broaden as they move down the fiber and there is a relative delay between pulses from different WDM channels. Notice the relative difference in the signals between wavelength 1 and wavelength 8.

A way to avoid FWM is to intentionally introduce some dispersion to break the phase-matching conditions of adjacent wavelengths. Thus, it is possible to create ultra-long-haul transmissions by solving FWM, allowing some dispersion at the point where signals reach the end of the fiber so that the receiver can distinguish a "1" from a "0." Notably, dispersion is a linear effect, and so its effects are reversible, whereas nonlinear effects like FWM are impossible to recover from.

James Jungjohann, Rick Schafer, Alan Bezoza, and Kevin Gallagher are research analysts at CIBC World Markets (Denver), focusing on the optical-networking and components industries.