Improving wavelength stability in ultra-dense WDM systems

Optical PLL technology increases the stability and wavelength accuracy of commercial semiconductor lasers, enabling 12.5-GHz channel spacing of OC-192 signals.

BRAD MELLS, fiberspace

The growth in Internet users and bandwidth-rich Web applications is increasing the demand for low-cost broadband access. Remaining competitive while maintaining profitability poses a significant challenge for network service providers. An economically viable solution requires substantial reductions in the cost to deploy and operate network services. Given current market conditions, such a solution must focus on maximizing the use of existing network infrastructure, while providing scalability to meet future bandwidth demands.

Today, ultra-dense WDM systems with channel-frequency spacings of <50 GHz are becoming a reality. There is a definite trend to increase the number of channels along a single fiber by decreasing the spectral separation between adjacent channels. It's not surprising that Ciena, the market leader in WDM technology, is committed to the development of high-channel-count systems. As the density of optical channels increases, the accuracy and stability of the laser and filter frequencies become critical to transmission performance.

Optical phase-locked loop (OPLL) technology improves the stability of commercial semiconductor lasers, enabling reduction of channel spacing for OC-192 (10-Gbit/sec) signals to 12.5 GHz. OPLL supports favorable network economics by maximizing spectral efficiency while supporting increased optical spans within the network.

High spectral efficiency

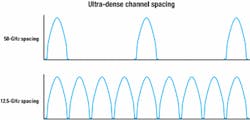

Ultra-dense channel spacing enables higher channel counts, which increases the amount of data transmitted over a single fiber. By reducing channel spacing from 50 GHz to 12.5 GHz, four times as many channels can be added, thereby quadrupling the data transmission capacity of the fiber (see Figure 1). Note that while the 50-GHz spacing leaves much room for error, the channels are barely separated from one another in the 12.5-GHz spacing.

Closer channel spacing improves spectral efficiency, a measure of how much of the available bandwidth is used to transmit data rather than left unused. Individual optical components function properly only over a limited optical spectrum. Thus, increased spectral efficiency reduces the cost per unit of transmission bandwidth by maximizing the use of the available optical spectrum.

Spectral efficiency of a system is calculated as the ratio of the transmission rate in bits per second to the transmission bandwidth in hertz. A WDM transmission incorporating 10 Gbits/sec on each optical channel and a frequency spacing of 50 GHz between adjacent channels achieves a spectral efficiency of only 20% (0.2 bit/sec/Hz). On the other hand, 10 Gbits/sec at 12.5-GHz frequency spacing improves the spectral efficiency to 80% (0.8 bit/sec/Hz).
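The arithmetic behind these figures can be checked with a short Python sketch. The function name `spectral_efficiency` is hypothetical and chosen only for illustration; the 10-Gbit/sec rate and the channel spacings are the nominal values used in the text.

```python
def spectral_efficiency(bit_rate_bps: float, channel_spacing_hz: float) -> float:
    """Return spectral efficiency in bit/sec/Hz (bit rate divided by bandwidth)."""
    return bit_rate_bps / channel_spacing_hz


OC192_RATE_BPS = 10e9  # nominal 10 Gbits/sec per optical channel

# Compare the 50-GHz grid with the denser 25- and 12.5-GHz grids.
for spacing_ghz in (50.0, 25.0, 12.5):
    eff = spectral_efficiency(OC192_RATE_BPS, spacing_ghz * 1e9)
    print(f"{spacing_ghz:5.1f}-GHz spacing: {eff:.1f} bit/sec/Hz ({eff:.0%})")
```

The 25-GHz case is included only as an intermediate point between the two grids discussed above.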

Higher spectral efficiency corresponds to a higher bit-rate throughput of optical components such as amplifiers and dispersion compensators. Utilization of existing network infrastructure is substantially improved by maximizing the data rate transmitted by installed optical components. Thus, enhanced spectral efficiency reduces the cost/bit/sec, enabling the realization of optimum network cost to performance ratios.

As channel density increases and channel frequency spacing decreases, individual channel wavelengths need to be very accurately tuned and absolute stability of those wavelengths must be maintained over long periods of time. To accomplish that, high-stability optical components are required. Such components include laser sources as well as passive components like the optical filters, which are used to combine and separate the multiple wavelengths on a single fiber.

Improving frequency stability

Ultra-dense WDM systems require highly stable and accurate laser wavelengths to function optimally. The causes of frequency drift and the resulting misalignment of lasers and filters in the network are quite complex. While environmental changes that cause the center frequency of optical components (e.g., filters and lasers) to drift in wavelength can be resolved through simple feedback control mechanisms, component aging causes irreversible changes that require more sophisticated control techniques.

There are a variety of approaches to address the problem of component aging. One such approach involves manually adjusting the laser carrier frequency from time to time to achieve optimal network performance. Alternatively, the laser wavelengths are often actively stabilized to the International Telecommunication Union (ITU) grid by feedback-based locking techniques. Passive components such as filters must also be tuned to the ITU grid and may be actively temperature-controlled or based on thermally compensated designs to ensure wavelength stability.

Current approaches fall short

The manual approach, requiring periodic adjustments of laser wavelengths to manage long-term stability, has been used in long-distance submarine optical networks. While this method enables periodic performance optimization, it adds substantial cost to the management and maintenance of the network. Such maintenance cost may be justified in undersea networks, but it is definitely undesirable and unsuitable for terrestrial applications. As a result, active feedback techniques that cause the laser carrier frequency to lock to an optical reference frequency are quite common in terrestrial optical networks.

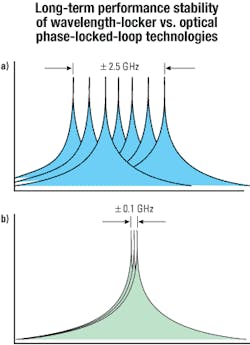

The typical frequency stability achieved by conventional wavelength lockers ("wavelockers") is about ±2.5 GHz. Wavelocker performance can be improved to about ±1.25 GHz with better wavelength reference designs. Most wavelength-locker technology in use today relies on relatively coarse optical references and uses the slope of the resonance for frequency discrimination. Thus, the overall stability of the conventional wavelength-locking device is fundamentally limited by the broad resonance of the optical reference and by unpredictable offsets in the feedback electronics, which can result in errors in the set point of the frequency discriminator.

Although laser-frequency stability performance on the order of ±1.25 GHz is approaching the requirements of the 25-GHz OC-192 system, it is not adequate to support 12.5-GHz frequency spacing. Even at 25-GHz spacing, a stability limitation of ±1.25 GHz requires extreme frequency stability from all other optical components in the system, especially the WDM filters. Furthermore, application of forward error correction (FEC) would be restricted by, if not incompatible with, such a limitation. As increased FEC coding is applied or closer channel spacing (e.g., 12.5 GHz) is pursued, the performance of wavelocker technology becomes insufficient.

Innovations in laser stabilization

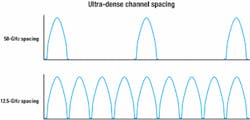

OPLL technology locks the laser frequency by imposing frequency modulation (FM) on the laser to derive a phase-dependent error signal relative to the optical reference frequency. This approach was originally developed by R.V. Pound for stabilization of microwave oscillators [1] and was applied later to lasers by various researchers, including R.W.P. Drever and J.L. Hall [2]. This technique is compatible with extremely sharp optical references that provide much better frequency discrimination than can be obtained with the typical wavelength locker. In addition, the feedback mechanism is self-correcting and therefore insensitive to offsets in the feedback electronics, because OPLL locks the laser to the peak of the optical reference. Figure 2 compares the conventional wavelength-locker design to that of OPLL.

To understand how OPLL works, think of the frequency-modulated laser light as containing two small FM sidebands with equal magnitude and opposite signs. It is the nature of FM to cancel in intensity due to the balance of the power in the FM sidebands. When the modulated field spectrum interacts with an independent optical reference frequency, the beat components of the FM sidebands are only balanced when the laser frequency and reference frequency are matched.

In the case of a frequency (or phase) error, the balance is lost and an intensity-modulated radio-frequency (RF) signal at the FM modulation frequency is detected. The magnitude and phase of the signal are directly related to the difference between the phase of the laser and phase of the optical reference. This phase difference is detected as an intermediate-frequency (IF) signal by mixing the RF signal with the modulation frequency just like in an electrical phase locked loop. Feedback of the IF signal to the laser current causes stabilization of the laser frequency.
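The locking behavior described above can be illustrated with a deliberately simplified Python sketch. It is not the author's implementation: it assumes a Lorentzian reference resonance, treats the modulation as a slow (quasi-static) frequency dither rather than modeling the sideband fields, and uses arbitrary illustrative values for the linewidth, modulation deviation, loop gain, and reference frequency. All function names are hypothetical.

```python
import math


def transmission(freq, ref_freq, fwhm):
    """Lorentzian response of the optical reference, peaking at ref_freq."""
    x = 2.0 * (freq - ref_freq) / fwhm
    return 1.0 / (1.0 + x * x)


def error_signal(laser_freq, ref_freq, fwhm, deviation, samples=256):
    """Demodulate the detected intensity at the modulation frequency.

    The laser frequency is dithered sinusoidally over one modulation period.
    Multiplying the detected intensity by the same sinusoid and averaging
    plays the role of the mixer in an electrical phase-locked loop.
    """
    total = 0.0
    for k in range(samples):
        phase = 2.0 * math.pi * k / samples
        inst_freq = laser_freq + deviation * math.sin(phase)
        total += transmission(inst_freq, ref_freq, fwhm) * math.sin(phase)
    return 2.0 * total / samples


def lock(laser_freq, ref_freq, fwhm=1.0, deviation=0.05, gain=1.0, steps=200):
    """Feed the demodulated error back to the laser frequency (assumed loop)."""
    for _ in range(steps):
        laser_freq += gain * error_signal(laser_freq, ref_freq, fwhm, deviation)
    return laser_freq


REF_GHZ = 193_100.0  # arbitrary illustrative reference frequency, in GHz

print("error below reference:", error_signal(REF_GHZ - 0.2, REF_GHZ, 1.0, 0.05))
print("error above reference:", error_signal(REF_GHZ + 0.2, REF_GHZ, 1.0, 0.05))
print("residual offset after locking (GHz):", lock(REF_GHZ - 0.3, REF_GHZ) - REF_GHZ)
```

In this toy model the error signal changes sign as the laser crosses the reference and vanishes at the resonance peak, so the loop settles on the peak itself rather than on a set point along the slope, which is why it is insensitive to a fixed set-point error.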

The improvement in frequency stability enabled by application of OPLL technology can be quite substantial. Short-term stability is quite good, maintaining the laser center frequency within approximately ±10 MHz. Long-term stability is limited mainly by temperature sensitivity of the optical reference resonator and overall optomechanical stability. Nevertheless, by incorporating thermal compensation techniques into the reference resonator design, long-term stability on the order of ±100 MHz can be realized in practical applications. Figure 3 depicts the relative long-term performance stability limitations of wavelocker versus OPLL technology.
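To put these stability figures in perspective, the following Python sketch expresses each quoted drift limit as a fraction of the channel spacing. The dictionary labels and the `drift_fraction` helper are hypothetical; the numbers are the ones quoted in the text, and the comparison ignores filter shape and signal bandwidth.

```python
# One-sided frequency stability figures quoted in the article, in GHz.
STABILITY_GHZ = {
    "wavelocker (typical)": 2.5,
    "wavelocker (improved)": 1.25,
    "OPLL (long-term)": 0.1,
    "OPLL (short-term)": 0.01,
}


def drift_fraction(stability_ghz: float, spacing_ghz: float) -> float:
    """Worst-case one-sided drift expressed as a fraction of channel spacing."""
    return stability_ghz / spacing_ghz


for name, stability in STABILITY_GHZ.items():
    fractions = ", ".join(
        f"{spacing:g} GHz: {drift_fraction(stability, spacing):.2%}"
        for spacing in (50.0, 25.0, 12.5)
    )
    print(f"{name:22s} {fractions}")
```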

Cost-effective bandwidth

The effort to increase fiber capacity must balance the data rate per wavelength, number of wavelengths, and optical bandwidth occupied by multiple wavelengths. Assuming that the overall capacity is known, and given the selection of a 10-Gbit/sec-per-wavelength (OC-192) format, the required number of optical wavelengths can be determined. The remaining issue involves the allocation of optical bandwidth. This bandwidth requirement is determined by multiplying the number of wavelength channels by the channel spacing in units of frequency.

To illustrate this point with a system supporting 50-GHz channel spacing, consider a sample network intended to support a capacity of 1.96 Tbits/sec. At a 10-Gbit/sec line rate, such a system would require 196 channels. At a channel spacing of 50 GHz, the optical bandwidth required is determined as follows: 50 GHz x 196 = 9.8 THz. That is the maximum optical bandwidth that can be amplified using state-of-the-art erbium-doped fiber-amplifier (EDFA) technology.
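The same calculation can be written as a minimal Python sketch. The helper names are hypothetical; capacities are kept in Gbits/sec and spacings in GHz so the arithmetic stays exact.

```python
def channels_needed(capacity_gbps: int, rate_gbps: int) -> int:
    """Number of channels required, rounded up to a whole channel."""
    return -(-capacity_gbps // rate_gbps)  # ceiling division on integers


def required_bandwidth_ghz(channels: int, spacing_ghz: float) -> float:
    """Optical bandwidth occupied: channel count times channel spacing."""
    return channels * spacing_ghz


channels = channels_needed(1960, 10)  # 1.96 Tbits/sec at 10 Gbits/sec per channel
for spacing in (50.0, 12.5):
    bandwidth_thz = required_bandwidth_ghz(channels, spacing) / 1000.0
    print(f"{channels} channels at {spacing:g} GHz: {bandwidth_thz:.2f} THz")
```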

The advent of the EDFA contributed substantially to the current state of fiber-optic communications technology. The EDFA technology that is most commonly utilized in today's networks operates in the 1530-1560-nm wavelength range, an optical bandwidth of approximately 3.77 THz [3]. This region of the optical spectrum, known as the C-band, is at the center of the lowest-loss region of the fiber transmission. While the C-band EDFA is by far the most widely deployed of all optical-amplifier (OA) technologies in today's networks, the spectral width of the C-band is substantially less than the 9.8 THz required by a 50-GHz frequency grid for 1.96-Tbit/sec transmission.
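The 3.77-THz figure follows from converting the band edges from wavelength to frequency with f = c/λ. A brief Python sketch, with a hypothetical function name and assuming vacuum wavelengths:

```python
SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def optical_bandwidth_thz(short_nm: float, long_nm: float) -> float:
    """Frequency span, in THz, between two vacuum wavelengths given in nm."""
    f_high = SPEED_OF_LIGHT_M_PER_S / (short_nm * 1e-9)  # shorter wavelength
    f_low = SPEED_OF_LIGHT_M_PER_S / (long_nm * 1e-9)    # longer wavelength
    return (f_high - f_low) / 1e12


print(f"C-band, 1530-1560 nm: {optical_bandwidth_thz(1530.0, 1560.0):.2f} THz")
```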

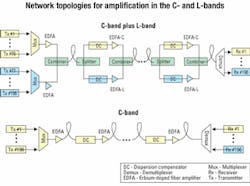

New fiber-amplifier technologies extend the gain region into other bands. Recent advances in EDFA technology have enabled the utilization of gain in the longer-wavelength band from 1560 to 1610 nm. This longer-wavelength region, known as the L-band, creates nearly 6 THz of additional usable bandwidth. But the additional bandwidth comes at a substantial cost in efficiency, since the EDFA gain is substantially lower in the L-band than in the C-band. Thus, L-band amplifiers tend to be considerably more expensive (about two times more) than their C-band predecessors.

The combination of C- and L-bands together does yield enough bandwidth to place 196 channels on a 50-GHz frequency grid. However, this network topology is substantially more expensive than a topology that utilizes a higher spectral efficiency in C-band. While C-band amplifiers operate from 1530 to 1560 nm, L-band amplifiers typically operate from 1570 to 1610 nm. Therefore, a system that utilizes both C- and L-bands needs to incorporate both types of amplifiers.

By utilizing a 12.5-GHz frequency grid, it is possible to fit all 196 channels into the C-band where the optimum gain performance of EDFA technology is realized. Working within the C-band has major advantages because the C-band EDFA is the most efficient OA currently available and dispersion-managed fiber spans are most advanced within the low-loss wavelength regime. In fact, most of the installed base of optically amplified dispersion-managed fiber links are operating in the C-band. Figure 4 illustrates the network topology that would be required to operate both C- and L-bands together on a single fiber, in contrast to the much simpler case of increased spectral efficiency in C-band.

It is important to note that a WDM system operating within the C-band can carry at least 300 channels if a 12.5-GHz spectral grid is used. Even the C- and L-bands together do not contain adequate bandwidth to carry this many channels on a single fiber using a 50-GHz grid. Thus, a 50-GHz EDFA-based system carrying a 3-Tbit/sec data rate would require at least two fibers: one carrying 196 channels in the C- and L-bands and the other carrying the remaining 104 channels in the L-band.
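The channel counts can be estimated by dividing the usable amplifier bandwidth by the grid spacing. The Python sketch below uses the approximately 3.77-THz C-band width quoted earlier and idealizes the problem by ignoring guard bands and gain roll-off at the band edges; the function name is hypothetical.

```python
C_BAND_GHZ = 3770.0  # approximate C-band width quoted in the article


def max_channels(band_ghz: float, spacing_ghz: float) -> int:
    """Whole number of grid slots that fit in the band (idealized)."""
    return int(band_ghz // spacing_ghz)


for spacing in (50.0, 25.0, 12.5):
    count = max_channels(C_BAND_GHZ, spacing)
    print(f"{spacing:g}-GHz grid: up to {count} channels in the C-band")
```

Under these assumptions the 12.5-GHz grid yields just over 300 slots, consistent with the figure cited above, while a 50-GHz grid fits well under 100 channels in the same band.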

Network economics

The economic impact of improved spectral efficiency is significant. By and large, the Internet lacks communities of interest, which is creating a need for ultra-long-haul network overlays where terrestrial links spanning well over 2,400 km are not uncommon. As the optical link span becomes longer, the cost savings associated with increased spectral efficiency become more significant.

In an analysis to determine the cost of a long-haul WDM system on a 12.5-GHz versus a 50-GHz frequency grid, two different optical spans (1,200 and 2,400 km) were considered. The results indicate that for a 1.96-Tbit/sec transmission containing 196 OC-192 channels, a reduction of roughly 30%, from $2.1 million to $1.5 million, in overall network equipment cost is realized at a span distance of 1,200 km. This cost reduction grows to more than 40% ($3.1 million versus $1.8 million) when the optical span increases to 2,400 km.
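The percentages follow directly from the quoted dollar figures, as this small Python sketch shows. The function name and the tuple layout are illustrative only; the costs are those stated above, in millions of dollars.

```python
def cost_reduction(baseline_musd: float, improved_musd: float) -> float:
    """Fractional saving of the improved system relative to the baseline."""
    return (baseline_musd - improved_musd) / baseline_musd


# (span in km, 50-GHz grid cost, 12.5-GHz grid cost), costs in $ millions
CASES_196_CHANNELS = [(1200, 2.1, 1.5), (2400, 3.1, 1.8)]

for span_km, cost_50ghz, cost_12p5ghz in CASES_196_CHANNELS:
    saving = cost_reduction(cost_50ghz, cost_12p5ghz)
    print(f"{span_km} km: ${cost_50ghz}M -> ${cost_12p5ghz}M, saving {saving:.1%}")
```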

The impact of increasing the data rate from 1.96 to 3 Tbits/sec has also been considered. Note that the data rate of the 50-GHz WDM system utilizing both C- and L-bands is maximized at 1.96-Tbit/sec transmission capacity. Conversely, the 3-Tbit/sec data rate corresponds to the maximum transmission capacity of a 12.5-GHz WDM system operating within the C-band. Excluding the cost of the additional fiber that would be required for the additional L-band amplified fiber span, the 300-channel C-band system enables a savings of about 40% (from $3.1 million to $1.9 million) for a 1,200-km span. Increasing the span to 2,400 km results in a dramatic cost reduction of more than 60%, from $4.7 million to $1.8 million.

While high spectral efficiency provides major cost advantages, such compelling economics can be easily obstructed if the transmitted signal integrity is not maintained over suitable distances of fiber. In the past, transmitted signals have been cleaned up through a process of optical-electrical-optical (OEO) regeneration, thus enabling the signals to be recovered, retimed, and retransmitted further down the fiber. This technique is a dominant cost element in the network architecture, because OAs effectively regenerate multiple wavelengths simultaneously, whereas OEO regeneration requires a separate transmitter and receiver for each wavelength. A single OA costs less than OEO regeneration of a single channel.

Efforts to increase the span of optically amplified systems have resulted in substantially more cost-effective solutions. Of the variety of optical techniques proposed to increase all-optical fiber span, virtually all incorporate FEC in some form to improve transmission performance. FEC technology incorporates redundant data that enables bit errors to be corrected by a sophisticated form of parity checking. The ability to correct bit errors effectively improves receiver sensitivity and therefore can result in substantial improvements in fiber transmission distances.

Since redundant data must be transmitted in addition to the digital-signal payload, incorporation of FEC imposes additional bandwidth requirements on transmission. The use of data rates as high as 12.5 Gbits/sec in long-haul WDM systems is not unusual. The extra bandwidth required by the FEC code can have a damaging effect on the transmission system. Although increasing FEC overhead causes a larger total coding gain, the net coding gain may actually decrease due to these transmission penalties.
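A rough sense of the bandwidth cost of FEC can be had from the following Python sketch. It assumes that the 12.5-Gbit/sec figure is the FEC-encoded line rate carrying a nominal 10-Gbit/sec payload; that pairing is an assumption made for illustration, and the function names are hypothetical.

```python
def fec_overhead(line_rate_gbps: float, payload_rate_gbps: float) -> float:
    """Redundant bits as a fraction of the payload rate."""
    return (line_rate_gbps - payload_rate_gbps) / payload_rate_gbps


def rate_to_spacing_ratio(line_rate_gbps: float, spacing_ghz: float) -> float:
    """Line rate relative to channel spacing (bit/sec per Hz of grid)."""
    return line_rate_gbps / spacing_ghz


PAYLOAD_GBPS = 10.0  # nominal OC-192 payload (assumed)
LINE_GBPS = 12.5     # FEC-encoded line rate (assumed pairing with the payload)

print(f"FEC overhead: {fec_overhead(LINE_GBPS, PAYLOAD_GBPS):.0%}")
for spacing in (50.0, 12.5):
    ratio = rate_to_spacing_ratio(LINE_GBPS, spacing)
    print(f"{spacing:g}-GHz grid: line rate / spacing = {ratio:.2f}")
```

Under this assumption the encoded line rate on a 12.5-GHz grid equals the channel spacing itself, which leaves very little margin for any offset between laser and filter frequencies.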

Wavelength drift

In high-spectral-efficiency WDM systems, one major source of transmission penalties is wavelength drift, which results in a relative offset between the center frequencies of the laser and filter. Incorporation of FEC increases the transmission-signal bandwidth and hence imposes tighter tolerances on wavelength stability and accuracy. Only by using highly stable and accurate laser sources can the additional bandwidth required by FEC be compatible with the reduced filter bandwidths needed for ultra-dense WDM optics. By minimizing the signal degradation caused by wavelength instability, optimum FEC performance is realized. Thus, high-stability laser technology is a critical component to extend non-regenerative distances of fiber-optic transmissions, enabling network operators to enjoy the corresponding reduction in network installation costs.

A number of critical economic and technological drivers support the trend toward closer channel spacing in DWDM systems. As the spectral range between optical channels decreases, the requirements for frequency stability and absolute accuracy of optical components in the system increase. Improved laser stability offers numerous benefits for network operators, including greater signal capacity, higher spectral efficiency, and increased optical-fiber span. The most favorable network economics are realized when both spectral efficiency and reach of the optical spans are optimized.

Brad Mells is co-founder and chief technology officer of fiberspace (Woodland Hills, CA).

References

1. R.V. Pound, "Electronic Frequency Stabilization of Microwave Oscillators," Review of Scientific Instruments, Vol. 17, No. 11, pp. 490-505, November 1946.

2. R.W.P. Drever and J.L. Hall, "Laser Phase and Frequency Stabilization Using an Optical Resonator," Applied Physics B, pp. 97-105, February 1983.

3. E. Desurvire, Erbium Doped Fiber Amplifiers, Principles and Applications, John Wiley and Sons (1994).
