Expanding the bandwidth pipe: Subwave technologies

Rather than dedicate a wavelength to a single service, subwavelength grooming techniques now enable carriers to pack wavelengths with as much traffic as possible for more efficient utilization.

By Brian Pratt, Meriton Networks

After 20 years in the telecom industry, I'm fed up. My tolerance for articles that do little more than state the obvious or perpetuate meaningless statements is exhausted. Let's just get to the point! With that said, here is one obvious statement and one not-so-obvious statement:

- Video on the Internet is here to stay, presenting a challenge to service provider infrastructure, both access and transmission.

- Subwavelength aggregation in the transmission network is needed to support video, but aggregation alone is not enough: subwavelength switching is essential too.

First, the obvious part: The IPTV hype can be overwhelming, but I'm sure video will drive Internet growth for a long time. There have been several false starts with video-on-demand (VoD) technology over the last 20 years. Remember when we all thought that VoD was going to be the "killer app" for ATM? The cable multiple-systems operator (MSO) industry, especially in North America, has done very well with TV for a long time. Now all other types of service providers, from older telcos to the latest content providers, want a piece of the action. Video's time as a data application has arrived.

This has significant implications for all operators' infrastructure, particularly the telecom carriers. Remember the rush to deploy fiber during the bubble era around 2000? Everyone gushed about the carriers spending billions to replace copper infrastructure with fiber. Well, the carriers figured out that there was no business case (at that time), and they ended up doing just fine with DSL-based access.

So what has changed? Broadband access and the technology to deliver video over the Internet have matured. Video compression, both standards-based (e.g., MPEG-4) and otherwise, allows a standard-definition (SD) video stream to fit neatly into an ADSL connection of 1 to 2 Mbits/sec. Suddenly, it seems like video is everywhere on the Internet, and not just YouTube, but all kinds of video entertainment.

When I first moved to Europe in the early 1990s, my mother mailed me days-old newspaper clippings to keep me up to date on ice hockey news from Canada. Now I can watch free streamed highlights from the previous night's games every morning over my home ADSL connection in the U.K., and the quality is fantastic. Broadcast agreements still restrict consumption of free live hockey video to North America. However, broadcast rights holders will soon catch up with Internet-based pay-per-view services. I'll be watching live hockey in Europe via the Internet soon, and at reasonable prices!

This anecdote touches on several important issues:

- First, the cable MSOs and satellite companies are ahead with high-definition (HD) TV. People love it, and not just in North America. A February 2007 study by IDATE Consulting & Research reveals that 20% to 30% of homes in France will have HDTVs by 2009.* HD IPTV streams need a minimum of 8 or 9 Mbits/sec per stream, including audio. Unlike SD video, this does not fit neatly into ADSL.

- It is unclear who will actually make money from Internet video. Carriers need to make substantial investments in infrastructure to support it. The economics and copyright aspects for the various stakeholders remain an area of uncertainty—from Hollywood to the carriers to the content providers. At one time, I thought that music on the Internet would remain a pirate industry, but now my iPod is one of my most treasured possessions, and I regularly buy music on the Internet. (Yes, I actually pay for it.) Similarly, video also will eventually take off.

- It is unclear how video will ultimately be consumed at the home. It's not as comfortable watching video on a laptop in a home office chair as it is watching it on the TV in the living room, with your feet up on the coffee table. The fact is no one knows which box will win: the set-top box, the PC, or something else.

- Broadcast TV on the Internet is simple. It's the on-demand part that is interesting and exciting. It is unclear whether on-demand HDTV will be streamed or downloaded and then watched. Whether it is a 9-Mbit/sec stream or a 10-Gbyte file of a two-hour movie, there are major access and backhaul capacity implications around on-demand HD IPTV. Consumers won't wait about 11 hours to download a movie (as is the case for a 10-Gbyte file over 2-Mbit/sec ADSL) or even close to three hours (over uncontended 8-Mbit/sec ADSL2). Either way, anyone who competes with the cable MSOs and satellite TV providers needs to address capacity.
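The download and streaming figures in the last point can be checked with a few lines of arithmetic. This is only a rough sketch: it assumes decimal units (1 Gbyte = 8,000 Mbits), an uncontended link, and no protocol overhead, and the function names are illustrative.

```python
def download_hours(file_gbytes: float, link_mbits_per_sec: float) -> float:
    """Hours needed to move a file over an uncontended link (no overhead)."""
    file_mbits = file_gbytes * 8 * 1000  # assumption: 1 Gbyte = 8,000 Mbits
    return file_mbits / link_mbits_per_sec / 3600


def stream_gbytes(rate_mbits_per_sec: float, hours: float) -> float:
    """Total volume of a constant-rate stream, in Gbytes."""
    return rate_mbits_per_sec * hours * 3600 / 8 / 1000


adsl_hours = download_hours(10, 2)    # 10-Gbyte file over 2-Mbit/sec ADSL
adsl2_hours = download_hours(10, 8)   # same file over 8-Mbit/sec ADSL2
movie_gbytes = stream_gbytes(9, 2)    # two-hour movie streamed at 9 Mbits/sec

print(f"ADSL  (2 Mbits/sec): {adsl_hours:.1f} hours")
print(f"ADSL2 (8 Mbits/sec): {adsl2_hours:.1f} hours")
print(f"Two-hour 9-Mbit/sec stream: {movie_gbytes:.1f} Gbytes")
```

Either way the movie is consumed, the same several Gbytes have to cross the access line and the backhaul behind it.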

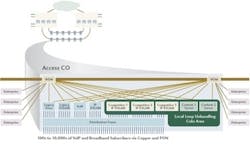

The bottom line: A full-rate Gigabit Ethernet (GbE) per IP DSLAM is a minimum. Hundreds of compressed SD and HD broadcast channels fit into 500 Mbits/sec of capacity. However, the remaining 500 Mbits/sec could support at most a few hundred uncontended on-demand video streams or downloads. If the IP DSLAM is intended to support even 1,000 subscribers, a full-rate GbE is barely enough.
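As a rough check on the per-DSLAM GbE budget, the sketch below splits one full-rate GbE into a broadcast half and an on-demand half and counts how many uncontended streams the on-demand half could carry. The per-stream rates are assumptions taken from the upper ends of the ranges quoted earlier (2 Mbits/sec for SD, 9 Mbits/sec for HD).

```python
GBE_MBITS = 1000        # one full-rate GbE
BROADCAST_MBITS = 500   # share set aside for broadcast channels
SD_MBITS = 2            # assumed SD stream rate (upper end of 1 to 2 Mbits/sec)
HD_MBITS = 9            # assumed HD stream rate (upper end of 8 to 9 Mbits/sec)
SUBSCRIBERS = 1000      # subscribers served by the IP DSLAM

on_demand_mbits = GBE_MBITS - BROADCAST_MBITS

# Whole streams that fit into the on-demand share without contention.
max_sd_streams = on_demand_mbits // SD_MBITS
max_hd_streams = on_demand_mbits // HD_MBITS

print(f"SD on-demand streams: {max_sd_streams} "
      f"({max_sd_streams / SUBSCRIBERS:.1%} of subscribers)")
print(f"HD on-demand streams: {max_hd_streams} "
      f"({max_hd_streams / SUBSCRIBERS:.1%} of subscribers)")
```

Under these assumptions, only a quarter of 1,000 subscribers could watch SD on demand at the same time, and far fewer could watch HD.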

So what does this have to do with subwavelengths?

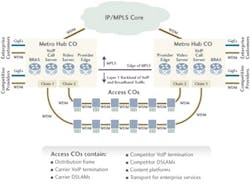

Many next-generation IP/MPLS-based networks already are being built on WDM technology for transmission. WDM is used to backhaul GbEs, SONET/SDH, and other traffic from access central offices (COs) to metro hubs.

IPTV drives full-rate GbE services for backhaul from access COs to metro hub COs: at least one or two for the incumbent carrier itself, and at least one or two more for competitive carriers. Regulators everywhere are forcing local loop unbundling on incumbents, so competitors are putting their own IP DSLAMs with GbE interfaces into the access CO. Additionally, content providers want to place their own platforms in the CO, including video servers, also with GbE interfaces. Finally, enterprise customers will require GbEs as well.

Some of this is IPTV fever, but the result is larger, higher-density urban COs with as many as 10 to 15 GbEs to backhaul. Even when the market shakes out, there still will be a large number of GbEs to backhaul.

These GbEs are full-rate "circuits," backhauled from access COs to metro hub COs where they must be distributed to their destinations. Layer 2 and 3 technologies (IP/MPLS, Ethernet switching, etc.) are great for bursty packet traffic. However, packetizing a full-rate GbE adds cost and complexity. Delivering it via Layer 1, on the other hand, is ideal.

We could stop and say, "Great, WDM backhauls many full-rate GbEs." As mentioned above, however, there are challenges inherent in backhauling such a large number of full-rate GbEs.

In Fig. 2, each of the access COs provides the following protected services:

- Two SONET/SDH services for legacy voice and legacy DSLAM.

- Two GbEs for VoIP and carrier IP DSLAM.

- Three GbEs for three competitors' IP DSLAMs.

- Two GbEs for two content providers.

- Four GbEs for four enterprise customers.

There are, therefore, 13 optical services per access CO. Multiply those 13 optical services by six for the number of COs per chain, and you have a total of 78 optical services, far more than the 40 wavelengths a DWDM system offers per access ring/chain. With two access chains terminating at each metro node, there are 156 such services to be distributed per node.
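The service count can be tallied directly from the list above. The snippet is just a worked version of that arithmetic; the dictionary keys and variable names are illustrative.

```python
# Protected services per access CO, as listed for Fig. 2.
services_per_co = {
    "SONET/SDH (legacy voice, legacy DSLAM)": 2,
    "GbE (VoIP, carrier IP DSLAM)": 2,
    "GbE (competitors' IP DSLAMs)": 3,
    "GbE (content providers)": 2,
    "GbE (enterprise customers)": 4,
}

COS_PER_CHAIN = 6
CHAINS_PER_METRO_NODE = 2
DWDM_CHANNELS = 40  # wavelengths on a 40-channel DWDM system

per_co = sum(services_per_co.values())
per_chain = per_co * COS_PER_CHAIN
per_node = per_chain * CHAINS_PER_METRO_NODE
shortfall = per_chain - DWDM_CHANNELS  # wavelengths missing at one service each

print(f"Services per CO:         {per_co}")
print(f"Services per chain:      {per_chain}")
print(f"Services per metro node: {per_node}")
print(f"Shortfall vs. 40 channels, one service per wavelength: {shortfall}")
```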

As Fig. 2 also shows, access COs arranged in ring or chain topologies could have three, six, or even a dozen full-rate GbEs each to backhaul. Given all of the GbE and non-GbE demands on the chain or ring, even an expensive 40-channel DWDM system will run out of wavelengths if each wavelength carries just one GbE.

In high-density urban environments, carriers are turning to 10-Gbit/sec wavelengths, each carrying as many full-rate GbEs as possible. G.709/OTN and proprietary methods provide eight GbEs per 10G wavelength. Standard Generic Framing Procedure (GFP) allows up to nine GbEs to be multiplexed onto a single 10G wavelength. Delivering GbEs as "subwavelengths" is not new; Virtual Concatenation (VCAT) allows it to be done with SONET/SDH, too. However, the goal of the IP/MPLS-based next-generation network is to deliver this capacity without SONET/SDH.
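To see what subwavelength aggregation buys, the sketch below counts the 10G wavelengths needed for the GbEs on one access chain at eight and at nine GbEs per wavelength. It reuses the Fig. 2 example (11 GbEs per CO, six COs per chain) and makes a simplifying assumption that is not stated in the text: GbEs from different COs on the same chain can share a wavelength. The SONET/SDH services are left out of the count.

```python
import math

GBES_PER_CO = 2 + 3 + 2 + 4   # carrier, competitors, content providers, enterprises
COS_PER_CHAIN = 6


def wavelengths_needed(gbes: int, gbes_per_wavelength: int) -> int:
    """Wavelengths required when each carries a fixed number of GbEs."""
    return math.ceil(gbes / gbes_per_wavelength)


gbes_per_chain = GBES_PER_CO * COS_PER_CHAIN

# Assumption: GbEs from different COs on the chain may share a 10G wavelength.
ungroomed = wavelengths_needed(gbes_per_chain, 1)   # one GbE per wavelength
with_otn = wavelengths_needed(gbes_per_chain, 8)    # eight GbEs per 10G (G.709/OTN)
with_gfp = wavelengths_needed(gbes_per_chain, 9)    # nine GbEs per 10G (GFP)

print(f"GbEs per chain: {gbes_per_chain}")
print(f"One GbE per wavelength:    {ungroomed} wavelengths")
print(f"Eight GbEs per wavelength: {with_otn} wavelengths")
print(f"Nine GbEs per wavelength:  {with_gfp} wavelengths")
```

Under that assumption, the GbE load of a whole chain drops from more wavelengths than a 40-channel system has to fewer than ten.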

The challenge we see for carriers is the metro hub. Designers see many GbEs arriving there, increasingly on 10G wavelengths, which need to be distributed to their destinations. Designers also foresee churn. Enterprise customers, competitive providers, and content providers come and go, adding and removing GbEs as they do.

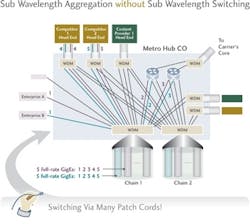

Without switching for those subwavelength GbEs, the carrier uses patch cords, and lots of them. It also needs truck rolls to the metro hub to add or change them.

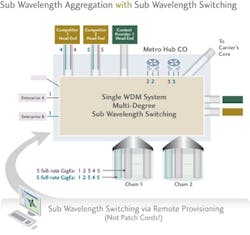

The better approach is to switch them: Use a backplane and switching fabric to take GbEs from one 10G wavelength and either drop them to GbE client ports or switch them to any other 10G wavelengths on any other WDM fiber pairs.

Consider a chain of access COs where each CO has five GbEs to backhaul to the metro hub, as depicted in Fig. 3. The backhaul is done over 10G WDM wavelengths.

At the metro hub CO, the GbEs must be extracted from 10G wavelengths and distributed to their respective destinations.

Without subwavelength switching (see Fig. 4), all GbEs will be dropped out of the WDM system as client ports. If their destinations are onward WDM fibers, patch cords must be used across the floor to plug them back into another WDM system.

With subwavelength switching, multiple WDM fibers plug into the same WDM system, avoiding the need for patch cords to redirect GbEs to WDM destinations. As shown in Fig. 5, the GbEs are distributed from one WDM fiber to another without dropping out as client ports at any point.
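One way to picture subwavelength switching is as a cross-connect table held in software: each entry maps a GbE-sized slot on an incoming 10G wavelength either to a slot on another wavelength or to a client port. The sketch below is a hypothetical model for illustration only. The class names, port labels, and the choice of eight slots per wavelength are assumptions, and it does not represent any vendor's product or management interface.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass(frozen=True)
class Endpoint:
    """A GbE slot on a 10G wavelength, or a GbE client port (hypothetical model)."""
    port: str       # illustrative label, e.g. "fiber-A/wl-1" or "client-1"
    slot: int = 0   # subwavelength slot on the wavelength; 0 for client ports


@dataclass
class SubwaveSwitch:
    """Toy cross-connect table standing in for a subwavelength switching fabric."""
    slots_per_wavelength: int = 8  # assumption: eight GbEs per 10G wavelength
    cross_connects: Dict[Endpoint, Endpoint] = field(default_factory=dict)

    def connect(self, src: Endpoint, dst: Endpoint) -> None:
        """Provision a GbE from src to dst; no patch cord is involved."""
        for endpoint in (src, dst):
            if not 0 <= endpoint.slot < self.slots_per_wavelength:
                raise ValueError(f"slot out of range: {endpoint}")
        if src in self.cross_connects:
            raise ValueError(f"source already in use: {src}")
        if dst in self.cross_connects.values():
            raise ValueError(f"destination already in use: {dst}")
        self.cross_connects[src] = dst

    def disconnect(self, src: Endpoint) -> None:
        """Remove a GbE, for example when a customer leaves."""
        self.cross_connects.pop(src, None)

    def route(self, src: Endpoint) -> Optional[Endpoint]:
        """Return where a GbE is switched to, or None if unprovisioned."""
        return self.cross_connects.get(src)


switch = SubwaveSwitch()
# Pass one GbE straight through from one WDM fiber to another.
switch.connect(Endpoint("fiber-A/wl-1", 0), Endpoint("fiber-B/wl-3", 5))
# Drop a second GbE from the same wavelength to a local client port.
switch.connect(Endpoint("fiber-A/wl-1", 1), Endpoint("client-1"))

print(switch.route(Endpoint("fiber-A/wl-1", 0)))
print(switch.route(Endpoint("fiber-A/wl-1", 1)))
```

In this model, adding, moving, or removing a GbE is a change to a table entry rather than a patch cord on the floor of the metro hub.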

The strength of subwavelength switching technology is remote provisioning, which avoids costly truck rolls and keeps operational expenditures (opex) low. Additionally, subwavelength switching accelerates time to revenue/service activation for the service provider. As for capital expenditure (capex), it helps in two ways:

- It replaces multiple back-to-back systems with one system.

- It avoids burdening the IP/MPLS service layer with packetizing and de-packetizing full-rate GbE flows purely for transport switching. That layer was designed to terminate service-layer packets, not to act as a transport switch.

Layer 1 switched transport is perfect for point-to-point circuit-oriented traffic. It does not replace the role of IP/MPLS in the core or at the service layer, nor does it replace Ethernet-based transport tunnels such as Provider Backbone Transport (PBT). Rather, it complements them and adds value for the service provider that uses it for this growing service type.

Case studies of service providers have shown that up to 60% savings can be achieved with subwavelength switching at the hub node—instead of just subwavelength transport—of GbEs.

It is easy to get lost in the hype around IPTV and IP/MPLS. Savvy service providers have taken a pragmatic look at the traffic today and its expected growth, and they see important roles for wavelength transmission, wavelength switching, subwavelength switching, and Ethernet tunnel transport (especially for sub-GbE flows via new technologies such as PBT), all enabling a new transport architecture for the IP/MPLS network. Subwavelength switching is a great technology for backhaul of full-rate GbEs in the access network.

Now, if I could only see more live hockey games, I'd be happy.

Brian Pratt is Meriton's (www.meriton.com) director of technical marketing, EMEA. He is based in Birmingham, UK, and is an avid ice hockey fan.

Reference

* R. Montagne, IDATE Consulting & Research, "FTTH Deployment Costs: Overview and French Case," Barcelona, February 7, 2007, FTTH Council Europe, Panel Session Architecture Committee. See page 15 of: http://www.reseau-ideal.asso.fr/thd/medias/interventions/pleniere1/pm_attali_idate.pdf.