Research networks revive interest in circuit switching

by N.S. Rao, W.R. Wing, and Jeff Verrant

Supercomputer users at the Department of Energy (DOE) need to move super-sized files, but shared-infrastructure TCP/IP-based networks are unable to handle the bandwidth overload. Dedicated circuits, by contrast, provide dedicated bandwidth and absolutely predictable performance. With dedicated circuits, users are freed from the tyranny of TCP; they can use all the bandwidth for which they have paid.

Of course, even the DOE can’t afford the collection of dedicated circuits that would be required to connect all its supercomputer sites to all its users. Instead, the DOE is turning to high-bandwidth switched-circuit networks. Switched circuits offer the same advantages as dedicated circuits but are more cost effective.

Supercomputers are a lot like telescopes for high-level astronomy; there are a limited number of them, and users must schedule usage time well in advance. When a supercomputer user's allotted time arrives, that user has only a finite window in which to transfer data files from the computer site to the home institution. By scheduling a switched circuit in conjunction with the computer time, the user guarantees that the data (tens or hundreds of terabytes) can be transferred in a timely manner.
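To put "timely" in perspective, the arithmetic can be sketched as follows. This is a rough illustration only: it assumes the full OC-192 line rate (9.95328 Gbits/sec) is available to the transfer and ignores SONET and protocol overhead, so real transfers would take somewhat longer. The function name and the `efficiency` parameter are hypothetical.

```python
# Rough transfer-time estimate for a dedicated circuit.
# Assumption: the whole OC-192 line rate is usable; overhead is ignored
# unless the caller lowers `efficiency`.

OC192_LINE_RATE_BPS = 9.95328e9  # SONET OC-192 line rate, bits per second


def transfer_hours(terabytes: float,
                   rate_bps: float = OC192_LINE_RATE_BPS,
                   efficiency: float = 1.0) -> float:
    """Hours needed to move `terabytes` (decimal TB) at the given rate."""
    if not 0 < efficiency <= 1:
        raise ValueError("efficiency must be in (0, 1]")
    bits = terabytes * 1e12 * 8
    return bits / (rate_bps * efficiency) / 3600


if __name__ == "__main__":
    for size_tb in (10, 100):
        print(f"{size_tb} TB: {transfer_hours(size_tb):.1f} hours")
```

Under these assumptions, 10 Tbytes takes a little over two hours and 100 Tbytes takes about 22 hours, which is why the circuit has to be booked alongside the computer time rather than requested on the spot.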

So if switched circuits are so great, why aren’t they used more often? The answer seems to be that engineers have focused so much effort on packet switching, for its scalability benefits, that circuit switching has been largely forgotten. However, circuit switching offers an ideal solution to demanding requirements. If the total number of sites to be connected is relatively small and the demands are extremely high but can be predicted and scheduled (and, in particular, if there is a need for nonstandard transport), then circuit switching’s benefits make it the only game in town.

Historically, network engineers objected to the use of circuit switching for data applications because an expensive over-provisioned mesh would be required to increase the probability of a connection being available when needed. However, a circuit-switched network that allows reservation or scheduling of connections in advance can offer the advantages of both dedicated and shared infrastructures.
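The idea of reserving connections in advance can be sketched in a few lines. The example below is a minimal illustration of admission control on a single circuit, not a description of any actual scheduler; the class names, fields, and units are all hypothetical. A request is accepted only if the bandwidth already booked during the requested window leaves enough room.

```python
# Minimal sketch of advance bandwidth reservation on one circuit.
# All names here are hypothetical; this is not any real scheduler's API.
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass(frozen=True)
class Reservation:
    start: float  # start time (any consistent unit, e.g. hours)
    end: float    # end time, exclusive
    gbps: float   # reserved bandwidth


@dataclass
class Circuit:
    capacity_gbps: float
    booked: List[Reservation] = field(default_factory=list)

    def peak_load(self, start: float, end: float) -> float:
        """Highest booked bandwidth at any instant in [start, end)."""
        # Load only rises at reservation start times, so it is enough to
        # check the window start plus every start time inside the window.
        points = [start] + [r.start for r in self.booked if start < r.start < end]
        return max(
            sum(r.gbps for r in self.booked if r.start <= t < r.end)
            for t in points
        )

    def reserve(self, start: float, end: float, gbps: float) -> Optional[Reservation]:
        """Book the slot if capacity allows; return None if it does not."""
        if end <= start or gbps <= 0:
            raise ValueError("need end > start and gbps > 0")
        if self.peak_load(start, end) + gbps > self.capacity_gbps:
            return None
        reservation = Reservation(start, end, gbps)
        self.booked.append(reservation)
        return reservation


if __name__ == "__main__":
    link = Circuit(capacity_gbps=10.0)
    print(link.reserve(0, 4, 6.0))   # accepted
    print(link.reserve(2, 6, 6.0))   # rejected: would exceed 10 Gbps at t=2
    print(link.reserve(4, 8, 6.0))   # accepted: first booking has ended
```

Because requests are checked against a calendar rather than against instantaneous availability, the same capacity can be shared among users without the over-provisioning that on-demand circuit switching would need.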

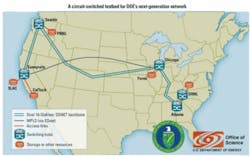

Three years ago, researchers at the DOE’s Oak Ridge National Laboratory started building a testbed network to exercise this approach to high-performance networking in the real world (see Fig. 1). The UltraScience Net (USN) testbed was built on a pair of backbone OC-192 SONET circuits provided by National LambdaRail and SONET switches provided by Ciena. USN has installed Ciena’s CoreDirector multiservice switches at each of its nodes, which are located in Sunnyvale, CA; Seattle; Chicago; Atlanta; and Oak Ridge, TN. The CoreDirector switches provide a full set of backbone ports and between two and four client ports at every location. This USN architecture (CoreDirectors acting as fast switching front-ends for static lambdas) has proven so robust that it is now being adopted by other research and education networks such as Internet2 and LHCnet.

Since the USN testbed has become operational, its users have discovered that it is a valuable resource for testing several other aspects of leading-edge networking as well. Because USN’s Layer 1 paths can be provisioned independently of normal routing, it has turned into an ideal testbed for wide-area hardware and protocol tests. These tests have included techniques for bridging between Layer 2 and Layer 3 networks at USN’s edges and wide-area tests of specialized transport protocols such as InfiniBand.

InfiniBand is a particularly interesting case. It was initially promoted as a data center protocol for building high-performance storage clusters, but it is attracting growing attention for wide-area use. It is inexpensive, it scales well, and it can connect both disk arrays and large clusters.

As interest and need have increased in widely distributed, object-based file systems such as Lustre and PVFS, some vendors have started offering InfiniBand switches with sufficient buffering to be useful in wide-area applications. The CoreDirector switching platforms enable the pair of USN backbone circuits to be used for controlled loop-back tests. Figure 2 illustrates some of the results of looping a pair of Linux hosts, each with an InfiniBand wide-area unit (Longbow devices from Obsidian Research Corp.), out and back through USN. The essentially flat performance achieved independent of distance is in striking contrast to normal network performance.
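Why distance normally matters, and why buffering is the issue, can be illustrated with the bandwidth-delay product. The sketch below is a back-of-the-envelope model, not a reproduction of the Fig. 2 measurements: it assumes light travels at roughly 2×10^8 m/sec in fiber, and the path lengths and window size are hypothetical values chosen for illustration rather than USN figures.

```python
# Back-of-the-envelope bandwidth-delay arithmetic.
# Assumptions: propagation at about 2e8 m/s in fiber; distances and the
# window size used below are illustrative, not measured values.

FIBER_M_PER_S = 2.0e8            # approximate speed of light in glass fiber
OC192_LINE_RATE_BPS = 9.95328e9  # SONET OC-192 line rate, bits per second


def round_trip_s(path_km: float) -> float:
    """Round-trip propagation delay for a one-way path of `path_km`."""
    return 2 * path_km * 1000 / FIBER_M_PER_S


def window_limited_bps(window_bytes: int, path_km: float) -> float:
    """Upper bound on throughput when at most one window is in flight."""
    return window_bytes * 8 / round_trip_s(path_km)


def bytes_in_flight(rate_bps: float, path_km: float) -> float:
    """Data that must be in flight (and buffered) to keep the pipe full."""
    return rate_bps * round_trip_s(path_km) / 8


if __name__ == "__main__":
    for km in (1000, 8000):
        mbps = window_limited_bps(64 * 1024, km) / 1e6
        mbytes = bytes_in_flight(OC192_LINE_RATE_BPS, km) / 1e6
        print(f"{km} km: 64 KiB window -> {mbps:.1f} Mbits/sec; "
              f"full OC-192 needs {mbytes:.1f} Mbytes in flight")
```

With a fixed 64-Kbyte window, the achievable rate in this model falls from about 52 Mbits/sec at 1,000 km to under 7 Mbits/sec at 8,000 km, while keeping an OC-192 full over the longer path requires roughly 100 Mbytes in flight. A device whose buffering scales with that product can hold throughput flat as distance grows.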

In summary, the latest generation of SONET switches is demonstrating the advantages of circuit switching in solving some of the most difficult problems associated with packet switching.

N.S. Rao is Sensor Networks & Information Fusion Group leader and W.R. Wing is senior research staff member and Networking Research Group leader in the Computer Science & Mathematics Division at Oak Ridge National Laboratory. Jeff Verrant is director, research & education initiatives, at Ciena Corp. (www.ciena.com).