Industry debates merits of 100GbE transmission

Last July, the IEEE 802.3 Working Group formed the Higher Speed Study Group (HSSG) to determine whether the time is right to develop 100-Gigabit Ethernet (100GbE) standards. In November the group voted in the affirmative, and now the real work can begin.

The debate over the next benchmark after 10 Gbits/sec once again places SONET/SDH transport (which is finally seeing fielded 40-Gbit/sec systems) out of sync with Ethernet, which remains wedded to 10× generational leaps in capacity. Ethernet supporters argue that it makes no sense to reengineer a system for only a 4× improvement in speed when you can achieve 10×.

Of course, it’s not enough to say, “There is a demand for 10 times more capacity,” although the big Internet exchanges and major telecommunications carriers say there is. You also have to develop the necessary technology, and given the hurdles that 40-Gbit/sec transmission has had to overcome, the development of 100GbE would appear a formidable challenge. However, several vendors say they have already overcome some of these challenges, at least in the near term.

While a serial, single-wavelength 100GbE stream would be the most spectrally and bandwidth-efficient implementation, many industry insiders agree that a parallel approach will be the quickest route to practical 100 Gbits/sec. In its initial implementations, therefore, 100GbE likely will comprise multiple lanes of a lower bit rate.

“100-gig Ethernet is probably going to be the first interface where the total client rate doesn’t match the line rate,” explains Peter Anslow, senior standards advisor for Nortel (www.nortel.com). With the exception of the parallel 10GbE LX4 PMD, Ethernet’s client rate and line rate have always marched in step. But many people are now arguing that decoupling the client and line rates for 100GbE makes the most sense in the short term. Suggestions on the table include 10×10, 5×20, and 4×25 implementations, in addition to a serial option.

A critical challenge that serial 40-Gbit/sec transport has already faced is coping with the physical impairments encountered at higher bit rates. Chromatic dispersion tolerance, for example, falls with the square of the bit rate; a 40-Gbit/sec link has 1/16th the dispersion tolerance of a 10-Gbit/sec link. Dispersion impairments at 100 Gbits/sec, therefore, would be 100× greater than at 10 Gbits/sec. But running multiple lanes at a lower bit rate mitigates such physical impairments. Dispersion is far more manageable at the 10-Gbit/sec rate.
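The 1/16th figure above is consistent with dispersion tolerance falling as the inverse square of the bit rate. The following is a minimal sketch of that arithmetic, assuming a pure inverse-square relationship and ignoring modulation format, fiber type, and compensation; the function name and the list of lane rates are illustrative only.

```python
def relative_dispersion_tolerance(bit_rate_gbps: float,
                                  reference_gbps: float = 10.0) -> float:
    """Dispersion tolerance relative to the reference rate.

    Assumption: tolerance scales as 1 / (bit rate)^2, so quadrupling
    the bit rate leaves 1/16th of the tolerance.
    """
    return (reference_gbps / bit_rate_gbps) ** 2


# Lane rates discussed for 100GbE (10x10, 5x20, 4x25) plus 40G and serial 100G.
for rate in (10, 20, 25, 40, 100):
    tolerance = relative_dispersion_tolerance(rate)
    print(f"{rate:>3} Gbit/s lane: {tolerance:.4f} of the 10G tolerance")
```

Under this assumption, a 25-Gbit/sec lane keeps roughly one-sixth of the 10-Gbit/sec tolerance, while a serial 100-Gbit/sec signal keeps one-hundredth.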

Moreover, says Serge Melle, vice president of technical marketing and business development at Infinera (www.infinera.com), a parallel implementation would provide greater resiliency. If a 100GbE serial data signal were interrupted in any fashion, the entire signal would be lost, whereas two or three 10-Gbit/sec channels potentially could be lost and transmission would continue, albeit at a reduced bit rate.

Infinera champions the 10×10 implementation, which it already has demonstrated over Level3’s network (see “Demo Showcases 100GbE Transmission over Commercial Network” below).

However, David Huff, vice president of marketing and business development at the Optoelectronics Industry Development Association (www.oida.org), argues that a 5×20 implementation would be better, because he believes it would be possible to develop a 5×20-Gbit/sec-capable transceiver in a standard XENPAK module. While the industry still would need to develop the components to support a 5×20 configuration, the mechanical, electrical, and thermal side of the problem would be solved.

Not everyone is sold on the benefits of a parallel approach, however, at least not using currently available technology. Per Hansen, director of business development at ADVA Optical Networking (www.advaoptical.com), says, “It’s not obvious how that would give you any cost saving in a national backbone, for example. When you went from 2.5 to 10 gig, one of the advantages was you got four times as much capacity for typically an increase of two-and-a-half to three times the cost. This is the same that people are looking for at 40G. But if you go to a parallel scheme, it’s not obvious how you would derive similar cost savings.”

Joe Lawrence, principal architect at Level3 (www.level3.com), reports that his company is seeing a tremendous demand from its customers for high-speed connectivity. He says the Level3 network already is running at eight lanes of 10-Gbit/sec traffic between city pairs, using existing link aggregation technology. If carriers already are running multiple lanes today, how much economic value will they derive from bonding those lanes together?

“Technology is now coming out where you could issue multiple signals off the same chip,” explains Lawrence. “You don’t have to limit yourself to just one wavelength per chip. And, of course, there’s a lot of integration in the packaging and what’s on the chip, so you get a lower cost.”

And therein lies the rub. For many, the key issue in the 40G versus 100G debate is not technology, although that certainly plays a big part. It’s economics. Consider Verizon Business (formerly MCI), which has had an active 40G program for some time now. “I think we’ve done more lab trials and field trials than anyone else,” admits Glenn Wellbrock, director of network technology development at Verizon Business (www.verizonbusiness.com). “We have been able to do 40G experiments, at least at reasonable distances, for two years now, almost three. And yet, it still hasn’t become a viable product,” he says. “You don’t see it shipping in volume simply because the cost isn’t right. We’re not looking to go to full commercial deployment until it’s cost effective.”

Implementing, say, a 10×10 architecture using discrete components (10 lasers, 10 modulators, etc.) would be cost prohibitive. But several companies are making headway in the field of photonic integration, particularly Infinera. The vendor is shipping its DTN system, complete with photonic integrated circuits (PICs), worldwide. Each PIC can send or receive 10 lanes of traffic at 10 Gbits/sec, for a total bandwidth of 100 Gbits/sec. And in March 2006, Infinera announced a lab demonstration of a 1.6-Tbit/sec chip that integrates 240 optical devices.

Startup Luxtera (www.luxtera.com) also has entered the photonic integration market with a production die that multiplexes four 10-Gbit/sec wavelengths onto a single fiber, resulting in a 40-Gbit/sec link. The single-wavelength 10-Gbit/sec transceiver is implemented in standard CMOS technology with integrated indium phosphide lasers and is less expensive than existing high-bandwidth parallel interconnections, say company representatives, who note that the same technology paves the way for 100GbE connectivity.

The technology breakthrough that is most often cited as a key enabler of 40-Gbit/sec transmission also could prove critical for the development of a 100GbE serial technology: advanced modulation schemes that mitigate the effects of dispersion by modulating both the amplitude and phase of the signal. “If I had to pick one technology for 100-Gbit serial or single-wavelength transmission, I would say it’s probably DQPSK,” says Huff.

DQPSK, or differential quadrature phase-shift keying, enabled Lucent Technologies’ Bell Labs (now part of Alcatel-Lucent; www.alcatel-lucent.com) to transmit 10 channels of 107 Gbits/sec over a distance of 2,000 km in a demonstration detailed in a paper presented at the recent European Conference on Optical Communication (ECOC). Bell Labs researchers reported that the use of DQPSK technology at 107 Gbits/sec enabled high-speed transport using a prototype fully integrated lithium niobate modulator and hardware similar to that used in 40G networks. They say the use of commercially viable products is a good first step toward the realization of serial 100-Gbit/sec technology.

There seems to be little doubt within the carrier community that bandwidth demand will continue to push bit transmission rates ever higher. “When we look to go forward,” says Level3’s Lawrence, “we see that the key to scaling the network isn’t a router with 10,000 interfaces. It’s a router that can handle very high-capacity interconnection between the large cities.”

Lawrence doesn’t mince words when it comes to Level3’s enthusiasm for 100GbE transmission: “The minute 100-GigE comes out,” he says, “we’re going to be buying lots of them and running them over the wide area.”

In the interim, the carrier is watching the IEEE 802.3 HSSG proceedings with a careful eye. “It is our view that it would be tragic if 100 gig came out and there wasn’t a way to operate over WDM systems,” Lawrence admits. “I’m not saying that it has to have the exact same format as SONET or existing LAN PHY. I might need to go get a 100-gig card, but you can take an existing WDM system that operates at 10-gig rates and add new 10-gig lanes that have different packet formats on them or different bit formats and those could operate together.”

Moreover, while Lawrence reports that Level3 “has some ideas about the technology” (it has shown an early preference for 10×10 implementations), the carrier also believes that the most economical option will be the one that can be shipped in volume. “On the debate of ‘Should we go to 100GbE or 40 gig?’ I would first point to, ‘Well, you put an E at the end.’ To me, that means volumes,” says Lawrence. “In my mind, you’ve told me 100-Gigabit Ethernet is going to be the more economical solution.”

For this reason, he says, Level3 will adopt whatever option the IEEE chooses to standardize. “We’ll debate within the standards body, but whatever they come out with, our goal is just to make sure that that technology can operate over our network,” he contends.

For Verizon Business, historically a champion of 40G, the issue also comes down to economics. “Some folks have asked me, ‘Should we just skip [40G]?’ My answer to that is, ‘No,’” says Wellbrock. “We need 40 gigabits, and we need people to pursue it.” When the industry jumped from 2.5- to 10-Gbit/sec transmission, a new generation of equipment was required, which meant lots of innovation, investment, and R&D activity. The jump from 40- to 100-Gbit/sec transmission, “at least for the folks who paid the price to go from 10 to 40, will be much easier,” he says. “It’s a considerable step, and it’s not going to happen overnight. But it is achievable with the tools and innovation that took place in order to get us to 40.”

Wellbrock notes that a step from 10 to 40 Gbits/sec is, after all, a 4× improvement in bandwidth capacity. But, he says, if Verizon Business could transmit 100GbE at a lower cost than 40G, those economics would prove an irresistible incentive. A 100G transmitter would result in fewer points of failure and fewer devices to manage and spare, which is “just an overall good thing,” says Wellbrock. “And our cost per bit would go down, even if [the vendors] were selling it four or five times [the cost of] a 10G transmitter. Our costs would be much better, and the manufacturer would have a little more room to breathe. Today, they are doing everything they can to try to make 40G cost-effective, trying to get to where they can still make a living selling them and get below this 4×, 4×10G, and they are struggling to do that.”
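Wellbrock's cost-per-bit argument can be checked with simple arithmetic. The sketch below normalizes everything to a 10G transmitter (price 1, capacity 1) and uses only the price multiples quoted above; the function name is illustrative, and the model deliberately ignores sparing, management, and installation costs.

```python
def cost_per_bit(price_multiple: float, capacity_multiple: float) -> float:
    """Cost per bit relative to a 10G transmitter (1.0 means parity with 10G).

    Both arguments are multiples of the 10G baseline, an assumption made
    here purely to normalize the comparison.
    """
    return price_multiple / capacity_multiple


# A 100G transmitter sold at four or five times the price of a 10G unit.
for price in (4.0, 5.0):
    print(f"100G at {price:.0f}x the 10G price: "
          f"{cost_per_bit(price, 10.0):.2f} of the 10G cost per bit")

# A 40G transmitter only reaches parity with four 10G units at a 4x price.
print(f"40G at 4x the 10G price: "
      f"{cost_per_bit(4.0, 4.0):.2f} of the 10G cost per bit")
```

On these numbers, a 100G transmitter at four to five times the 10G price would carry each bit for 40% to 50% of the 10G cost, whereas a 40G transmitter must be priced below four times a 10G unit before it saves anything per bit.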

At the end of the day, says Lawrence, the carriers just want to see a technologically sound, cost-effective method for increasing bandwidth. “This isn’t a WAN PHY versus LAN PHY debate,” he says. “This isn’t us carriers coming in and saying, ‘It needs to follow SONET.’ This is us coming in and saying, ‘We will work with whatever speed ranges are reasonable.’ And we’ll modify our WDM system to interoperate with it.”

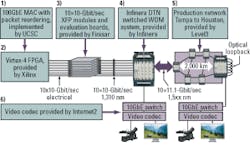

At the SC06 International Conference, held in Tampa, FL, last November, Infinera teamed with Finisar, Level3 Communications, Internet2, and the University of California at Santa Cruz (UCSC) to demonstrate what it claims was the first successful 100-Gigabit Ethernet (100GbE) transmission through a live production network. The 100GbE traffic was transmitted over Level3’s existing DWDM network from the show site in Tampa, FL, to Houston, TX, and back, a total of 4,000 miles.

According to Level3 principal architect Joe Lawrence, Level3 today transports eight lanes of 10 Gbits/sec across its network using existing link aggregation techniques, and he is quick to point out that this demonstration is far more sophisticated. “This is bundling at the MAC layer, not the link aggregation layer,” he says. “This isn’t us just simply running LAG [link aggregation] across ten 10-gig waves. We’re already doing that in our IP network. This is actually making it appear as one 100-gig single pipe.”

The University of California at Santa Cruz provided a 100GbE MAC and packet-reordering algorithm that preserves packet order as individual traffic flows are striped across multiple wavelengths. The algorithm corrects skew caused by variable latency in the optical signals, which is a shortcoming of the existing link aggregation method.
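The general idea of striping a flow across parallel lanes and restoring packet order at the far end can be sketched as follows. This is not the UCSC algorithm, whose details are not described here; it is a generic illustration that assumes per-packet sequence numbers, round-robin lane assignment, and a fixed (hypothetical) delay per lane to stand in for skew.

```python
import heapq
from typing import Iterable, Iterator, List, Tuple


def stripe(packets: List[str], lanes: int) -> List[Tuple[int, int, str]]:
    """Tag each packet with a sequence number and assign a lane round-robin."""
    return [(seq, seq % lanes, pkt) for seq, pkt in enumerate(packets)]


def arrival_order(tagged: List[Tuple[int, int, str]],
                  lane_delay: List[int]) -> List[Tuple[int, str]]:
    """Simulate skew: arrival time = send slot + the delay of the packet's lane."""
    lanes = len(lane_delay)
    timed = [(seq // lanes + lane_delay[lane], seq, pkt)
             for seq, lane, pkt in tagged]
    return [(seq, pkt) for _, seq, pkt in sorted(timed)]


def reorder(arrivals: Iterable[Tuple[int, str]]) -> Iterator[str]:
    """Release packets strictly in sequence order, buffering early arrivals."""
    buffer: List[Tuple[int, str]] = []
    next_seq = 0
    for seq, pkt in arrivals:
        heapq.heappush(buffer, (seq, pkt))
        # Drain every packet that is now next in line.
        while buffer and buffer[0][0] == next_seq:
            yield heapq.heappop(buffer)[1]
            next_seq += 1


packets = [f"pkt{i}" for i in range(20)]
delays = [3, 0, 5, 1, 4, 2, 0, 6, 1, 2]  # hypothetical per-lane skew, in slots
arrivals = arrival_order(stripe(packets, lanes=len(delays)), delays)
received = list(reorder(arrivals))
print("arrived out of order:", [pkt for _, pkt in arrivals] != packets)
print("delivered in order:  ", received == packets)
```

The receiver here trades buffering (and therefore latency bounded by the worst-case skew) for in-order delivery, which is the property that lets ten lanes appear as a single pipe to the layers above.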

The demonstration used an Infinera-proposed implementation for bonding 10 parallel lanes of 10 Gbits/sec into one logical flow. A Xilinx FPGA electrically transmitted all 10 signals to ten 10-Gbit/sec XFP optical transceivers, provided by Finisar, which converted the signals into the optical domain. From there, the signals were transmitted to Infinera’s commercially available DTN Switched WDM System, where they were handed off to the Level3 network.

Lawrence admits that, at least today, such an implementation “is not necessarily economical. It’s a demo chip, but the rest of the solution is economical,” he says. “The WDM system was not changed specifically to handle this.” Nor was the existing network, which is another reason why Level3 was keen to take part in the demonstration.

Serge Melle, vice president of technical marketing and business development at Infinera, believes the demonstration will have far-reaching effects, first because it proves that a 10× jump in the Ethernet domain is technologically feasible. And second, “it’s also recognizing that Ethernet as a protocol has a lot of advantages as the next scaling step-stone rather than just continuing with SONET,” he says. “There’s a lot of consensus within the industry that Ethernet interfaces have much better cost points than core IP routers with SONET interfaces.”