Adapting to Ethernet

The future of Gigabit Ethernet in the adapter market is tied to maximizing user investment.

JONATHAN ALBIN and KENNY TAM, SysKonnect Inc.

More speed, more bandwidth, more designs: the evolution of Gigabit Ethernet (GbE) and network adapters is driven by advancements in server bus technology, intelligent network adapters, and bandwidth-intensive network applications. Peripheral component interconnect (PCI) remains the leading technology, but we are already seeing products for PCI-X. New technologies are on the horizon, including InfiniBand, 3GIO, and HyperTransport. Each new and improved architecture promises more bandwidth and greater performance.

Enhancements in bus technology are increasing the bandwidth of the entire network. The first 32-bit/33-MHz PCI boards have given way to 64-bit/66-MHz boards. The new PCI-X bus will begin at 64 bits and 66 MHz and grow to 64 bits and 133 MHz. PCI-X will offer 1 Gbyte/sec of shared bus throughput, with planned growth to 2 Gbytes/sec and 4 Gbytes/sec.
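The bus figures above follow from simple arithmetic: a parallel bus that moves one word per clock cycle peaks at its width in bytes multiplied by its clock rate. The short Python sketch below works that out for the three bus generations named here. The function name is illustrative, and the results are theoretical peaks that assume one transfer per clock and no arbitration or protocol overhead.

```python
def peak_bus_throughput_mbytes(width_bits: int, clock_mhz: float) -> float:
    """Peak theoretical throughput in Mbytes/sec, assuming one word per clock."""
    return width_bits / 8 * clock_mhz


# Bus generations named in the text: (width in bits, clock in MHz)
buses = {
    "PCI 32-bit/33-MHz": (32, 33),
    "PCI 64-bit/66-MHz": (64, 66),
    "PCI-X 64-bit/133-MHz": (64, 133),
}

for name, (width, clock) in buses.items():
    rate = peak_bus_throughput_mbytes(width, clock)
    print(f"{name}: {rate:.0f} Mbytes/sec")
```

The last line works out to 1,064 Mbytes/sec, which is where the roughly 1-Gbyte/sec figure for PCI-X comes from. Because the bus is shared, every device on it divides that peak.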

Recently, a proposal from the PCI-SIG organization laid out plans for 3GIO bus technology. 3GIO, also known as Arapahoe, uses unsynchronized signals and fewer wires than PCI to transfer data at higher speeds. The current stated objective is for 3GIO to achieve performance levels 12 times faster than those of PCI-X. A final specification is anticipated by the second quarter of 2002, with products appearing in the second half of 2003.

The emerging InfiniBand switched-fabric serial bus will start at 0.5 Gbyte/sec of throughput, with planned growth to 6 Gbytes/sec of full-duplex throughput. InfiniBand is an industry initiative for a high-speed, serial, switched-"fabric" I/O architecture. It removes many of the traditional barriers seen with shared-bus architectures and is intended to become the I/O interconnect for next-generation data-center communications.

With broad industry support, the InfiniBand architecture is viewed as a key element for building reliable, low-cost, high-performance servers. Initially, InfiniBand will be used to connect servers with remote storage and networking devices. It will also be used inside servers for interprocessor communication (IPC) in parallel clusters.

Companies that require dense server deployments, including Internet service providers (ISPs), will also benefit from the smaller form factors that are proposed for InfiniBand. InfiniBand promises better performance, lower latency, easier and faster sharing of data, built-in security, and quality of service (QoS).

Just around the corner is the migration to 10-Gigabit Ethernet, which is standardized for both the LAN and WAN. Therefore, 10-GbE is considered to be an alternative to ATM in the WAN. Today, 10-GbE is only specified for fiber. Businesses anticipating migration to 10-GbE must consider this path when wiring their backbone.

It is unlikely vendors will produce GbE adapters for InfiniBand or any of the other new bus technologies. Early development on adapters for the new bus technologies will be in the area of 10-GbE.

As server and bus technology becomes more advanced, the burden placed on the network adapters will increase. The distinction between high-end and low-end network interface controllers (NICs) will become more evident as adapters become classified as "workstation-class" or "server-class" NICs.

Lower-end, or workstation-class, NICs will compete on price. They will be based on single-chip designs with low-profile dimensions and lower heat dissipation.

On the high end, performance, reliability, and offloading will be more important. Server-class cards improve bandwidth by decreasing CPU and bus utilization: they use the NIC's onboard memory and processing, such as a reduced instruction-set computing (RISC) processor, to offload functions like the transmission control protocol (TCP)/IP checksum calculation and the TCP stack. High-end adapters will support buffering, dynamic interrupt moderation, and 802.3ad link aggregation, and they will rely on superior chip speed.

At the server, reliability is also crucial. A dual-port NIC can provide fail-over and fault tolerance from a single expansion slot, conserving bus space and decreasing bus usage. Adding this functionality at the adapter not only increases bandwidth, but also provides a more cost-effective solution.
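To give a sense of the per-packet work that checksum offloading removes from the host CPU, the following Python sketch computes the 16-bit one's-complement Internet checksum described in RFC 1071, which is the arithmetic underlying the IP and TCP checksums. It is a minimal illustration, not any vendor's firmware. The function name is an assumption, and a real TCP checksum also covers a pseudo-header that is omitted here.

```python
def internet_checksum(data: bytes) -> int:
    """16-bit one's-complement checksum over data (RFC 1071 style)."""
    if len(data) % 2:
        data += b"\x00"  # pad odd-length input with a zero byte

    total = 0
    for i in range(0, len(data), 2):
        total += (data[i] << 8) | data[i + 1]  # add each big-endian 16-bit word

    # Fold any carries above 16 bits back into the low 16 bits
    while total >> 16:
        total = (total & 0xFFFF) + (total >> 16)

    return ~total & 0xFFFF  # one's complement of the folded sum


# Sample bytes from the worked example in RFC 1071
sample = bytes.fromhex("0001f203f4f5f6f7")
print(hex(internet_checksum(sample)))
```

A host without offloading runs a loop like this over every byte it sends and receives. At gigabit rates that is a meaningful share of CPU time, which is why moving it onto the adapter frees the server for application work.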

A small investment in a server-class NIC can have a profound impact on network performance. In this period of strained budgets, a gigabit NIC running at the server is a cost-effective method of improving overall network bandwidth.

When Midwest Pre-Press in Kansas City needed a faster solution for large file transfers over the network, it looked into GbE. The company's data files, on average, totaled more than 1.5 Gbytes. Midwest Pre-Press installed a 48-port switch and gigabit-over-fiber NICs. One NIC was installed in the server and another in the Rampage RIP. The system enables Midwest's server and the Rampage boxes to transfer high-resolution photos and other data at a very fast rate, saving time and increasing production.
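A back-of-the-envelope calculation shows why the move matters for files of this size. The Python sketch below estimates the raw wire time for a 1.5-Gbyte file at two link speeds. The 100-Mbit/sec Fast Ethernet baseline is an assumption for comparison only (the source does not say what Midwest Pre-Press ran before), and the figures ignore protocol overhead, disk speed, and contention.

```python
def transfer_seconds(size_gbytes: float, link_mbits_per_sec: float) -> float:
    """Ideal transfer time in seconds, using decimal units (1 Gbyte = 8,000 Mbits)."""
    return size_gbytes * 8 * 1000 / link_mbits_per_sec


FILE_SIZE_GBYTES = 1.5  # average file size cited in the text

# Assumed baseline (Fast Ethernet) versus Gigabit Ethernet
for label, rate in (("Fast Ethernet, 100 Mbits/sec", 100),
                    ("Gigabit Ethernet, 1,000 Mbits/sec", 1000)):
    print(f"{label}: {transfer_seconds(FILE_SIZE_GBYTES, rate):.0f} sec")
```

Under these idealized assumptions the same file takes about two minutes on the slower link and about 12 sec on GbE. Real-world times will be longer in both cases.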

Two emerging trends in the NIC space are higher port density and IP security offloading. A security chip on the adapter will manage the offloading of critical security applications such as encryption and authentication. The host CPU or NIC will be able to handle the administration, resulting in greater performance and reliability.

Server NICs will have a higher port density. The availability of dual- and quad-port GbE adapters should increase. Based on smaller-form-factor requirements, future generations of multiport fiber NICs will be based on VF-45 and MT-RJ connections.

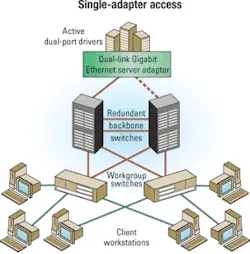

Multiport adapters have three applications. First, they provide built-in fail-over and fault tolerance. Manufacturers of multiport GbE adapters have developed redundant link-management technology (RLMT) to manage fail-over. RLMT masks the two physical media access control (MAC) addresses of the adapter ports into one "virtual MAC address" (see Figure 1).

Therefore, a fail-over is treated as nothing more than a path change in the routing table, and it is almost instantaneous. Without RLMT, the routing table must compute a new MAC address, which can take about 30 sec. During those 30 sec, the network connection can fail. The instant fail-over of RLMT is ideal for ISPs, e-commerce applications, or other servers where reliability is crucial.
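The virtual-MAC idea can be pictured with a small model. The Python sketch below is purely illustrative and is not the RLMT implementation: the class names, method names, and MAC addresses are all hypothetical. It shows only the principle described above, that the address the network sees stays constant while the adapter switches physical ports behind it.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Port:
    physical_mac: str
    link_up: bool = True


@dataclass
class DualPortAdapter:
    """Hypothetical model: two physical ports presented as one virtual MAC."""
    virtual_mac: str
    ports: List[Port]
    active: int = 0  # index of the port currently carrying traffic

    def report_link_down(self, index: int) -> None:
        """Mark a port as failed and, if it was active, switch to a healthy one."""
        self.ports[index].link_up = False
        if index != self.active:
            return
        for i, port in enumerate(self.ports):
            if port.link_up:
                self.active = i
                return
        raise RuntimeError("no healthy port left on the adapter")

    def source_mac(self) -> str:
        """The address the rest of the network sees; unchanged by fail-over."""
        return self.virtual_mac


nic = DualPortAdapter(
    virtual_mac="02:00:00:00:00:01",  # made-up addresses for illustration
    ports=[Port("00:00:5a:00:00:01"), Port("00:00:5a:00:00:02")],
)
nic.report_link_down(0)
print(nic.active, nic.source_mac())
```

Because peers only ever learn the virtual address, nothing upstream has to relearn an address when the active port changes. That is the property that makes the fail-over appear nearly instantaneous.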

Another application for multiport adapters is to increase throughput with 802.3ad link aggregation. Users can aggregate multiple ports from a single adapter, resulting in greater throughput while conserving bus slots and decreasing bus usage. Similarly, multiple ports can be used to access multiple network locations from a single adapter (see Figure 2), also conserving bus slots and decreasing bus usage.
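One practical detail of link aggregation is deciding which port carries a given frame. The 802.3ad standard leaves the distribution algorithm to the implementer but requires that frames within a conversation not be reordered, so a common approach is to hash address fields so that each conversation always lands on the same port. The Python sketch below illustrates that idea. The function name, the choice of CRC-32 as the hash, and the MAC addresses are assumptions for illustration, not a description of any particular adapter.

```python
import zlib


def select_port(src_mac: str, dst_mac: str, num_ports: int) -> int:
    """Pick an aggregated port for a conversation by hashing its MAC address pair."""
    key = f"{src_mac.lower()}->{dst_mac.lower()}".encode()
    return zlib.crc32(key) % num_ports


# Hypothetical clients talking to one server over a four-port aggregate
server = "02:00:00:00:00:01"
clients = [f"02:00:00:00:01:{i:02x}" for i in range(8)]

for client in clients:
    print(client, "-> port", select_port(client, server, num_ports=4))
```

Because the mapping is deterministic, frames from one client never overtake each other on different links. The trade-off is that a single conversation cannot exceed the speed of one port; the aggregate gain appears only when many conversations are spread across the links.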

Statistics charting the growth of GbE are becoming more prevalent. Industry analyst IDC Research (Framingham, MA) predicts that GbE product sales will grow at an annual rate of more than 100% through 2004, surpassing Fast Ethernet as the predominant LAN switching technology.

One factor driving the growth of Gigabit Ethernet is the decreasing price per port. Some projections cite prices dropping by almost 50%. Another driving factor is the insatiable appetite for speed. More businesses are installing more bandwidth-intensive applications, bringing GbE out of the backbone and into departmental and application servers.

Traditionally, the server market for adapters meant the file and print servers used by the entire organization. Today, these servers are surrounded by a multitude of appliance servers such as Web, storage, security, and video streaming. Application bandwidth has always been a major influence in the development of network technology. The stress put on networks by processing video, voice, and storage as well as niche applications like animation, three-dimensional graphics, and pre-press will drive the need to increase the speed and capacity of the network. Clustering multiple servers has emerged as a method to obtain more processing power and add fault tolerance.

The increased speed, simplicity, and cost-effectiveness of GbE are encouraging the growth of previously cost-prohibitive applications such as storage area networks (SANs). Small computer-system interface (SCSI)-over-TCP/IP (iSCSI) provides high performance, low latency, and low CPU overhead while using the existing IP infrastructure. As a result, companies will have an alternative to the high cost of deploying Fibre Channel SANs.

The attractiveness of Ethernet is based on its cost-effectiveness, flexibility, compatibility, and manageability when compared to other network technologies. The future of GbE is tied to its ability to maximize user investment.

On the infrastructure side, that will be done by providing scalable, manageable solutions for future migration in areas such as cabling. On the component side, users want access to cost-effective methods that improve performance and adapt to future needs such as the ability to upgrade firmware on the network adapter. The ability to add functionality to components like the network adapter is one method to improve performance without requiring large-scale investments. On the application side, it is the capability to route functionality such as IP storage-over-Ethernet that will drive innovation in Gigabit Ethernet.

Jonathan Albin is director of channel marketing and Kenny Tam is vice president of technical services at SysKonnect Inc. (San Jose, CA). They can be reached via the company's Website, www.SysKonnect.com.
