Superchannels to the rescue!

As the need for ever-increasing amounts of DWDM transmission capacity shows no sign of waning, the optical transport industry is moving toward a new type of DWDM technology – the “superchannel.” A superchannel is a set of DWDM wavelengths generated from the same optical line card, brought into service in one operational cycle, and whose capacity can be combined into a higher-data-rate aggregate channel. It’s the DWDM industry’s answer to the question, “What comes next after 100 Gbps?”

Scaling optical-fiber capacity

The capacity and service flexibility of optical fiber are remarkable, but they are still governed by strict rules of physics and engineering practicality. Although written in 2006, Emmanuel Desurvire’s paper still gives an excellent overview of those limits, while a more recent paper by Adel Saleh and Jane Simmons points out that increases in the spectral efficiency of optical transport systems ultimately provide the biggest “bang for the buck” in terms of capacity scaling to meet growing internet demand.1,2 But what neither of these papers covers is that, despite 40% compound annual growth in demand over the past five years (equivalent to roughly a fivefold increase), service providers are not able to hire an army of extra network engineers. In fact, in most cases headcount will be frozen.

So it’s clear that the optical transport networks of the future must be capable of turning up much larger amounts of DWDM capacity for a given operational effort without sacrificing optical reach or total fiber capacity. Today that capacity unit in long-haul networks is 100 Gbps – a data rate enabled by a series of advances in optical transmission, namely:

- High-order phase modulation (typically polarization-multiplexed quadrature phase-shift keying, or PM-QPSK).

- Coherent detection using a very stable local oscillator laser.

- Advanced digital signal processing in the receiver to compensate for fiber impairments.

- High-gain forward error correction (FEC), including soft-decision FEC that can offer more than 11 dB of gain for a typical span.

Let’s refer to the combination of these four items as “coherent technology,” which offers a quantum leap in terms of optical performance compared to non-coherent systems. While there will likely be incremental improvements in future coherent technology, these advances alone are unlikely to keep up with bandwidth demands.

It’s interesting to note that computer manufacturers are facing a similar problem. You may be aware that CPU clock speeds appeared to stop getting faster about five years ago. Yet the famous Moore’s law remains valid in that the number of transistors on a chip is still increasing. CPU and GPU (graphics-processing-unit) manufacturers are using those additional transistors to build multiple cores, rather than running individual cores at faster clock rates. But the chips they produce appear as a single unit of processing capacity to the operating system.

Likewise, a DWDM superchannel consisting of multiple wavelengths appears as a single unit of operational capacity to the network engineer. This analogy is shown in Figure 1.

Implementing superchannels

So what’s the best way to implement coherent superchannels? Let’s assume that a service provider needs to turn up a terabit of optical capacity in a single operational cycle. Today that would mean installing ten 100G transponders – an approach that actually takes more than 10X the effort of a single transponder because each time a transponder is added it affects the existing wavelengths in the fiber. Since this approach offers no value for operational scaling, we will not consider it further.

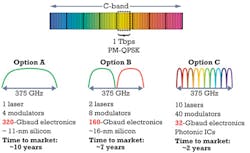

Instead, Figure 2 shows three engineering options – A, B, and C – that we will consider. All three examples will use PM-QPSK as the modulation technique:

- Option A is a single-carrier (i.e., one wavelength) transponder operating at 1 Tbps. That’s effectively a 100G transponder where the electronics run 10X faster. Unfortunately, electronics (particularly the analog-to-digital converter and DSP chips) that run at the 320-Gbaud rate required will not be available for another decade, according to certain industry roadmaps.

- Option B is a superchannel implementation consisting of two 500-Gbps “subcarriers,” which are electronically combined in the transponder card to appear as a 1-Tbps superchannel. The advantage is that the symbol rate required of the electronics is halved to 160 Gbaud. Unfortunately, we still have to wait about seven years before chips with this performance level are available for products (they may be available for hero experiments before this, of course).

- So let’s take that to the next step with Option C, a superchannel with 10 subcarriers, which also divides the required symbol rate by 10 – and 32-Gbaud electronics are actually available today. However, 10 subcarriers imply 10 optical circuits, and coherent technology already requires a rather large number of high-quality and therefore expensive optical components even for a single optical circuit. In fact, a 10-carrier 1-Tbps superchannel line card would involve around 600 optical functions in total for the transmitter and receiver circuits – quite impractical if built using discrete optical chips.
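The arithmetic behind the three options is easy to sketch. The snippet below is illustrative only: it takes the 320-Gbaud single-carrier figure quoted above as its starting point and assumes the required symbol rate divides evenly across subcarriers. The constant and function names are hypothetical.

```python
# Assumption taken from the text: a single-carrier 1-Tbps PM-QPSK
# transponder would need electronics running at about 320 Gbaud.
SINGLE_CARRIER_GBAUD = 320.0


def per_subcarrier_gbaud(num_subcarriers: int) -> float:
    """Symbol rate each subcarrier's electronics must support,
    assuming the load is split evenly across subcarriers."""
    if num_subcarriers < 1:
        raise ValueError("a superchannel needs at least one carrier")
    return SINGLE_CARRIER_GBAUD / num_subcarriers


# Options A, B, and C from Figure 2
for label, carriers in (("A", 1), ("B", 2), ("C", 10)):
    rate = per_subcarrier_gbaud(carriers)
    print(f"Option {label}: {carriers} carrier(s) at {rate:.0f} Gbaud each")
```

The trade-off is visible immediately: every added subcarrier relaxes the electronics but adds another optical circuit to the line card.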

Fortunately, DWDM systems based on large-scale (i.e., multi-carrier) photonic integrated circuits (PICs) have been commercially available since 2004. These PICs predate the more recent move toward coherent technologies, and many skeptics in the DWDM industry had initially expressed their doubts that such an advanced level of optical performance could be delivered in a commercial PIC.

During the course of 2010 and 2011, however, a series of incremental field trials was completed culminating in a terabit of superchannel capacity being transmitted over a production DWDM fiber link between San Jose and San Diego on the TeliaSonera International Carrier network last November. The TeliaSonera trial used twin pre-production 500G coherent superchannel line cards, thanks to large-scale PIC technology. Turning up this 1 Tbps of capacity took two operational cycles, one for each 500 Gbps of capacity. This implementation compares much more favorably to the “multiple rack” implementation that’s typical for a discrete-component superchannel demonstration requiring 10 line cards of 100 Gbps of capacity.

High-order modulation

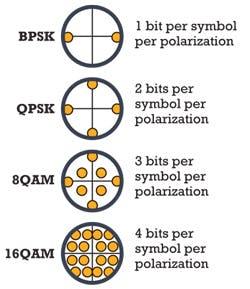

Those of you with a cable, wireless, or xDSL technology background may already be familiar with higher-order phase modulation. Figure 3 shows the basic principle. Binary phase-shift keying (BPSK) uses two phase states per modulation symbol, which encodes 1 bit in that symbol. By adding polarization multiplexing, PM-BPSK encodes 2 bits per symbol. We can add more states to each symbol (more phases or, with QAM, more amplitude levels as well) to encode additional bits, enabling us to transmit higher data rates with much better spectral efficiency. PM-BPSK will deliver about 4 Tbps in the C-band, while PM-16QAM increases that to about 16 Tbps.
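For readers who prefer to see the numbers, here is a rough sketch of that scaling. It assumes C-band capacity grows linearly with bits per symbol from the 4-Tbps PM-BPSK figure above, with the same symbol rate and carrier spacing for every format, and it ignores reach entirely. The names are hypothetical.

```python
import math

# Number of distinct states (constellation points) per symbol, per polarization
CONSTELLATION_POINTS = {"BPSK": 2, "QPSK": 4, "16QAM": 16}

# Assumption taken from the text: PM-BPSK delivers about 4 Tbps in the C-band
PM_BPSK_CBAND_TBPS = 4.0


def bits_per_symbol(fmt: str, polarization_multiplexed: bool = True) -> int:
    """Bits carried by one symbol; polarization multiplexing doubles it."""
    bits = int(math.log2(CONSTELLATION_POINTS[fmt]))
    return 2 * bits if polarization_multiplexed else bits


def cband_capacity_tbps(fmt: str) -> float:
    """C-band capacity, scaled linearly from the PM-BPSK baseline."""
    return PM_BPSK_CBAND_TBPS * bits_per_symbol(fmt) / bits_per_symbol("BPSK")


for fmt in CONSTELLATION_POINTS:
    print(f"PM-{fmt}: {bits_per_symbol(fmt)} bits/symbol, "
          f"about {cband_capacity_tbps(fmt):.0f} Tbps in the C-band")
```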

But higher-order modulation comes at a price. Because optical fiber is a non-linear medium, each modulation symbol can only be transmitted at a certain power level before non-linear effects are triggered. While PM-BPSK superchannels could well be used for transpacific submarine links, the reach of a PM-16QAM superchannel may be limited.

Going gridless

In explaining Figure 2, I said that 1 Tbps of capacity will require about the same amount of fiber spectrum regardless of how many subcarriers make up the superchannel. That’s not true if the channels are “forced apart” to comply with the fixed-grid spacing of ITU-T G.694.1. This recommendation defines several grid spacings, including 25 and 50 GHz. If we assume a 10-carrier superchannel of 100G per subcarrier, using PM-QPSK, then the width of each carrier is about 37 GHz. That’s too wide for a 25-GHz grid, yet using a 50-GHz grid will “waste” about 25% of the available fiber spectrum.
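The “waste” figure follows from simple arithmetic. The sketch below assumes one carrier per grid slot and the approximate 37-GHz carrier width quoted above; the function name is hypothetical.

```python
from typing import Optional


def wasted_fraction(carrier_ghz: float, grid_ghz: float) -> Optional[float]:
    """Fraction of a fixed-grid slot left unused by one carrier.

    Returns None when the carrier is wider than the slot and so
    cannot be placed on that grid at all.
    """
    if carrier_ghz > grid_ghz:
        return None
    return (grid_ghz - carrier_ghz) / grid_ghz


CARRIER_GHZ = 37.0  # approximate width of a 100G PM-QPSK carrier (from the text)

for grid in (25.0, 50.0):
    waste = wasted_fraction(CARRIER_GHZ, grid)
    if waste is None:
        print(f"{grid:.0f}-GHz grid: carrier does not fit")
    else:
        print(f"{grid:.0f}-GHz grid: about {waste:.0%} of each slot is unused")
```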

For this reason ITU-T has updated G.694.1 to include a “flex grid” option based on a 12.5-GHz granularity. The spectral width for a 1-Tbps gridless superchannel varies from about 750 GHz (PM-BPSK) to about 200 GHz (PM-16QAM). But all of these superchannels can be accommodated efficiently using a multiple of 12.5 GHz.
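As a rough illustration of how these widths map onto the flex grid, the sketch below rounds each superchannel up to a whole number of 12.5-GHz units. The PM-BPSK and PM-16QAM widths are the approximate figures quoted above; the PM-QPSK width is my own assumption of 10 carriers at about 37 GHz each. Guard bands are ignored, and the names are hypothetical.

```python
import math

FLEX_GRID_GRANULARITY_GHZ = 12.5

# Approximate spectral width of a 1-Tbps superchannel, in GHz.
SUPERCHANNEL_WIDTH_GHZ = {
    "PM-BPSK": 750.0,   # from the text
    "PM-QPSK": 370.0,   # assumption: 10 carriers x ~37 GHz
    "PM-16QAM": 200.0,  # from the text
}


def flex_grid_units(width_ghz: float) -> int:
    """Smallest number of 12.5-GHz units that covers the given width."""
    return math.ceil(width_ghz / FLEX_GRID_GRANULARITY_GHZ)


for fmt, width in SUPERCHANNEL_WIDTH_GHZ.items():
    units = flex_grid_units(width)
    allocated = units * FLEX_GRID_GRANULARITY_GHZ
    print(f"{fmt}: {width:.0f} GHz needs {units} units ({allocated:.1f} GHz allocated)")
```

Because the allocation unit is small relative to the superchannel width, the rounding loss is at most one 12.5-GHz unit per superchannel rather than a fixed fraction of every carrier.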

In the short term, however, service providers will need a superchannel that can be deployed on an existing grid-based DWDM line system. So the first generation of commercial superchannel products will use “split spectrum” superchannels, a term coined by the IETF. Split spectrum superchannels could potentially be designed to operate on 25- or 50-GHz G.694.1 grid line systems and will provide a seamless migration from a grid-based to gridless architecture. Meanwhile, they’ll also offer the required operational scaling benefits and only sacrifice about 20–25% of the maximum ideal fiber spectrum (assuming PM-QPSK modulation).

OTN flexibility

An interesting technical challenge that results from superchannel architectures is the need for more flexibility in Optical Transport Network (OTN) transport containers. The current OTN hierarchy defines ODU0 (1.25G), ODU1 (2.5G), ODU2 (10G), ODU3 (40G), ODU4 (100G), and ODUflex (n x 1.25G). ODUflex was ITU-T’s response to the need for more flexible, lower-data-rate containers. Since superchannels may vary in their total capacity, depending on the balance of capacity and reach needed by the network designer, it’s necessary to define an “adaptable” OTN container that can be sized accordingly.
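To give a feel for what “sized accordingly” means, the sketch below counts how many nominal 1.25G units an n x 1.25G container would need to cover a given superchannel capacity. It uses only the nominal rates listed above; actual OTN line rates include overhead and differ slightly, and this is not a model of any specific ITU-T proposal. The names are hypothetical.

```python
import math

NOMINAL_UNIT_GBPS = 1.25  # nominal granularity of an n x 1.25G container


def units_needed(capacity_gbps: float) -> int:
    """Smallest n such that n x 1.25G covers the requested capacity."""
    if capacity_gbps <= 0:
        raise ValueError("capacity must be positive")
    return math.ceil(capacity_gbps / NOMINAL_UNIT_GBPS)


# Example capacities: one 100G carrier, a 500G superchannel, a 1-Tbps superchannel
for capacity in (100, 500, 1000):
    print(f"{capacity} Gbps -> n = {units_needed(capacity)}")
```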

At last December’s ITU Study Group 15 meeting, an “OTUadapt” proposal gained widespread support from vendors, component companies, and service providers. This flexibility would help to solve the nagging problem that OTN containers are often “out of sync” with next generation Ethernet services. Gigabit Ethernet (GbE), 10GbE, and 40GbE all had different but significant issues in their OTN mapping. OTUadapt will avoid these issues in the future – especially since the data rate for Ethernet services beyond 100GbE has not yet been defined (note the IEEE standard is expected in the 2016-17 timeframe).

Flexible capacity

DWDM superchannels potentially offer an ideal solution to the twin problems of increasing optical transport capacity beyond 100 Gbps and providing the flexibility to maximize the combination of optical capacity and reach. By implementing a superchannel with many optical carriers, we can reduce the requirement for exotic electronics, allowing this technology to be delivered much more quickly than other options. The key to a multi-carrier superchannel is the use of large-scale PICs to reduce optical-circuit complexity and offer the maximum flexibility for an engineering design.

References

1. E. Desurvire, “Capacity Demand and Technology Challenges for Lightwave Systems in the Next Two Decades,” Journal of Lightwave Technology, Vol. 24, No. 12, December 2006.

2. A. Saleh, J. Simmons, “Technology and Architecture to Enable the Explosive Growth of the Internet,” IEEE Communications Magazine, January 2011.

GEOFF BENNETT is the director of solutions and technology for Infinera. He has more than 20 years’ experience in the data communications industry, including IP routing with Proteon and Wellfleet, ATM and MPLS with FORE Systems, and optical transmission and switching with Marconi as distinguished engineer in the CTO Office.
