Transforming metro-network economics

To optimize metro architectures, you need to know your Layer C from your Layer T.

By GEOFF BENNETT AND PRAVIN MAHAJAN

Two fundamental trends will drive change in network architectures over the next decade: scale and virtualization. Increases in scale are needed because overall bandwidth demand continues to rise at exponential rates. Virtualization is a distinct but related effect that acts as a "turbo boost" to scale, generating unprecedented growth and dynamism in network traffic patterns.

With the intersection of these two trends, networks, including metro, will fundamentally settle into two layers: the services and virtualized-network functions delivered from the cloud that we'll refer to as Layer C and the converged packet optical transport layer we'll call Layer T (see Figure 1).

Long haul 'Optical Reboot'

In early 2011, Andrew Schmitt at the analyst firm Infonetics Research coined the term "Optical Reboot" to describe a trend he was seeing in the long haul market. He predicted a rapid migration away from conventional 10-Gbps optical transmission toward a combination of 100-Gbps coherent transmission with ubiquitous Optical Transport Network (OTN) switching and increased "packet awareness." Three basic trends drove the reboot: increasing video quality and use, the rise of cloud-based services, and the consumption of high demand services on mobile platforms with increasing user access speeds.

We now know the Optical Reboot is real, and in particular the past few years have seen the dramatic success of coherent superchannels in addressing the scalability needs of the long haul market, with some modules capable of 500-Gbps superchannels. We now see superchannels poised to do the same in the metro space.

What's driving next gen metro?

It's becoming clear that a similar step-change in scale to 100 Gbps will occur in the metro network starting this year and have a long ramp-up. Infonetics, in a recent survey of service providers, found that 100-Gbps coherent would rise from 6% of new metro installs in 2014 to 28% in 2017.

Unlike in the long haul, metro has more available fiber, and the decision to move to 100G is a combination of bandwidth pressure and 10G versus 100G economics. However, bandwidth pressure will continue to increase as more content caching and application placement move from centralized data centers to distributed data centers to improve the user experience. Meanwhile, user access speeds and application demands also will continue to increase.

Overlaid on this ever-present background of increasing demand, it's clear that virtualization - especially in the context of cloud data centers - is a key accelerating factor in this trend. Aligned with the transfer of content and applications into the metro is the deployment of additional data centers into the metro by cloud providers and Internet content providers. Established owners of central-office and cable-headend locations are also using their real estate to deploy local data centers.

These data centers will be used not only for content and application services, but also to support network function virtualization (NFV). NFV takes appliance-based network functions that require power, space, cooling, cabling, installation, and other operations and converts them to software instantiations that run on common x86 server data-center infrastructure.

These virtualized network functions (VNFs) can be deployed in any data center on demand as traffic patterns shift. As traffic patterns become more dynamic, they require rapid, high volume data connections between the various VNFs and between VNFs and the user - no matter if the VNFs are deployed in the same rack, the same data center, or in geographically distributed data centers. Data-center interconnection over long haul networks has certainly been a driver in that market, but a Bell Labs forecast suggests more than 500% growth in metro-network traffic by 2017.

Metro market fragmentation

If the scale of metro transmission capacity is set to explode, what will "next gen metro" look like? First of all, we need to be clear that the term "metro" covers a lot of ground, although metro revenues tend to be lumped into one big bucket. At the top level there are two metro markets: metro cloud and metro aggregation (see Figure 2).

Metro cloud is driven by Internet content providers, cloud providers, and data-center owners who do not typically have a lot of fiber, combined with massive growth of "east-west" server-to-server traffic between data centers. These operators need massive point-to-point scale between data centers; not surprisingly, this market has already moved to 100G. In fact, the majority of 100G revenues reported in the metro category today are based on this market.

Metro aggregation is driven by telecom and cable service providers who deliver retail and enterprise broadband services and have thousands of network elements and millions of endpoints. They typically have more fiber, and their requirement for metro aggregation is moving from 10G to 100G based on both bandwidth pressure and economics. They also have a major incentive to simplify their networks as they move to their next generation metro architectures - with the goals of fewer boxes, fibers, and truck rolls; less space and power; and dramatically increased agility.

Addressing market needs

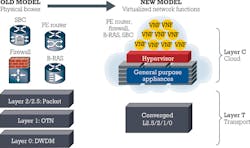

Paul Parker-Johnson at ACG Research has quantified the data-center interconnect (DCI) segment, forecasting it to grow to around $3 billion in five years. It's clear that service providers in this segment are looking for a small-form-factor rack-and-stack approach with very high capacity and a "server-like" installation process. It's also clear that's in the sweet spot for photonic integration; a simple solution to support this market is to integrate photonic integrated circuits (PICs) and coherent DSPs into such a platform (see Figure 3).

Designed for a point-to-point topology, the platform requires only the simplest Ethernet aggregation capability to meet DCI cost goals. It also should offer a fluid or "instant" bandwidth capability so the data-center operator can buy the interconnect capacity in chunks of 100 Gbps and simply turn on additional 100-Gbps units in software as the demand level increases. Such DCI platforms for the metro are already commercially available.
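The "instant" bandwidth idea comes down to simple arithmetic: round the demand up to the next 100-Gbps unit and activate only the difference. The short Python sketch below illustrates that calculation. The function name and the `installed_units` parameter are hypothetical, chosen for illustration only, and do not represent any vendor's licensing interface.

```python
from math import ceil

UNIT_GBPS = 100  # activation granularity quoted in the text


def units_to_activate(demand_gbps, active_units, installed_units):
    """Return how many extra 100-Gbps units to turn on in software.

    installed_units is an assumed hardware ceiling (units physically
    present on the platform), not a figure taken from the article.
    """
    needed = ceil(demand_gbps / UNIT_GBPS)
    if needed > installed_units:
        raise ValueError("demand exceeds installed hardware capacity")
    # Never deactivate capacity in this sketch; only add what is missing.
    return max(0, needed - active_units)


print(units_to_activate(demand_gbps=260, active_units=2, installed_units=5))  # 1
```

In this example, 260 Gbps of demand needs three units, two are already active, so one more is turned on and no hardware visit is required.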

The metro aggregation market is more complex, with subcategories of metro access, metro edge, and metro core. Each requires different form factors and different capacity. Embedded in this future architecture will be data centers of different sizes housing VNFs, cached content, and applications.

Today the metro contains numerous physical appliances, including routers, switches, and OTN elements. The future goal of the metro aggregation architecture will be to virtualize everything at Layer 3 and above into VNFs running on x86 servers (Layer C). Such an approach would leave the simplest possible transport function to and between data centers (Layer T): highly scalable optics as the foundation, combined with converged digital switching and simple but high performance packet switching and forwarding.

The three distinct metro aggregation segments, plus DCI, are shown in Figure 4.

The metro core segment would have the most rapid migration to 100 Gbps and could immediately take advantage of scalable PICs as the most cost-effective optical engine for this transformation. Here we would also see the need for packet/OTN switching, as opposed to simple aggregation.

The metro edge segment would move from 10G to 100G after the core, and 100-Gbps coherent interfaces and 10 Gbps would coexist for a time. The function in this domain would predominantly be Layer 1 and 2 traffic aggregation.

The access segment would continue to use 1- to 10-Gbps, non-coherent transmission (often using pluggable optics) and Layer 1 and 2 traffic aggregation functions.

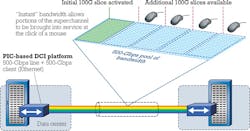

As just mentioned, a PIC-based approach could provide a scalable optical engine for the metro core segment. However, the application demands a new implementation. For example, a key characteristic of the metro core segment is that traffic patterns are frequently "one to many" or sometimes "many to many." Figure 5 (left portion) uses a simplistic example to illustrate the problem for a classic "hub and spoke" topology.

Using the same 500G PIC technology as in long haul, multiple pairs of superchannel linecards must be deployed to satisfy this traffic matrix. While an instant bandwidth capability could reduce the cash flow impact and the linecards' capacity would fill eventually, this type of network would tend to have considerable "service-ready" capacity for much of its operational lifespan. Such capacity would of course be available to meet unexpected service demands - frequently caused by the higher capacity, dynamic demands we expect to see from Layer C.

Meanwhile, the right portion of Figure 5 shows the pitfalls of a more conventional approach. Here, bandwidth demands are satisfied individually using 100-Gbps metro transponders. But the opex of this approach scales linearly as demand increases. This approach also puts a lot of strain on the network engineering team and is less responsive to Layer C traffic demands.

Sliceable PIC to the rescue

Fortunately, there's an elegant way to use high capacity PICs in the metro core. In Figure 6, the hub site at location A clearly needs at least one 500-Gbps linecard. But now the capacity is optically redirected to the three other sites using a flexible grid ROADM.

Locations B and D are known to be high growth spoke sites, so 500-Gbps linecards are also installed at these sites with instant bandwidth capability to turn up new 100-Gbps connections very quickly. In contrast, location C is known to be a lower demand site, in which 100 Gbps will be adequate capacity for the foreseeable future. At this location, a 100-Gbps transponder is deployed that can accept the 100-Gbps superchannel slice from location A.

This architecture could radically change the economics of the metro core segment and scale with PIC capacity to 1 Tbps and beyond.
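To make the comparison concrete, the Python sketch below counts the optical equipment needed for a four-site hub-and-spoke example under each of the three approaches described above. The traffic figures (200 Gbps to B, 100 Gbps to C, 200 Gbps to D) are assumed for illustration and are not taken from the figures, and the counting rules are a deliberate simplification that ignores spectrum, reach, and protection.

```python
from math import ceil

SUPERCHANNEL_GBPS = 500  # capacity of one PIC-based linecard
SLICE_GBPS = 100         # capacity of one transponder or superchannel slice

# Assumed hub-to-spoke demands in Gbps (hypothetical values).
demands = {"B": 200, "C": 100, "D": 200}
high_growth = {"B", "D"}  # spokes that get their own 500G linecard


def point_to_point_linecards(demands):
    """Dedicated superchannel per link: a linecard pair per 500G of demand."""
    return sum(2 * ceil(d / SUPERCHANNEL_GBPS) for d in demands.values())


def conventional_transponders(demands):
    """Individual 100G wavelengths: a transponder pair per 100G of demand."""
    return sum(2 * ceil(d / SLICE_GBPS) for d in demands.values())


def sliceable_equipment(demands, high_growth):
    """Hub linecards are shared; each spoke terminates only its own slices."""
    hub_cards = ceil(sum(demands.values()) / SUPERCHANNEL_GBPS)
    spoke_cards = sum(
        ceil(d / SUPERCHANNEL_GBPS)
        for site, d in demands.items() if site in high_growth
    )
    spoke_transponders = sum(
        ceil(d / SLICE_GBPS)
        for site, d in demands.items() if site not in high_growth
    )
    return {"linecards": hub_cards + spoke_cards,
            "transponders": spoke_transponders}


print(point_to_point_linecards(demands))         # 6
print(conventional_transponders(demands))        # 10
print(sliceable_equipment(demands, high_growth)) # {'linecards': 3, 'transponders': 1}
```

Under these assumptions the sliceable design needs one linecard at the hub rather than three, and four optical modules in total versus six linecards or ten transponders. The exact numbers depend entirely on the assumed traffic matrix.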

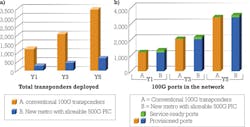

Even in this trivial example the reduction in linecards at high demand locations is obvious. If we scale the example to a larger number of nodes - typical of either a pan-European long haul network or a national metro core network in Europe or Asia - we would expect results similar to those shown in Figure 7. These charts derive from a study covering five-year traffic growth for a European metro core topology.

As expected, the number of transponders deployed in this architecture drops dramatically (Figure 7a) compared to conventional 100G transponders. The "service-ready" capacity in the network is very small (Figure 7b), which means there's a good match between supply and demand. Finally, the new metro architecture with sliceable PIC results in an average 37% reduction in power consumed versus a conventional 100-Gbps transponder-based architecture.

Layer C and Layer T

The clear trends of scale and virtualization are creating two distinct layers in modern network architectures: a cloud layer (Layer C) and transport layer (Layer T). The success of superchannels and OTN switching in the long haul network has created a truly programmable Layer T that's the ideal way to support Layer C demands. With a highly programmable Layer T, carrier grade control plane technologies such as software-defined networking can reach their full potential. The ability to slice superchannel capacity using a new generation of PICs will bring the same scale and programmability to the metro Layer T and enable service providers around the world to meet the twin challenges of scale and virtualization.

GEOFF BENNETT is director, solutions and technology, and PRAVIN MAHAJAN is director of product and corporate marketing at Infinera Corp.