Choices emerge for 40G and 100G applications

by Ed Cornejo

Signs everywhere point to a new explosion in bandwidth demand. While the rate of increase of IP traffic is debatable, the growth trend is not. Experts -- Cisco, Gartner, IDC, Merrill Lynch, and Ovum RHK among them -- predict that IP traffic will grow to 11 exabytes per month by 2009 and 15 exabytes per month by 2010. For perspective, 1 exabyte equals the entire world's printed matter.

In preparation for this increased IP traffic, carriers have started deployment of 40-Gbit/sec (40G) WDM technology. According to Ovum RHK and Opnext's internal studies, the 40G module market should experience a compound annual growth rate greater than 50% from 2006 to 2010. At OIDA's "Future Optical Communication Systems" meeting last November, AT&T presented data predicting that some parts of the network will need 100G WDM capabilities by the end of this decade.

The trend toward higher bandwidth has spawned numerous alternatives for 40G and 100G applications. The industry appears to be reaching consensus on 40G technology; 100G remains unsettled, but a few options show promise.

Abiding by the rules

To better understand the optimal solution for each application, we will divide the interfaces into two types: transport and client. Transport is defined as the long-distance WDM application that usually comprises multiple spans of fiber, with each span about 40 to 80 km in length. Client is defined as the shorter TDM application that usually requires 80 km or less over a single span; as its name implies, this interface usually represents the connection between the carrier and the client.

In existing 10G transport infrastructure, most fiber spans are accompanied by in-line amplification, optical filters to add or drop wavelengths, and likely some chromatic dispersion compensation. For any next-generation 40G or 100G technology to be successful, it must abide by the engineering rules on which the 10G infrastructure was based and account for such factors as optical signal-to-noise ratio (OSNR), filter narrowing, and the optical impairments inherent in the fiber.

For example, over the length of each span the signal is attenuated and then amplified with a broadband amplifier. This has the effect of reducing the OSNR. The rule of thumb is a 3-dB OSNR penalty for every 2x increase in speed, so the 4x increase in data rate that comes with deploying 40G costs 6 dB of OSNR performance. Filter narrowing, meanwhile, worsens as you cascade reconfigurable optical add/drop multiplexers (ROADMs) and filters, so the modulation scheme selected must support 50-GHz spacing.
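The rule of thumb is simply the data-rate ratio expressed in decibels. The short Python sketch below reproduces it, assuming the modulation format stays the same and the receiver noise bandwidth scales linearly with bit rate; the function name and the 10G reference rate are illustrative choices, not part of any standard.

```python
import math


def osnr_penalty_db(new_rate_gbps: float, ref_rate_gbps: float = 10.0) -> float:
    """Extra OSNR needed at new_rate relative to ref_rate.

    Assumption: same modulation format, noise bandwidth proportional
    to bit rate, so the penalty is 10*log10 of the rate ratio.
    """
    return 10.0 * math.log10(new_rate_gbps / ref_rate_gbps)


if __name__ == "__main__":
    for rate in (20.0, 40.0, 100.0):
        print(f"{rate:.0f}G vs. 10G: {osnr_penalty_db(rate):.1f} dB")
```

Under the same assumption, a serial one-bit-per-symbol 100G signal would need roughly 10 dB more OSNR than 10G.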

The main optical impairments are chromatic dispersion (CD) and polarization-mode dispersion (PMD). CD tolerance decreases with the square of the data rate. Thus, you have 16x worse CD tolerance at 40G than on 10G links, which mandates dynamic chromatic dispersion compensation at the system level. PMD is not considered a major issue for most singlemode fiber in the field. However, mechanical and thermal stresses over time can induce eccentricities in the fiber that can cause PMD. Older installations thus are more likely to exhibit poorer PMD characteristics.
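The square-law scaling of CD tolerance can be checked with a few lines of Python. This is a sketch that assumes the modulation format is unchanged; the 1,000-ps/nm reference tolerance at 10G is a placeholder chosen only to make the arithmetic visible, not a figure from this article or from any data sheet.

```python
def cd_tolerance_factor(new_rate_gbps: float, ref_rate_gbps: float = 10.0) -> float:
    """Factor by which CD tolerance shrinks: the square of the rate ratio."""
    return (new_rate_gbps / ref_rate_gbps) ** 2


def scaled_cd_tolerance(ref_tolerance_ps_nm: float,
                        new_rate_gbps: float,
                        ref_rate_gbps: float = 10.0) -> float:
    """CD tolerance at new_rate, given the tolerance at ref_rate (same format)."""
    return ref_tolerance_ps_nm / cd_tolerance_factor(new_rate_gbps, ref_rate_gbps)


if __name__ == "__main__":
    REF_TOLERANCE_PS_NM = 1000.0  # hypothetical 10G tolerance, for illustration only
    for rate in (40.0, 100.0):
        factor = cd_tolerance_factor(rate)
        tol = scaled_cd_tolerance(REF_TOLERANCE_PS_NM, rate)
        print(f"{rate:.0f}G: {factor:.0f}x tighter, about {tol:.1f} ps/nm")
```

The same scaling gives a 100x tighter tolerance for a serial 100G signal, one reason one-bit-per-symbol OOK becomes impractical at that rate.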

The table shows a summary of key parameters to consider by modulation scheme. Each column in the table represents a different data modulation technique with increasing complexity and cost moving from left to right across the table. For example, non-return-to-zero on/off keying (NRZ-OOK) is the simplest and least costly to implement; however, the performance does not support most transport applications at rates higher than 10 Gbits/sec.

For 40G systems, the most widely successful transport option to date has been phase-shift binary transmission (PSBT, also known as optical duobinary). The main reasons for its success are the use of multilevel coding (which allows a lower-bandwidth, ~20 GHz modulator to be used), the direct photodetection receiver, sufficient CD tolerance, lowest cost after NRZ-OOK, and the ability to be packaged in a 300-pin MSA. The one drawback is OSNR performance: the multilevel encoder adds noise, which limits the overall distance PSBT can support. PSBT can support 500 to 1,000 km at 50-GHz spacing with appropriate CD compensation and is optimal for metro and regional applications.

For longer-distance performance, non-return-to-zero differential phase-shift keying (NRZ-DPSK) makes reasonable sense. It utilizes a moderately more complex receiver that uses differential phase detection along with a similar transmitter to the one used in PSBT. NRZ-DPSK can be designed to support 50-GHz spacing but has lower filtering tolerance than PSBT. This technology, which can fit into a 300-pin MSA module, can support 1,000 to 2,000 km and is optimal where PMD is not a significant issue.

Where PMD is severe, return-to-zero differential quadrature phase-shift keying (RZ-DQPSK) is a good choice because of its 2x improvement in PMD tolerance. However, the transmitter is more complex, which drives up the cost. Figure 1 shows the form factor and modulation format trends for both transport and client.

The move to modules

High-performance electronics are equally important to make 40G transport work. Among these key components are strong forward error correction (FEC) and CD compensation. Not all system vendors had the resources to invest in these components, so initial 40G deployments consisted of subsystems or line cards that included them.

As 40G begins to ramp, investment has gone into these electrical components and now there is a strong desire by system manufacturers to simplify the front-end optical engine. This means moving to 300-pin MSA modules that are more economical, smaller, and consume less power. Figure 1 highlights this trend; PSBT and DPSK 300-pin modules are expected to ship in volume by the second half of this year.

The trend in the 40G Optical Transport Network (OTN) client interface arena is a move to smaller 300-pin MSA footprints such as 4x5 or 3.5x4.5 inches. To enable these smaller footprints, improvements are needed in the electroabsorptive modulator distributed-feedback laser (EA-DFB), opto-electrical packaging, and next-generation SFI-5 silicon to reduce power dissipation and size.

There is also a new development on the client side called 40-Gigabit Ethernet (40GbE). The IEEE 802.3ba has an objective for a 100-m multimode fiber (MMF) specification. There is also market interest for a 40GbE singlemode approach. Figure 1 shows the two likely schemes for these applications. For MMF the consensus is that 4x10G parallel MMF is the most economical for servers. For client-side data centers, 4x10G coarse WDM (CWDM) is being considered because it could support SMF and MMF for approximately 4 to 10 km and 100 m, respectively.

The view toward 100G

For 100G transport, more research is required and the optimal approach has yet to be determined. As with 40G, you must abide by 10G engineering rules and be spectrally efficient. The latter means the transmission must be serial. However, the penalties and the economics would be too severe to adopt the traditional 10-Gbit/sec scheme of one bit per symbol OOK. A modulation scheme that increases the bits per symbol is necessary to achieve a reasonable distance.

Referring back to the table, dual-polarization QPSK (DP-QPSK) shows the most promise because the symbol rate would be reduced to ~25 Gbaud for 100G transmission. This technique is complex and requires significant new developments in optics and electronics. A ~25G analog-to-digital converter (A/D) with high bit depth, a digital signal processing (DSP) engine, and a coherent receiver must all be developed to recover the signal. The coherent receiver requires a local optical reference signal to recover the data. Today, this is done with discrete components and requires significant cost and know-how to implement.
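The ~25G figure follows from dividing the line rate by the number of bits each symbol carries across both polarizations. The Python sketch below runs that arithmetic for several of the formats discussed here; it ignores FEC and framing overhead (hence the "~"), and the class and function names are illustrative only.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModulationFormat:
    name: str
    bits_per_symbol: int    # bits carried per symbol on one polarization
    polarizations: int = 1  # 2 for dual-polarization formats


def symbol_rate_gbaud(line_rate_gbps: float, fmt: ModulationFormat) -> float:
    """Symbol rate needed to carry line_rate_gbps, ignoring FEC/framing overhead."""
    return line_rate_gbps / (fmt.bits_per_symbol * fmt.polarizations)


NRZ_OOK = ModulationFormat("NRZ-OOK", bits_per_symbol=1)
DPSK = ModulationFormat("NRZ-DPSK", bits_per_symbol=1)
DQPSK = ModulationFormat("RZ-DQPSK", bits_per_symbol=2)
DP_QPSK = ModulationFormat("DP-QPSK", bits_per_symbol=2, polarizations=2)

if __name__ == "__main__":
    for fmt in (NRZ_OOK, DPSK, DQPSK, DP_QPSK):
        print(f"{fmt.name:9s} at 100G: {symbol_rate_gbaud(100.0, fmt):.0f} Gbaud")
```

Because DP-QPSK carries four bits per symbol period, the optics and electronics run at about a quarter of the line rate, which is what brings the A/D and DSP requirements within reach.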

Figure 2 shows the form factor and modulation format trends for 100G transport and client interfaces.

Though 100G transport has been demonstrated, more work and investment are needed on integration of critical components and ease of implementation before this becomes a viable commercial option. Also, further study is needed on alternative schemes such as orthogonal frequency-division multiplexing (OFDM). Like many of the modulation schemes discussed throughout this article, OFDM is not new; these schemes have long been used in copper and wireless communications. However, optical communications run at much higher data rates, and because photons don't interact it is challenging to perform phase detection when using these schemes. OFDM is in its infancy in terms of use for optical communications, but it does show promise in terms of its performance.

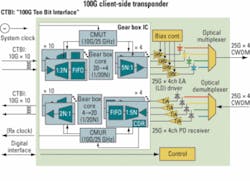

The client side seems to be on a faster pace and will probably drive transport investment and development. The target for 100G client-side interfaces is early 2009. The initial application will be for SMF between 4 and 10 km. The leading proposals in IEEE 802.3ba are for a coarse or LAN WDM approach using four ~25G lasers. The main difference between the CWDM and LWDM approaches is the wavelength spacing of 20 nm and 2 nm, respectively. The CWDM proposal allows for future cost and power consumption reduction by moving to directly modulated DFBs, as well as higher wavelength yield of each laser. Figure 3 shows a functional diagram of a potential client-side 100G transponder.

Several key developments must be completed to achieve the 2009 timeline. The IEEE 802.3ba standards group must converge on physical-link and MAC-to-PCS-to-PMA interface specifications, which will drive investment in and development of the laser wavelengths and the gear box IC. The gear box maps the PCS-to-PMA interface, shown in Fig. 3 as 10x10G CTBI, to a 4x~25G input to the optical transmitter via bit multiplexing. Like most client-side applications, the form factor will likely be pluggable.
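The rate conversion a gear box performs can be pictured with a toy round-robin bit multiplexer in Python. This is only a sketch of the general idea, not the lane mapping under discussion in IEEE 802.3ba; the function name and the round-robin ordering are assumptions made for illustration.

```python
from typing import List


def gearbox(lanes_in: List[List[int]], n_out: int) -> List[List[int]]:
    """Toy bit multiplexer: re-stripe equal-length input lanes onto n_out lanes.

    Takes one bit from each input lane per tick to form a serial stream,
    then deals that stream round-robin onto the output lanes.
    """
    serial = [bit for tick in zip(*lanes_in) for bit in tick]
    if len(serial) % n_out:
        raise ValueError("bit count must divide evenly across output lanes")
    return [serial[i::n_out] for i in range(n_out)]


if __name__ == "__main__":
    # 10 input lanes, 4 bits each (arbitrary test pattern).
    electrical = [[(lane + tick) % 2 for tick in range(4)] for lane in range(10)]
    optical = gearbox(electrical, 4)   # 10 lanes -> 4 lanes
    recovered = gearbox(optical, 10)   # 4 lanes -> 10 lanes on the receive side
    print(len(optical), "output lanes of", len(optical[0]), "bits each")
    print("round trip OK:", recovered == electrical)
```

Each output lane carries 2.5 times as many bits as an input lane in the same interval, which is how ten 10G lanes become four ~25G lanes.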

There are many alternatives for 40G transport, but it is clear that PSBT and DPSK are maturing and 300-pin MSA versions of these will be ready for economical mass deployment by 2008. The 40G client side has some new focus on 40GbE, so we need to stay tuned. Although 100G transport needs more work, it is conceivable that commercial technology will be available by 2010. Work on the 100G client standard and on module development has started, and the market expects modules in 2009.

Ed Cornejo is vice president of product marketing for Opnext.