Impact of Big Data transport on network innovation

The Internet and data services have evolved significantly, and that evolution brings a new set of requirements for transport networks.

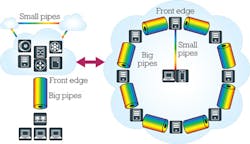

A fundamental transformation seems to have happened so swiftly and quietly that few have even noticed. The Internet used to be dominated by vertical access to caches of information (for example, a user sends a request to a search engine and receives content back), which demanded massive northbound and southbound links. Today it is dominated by more horizontally oriented traffic patterns across virtualized clouds of data (see Figure 1).

The astronomical growth of the Internet is a frequently told story. Less often told, and arguably the most important story in the data-center industry today, is the growth of the internal networks that support it and their evolution to handle new usage models such as relationship mapping and lifescaping. This change has driven significant and diverse innovation in data-center operators' transport networks, in areas such as capacity, efficiency, security, and programmability, as operators optimize their infrastructure for the shift from vertical to horizontal traffic dominance.

How data centers have changed

Data centers now hold within their vaults so much information on users' profiles, habits, and relationships that it exceeds the volume of the actual content those users are accessing.

What happens across global data-center infrastructure today in response to a single packet coming in from a user is mind-blowing. As of 2012, Facebook was said to be processing 2.7 billion "Like" actions per day, and each "Like" can be linked to traffic across more than 1,000 servers around the world. Like a falling domino, that first action sets off a cascade that drives a huge amount of horizontal traffic across Facebook's global network, all from a single click of a mouse.
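To put the Facebook figures in perspective, the short Python sketch below converts them into average per-second rates. It assumes Likes arrive uniformly over the day (real traffic is bursty, so peaks are higher) and uses exactly 1,000 servers per Like, the floor of the quoted "more than 1,000"; the function name is illustrative only.

```python
# Back-of-envelope fan-out estimate for the "Like" figures quoted above.
# Assumptions: uniform arrivals over 24 hours, exactly 1,000 servers per Like.

SECONDS_PER_DAY = 24 * 60 * 60


def fanout_rate(events_per_day: float, servers_per_event: int) -> tuple[float, float]:
    """Return (average events per second, average server interactions per second)."""
    events_per_sec = events_per_day / SECONDS_PER_DAY
    return events_per_sec, events_per_sec * servers_per_event


likes_per_sec, touches_per_sec = fanout_rate(2.7e9, 1_000)
print(f"{likes_per_sec:,.0f} Likes/s -> {touches_per_sec:,.0f} server interactions/s")
```

Under these assumptions the average works out to 31,250 Likes per second, or roughly 31 million server interactions per second, from this one feature alone.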

There are also massive horizontal, relational databases gathered and maintained across global data centers, covering every data point stored on each person. It has been widely reported that data-center operators maintain several thousand data points on each user for advertising and marketing purposes. And earlier this year, public court filings disclosed that Google gathers extensive data on each Gmail user for purposes beyond simple advertisement matching.

Whether the practice is right or wrong, there is no arguing that this huge amount of data must not only be stored somewhere but also cached and transported globally. A significant percentage of the transport industry now serves a global virtual cloud of load balancing, caching, and automated routines.

Google has said that its private backbone network, which interconnects its data centers and carries site-to-site bandwidth, is already larger and growing faster than its public network, which carries traffic in and out. Furthermore, based on "Amdahl's lesser known law" (1 Mbps of I/O is needed for every 1 MHz of computation in parallel computing), Google figures it will need a 100-Gbps connection for every one of its virtual machines (VMs). That translates into 100 Tbps for the transport pipe between its clusters of 1,000 VMs apiece.
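The arithmetic behind these figures can be sketched in a few lines of Python. The article does not say what VM size Google assumed, so the 40-core, 2.5-GHz VM below is a hypothetical configuration chosen because it totals 100,000 MHz of compute, which the quoted rule maps to 100 Gbps of I/O.

```python
# Amdahl's "lesser known law" as quoted above: 1 Mbps of I/O per 1 MHz of compute.
MBPS_PER_MHZ = 1.0


def io_needed_gbps(cores: int, clock_ghz: float) -> float:
    """I/O bandwidth (Gbps) that keeps cores x clock_ghz of compute balanced."""
    compute_mhz = cores * clock_ghz * 1_000      # GHz -> MHz
    return compute_mhz * MBPS_PER_MHZ / 1_000     # Mbps -> Gbps


def cluster_pipe_tbps(per_vm_gbps: float, vms: int) -> float:
    """Aggregate transport capacity (Tbps) for a cluster of identical VMs."""
    return per_vm_gbps * vms / 1_000              # Gbps -> Tbps


# Hypothetical VM: 40 cores at 2.5 GHz = 100,000 MHz of compute.
per_vm = io_needed_gbps(cores=40, clock_ghz=2.5)
print(per_vm, "Gbps per VM")
print(cluster_pipe_tbps(per_vm, 1_000), "Tbps per 1,000-VM cluster")
```

Because the rule is linear, doubling the compute per VM doubles both the per-VM link and the inter-cluster pipe.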

The implication of such insights for transport networks is clear: Big Data demands big pipes, and the transport industry is adjusting because Big Data is now the source of the bulk of bandwidth demand. Many have plotted the astounding and continuing growth of the Internet, but the growth of the private networks that support it is like the Internet's growth on steroids. The transport industry was caught napping but is now responding to this new dynamic.

What this change means for transport

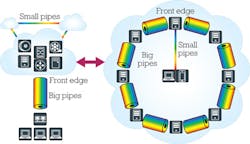

It's critical that data-center operators take advantage of transport-network technology optimized for this new era (see Figure 2). A transport network built for Big Data can more than double the performance of a data center's VMs.

Big Data requires previously undreamt-of capacity on the fibers linking data-center sites. Of course, the number of fibers crisscrossing the globe is finite, hence the imperative to get as much out of each fiber as possible: as many colors (wavelengths) as possible must be crammed into each fiber, and as much data as possible must be encoded on each wavelength.
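Per-fiber capacity is simply the number of wavelengths multiplied by the data rate each one carries. The sketch below assumes roughly 4.8 THz of usable C-band spectrum divided on a fixed DWDM grid; the band width, grid spacings, and per-wavelength rates are illustrative assumptions, not figures from the article.

```python
# Fiber capacity = number of wavelengths ("colors") x data rate per wavelength.
# Assumption: ~4.8 THz (4,800 GHz) of usable C-band spectrum on a fixed grid.


def wavelengths(band_ghz: int, grid_ghz: int) -> int:
    """Number of channels that fit in the band at a fixed channel spacing."""
    return band_ghz // grid_ghz


def fiber_capacity_tbps(band_ghz: int, grid_ghz: int, gbps_per_wave: float) -> float:
    """Total fiber capacity in Tbps for the given grid and per-wavelength rate."""
    return wavelengths(band_ghz, grid_ghz) * gbps_per_wave / 1_000


for grid, rate in [(100, 100), (50, 100), (50, 200)]:
    n = wavelengths(4_800, grid)
    cap = fiber_capacity_tbps(4_800, grid, rate)
    print(f"{grid} GHz grid, {rate}G per wave: {n} waves, {cap:.1f} Tbps")
```

Both levers matter: halving the channel spacing doubles the wavelength count, and doubling the rate per wavelength doubles capacity again. Even at 19.2 Tbps per fiber, a 100-Tbps inter-cluster pipe would still need six fibers.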

To meet this seemingly impossible challenge, operators are putting more complex modulation formats into use, because higher-order modulation enables higher data rates for a given spectral width. Line-side 100-Gbps transport ushered in the age of coherent networking, largely ending worries about dispersion maps, polarization mode dispersion tolerance, and gain budgets. Now, 400 Gbps has started discussion in the standards bodies of such schemes as 16-ary quadrature amplitude modulation (16-QAM) and 64-QAM, among others.
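The link between modulation order and data rate can be made concrete. A constellation with M points carries log2(M) bits per symbol, and coherent systems typically use both polarizations (DP), so the raw line rate is symbol rate × log2(M) × 2. The 32-Gbaud symbol rate below is an assumed value typical of 100G coherent optics; real links give up part of the raw rate to FEC and framing.

```python
import math


def raw_line_rate_gbps(gbaud: float, constellation_points: int, polarizations: int = 2) -> float:
    """Raw (pre-FEC) line rate: symbol rate x bits per symbol x polarizations."""
    bits_per_symbol = math.log2(constellation_points)
    return gbaud * bits_per_symbol * polarizations


# QPSK is equivalent to 4-QAM; the symbol rate is held fixed at an assumed 32 Gbaud.
for name, m in [("DP-QPSK", 4), ("DP-16QAM", 16), ("DP-64QAM", 64)]:
    print(f"{name:9s} @ 32 Gbaud: {raw_line_rate_gbps(32, m):.0f} Gbps raw")
```

At the same symbol rate, and therefore roughly the same spectral width, 16-QAM doubles the bits per symbol of QPSK and 64-QAM triples them. The trade-off is that denser constellations need a higher optical signal-to-noise ratio, which shortens reach.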

Software-defined networking (SDN) is a must in today's transport infrastructure for data centers. Use cases for SDN-enabled transport boxes already exist, with Google's Andromeda SDN platform a prime example. Transport SDN turns the network interconnecting data centers into a programmable resource that can supply bandwidth on demand, on either a calendared (scheduled in advance) or ad hoc basis. Through integration with cloud orchestration, resources become virtualized across and beyond the data center and work in tandem to deliver truly elastic cloud services.
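Calendared bandwidth on demand comes down to admission control over time. The Python sketch below models one inter-data-center link as a hypothetical `LinkCalendar` with integer time slots and admits a reservation only if every slot in the requested window has spare capacity. It is an illustration of the idea under those assumptions, not the API of Andromeda or of any real SDN controller; an ad hoc request is simply one whose start slot is "now".

```python
from dataclasses import dataclass, field


@dataclass
class LinkCalendar:
    """Hypothetical bandwidth calendar for one inter-data-center link.

    Time is divided into integer slots (e.g., hours); a reservation holds
    `gbps` of capacity for every slot in [start, end).
    """
    capacity_gbps: float
    booked: dict[int, float] = field(default_factory=dict)

    def available(self, start: int, end: int) -> float:
        """Smallest spare capacity over slots [start, end); requires end > start."""
        return min(self.capacity_gbps - self.booked.get(t, 0.0) for t in range(start, end))

    def reserve(self, start: int, end: int, gbps: float) -> bool:
        """Book the request only if every slot in the window has room."""
        if end <= start or gbps > self.available(start, end):
            return False
        for t in range(start, end):
            self.booked[t] = self.booked.get(t, 0.0) + gbps
        return True


link = LinkCalendar(capacity_gbps=400)
print(link.reserve(0, 6, 300))   # scheduled replication window: admitted
print(link.reserve(4, 8, 200))   # overlaps slots 4-5, where only 100 Gbps is free: rejected
print(link.reserve(6, 8, 200))   # starts after the window ends: admitted
```

Checking the minimum spare capacity across the whole window, rather than only the start slot, is what prevents a booking from colliding with one that begins later.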

Extending SDN beyond its local-area-network roots to the transport layer that interconnects sites holds the key to virtualizing everything inside and outside the data center. For operators of data-center networks, that means the ability to shift VMs and data easily and fluidly among geographically dispersed locations and to turn circuits up or tear them down automatically as bandwidth demand evolves. End-to-end multilayer flows can now be fully optimized, and applications can be migrated seamlessly from data-center cores to local caches, for a better end-user experience, and back again.
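Automatic turn-up and tear-down can be sketched as a reconciliation loop: compute how many circuits the current demand needs, compare with what is lit, and act on the difference. The 100-Gbps circuit size and 80% utilization target below are assumptions chosen for illustration, and the function names are hypothetical.

```python
import math

WAVE_GBPS = 100    # assumed capacity of one circuit (a single 100G wavelength)
HEADROOM = 0.8     # assumed target: keep each circuit at most 80% full


def circuits_needed(demand_gbps: float) -> int:
    """Smallest number of circuits that carries the demand within headroom (minimum 1)."""
    return max(1, math.ceil(demand_gbps / (WAVE_GBPS * HEADROOM)))


def reconcile(active: int, demand_gbps: float) -> int:
    """Change to apply: positive = turn circuits up, negative = tear them down."""
    return circuits_needed(demand_gbps) - active


active = 2
for demand in [120, 350, 90]:
    delta = reconcile(active, demand)
    if delta > 0:
        note = f"turn up {delta}"
    elif delta < 0:
        note = f"tear down {-delta}"
    else:
        note = "hold"
    active += delta
    print(f"demand {demand} Gbps: {note}, now {active} circuits")
```

A production controller would also add hysteresis or minimum hold times so that demand hovering near a threshold does not make circuits flap up and down.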

Efficiency, in terms of power, space, and cost, is another important area for Big Data transport networks. Given the astronomical growth in internal bandwidth demand, driven by the ripple effect of user activity spreading horizontally across global networks, data centers simply can't keep adding capacity indefinitely. They must be able to wring more capacity out of the infrastructure they have already deployed.

Data centers require the highest performance, whether measured per watt, per dollar, or per square meter of floor space. Requests for proposals to transport providers increasingly ask, in effect, "What can you do in a given amount of space?" Terabits of capacity per rack is becoming an increasingly important benchmark in the transport industry. Given that it takes a 100-Tbps pipe to keep from throttling the performance of a 1,000-VM cluster, it's no wonder.
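The sketch below shows why density and power figures dominate these requests for proposals: it computes the racks and kilowatts needed to carry the 100-Tbps pipe on two platforms. Both platforms and their figures are hypothetical, chosen only to illustrate the calculation; they are not vendor data.

```python
import math
from dataclasses import dataclass


@dataclass
class Platform:
    """Hypothetical transport platform figures (illustrative, not vendor data)."""
    name: str
    tbps_per_rack: float
    watts_per_gbps: float


def footprint(p: Platform, pipe_tbps: float) -> tuple[int, float]:
    """Racks and kilowatts needed to carry `pipe_tbps` on platform `p`."""
    racks = math.ceil(pipe_tbps / p.tbps_per_rack)
    kw = pipe_tbps * 1_000 * p.watts_per_gbps / 1_000   # Tbps -> Gbps, then W -> kW
    return racks, kw


for p in [Platform("legacy", 4.0, 1.0), Platform("dense", 16.0, 0.25)]:
    racks, kw = footprint(p, pipe_tbps=100)
    print(f"{p.name}: {racks} racks, {kw:.0f} kW for a 100-Tbps pipe")
```

Racks round up because a partially filled rack still occupies a full footprint, so density gains pay off in whole racks of floor space saved.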

Finally, with so much sensitive user data being stored, privacy and security concerns continue to gain importance. Security must now take its place alongside cost, space, and power consumption as a defining factor when operators of data-center networks make decisions about transport connectivity. As data-center networks have become virtualized global clouds, they increasingly move sensitive personal data around the cloud to the local cache closest to the end user. Users' personal data isn't sitting securely in some data vault deep in a cave; it is constantly transported all over the globe, and these transport links become a key point of vulnerability.

The answer is encryption, but transport pipes run at 100 Gbps, and any encryption engine must keep up. Encryption at the transport layer is called in-flight, or wire-speed, encryption because it happens in real time, in line with the traffic. Unlike encryption at higher layers of the network stack, in-flight encryption adds no per-packet overhead and thus maintains 100% throughput even as packet sizes shrink.
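Packet size matters because higher-layer schemes add a fixed number of bytes to every packet, so their relative cost grows as packets shrink. The sketch below assumes 32 bytes per frame, roughly what MACsec (IEEE 802.1AE) adds with a 16-byte SecTAG including the SCI plus a 16-byte ICV, and models in-flight encryption as adding zero per-packet bytes. For simplicity it ignores preamble and inter-frame gap, which affect both cases.

```python
def throughput_efficiency(payload_bytes: int, per_packet_overhead: int) -> float:
    """Fraction of link capacity left for the original frames when an
    encryption scheme adds a fixed number of bytes to every packet."""
    return payload_bytes / (payload_bytes + per_packet_overhead)


# Assumption: 32 bytes per frame for a per-packet (MACsec-style) scheme;
# in-flight transport-layer encryption is modeled as 0 bytes per packet.
for size in [1500, 512, 64]:
    per_packet = throughput_efficiency(size, 32)
    in_flight = throughput_efficiency(size, 0)
    print(f"{size:5d}-byte frames: per-packet scheme {per_packet:.1%}, in-flight {in_flight:.0%}")
```

With full-size 1,500-byte frames the fixed overhead costs about 2% of capacity, but with minimum-size 64-byte frames it costs a third, which is the gap in-flight encryption avoids.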

Big changes for Big Data

A user making an Internet request might imagine the packets traveling to a hard drive in the sky, where the desired content is accessed and served back to the user downstream. That's an antiquated notion; such a simple, vertically oriented transaction is no longer the norm.

The architecture of the cloud has quietly, yet profoundly, changed over the last couple of years. Almost anything done on the Internet today sets horizontal traffic in motion among hundreds of servers around the world within a few seconds.

Data centers are therefore ballooning in size and number, and the applications they run are outgrowing their walls. Truly massive amounts of data must be transported among geographically dispersed sites, whether for load balancing, replication, or business continuity.

Early interconnection networks for data centers simply made the best use of whatever network resources and technologies were readily available. But now that data centers consume a larger share of total transport bandwidth than traditional telecommunications does, network technology optimized for the new reality of Big Data transport is needed.

About the Author

Jim Theodoras

Jim Theodoras is vice president, R&D at HG Genuine USA.