Meeting the testing challenges of 100-Gigabit Ethernet

By Paul Brooks

Overview

The development and deployment of 40GbE, 100GbE, and OTU4 technology introduces a new set of test requirements. A new generation of test equipment is required in response.

The transition from Gigabit Ethernet (GbE) to 10GbE was very much an evolutionary process, but the current shift toward 40- and 100GbE represents a major discontinuity. In particular, 100GbE places tremendous electrical and optical demands on the physical layer. For LAN applications it forces a move to parallel optics, multiplexed either in space (over parallel fibers) or in wavelength (over multiple lambdas), and for long-haul transmission it requires complex modulation schemes and coherent receivers. These demands, coupled with the convergence of OTU4 and 100GbE, give rise to the 100GbE revolution. That revolution makes testing more important for ensuring quality while also magnifying the test challenges encountered during development and integration.

FIGURE 1. A significant amount of 100GbE development work has focused on the specification that calls for four wavelengths of 25 Gbps each. These wavelengths could be multiplexed or sent down parallel fibers.

The advent of 100GbE

The growing number of broadband Internet users and the increasing use of multimedia applications are driving an increase of roughly 100% per year in backbone Internet traffic. A number of the largest Internet services and cloud computing users are expected to require 100GbE between data centers and 40GbE and 100GbE within the data center. These factors are driving the move toward 100GbE.

Yet this transition could take longer than previous ones because of the major technological challenges involved. The first generation of 100GbE products will likely be data-centric devices using current-generation short-haul WDM optics arrayed as parallel transponders along with new 100GbE ICs. Long-haul transport, on the other hand, will require a new generation of optics, including much more complex transmitters, receivers, and post-processing schemes. As a result, long-haul transport will take longer to reach the market.

According to Robert Winter of Dell, 100GbE is expected to coexist with, rather than replace, 40GbE. Winter says that 40GbE mostly applies to servers and chassis midplanes, while 100GbE will primarily be used for 10GbE aggregation and telecommunications. Of course, the delivery dates and cost of 100GbE equipment will also influence demand for, and investment in, 40GbE equipment. Many service providers are weighing whether to invest more in 40GbE or wait for 100GbE.

The development phase

Against this backdrop, network equipment manufacturers spent the past year working primarily through the development phase. The CFP multisource agreement (MSA) is the first industry standard to support next-generation Ethernet optical transceiver interfaces for both 100GbE and 40GbE. The CFP MSA defines the form factor of a hot-swappable optical transceiver supporting 40/100GbE and OTU4, with an electrical interface consisting of multiple 10G lanes. CFP transponders are designed to support reaches of 10 to 40 km. The first CFP pluggable optics for 100GbE are expected by the end of 2009.

The CFP standard also supports the Optical Transport Network (OTN) platform. A hierarchy of transport containers, called optical data units (ODUs) and carried in optical transport units (OTUs), provides the basis for transparent data services framed into OTU4 containers. The OTU4 transport layer adds the framing, management layer, and forward error correction (FEC) on top of Ethernet that longer-distance transmission requires. OTU4 will play a growing role on the client side and will run at approximately 112 Gbps.
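For a rough sense of where the 112 Gbps figure comes from, the sketch below computes nominal rates from the ratios defined in ITU-T G.709 and from 64b/66b coding in IEEE 802.3ba. The function names are illustrative; only the ratios and the 99.5328 Gbps reference rate come from the standards.

```python
# Nominal bit rates behind the "approximately 112 Gbps" figure for OTU4.
# Ratios follow ITU-T G.709 (ODU4/OTU4) and IEEE 802.3ba (100GBASE-R 64b/66b).
# Function names are illustrative, not taken from any standard or library.

G709_REFERENCE_GBPS = 99.5328  # reference rate that the G.709 ODU4/OTU4 ratios multiply


def ethernet_100g_coded_rate_gbps() -> float:
    """100GBASE-R aggregate line rate after 64b/66b coding."""
    return 100.0 * 66 / 64


def odu4_rate_gbps() -> float:
    """ODU4 rate: 239/227 times the reference rate."""
    return G709_REFERENCE_GBPS * 239 / 227


def otu4_rate_gbps() -> float:
    """OTU4 rate: 255/227 times the reference rate, i.e. 255/239 times the
    ODU4 rate, the step that accounts for the appended FEC."""
    return G709_REFERENCE_GBPS * 255 / 227


if __name__ == "__main__":
    print(f"100GBASE-R coded: {ethernet_100g_coded_rate_gbps():.3f} Gbps")  # 103.125
    print(f"ODU4:             {odu4_rate_gbps():.3f} Gbps")  # 104.794
    print(f"OTU4:             {otu4_rate_gbps():.3f} Gbps")  # 111.810
```

The roughly 8% gap between the 103.125 Gbps Ethernet line rate and the OTU4 rate is the room OTN uses for its own overhead and FEC.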

The Institute of Electrical and Electronics Engineers (IEEE), International Telecommunication Union (ITU), and Optical Internetworking Forum (OIF) have been working on standards that are expected to be finalized in 2010. This standards work has supported 100G field trials in 2009, with deployments expected in 2010.

Most development activity is focused on the 4×25G IEEE proposal, in which one fiber carries four 25G lambdas (see Fig. 1), although alternative proposals, including 10 lambdas of 10G each, have also been developed. Both the 4×25G and 10×10G schemes use singlemode fiber to achieve a 10-km reach, with 40 km possible in the near future. On the electrical interface side, 10 parallel electrical lanes of 10 Gbps each provide the best mix of cost-effectiveness and flexibility.

The Physical Coding Sublayer (PCS) bonds multiple lanes together through striping or fragmentation techniques. The number of PCS lanes is typically the least common multiple of the numbers of electrical and optical lanes. For today's most popular implementation, with 10×10G electrical lanes and 4×25G optical lanes, the draft standard uses 20 virtual PCS lanes of 5 Gbps each.
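The lane arithmetic can be sketched as follows. This is a simplified model, not the draft standard's exact procedure: it computes the PCS lane count as a least common multiple, distributes 66-bit blocks round-robin across the PCS lanes, and uses a hypothetical sequential grouping to show how many PCS lanes share each electrical lane and each lambda. Alignment markers and the actual bit multiplexing are omitted.

```python
from math import gcd


def pcs_lane_count(electrical_lanes: int, optical_lanes: int) -> int:
    """Least common multiple of the electrical and optical lane counts."""
    return electrical_lanes * optical_lanes // gcd(electrical_lanes, optical_lanes)


def stripe_blocks(blocks, n_lanes: int):
    """Distribute 66-bit blocks round-robin across n_lanes PCS lanes."""
    lanes = [[] for _ in range(n_lanes)]
    for i, block in enumerate(blocks):
        lanes[i % n_lanes].append(block)
    return lanes


def sequential_grouping(n_pcs: int, n_physical: int):
    """Hypothetical, for illustration: PCS lanes k*per .. (k+1)*per-1 share
    physical lane k. Real hardware may combine PCS lanes in any order."""
    per = n_pcs // n_physical
    return [list(range(k * per, (k + 1) * per)) for k in range(n_physical)]


if __name__ == "__main__":
    n = pcs_lane_count(10, 4)
    print(n, "PCS lanes at", 100 / n, "Gbps each")  # 20 PCS lanes at 5.0 Gbps each
    print(sequential_grouping(n, 10)[0])  # [0, 1]: two PCS lanes per 10G electrical lane
    print(sequential_grouping(n, 4)[0])   # [0, 1, 2, 3, 4]: five PCS lanes per 25G lambda
```

Choosing the LCM is what lets the same set of PCS lanes divide evenly onto either physical interface: two per electrical lane and five per lambda.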

None of this can be accomplished without a complete 100GbE ecosystem, which in turn will require the development of many new components. These include PHYs, ASICs, and FPGAs that are 100GbE ready. Signal integrity needs to be maintained in both electrical and optical domains while providing the necessary parallel data paths. CFP pluggable optics are much more complex than previous generations due to the inclusion of the gearbox, parallel 25G optics, and two-part connectors. The management data input/output (MDIO) control interface of the CFP transponder offers remote supervision and control but further increases transponder complexity.

Transponder and component development is the key to 100GbE progress. It’s not enough merely to overcome the technical challenges to produce working components. In addition, components must be mass produced, integrated, installed, and maintained economically. The goal is to reach the right price targets in the next five years for the highly complex parallel optics, gearbox, connectors, and DWDM integrated optics. Ultimately it will be economics that triggers mass deployment of 100GbE.

Critical role of testing

Testing will play a critical role in the commercialization of 100GbE. In the early stages, the most critical testing requirement will be the validation of components, especially transponders, which will require both electrical and optical testing. When fast signals travel across a printed circuit board (PCB), signal degradation is inevitable, so electrical-layer challenges will be critical. System and equipment manufacturers will want to use FR4 as the board material to keep costs low, and because FR4 is comparatively lossy at these frequencies, this raises the difficulty further.

During testing, it will be important to identify signal integrity problems such as noise, crosstalk, and impedance discontinuities, and to diagnose PCB and connector issues. Because data travels over parallel lanes, measuring inter-lane skew is essential. Clock and dynamic performance, particularly clock recovery behavior, are also important.

Testing must go deeper than simple unframed bit-error-rate testing (BERT). The test source must match real-world signals to verify the transparency of the gearbox and of the electrical-to-optical and optical-to-electrical interfaces. The fact that data is carried over 20 virtual lanes, 10 physical electrical lanes, and 4×25G optical lanes complicates matters: if errors occur, which domain is at fault?
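One way to approach that question is to note that each PCS lane rides on exactly one electrical lane and one lambda, so errors that all share a single physical path point toward that path. The heuristic below is a hypothetical sketch, not a feature of any particular tester; it assumes the PCS-lane-to-electrical-lane and PCS-lane-to-lambda mappings have already been recovered.

```python
def localize_fault(errored_pcs, pcs_to_electrical, pcs_to_lambda):
    """Hypothetical heuristic: find the domain shared by every errored PCS lane.

    errored_pcs: set of PCS lane IDs that reported errors.
    pcs_to_electrical / pcs_to_lambda: dicts giving, for each PCS lane,
    the electrical lane and the optical lambda it was observed on.
    """
    if not errored_pcs:
        return "no errors"
    electrical = {pcs_to_electrical[p] for p in errored_pcs}
    lambdas = {pcs_to_lambda[p] for p in errored_pcs}
    if len(electrical) == 1 and len(lambdas) == 1:
        # Both candidates fit; more data (or a different lane mapping) is needed.
        return "ambiguous: one electrical lane and one lambda"
    if len(electrical) == 1:
        return f"suspect electrical lane {next(iter(electrical))}"
    if len(lambdas) == 1:
        return f"suspect lambda {next(iter(lambdas))}"
    # Errors spread over several lanes and lambdas suggest a common cause.
    return "spread across domains: look at PCS, gearbox, or shared clocking"
```

With a made-up mapping in which PCS lane p sits on electrical lane p // 2 and lambda p // 5, errors on PCS lanes 5 through 9 span three electrical lanes but only lambda 1, so the sketch points at the optics for that wavelength.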

The optical layer will be equally challenging at 100GbE. All of the current optical parameters, such as power, stability, SRS, and eye pattern, remain important, but they will have to be measured across 4 or 10 lambdas. If the skew between lambdas exceeds what the receiver can buffer, the data cannot be realigned and synchronization is lost.
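Skew is commonly measured by timestamping the same alignment marker as it arrives on each lane. The sketch below assumes such timestamps are available and compares the worst case against a deskew budget; both the timestamps and the budget are hypothetical inputs, not figures from the standard.

```python
def lane_skew(arrival_ns):
    """Skew of each lane relative to the earliest lane.

    arrival_ns: dict mapping lane ID to the arrival time (ns) of the same
    alignment marker on that lane (hypothetical measurement input).
    """
    earliest = min(arrival_ns.values())
    return {lane: t - earliest for lane, t in arrival_ns.items()}


def within_deskew_budget(arrival_ns, budget_ns):
    """True if the worst-case skew fits within the receiver's deskew budget."""
    return max(lane_skew(arrival_ns).values()) <= budget_ns


if __name__ == "__main__":
    arrivals = {0: 10.0, 1: 12.5, 2: 10.0, 3: 55.0}  # made-up timestamps
    print(lane_skew(arrivals))                  # {0: 0.0, 1: 2.5, 2: 0.0, 3: 45.0}
    print(within_deskew_budget(arrivals, 50.0)) # True
    print(within_deskew_budget(arrivals, 40.0)) # False
```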

This effect can be tested with a platform that delivers multiple optical signals and then stresses them, for example by increasing eye closure. Optical-lane stress can be combined with electrical-lane skew to fully test the margins of the electro-optics. By monitoring the receiver's performance, you can find the precise stress level at which the line card starts to produce errors. The OTU4 transport line side presents even more complex modulation challenges.
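Finding that error onset is essentially a threshold search. A minimal sketch is a bisection, assuming a hypothetical `errors_at` hook that applies a stress level and reports whether errors occurred, and assuming the receiver's behavior worsens monotonically as stress increases:

```python
def find_error_onset(errors_at, low, high, tol):
    """Bisect for the smallest stress level at which errors appear.

    errors_at(level) -> bool is a hypothetical hook that applies the stress
    level (e.g. an eye-closure setting), runs for a gating period, and reports
    whether the receiver logged any bit errors. Assumes monotonic behavior.
    """
    if errors_at(low):
        return low        # already failing at the lowest setting
    if not errors_at(high):
        return None       # no failure within the sweep range
    while high - low > tol:
        mid = (low + high) / 2
        if errors_at(mid):
            high = mid
        else:
            low = mid
    return high           # error-free at `low`, errored at `high`
```

Against a simulated receiver that starts failing at a stress level of 0.37, `find_error_onset(lambda s: s >= 0.37, 0.0, 1.0, 1e-4)` returns a value no more than 1e-4 above 0.37. On real hardware each probe costs a full gating period, so the logarithmic number of probes matters more than it would in simulation.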

New generation of testers is needed

Component and system manufacturers need testers that cover all key requirements for the early stages of 100GbE, including transponder testing and validation, network equipment development, and system verification testing (SVT). Advanced applications are required to support developers in the challenging task of debugging and verifying highly complex 100GbE multilane, multilambda products. A full test regime requires testing optical and electrical interfaces from the physical layer up to Ethernet and IP, protocol testing, PCS layer validation, and transponder testing. The use of parallel signals in 100GbE also creates the need for multiplexing/demultiplexing, signal conditioning, and signal access for 100GbE optical signals.

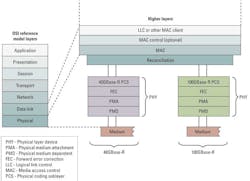

FIGURE 2. The Physical Coding Sublayer plays an essential role in enabling the full benefits of 40- and 100GbE.

The test approach needs to measure the skew of multilane signals at full rate to verify the functionality of the gearbox, one of the key component development challenges. The gearbox in the CFP module maps the individual lanes onto different lambdas. Because this mapping is random at startup, you normally cannot generate an aggregated BER for each 25G lambda, a measure vital for determining full performance during the development and evaluation of CFP modules. The ability to group the individual lanes on a per-lambda basis would greatly increase the depth and breadth of testing that can be done over the link.
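Once the lane-to-lambda mapping has been recovered, for example by reading the lane identifiers carried in the alignment markers, per-lambda BER is a simple aggregation. The sketch below assumes per-PCS-lane error and bit counts are already available; the function name and data layout are hypothetical.

```python
from collections import defaultdict


def per_lambda_ber(pcs_errors, pcs_bits, pcs_to_lambda):
    """Aggregate per-PCS-lane error counts into a BER for each lambda.

    pcs_errors:    dict lane -> bit errors (error-free lanes may be omitted)
    pcs_bits:      dict lane -> bits checked on that lane
    pcs_to_lambda: dict lane -> lambda index, as recovered after startup
    """
    errors = defaultdict(int)
    bits = defaultdict(int)
    for lane, lam in pcs_to_lambda.items():
        errors[lam] += pcs_errors.get(lane, 0)
        bits[lam] += pcs_bits[lane]
    return {lam: errors[lam] / bits[lam] for lam in sorted(bits)}
```

Because the mapping is passed in rather than assumed, the same aggregation works whatever lane order the gearbox happened to choose at startup.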

Once the gearbox chip has been validated to handle the physical signals correctly, the next step is bringing up the transponder and the line card to determine whether there are bit errors. The test equipment should apply both framed and unframed signals, with payloads in the framed signals, to determine whether errors occur. Lower-layer measurements would then be performed with a pseudorandom bit sequence (PRBS) or digital words that fully stress the electrical and photonic layers. With appropriate control over the raw data, faults that are impossible to troubleshoot at higher layers can be found quickly.
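A PRBS built on a primitive trinomial x^n + x^m + 1 can be generated with a short linear-feedback shift register. The sketch below uses (7, 6) for PRBS7 and (31, 28) for PRBS31 and counts errors against an already aligned local reference. This is a simplification: a real checker first synchronizes to the incoming pattern, and bit-ordering conventions vary between instruments.

```python
def prbs_bits(n, m, seed, count):
    """Generate `count` bits of the PRBS defined by x^n + x^m + 1.

    Each new bit is the XOR of the bits generated n and m steps earlier,
    e.g. (7, 6) for PRBS7 or (31, 28) for PRBS31.
    """
    mask = (1 << n) - 1
    state = seed & mask
    if state == 0:
        raise ValueError("seed must be non-zero")  # all-zero state locks up
    bits = []
    for _ in range(count):
        bit = ((state >> (n - 1)) ^ (state >> (m - 1))) & 1
        state = ((state << 1) | bit) & mask
        bits.append(bit)
    return bits


def count_bit_errors(received, reference):
    """Compare a received bit stream with an aligned local reference."""
    return sum(r != e for r, e in zip(received, reference))
```

Over one full period of 127 bits, PRBS7 output contains 64 ones and 63 zeros, which is a quick sanity check for any maximal-length generator.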

Test systems that offer fast, low-jitter triggers could work together with fast oscilloscopes to reconcile the physical and logical signals. The next step typically is stimulating the system with a framed signal that contains PRBS instead of real data. From there you can add PCS data and test the corresponding functionality in the transponder or line card.

The PCS poses particular testing challenges. It is responsible for providing frame delineation, delivering the proper clock transitions, and bonding the multiple lanes together (see Fig. 2). The major testing considerations on the receiver side include mapping the received PCS lanes to the transmitted ones and generating and measuring virtual lane skew. The PCS layer alarms and errors need to be fully validated against standards before you can move on to the next level. If issues occur with the PCS layer, it is important to have applications that allow full control of the coding and payload.
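Receive-side lane mapping and skew measurement can be illustrated with a toy model: lanes arrive in an unknown order, each preceded by a variable amount of filler that stands in for skew, and each carries a marker naming its original lane. The tuple `("AM", lane_id)` is a hypothetical stand-in for a real alignment marker, and the sketch assumes exactly one marker per lane.

```python
def deskew_and_reorder(rx_streams):
    """Realign and reorder PCS lanes received in arbitrary order with skew.

    Each stream is a list: zero or more filler entries (None, standing in for
    skew), then a hypothetical marker ("AM", lane_id), then that lane's blocks.
    Returns the reassembled block sequence and each lane's skew in block
    times relative to the earliest lane.
    """
    aligned, offset = {}, {}
    for stream in rx_streams:
        # Locate the marker; its position is the lane's arrival offset.
        idx = next(i for i, x in enumerate(stream)
                   if isinstance(x, tuple) and x[0] == "AM")
        lane_id = stream[idx][1]
        aligned[lane_id] = stream[idx + 1:]
        offset[lane_id] = idx
    earliest = min(offset.values())
    skew = {lane: off - earliest for lane, off in offset.items()}
    depth = min(len(blocks) for blocks in aligned.values())
    out = []
    for k in range(depth):
        for lane_id in sorted(aligned):  # undo the round-robin striping
            out.append(aligned[lane_id][k])
    return out, skew
```

Striping blocks 0 to 39 round-robin onto four lanes, delaying them by different amounts, and delivering them out of order still reassembles the original sequence, while the returned skew values report how far each lane lagged.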

The next layer up is the IP layer, which can be tested by sending and receiving IP frames. The test instrument would need to create a full 100GbE load on the client either as a single flow or in multiple lanes.
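To create a full load, the instrument has to account for per-frame overhead on the wire. The sketch below assumes standard Ethernet framing, with 8 bytes of preamble and start-of-frame delimiter and a 12-byte minimum average inter-frame gap, and computes the maximum frame rate at 100 Gbps, optionally split evenly across several flows. The function names are illustrative.

```python
PREAMBLE_SFD_BYTES = 8   # preamble plus start-of-frame delimiter
MIN_AVG_IFG_BYTES = 12   # minimum average inter-frame gap


def max_frame_rate(line_rate_bps: float, frame_bytes: int) -> float:
    """Frames per second at full load; frame_bytes includes the FCS."""
    bits_on_wire = (frame_bytes + PREAMBLE_SFD_BYTES + MIN_AVG_IFG_BYTES) * 8
    return line_rate_bps / bits_on_wire


def per_flow_rate(line_rate_bps: float, frame_bytes: int, n_flows: int) -> float:
    """Frame rate per flow when the full load is split evenly across n_flows."""
    return max_frame_rate(line_rate_bps, frame_bytes) / n_flows


if __name__ == "__main__":
    print(f"{max_frame_rate(100e9, 64):,.0f} fps")    # 148,809,524 fps
    print(f"{max_frame_rate(100e9, 1518):,.0f} fps")  # 8,127,438 fps
```

Minimum-size frames are the hardest case: roughly 149 million frames per second must be generated and checked at full 100GbE load.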

Latest test instruments meet the challenge

The latest generation of 100GbE test platforms addresses the needs of component manufacturers through a powerful and flexible electrical interface (via an adapter) and unframed BERT stream generation and lambda mapping with migration up to Layer 3 and beyond. These systems meet the requirements of transponder manufacturers who need to develop and then validate at both the electrical and optical interfaces to ensure full compatibility. They also meet the needs of system manufacturers who need to integrate complex 40GbE, 100GbE, and OTU4 elements into equipment and perform validation and verification of key components against standards. Finally, they enable service providers who need to adopt and install 40GbE, 100GbE, and OTU4 equipment at critical aggregation points and ensure full and seamless validation and verification of interoperability with current equipment and OTU4.

The technical discontinuities driven by 40GbE and 100GbE are formidable, and without the correct test and measurement equipment they are nearly impossible to bridge. The latest generation of 100G test systems is designed to meet the needs of developers, manufacturers, and installers of 40GbE, 100GbE, and OTU4 equipment. It offers full scalability to cover current and future hardware, firmware, and software challenges, from component development and manufacturing, through system verification and installation, and into the turn-up phase.

Paul Brooks, PhD, is a product manager in JDSU’s Communications Test and Measurement business segment (www.jdsu.com).
