Making the jump to 100G

In the past two years, the number of 100G transport platforms on the market has grown from one to nearly a dozen. With this much-needed leap in bandwidth capacity here to stay, service providers are now asking, “How do I implement 100G in a scalable, cost-effective way?”

How far ahead do service providers have to stay to keep up with the world’s endless appetite for bandwidth? While many continue to deploy 10- and 40-Gbps transport platforms, growing numbers are looking at 100G to give them a longer-lasting bandwidth boost.

According to most service providers, bandwidth demand is growing by at least 33% compound annual growth rate (CAGR) each year. Streaming video is expected to make up some 58% of all Internet traffic by 2014.1 More than 20 billion mobile devices are likely to be in use by 2020.2 And in just two years, more than 80% of all new software will be available as a cloud service, which will require the adoption of all-new content delivery and storage models.3

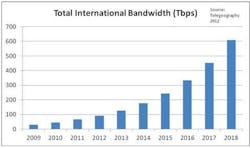

Some service providers have said they’ll need to double their capacity every 18 months to keep pace.4 (See figure below.) Other sources estimate that the total amount of content passing through the world’s networks will increase from 800,000 petabytes in 2009 to 35 zettabytes in 2020—meaning that by the end of this decade, service providers will need an astonishing 44 times the capacity they have today.5
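The growth figures quoted above use different yardsticks (an annual rate, a doubling period, and an 11-year multiple), so it can help to put them on a common footing. The short sketch below does that arithmetic, assuming steady compound growth and decimal units (1 zettabyte = 1,000,000 petabytes); the function names are illustrative only. Under those assumptions, the 2009-to-2020 content estimate works out to roughly 41% growth per year, doubling every 18 months corresponds to roughly 59% per year, and a 33% CAGR implies a doubling time of about 2.4 years.

```python
import math

def cagr_from_multiple(multiple: float, years: float) -> float:
    """Annual growth rate implied by growing `multiple`-fold over `years` years."""
    return multiple ** (1.0 / years) - 1.0

def doubling_time_years(cagr: float) -> float:
    """Years needed to double at a steady compound annual growth rate."""
    return math.log(2.0) / math.log(1.0 + cagr)

PB_PER_ZB = 1_000_000  # assumption: decimal units, 1 ZB = 10^6 PB

content_2009_pb = 800_000          # figure quoted in the article
content_2020_pb = 35 * PB_PER_ZB   # 35 zettabytes, figure quoted in the article

# Growth multiple between 2009 and 2020 (the article rounds this to 44x).
multiple = content_2020_pb / content_2009_pb

# Annual rate implied by that multiple over 11 years.
implied_cagr = cagr_from_multiple(multiple, 2020 - 2009)

# Annual rate implied by doubling capacity every 18 months (1.5 years).
doubling_18mo_cagr = cagr_from_multiple(2.0, 1.5)

# Doubling time implied by the 33% CAGR floor.
doubling_at_33 = doubling_time_years(0.33)

print(f"Growth multiple 2009-2020: {multiple:.2f}x")
print(f"Implied CAGR for that multiple: {implied_cagr:.1%}")
print(f"CAGR implied by doubling every 18 months: {doubling_18mo_cagr:.1%}")
print(f"Doubling time at 33% CAGR: {doubling_at_33:.2f} years")
```

The three estimates are broadly consistent in direction but not identical, which is one reason capacity plans are usually built around a range of growth scenarios rather than a single number.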

A growing market

To deliver the capacity operators need, technology vendors have been actively working to develop commercial 100-Gbps network systems. Alcatel-Lucent opened the field in 2007 with the industry’s first field trial of 100G optical transmission. As 100G technology has matured, several vendors have brought such platforms to market. Today there are at least 10 different 100G platforms available.

The momentum behind commercial 100G is good news for service providers who have been waiting for the technology to mature before upgrading and evolving their networks. With 100G now widely available, they can finally start building capacity with the confidence that this technology will be the standard for years to come.

Different options for different needs

Every network has its own unique set of requirements—which means no single flavor of 100G will suit all applications. Service providers need choice and flexibility as they look to evolve, starting with options for both the IP and optical portions of their networks. These include:

- 100-Gigabit Ethernet (GbE) service routing interfaces that can be deployed anywhere in the transport network: in the metro, at the service edge, and in the core. In some networks, higher-speed core router or data center interconnection is the critical requirement. In others, 100GbE links provide headroom for handling high traffic volumes within the metro or can increase efficiency at the service edge of the IP network.

- Single-carrier 100G coherent optical technologies that couple coherent detection with advanced digital signal processing algorithms and sophisticated modulation formats to increase symbol rate and improve wavelength performance. Platforms that benefit from this approach possess the capacity to simultaneously handle native 10G, 40G, and 100G wavelengths—enabling service providers to easily migrate their network infrastructures to ever-higher wavelength capacities without sacrificing performance.

100G marks an inflection point in the evolution of both IP and optical transport networks. Although different service providers will have different objectives for migrating to 100G, they have the flexibility to start their transitions in one domain or the other—or proceed incrementally in both—based on the best approach for their particular network architecture and strategy.

Planning is key

To monetize their networks, service providers need the most flexible, efficient, and cost-effective means of transporting network traffic. With this in mind, a number of complex variables must be taken into account when planning 100G deployments. These include:

- fiber type

- distance between sites

- topology

- placement of amplifiers, electrical regenerators, and add/drop sites

- platform scalability

- capex and opex

- total cost of ownership (for example, the need to reduce equipment footprint, power consumption, and truck rolls).

The challenge is to achieve the right balance across these elements without going so far as to compromise one for the sake of another—for example, by reducing line rates or wavelength capacity on some spans or installing additional regeneration sites, all of which can diminish overall performance and undercut the value of the 100G technology investment.

The latest 100G systems on the market address these issues by extending unregenerated reach to 2,000 km or more. Although such distances typically suggest ultra-long-haul applications, a more common use may actually be in highly meshed regional and metro networks, which have frequently constrained service providers to 40G or even 10G wavelengths due to the variability and unpredictability of impairments in the optical infrastructure.
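To see why unregenerated reach matters for planning, consider a deliberately simplified estimate of how many intermediate regeneration sites a route needs. The sketch below assumes a single point-to-point route on which regenerators can be placed at ideal intervals; real designs also depend on fiber type, amplifier placement, and add/drop sites. The 2,000 km reach comes from the figure cited above, while the 800 km comparison reach and the route lengths are hypothetical values chosen purely for illustration.

```python
import math

def regen_sites_needed(route_km: float, reach_km: float) -> int:
    """Intermediate regeneration sites for a point-to-point route.

    Simplifying assumption: regenerators can be placed at ideal intervals,
    so a route needs one fewer regenerator than the number of reach-length
    segments it is divided into.
    """
    if route_km <= 0 or reach_km <= 0:
        raise ValueError("route_km and reach_km must be positive")
    return max(0, math.ceil(route_km / reach_km) - 1)

# Hypothetical route lengths in km (not taken from the article).
routes_km = [600, 1500, 3200]

# 2,000 km is the reach cited in the article; 800 km is a hypothetical
# shorter reach used only for comparison.
for reach_km in (800, 2000):
    for route_km in routes_km:
        sites = regen_sites_needed(route_km, reach_km)
        print(f"reach {reach_km} km, route {route_km} km: {sites} regen site(s)")
```

In this simplified model, the hypothetical 1,500 km route needs one regeneration site at the shorter reach and none at 2,000 km, which is the kind of saving that affects both capex and truck rolls.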

Enhanced 100G performance broadens the addressable market for 100G while improving network capacity and lowering costs. Despite the increasing number of applications available to service providers, however, migration plans still need to be well thought out and as complete as possible.

Going beyond 100G

While most service providers are either deploying or making plans to move to 100G, the unabated growth in traffic demand will soon push them toward even higher rates. (In fact, many early adopters of 100G, such as financial institutions and data center operators, have already reached the point where 100G is no longer enough.) As a result, many vendors are starting to think about what comes next in a world after 100G.

Knowing that bandwidth demand will continue to climb, service providers will need to make sure their 100G networks can evolve elegantly into higher-bandwidth infrastructures down the road. But most approaches to higher rates require substantial investments in network equipment or fiber routes. Overhauling their entire infrastructure every few years is clearly not a viable option; today’s 100G platforms have to be both scalable and backward-compatible.

Recent advances in optical componentry, processing, and chip design have produced commercially available 400G chipsets for existing WDM platforms that are fully compatible with 100G networks. The result in one case is a high-bandwidth 100G system that extends reach by 50% while reducing power consumption and equipment footprint by more than 30%. When equipped for 400G transport, the same system delivers a fourfold increase in traffic payload rate and module density.

Chipsets for 400G from more vendors will soon arrive on the marketplace. Ideal devices will be in-house designs optimized for specific vendor products rather than for the diverse range of potential applications (and the resulting performance compromises) that merchant silicon typically has to serve. For service providers, these in-house chipsets will not only arrive on the market sooner, but will deliver improved performance over virtually any fiber infrastructure or topology. More importantly, they will provide a smooth evolutionary path that lets service providers leverage their existing investments and migrate to higher rates at their own pace, so they can stay ahead of bandwidth demand while increasing capacity in a way that makes sense for them.

Moving forward, the need for more bandwidth will have a profound impact on every aspect of service providers’ operations. There’s a lot at stake. But with 100G technology more accessible than ever—and with recent advancements setting the stage for even greater capacity—the time to act is most definitely now.

References

1. Informa Telecoms and Media, 2011.

2. Strategy Analytics.

3. Bell Labs: Value of Cloud for a Virtual Service Provider, 2011.

4. Telegeography: International Bandwidth Deployments, 2002–2016.

5. IBM Software: Delivering a New ROI for Communications, 2012.

Sam Bucci is vice president and general manager of Alcatel-Lucent’s Terrestrial Optics Product Unit.