Terabit for Data Centers

As cloud consumption has grown, multiple generations of data centers and their supporting networks have come and gone as internet content providers (ICPs) have tried to scale to meet the demand. Briefly summarized, first was GBF (Get Big Fast), as they added servers as fast as they could. Then they outgrew the confines of their walls and had to scale across multiple buildings on a campus, which eventually became cities, countries, and the globe.

As they scaled bigger, they needed to start caching content in each metropolitan area, and today the number of caching sites dwarfs the number of data centers. Peering links popped up to build shorter, direct paths between competing cloud services, as subscribers typically subscribe to everything. (It's free after all.) Much to everyone's surprise, the previously stale business of submarine networking became de rigueur as existing wet nets could not meet the performance requirements of modern database architectures.

And most recently, the newest trend is to attempt to get not just caching, but also compute engines as close to mobile towers as possible. Mobile advertising is now the biggest cash cow, so it makes sense to optimize the cloud for it. As one ICP veteran recently told me: "It's a pain driven process - we focus on wherever our biggest pain point is and then move on to the next one."

And the next pain point would appear to be terabit networks. Not terabit total link capacities, as some ICPs already have links that exceed 100 Tbps (seriously). ICPs will need terabit capacity per individual WDM channel on the network side, and per individual port on the client side. Not that such a goal is impossible; the challenge is doing terabit at a sufficiently low price and power. Once again, we run into the three Ps: power, price and performance – pick any two.

So what's the terabit transmission problem?

It may seem incredible that ICPs might worry about price or power when they sit on massive cash hoards measured in the billions of dollars. (Why not just build another nuclear power plant? They could probably pay for it with petty cash signed for by the new intern.) However, the business model of cloud providers is incredibly fragile, as any service given away for free would be. And the cloud business is now mature enough that all the numbers are well understood, with plenty of underlying data. That data shows that, unless both power and price per bit continue to fall, the cost of reaching subscribers will eventually exceed the ad revenue from those subscribers.

Like a Foucault pendulum, there seems to be a repeating cycle where client bandwidth outpaces network-side bandwidth, and then the opposite, and then back again. We are once again approaching the situation where client port bandwidth will exceed network bandwidth (per channel), and it is here the pendulum might stop. Even using the most exotic modulation formats coupled with coherent gain, 400 Gbps does not travel very far in a fiber, and the problem only gets worse as you chase 1 Tbps. Such is not the case on the client side, where distances are shorter and parallelism is more common.

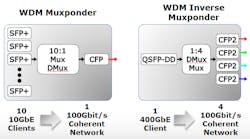

This brings us to the unique predicament that the ubiquitous WDM muxponding function might be supplanted by inverse-multiplexing (see Figure 1). Today, 10:1 muxponders enable lots of sub-rate 10 Gigabit Ethernet (GbE) client signals to be combined for transport over a single 100GbE WDM channel. Tomorrow, 400GbE clients will be inverse-multiplexed onto either 4x100GbE or 2x200GbE WDM channels, depending on distance. Future terabit client ports will by necessity be further inverse-multiplexed onto even more WDM channels for transport.
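The arithmetic behind the shift from muxponding to inverse-multiplexing can be sketched in a few lines of Python. This is only a back-of-the-envelope illustration: the function names are hypothetical, overhead is ignored, and the 1000-Gbps client split over 200-Gbps channels is an assumed example rather than a figure from any product or standard.

```python
def clients_per_channel(client_gbps: int, channel_gbps: int) -> int:
    """Muxponding: how many whole sub-rate clients fit into one WDM channel."""
    return channel_gbps // client_gbps


def channels_per_client(client_gbps: int, channel_gbps: int) -> int:
    """Inverse-multiplexing: how many WDM channels one client must be split across.

    Uses integer ceiling division so a partly filled channel still counts as one.
    """
    return -(-client_gbps // channel_gbps)


if __name__ == "__main__":
    print(clients_per_channel(10, 100))    # today's 10:1 muxponder -> 10
    print(channels_per_client(400, 100))   # 400GbE over 100G channels -> 4
    print(channels_per_client(400, 200))   # 400GbE over 200G channels -> 2
    print(channels_per_client(1000, 200))  # assumed terabit client -> 5
```

Once the client rate exceeds the channel rate, the first function returns zero and only the second one is meaningful, which is the crossover described above.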

Another factor that must be taken into consideration when talking terabit networks is Moore's Law running out of steam. More specifically, the semiconductor industry is now predicting there will be no more geometry shrinks and the gains they entail. This directly impacts terabit, as most recent gains in performance have been based on heavy digital signal processing. While the latest round of coherent DSPs, both internal designs from vertically integrated systems houses as well as from independent chip vendors, have brought a performance leap over their predecessors, future DSP generations will bring diminishing returns. For example, the upcoming generation of DSPs appears to be focused more on parallelism and power reduction than reaching terabit transmission per single channel.

Trends to consider

Indeed, parallelism seems to be the way forward on all fronts. This is analogous to how CPUs were routinely made faster until about the 3-GHz clock rate was hit. Since then, the focus has been on the number of cores and power.

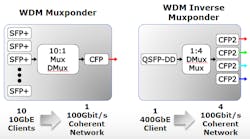

Similarly, standardization efforts have recently focused on filling in sub-rates between 10, 100, and 1000 Gigabit Ethernet, such as 25, 50, and 200 Gigabit Ethernet. The new sub-rates allow more efficient parallelism, delivering more performance per cost and power.

40/100 Gigabit Ethernet and later standards have added an important feature that helps efficiency when parallelizing a serial data stream: a multi-link gearbox (MLG). Lane markers are transparently added to the Ethernet physical coding sublayer frame such that a larger serial stream of data can be broken into any number of smaller parallel streams for transmission. The parallel streams are then bit-interleaved together again at the receive side with minimal processing power, buffering, latency, and overhead required.
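The idea of striping a serial stream across marked lanes and interleaving it back together can be shown with a toy Python sketch. It is deliberately simplified and is not the actual MLG format: it works on bytes rather than physical coding sublayer blocks, it tags each lane once with its index instead of inserting periodic lane markers, and the function names `distribute` and `reassemble` are hypothetical.

```python
def distribute(stream: bytes, lanes: int) -> list[tuple[int, bytes]]:
    """Round-robin the serial stream across lanes, tagging each lane with its index."""
    return [(lane, stream[lane::lanes]) for lane in range(lanes)]


def reassemble(tagged_lanes: list[tuple[int, bytes]]) -> bytes:
    """Put lanes back in marker order, then interleave them into one serial stream."""
    ordered = [data for _, data in sorted(tagged_lanes)]
    out = bytearray()
    longest = max(len(data) for data in ordered)
    for i in range(longest):
        for data in ordered:
            if i < len(data):  # trailing lanes may be one byte shorter
                out.append(data[i])
    return bytes(out)


if __name__ == "__main__":
    payload = b"terabit for data centers"
    lanes = distribute(payload, 4)
    lanes.reverse()  # lanes may arrive in any order; the markers restore it
    assert reassemble(lanes) == payload
```

The receive side needs no knowledge of the payload, only the lane markers, which is why the real mechanism can get by with so little buffering and processing.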

A great example of the value of MLG would be to look at a standard 100GbE client interface (see Figure 2). Without MLG, even with a multi-rate electrical interface, the most 10GbE clients that can be supported is four, thus stranding 60% of the bandwidth on the port. With MLG, ten 10GbE clients can be supported, thus yielding full throughput. As higher-speed Ethernet in the future depends more upon parallelism, MLG features are going to be critical to efficient transfer of data between servers, switches, and routers.
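The stranded-bandwidth figure follows directly from the port and client rates. A minimal Python sketch, assuming a hypothetical helper name and ignoring any encoding overhead, reproduces the numbers:

```python
def stranded_percent(port_gbps: int, client_gbps: int, max_clients: int) -> float:
    """Percentage of port bandwidth left unused when only max_clients can attach."""
    used_gbps = min(max_clients * client_gbps, port_gbps)
    return 100 * (port_gbps - used_gbps) / port_gbps


if __name__ == "__main__":
    # Without MLG: four 10GbE clients on a 100GbE port.
    print(stranded_percent(100, 10, 4))
    # With MLG: ten 10GbE clients on the same port.
    print(stranded_percent(100, 10, 10))
```

With four clients the port carries 40 Gbps and strands 60 Gbps; with ten clients nothing is stranded.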

Also analogous to CPUs, as clock speeds flattened out, emphasis shifted to adding value-added features. CPUs gained video accelerators, encryption engines, memory controllers, PCIe bus switches, etc. Similarly, as coherent DSPs are running out of gains in modulation formats, they have started to add value-added features such as the aforementioned MLG, Link Layer Discovery Protocol (LLDP) support, and Layer 1 transport encryption.

LLDP has taken on new importance as the scale of data centers has grown beyond the ability of humans to manage them. It simply is impossible for network engineers to set up every piece of equipment in a rack/row/building and enter it into the network configuration. LLDP enables equipment to be racked and stacked as fast as possible; automatic network crawlers identify what has been added where, who is connected to whom, and how to best bridge across all devices on the network.

Transport layer encryption has experienced a renaissance of late. Only a few years ago, it was seen as a redundant feature that was nice to have if it was thrown in for free (but don't ask me to pay for it). The thinking was that, with all the higher layer encryption protocols running, there was really no need for it at Layer 1. Fast forward to today and it has become a checkbox item that is mandatory.

The industry realized that a sufficiently motivated person or group of people can defeat nearly all higher-layer encryption protocols through a variety of means, including but not limited to man-in-the-middle attacks, spoofing, phishing, brute-force password guessing, and more. Furthermore, various leaks have shown state-sponsored actors actually prefer to tap into communications at their foundation: the large bundles of fiber crisscrossing the globe.

If the biggest data breaches occur on the fiber, then it only makes sense to want to protect transmissions at the fiber level. Layer 1 encryption protects data at the fiber, and is the only method of encryption that does not impact throughput – in other words, the only technique that can keep up with terabit channel speeds.

Into the void

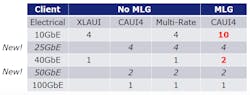

The increasing parallelism and value-added features are nice, but the emphasis on them is proof of a larger problem. Namely, a void still remains between grey short-reach clients and long-haul coherent optics at 100-Gbps speeds -- and it only gets worse as you approach terabit-per-channel data rates.

The sweet spot of the industry need is 40-80 channels at distances in the hundreds of kilometers. No solution today hits that bullseye, but the industry is approaching it from both ends of the solution space (see Figure 3). Direct-detect strategies seek to add colors and distance to client-like solutions. For example, a QSFP running two color channels at 50 Gbps using PAM4 modulation achieves 80 km with 40 channels. Approaching from the opposite direction, a CFP2-ACO achieves 1000-2000 km with 80 channels using coherent detection and DP-QPSK to DP-16QAM modulation. That still leaves quite a large gap to fill.

The latest attempt to fill the gap is a "coherent-lite" industry proposal that would have the potential to yield 96 channels of 400 Gbps at 64QAM. However, distances could be limited to around 70 km, which risks reducing the total potential addressable market.

The hard truth is that the optical engineer's bag of technological tricks is running dry. The transition from muxponders to inverse-muxponders is a sign of things to come. The fiber is full, and higher-speed steps might be faster, but will not necessarily cram more data on a fiber, nor increase throughput.

Higher and higher order modulation techniques do help, but at diminishing levels of return for the extra power and expense. Bringing higher-order modulation from the network side to the client side will breathe new life into client connection throughput, but at significant price/power/space penalties.

The transition to terabit is going to be less about making the next leap in speed and more about managing the complex compromises that must be made to arrive at the optimal balance point of conflicting priorities.

Jim Theodoras is vice president of global business development at ADVA Optical Networking.