DCI Requirements Drive the Next Generation of Coherent DWDM

Why are we so infatuated with data center interconnect, or DCI? Sure, it’s the application most responsible for recent growth in optical spending. And yes, it flows from two of the great megatrends shaping the networking world – digital content delivery and enterprise cloud IT. It also doesn’t hurt that some of its most notable practitioners are names we see every day on our TVs, laptops, and mobile devices.

But I’m an engineer. And if you’re reading this, it’s likely that you are, too. So you might agree that DCI grabs our attention because it distills optical networking to its purest form. More than any other application, DCI explores the technical and economic boundaries of transmitting maximum capacity at the lowest possible cost per bit over distances from metro to subsea. If optical networking can be defined as the practice of combining bandwidth and distance, and economics the study of the optimal use of scarce resources, then DCI – which places immense demands on optical fiber’s finite spectrum – surely sits at their nexus.

Few things are as scarce as the usable spectrum in an optical fiber. (OK, I suppose the wireless guys have us on this one.) The ultimate challenge of optical networking and DCI is to use that finite spectrum efficiently. Claude Shannon’s famous capacity limit, expressed as C = B·log2(1 + SNR), can be rewritten (by moving the B under the C) as C/B = log2(1 + SNR), a simple relationship between spectral efficiency and signal-to-noise ratio (SNR). But noise in optical systems is caused by the amplifiers that make long-distance transmission possible, so SNR falls as links get longer. The challenge, then, is to make maximum use of the SNR the line system provides. We can do this using sophisticated mathematical algorithms implemented in coherent digital signal processors (DSPs).
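As a rough sketch of that rearrangement, dividing Shannon's formula by B gives spectral efficiency in bit/s/Hz as a function of SNR alone. The snippet below evaluates it for a few SNR values; the function name and the sample SNR values are illustrative assumptions, not figures from any real line system.

```python
import math

def shannon_spectral_efficiency(snr_db: float) -> float:
    """Upper bound on spectral efficiency (bit/s/Hz) for an SNR given in dB.

    Implements C/B = log2(1 + SNR) for a channel with additive white
    Gaussian noise; real fiber links add impairments not modeled here.
    """
    snr_linear = 10 ** (snr_db / 10)  # convert dB to a linear power ratio
    return math.log2(1 + snr_linear)

# Illustrative SNR values only (assumed, not measured).
for snr_db in (5, 10, 15, 20):
    se = shannon_spectral_efficiency(snr_db)
    print(f"{snr_db:>2} dB -> {se:.2f} bit/s/Hz")
```

Because the bound grows with the logarithm of SNR, each extra bit/s/Hz costs roughly 3 dB of SNR at high SNR, which is why short links with SNR to spare are such an attractive target.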

The coherent revolution

With the advent of 100G transmission in 2010, coherent digital signal processing revolutionized optical networking. It increased spectral efficiency by a factor of 10 over existing systems with no sacrifice in distance, causing engineers to begin eyeing the Shannon limit as a benchmark, and ultimately a goal, for optical performance. 100G wavelengths are spectrally efficient near their maximum distance of around 3,000 km, but they don’t take advantage of the higher SNR available at shorter distances. This inverse relationship between achievable spectral efficiency and link distance is called the rate/reach trade-off, and it’s exacerbated by the nature of DCI.

Data centers are everywhere – from edge data centers sprinkled throughout metropolitan areas to continental mega data centers strategically located throughout the world. The distances between them are determined by geography and economics rather than by network operators. DCI distances range from across the street to the other side of the world. It is for this reason that subsequent DSP generations introduced support for software-programmable modulation formats that could optimize spectral efficiency over varying distances.

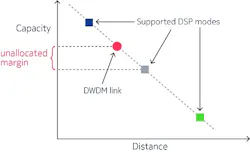

With moderately programmable DSPs, the diverse range of DCI distances still creates the problem of excessive unallocated margin. It occurs when a DWDM link is provisioned with SNR margin beyond what is necessary for aging and reliability.

Figure 1. The unallocated margin problem.

Figure 1 illustrates a typical example, in which the red dot represents a DWDM link and the colored squares represent the capacity of available modulation formats. The distance of this link falls between two supported DSP modes, represented by the blue and gray squares. To operate the link successfully, the operator must use the longer-reach, lower-capacity mode (gray square).

This “quantization inefficiency” results from the coarse granularity of DSP modes supported by most of today’s optical transponders. Greater DSP programmability could exploit this excess margin to increase the capacity (hence the spectral efficiency) of the link, thereby minimizing cost per bit. With DCI bandwidth demand doubling every two to three years, it’s crucial to optimize every link in a network.
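The quantization effect can be sketched in a few lines. The mode table below is hypothetical: the names, capacities, and required-SNR thresholds are made-up placeholders chosen only to show the selection logic, and `pick_mode` is an illustrative helper, not a vendor API.

```python
from typing import NamedTuple, Optional

class Mode(NamedTuple):
    name: str
    capacity_gbps: int
    required_snr_db: float  # hypothetical threshold, not a vendor spec

# Hypothetical coarse mode table (placeholder numbers).
MODES = [
    Mode("mode-A", 100, 9.0),
    Mode("mode-B", 200, 16.0),
]

def pick_mode(link_snr_db: float, reserved_margin_db: float) -> Optional[Mode]:
    """Highest-capacity mode the link supports after reserving margin
    for aging and reliability, or None if no mode fits."""
    usable_snr_db = link_snr_db - reserved_margin_db
    feasible = [m for m in MODES if m.required_snr_db <= usable_snr_db]
    return max(feasible, key=lambda m: m.capacity_gbps) if feasible else None

def unallocated_margin_db(link_snr_db: float, reserved_margin_db: float) -> float:
    """SNR left over beyond the reserved margin and the chosen mode's need."""
    mode = pick_mode(link_snr_db, reserved_margin_db)
    if mode is None:
        raise ValueError("link cannot support any mode")
    return (link_snr_db - reserved_margin_db) - mode.required_snr_db

# Assumed link: 17 dB delivered SNR, 2 dB reserved for aging.
chosen = pick_mode(17.0, 2.0)
print(chosen.name, unallocated_margin_db(17.0, 2.0), "dB unallocated")
```

In this made-up case the link misses the higher mode by 1 dB and falls back to the lower one, leaving 6 dB of SNR that carries no traffic. A finer-grained mode table would shrink that leftover.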

Solving the unallocated margin problem

The first coherent DSPs operated at 100G, using QPSK modulation. Operators were able to quickly adopt these new interfaces because their baud rate of roughly 33 Gbaud enabled them to operate over ubiquitous 50-GHz-spaced line systems. Subsequent DSP generations introduced rudimentary rate adaptability by implementing higher-order QAM modulation formats such as 8QAM and 16QAM, while still operating at 33 Gbaud.
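The arithmetic behind these modes can be approximated as symbol rate times bits per symbol times the number of polarizations. The sketch below computes the raw line rate only. It assumes dual-polarization transmission, which the text above does not state explicitly, and it does not model the FEC and framing overhead that accounts for the gap between the raw figure and the net 100G or 200G payload; that overhead varies by implementation.

```python
import math

def raw_line_rate_gbps(baud_gbaud: float, constellation_points: int,
                       polarizations: int = 2) -> float:
    """Raw (pre-FEC) line rate in Gb/s.

    Assumes every symbol carries log2(points) bits on each polarization.
    """
    bits_per_symbol = math.log2(constellation_points)
    return baud_gbaud * bits_per_symbol * polarizations

# 33 Gbaud is the approximate symbol rate cited for early coherent DSPs.
for name, points in (("QPSK", 4), ("8QAM", 8), ("16QAM", 16)):
    print(f"{name:>5}: {raw_line_rate_gbps(33, points):.0f} Gb/s raw")
```

At a fixed 33 Gbaud, the only lever is bits per symbol, so capacity moves in large steps from one format to the next.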

200G 16QAM has been widely adopted in metro networks worldwide, due in no small part to its compatibility with 50-GHz infrastructures. This generation of DSPs succeeded in capturing some of the unallocated margin typically wasted at shorter distances. Offering few modes of operation, they added minimal complexity to link engineering. But fixed 33-Gbaud operation severely limited the rate/reach granularity of these early DSPs. Clearly, further innovation was needed.

Baud rates in the 45- to 57-Gbaud range were the next frontier in the evolution of coherent DSPs. Faster symbol transmission opened the door to higher bit rates and longer reaches. Different combinations of baud rates and modulation formats brought new DSP modes supporting more rate/reach options. The new modes unlocked precious bandwidth that was previously inaccessible at certain link distances, allowing network operators to keep chipping away at the unallocated margin problem.

This evolutionary step opened up 200G for long-haul applications. But it added complexity by creating the need to deploy flexible-grid line systems and manage multiple channel widths. For more than a decade, network operators planned, built, and operated networks around a fixed grid of channels spaced at 50 GHz. Flexible-grid transmission accommodates wider channel spacing and higher baud rates. But operational considerations relating to link engineering, spectral planning, and the potential for stranded spectrum (middle row, Figure 2) have slowed the rate of adoption of this generation of interfaces.

For example, baud rates in the 45- to 57-Gbaud range require a minimum of 62.5-GHz spacing (utilizing the 12.5-GHz granularity of flex-spectrum ROADMs). Unless operators plan carefully, networks that use a mix of 50-, 62.5-, and 75-GHz channels could create unusable chunks of spectrum, especially after restoration events or network churn.
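A toy model makes the fragmentation risk concrete. It treats the spectrum as a row of 12.5-GHz slots and checks whether any contiguous free run can host a new channel. The slot layout and the helper names are illustrative assumptions, not a ROADM or controller API.

```python
from typing import Sequence

SLOT_GHZ = 12.5  # flex-grid granularity cited above

def slots_needed(channel_ghz: float) -> int:
    """Number of 12.5-GHz slots a channel of the given width occupies."""
    slots = channel_ghz / SLOT_GHZ
    if not slots.is_integer():
        raise ValueError("channel width must be a multiple of 12.5 GHz")
    return int(slots)

def largest_free_run(occupied: Sequence[bool]) -> int:
    """Length of the longest contiguous run of free (False) slots."""
    best = run = 0
    for used in occupied:
        run = 0 if used else run + 1
        best = max(best, run)
    return best

def can_place(occupied: Sequence[bool], channel_ghz: float) -> bool:
    """True if some contiguous free run is wide enough for the channel."""
    return largest_free_run(occupied) >= slots_needed(channel_ghz)

# Toy spectrum (True = occupied): a 4-slot gap left by a removed 50-GHz
# channel, plus 2 free slots at the band edge. Six slots (75 GHz) are
# free in total, but they are not contiguous.
spectrum = [True] * 6 + [False] * 4 + [True] * 8 + [False] * 2

for width in (50, 62.5, 75):
    print(f"{width} GHz fits: {can_place(spectrum, width)}")
```

Although 75 GHz of spectrum is nominally free in this example, only a 50-GHz channel can be placed. The remainder is stranded until neighboring channels are moved.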

New DSPs bring new promises and challenges

A new generation of coherent DSPs was announced in 2018. These DSPs promise to provide an unprecedented level of modulation flexibility, eliminate unallocated margin, and capture every bit of latent capacity available on a given link. One shared characteristic of these new DSPs is the use of state-of-the-art silicon processes to support a baud rate of approximately 64 Gbaud. 64-Gbaud interfaces require a 75-GHz DWDM channel, which is poised to become the predominant channel size for the next era of DWDM networks. Consistent channel spacing simplifies planning and eliminates the possibility of fragmented spectrum. It’s also much more accommodating of the dynamic wavelength provisioning and re-routing that we expect to see in software-defined networking (SDN) controlled networks. When necessary, 75-GHz channels can coexist with 50-GHz channels because they conveniently share a low least common multiple of 150 GHz. This minimizes the likelihood of stranding spectrum in a mixed channel-width environment.
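The least-common-multiple argument is easy to check numerically. The sketch below works in units of 12.5-GHz slots so the arithmetic stays in integers; using 12.5 GHz as the unit is an assumption carried over from the flex-grid granularity mentioned earlier, and `common_block_ghz` is a hypothetical helper name.

```python
from functools import reduce
from math import gcd

SLOT_GHZ = 12.5  # assumed slot unit (flex-grid granularity)

def common_block_ghz(*widths_ghz: float) -> float:
    """Smallest block of spectrum that each channel width tiles exactly."""
    slots = [round(w / SLOT_GHZ) for w in widths_ghz]
    lcm_slots = reduce(lambda a, b: a * b // gcd(a, b), slots)
    return lcm_slots * SLOT_GHZ

print(common_block_ghz(50, 75))        # 150.0
print(common_block_ghz(50, 62.5))      # 250.0
print(common_block_ghz(50, 62.5, 75))  # 750.0
```

A 150-GHz block holds exactly three 50-GHz channels or two 75-GHz channels, so the two widths can be swapped without leaving gaps. Adding 62.5-GHz channels to the mix pushes the common block out to 750 GHz, which is far harder to keep aligned.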

Consistent channel spacing will undoubtedly ease the operational concerns of adopting this new generation of highly flexible coherent interfaces. But how will these interfaces provide, within uniform channel spacing, the extreme level of rate adaptability required to maximize capacity across the vast range of possible link distances? The key is to support a modulation regime that maximizes spectral efficiency across the complete range of distances and offers fine granularity without requiring a change in baud rate.

One option is to echo the first programmable DSPs by supporting numerous discrete QAM modulation formats. There are two problems with this approach. Standard QAM constellations alone (e.g., 8QAM, 16QAM) do not provide sufficient rate/reach granularity to eliminate unallocated margin. Attempting to overcome this with additional non-square QAM constellations (e.g., 45QAM) significantly increases the implementation complexity of the DSP. None of the latest-generation DSPs adopt this approach.

Time-division hybrid modulation (TDHM) is another method for achieving finely adjustable rate adaptability. It uses a small set of traditional square QAM constellations, rapidly alternating between pairs of them (hence the time-division descriptor) to achieve rate/reach performance between those of the constituent square QAM constellations. The mixing ratio is adjustable and allows for fine rate/reach granularity.
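The effect of the mixing ratio on rate can be sketched as a time-weighted average of bits per symbol. This is a simplified illustration: the function name is hypothetical, and it models only the nominal bits per symbol, not the SNR behavior or framing details of a real TDHM implementation.

```python
import math

def tdhm_bits_per_symbol(points_low: int, points_high: int,
                         high_fraction: float) -> float:
    """Average bits per symbol when a fraction of symbol slots uses the
    higher-order constellation and the rest use the lower-order one."""
    if not 0.0 <= high_fraction <= 1.0:
        raise ValueError("high_fraction must be between 0 and 1")
    bits_low = math.log2(points_low)
    bits_high = math.log2(points_high)
    return (1 - high_fraction) * bits_low + high_fraction * bits_high

# Example pairing (assumed): alternate between 16QAM and 64QAM.
for fraction in (0.0, 0.25, 0.5, 0.75, 1.0):
    bits = tdhm_bits_per_symbol(16, 64, fraction)
    print(f"{fraction:.2f} of symbols on 64QAM -> {bits:.2f} bits/symbol")
```

Sweeping the fraction fills in the rates between the two constituent formats, which is the source of TDHM's fine granularity.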

The problem with TDHM lies in performance – the capacity available at a given SNR or, alternatively, the achievable distance at a given data rate. Due to inefficiencies inherent in the mapping of data bits into the two rapidly oscillating square QAM constellations, the performance of TDHM suffers, only achieving parity with traditional square QAM modulation when no mixing is present. In other words, TDHM imposes a cost to overcome the unallocated margin problem.

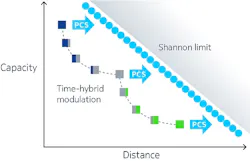

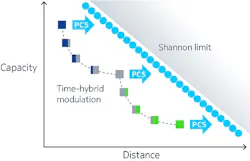

The resulting performance curve droops at rate/reach points between those addressed by square QAM alone, as shown in Figure 3, where squares consisting of two colors represent time-multiplexed constellations. At points that use a single square QAM constellation, where no mixing penalty exists, performance falls short of that achieved with the final technique used by this new generation of coherent DSPs – probabilistic constellation shaping, or PCS.

Figure 3. Relative performance of time-division hybrid modulation and PCS.

PCS is a new modulation technique that provides finely variable rate/reach granularity and superior optical performance. With standard square QAM modulation formats such as 64QAM, all constellation points are used with equal probability. By applying a sophisticated and elegant coding algorithm to the source data, PCS determines which constellation points are used and how often, matching the wavelength to the specific optical characteristics of each route with an adjustable shaping factor.

In addition to its rate-versus-reach benefits, this shaping function improves optical performance by mapping bits into symbols in a more energy-efficient manner than square QAM constellations. The net effect is that PCS improves optical reach and/or capacity by up to 25%, bringing spectral efficiency to within a fraction of Shannon’s theoretical limits. And it does so with a single modulation format – currently based on 64QAM – using a single channel size of 75 GHz.
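To illustrate how a shaping factor trades rate for energy, the sketch below applies a Maxwell-Boltzmann distribution to a 64QAM grid. That distribution is common in the PCS literature but is an assumption here, since the text above does not name the distribution or the coding algorithm any particular DSP uses. The parameter `nu` stands in for the adjustable shaping factor.

```python
import math
from itertools import product

LEVELS = (-7, -5, -3, -1, 1, 3, 5, 7)  # per-axis amplitudes of a 64QAM grid

def shaped_64qam(nu: float):
    """Return (entropy in bits/symbol, average symbol energy) for 64QAM
    with point probabilities proportional to exp(-nu * |x|^2).

    nu = 0 gives uniform 64QAM; larger nu favors low-energy inner points.
    """
    energies = [i * i + q * q for i, q in product(LEVELS, repeat=2)]
    weights = [math.exp(-nu * e) for e in energies]
    total = sum(weights)
    probs = [w / total for w in weights]
    entropy = -sum(p * math.log2(p) for p in probs if p > 0)
    avg_energy = sum(p * e for p, e in zip(probs, energies))
    return entropy, avg_energy

# Illustrative shaping factors (assumed values).
for nu in (0.0, 0.02, 0.05, 0.1):
    entropy, energy = shaped_64qam(nu)
    print(f"nu={nu:<4} entropy={entropy:.2f} bits/symbol, avg energy={energy:.1f}")
```

With `nu` at zero, every point is equally likely and the constellation carries the full 6 bits per symbol. Raising `nu` lowers both the entropy and the average energy smoothly, which is the continuous rate adjustment that discrete formats cannot offer.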

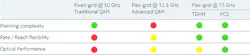

Table 1 summarizes the three generations of programmable coherent DSPs and their qualities relative to planning complexity, rate/reach flexibility, and optical performance.

Table 1: Evolution and characteristics of programmable coherent DSPs

By taking advantage of improvements in silicon and optics, programmable coherent transmission has improved remarkably from its single-rate origins less than a decade ago. Modern DSPs are largely capable of eliminating the problem of unallocated margin. They can put the latent capacity of optical fiber to work at any conceivable link distance. Innovations such as PCS also improve spectral efficiency to near Shannon limits. Combined, these two axes of improvement ensure that network operators can maximize their deployed fiber and optical networking hardware investment and keep up with the rapid growth of data center interconnect.

Kyle Hollasch is director of product marketing for optical networking at Nokia, where he is responsible for promoting the company's broad portfolio of packet-optical transport solutions. Prior to Nokia, Kyle held roles in sales engineering and product line management at Cisco Systems, with responsibility for sales enablement and strategic customer engagements across both service provider and enterprise markets. Kyle began his career at Lucent Technologies, performing research involving high-speed data transmission over twisted-pair cabling and leading the deployment of long-haul DWDM systems. Kyle holds a BS in Electrical Engineering from Rensselaer Polytechnic Institute and a Master of Electrical Engineering degree from Cornell University.