New model for building next-generation optical data networks

The Optical Data Network Hierarchy promises an end-to-end approach to network infrastructure.

WILLIAM SZETO, Iris Labs Inc.

During the last decade, two major advances in optical networking have been viewed as critical factors in addressing the ever-increasing demand for capacity in service-provider networks. DWDM, which boosted the usable bandwidth of the installed fiber plant by dividing a single fiber into multiple channels, was the first of these advances. The second was the intelligent management of bandwidth through devices such as wavelength (lambda) routers and crossconnects, which supported increasingly dynamic networks with intelligent provisioning and restoration.

What's next? A pair of major challenges faces the industry at this juncture. One is to keep scaling an intrinsically data-centric network, from the customer access point to the metropolitan area and all the way to the core and long-haul networks, as demand grows to once unimaginable levels. The other is to efficiently create and manage the data-centric optical services (e.g., Internet access over virtual private networks, intranet/extranet connections, streaming videoconferencing) that will be running on this network.

The goal, of course, is to enable service providers to offer a rich and flexible set of services that can be differentiated in terms other than price and are well supported by an infrastructure optimized for service flexibility, complexity, space, and cost. At the network edge, business subscribers require a variety of ultra-high-speed optical data services; new services such as Gigabit Ethernet must be supported without sacrificing legacy applications. To achieve efficient use of resources, packet processing must be distributed to several locations in the network.

In the core of the network, increasing and managing capacity is critical. Here, the latest 10-Gbit/sec transmission technology is not enough to create an efficient, manageable network as router port and wavelength counts increase. High-bit-rate connections must be established beyond the limitations of individual wavelengths, leading to reduction in core router port count and routing of optical signals at granularities greater than that of a single optical wavelength or channel.

But despite tremendous advances in the fields of packet processing, transmission, and optics, independent developments in these fields typically are not synchronized in a way that allows network designers to build fully integrated solutions. What is needed is an architecture that utilizes the best optical, data, and network-management technologies available at any given time to create networks that scale from access to core; this architecture should incorporate multiple levels of data aggregation, routing, and optical devices that offer increasing rates and capacity to address increasing demand levels. Overlaying this platform hierarchy should be a network-management system that incorporates the functionality of all network elements and has a common look and feel to let network operators efficiently provide their customers with end-to-end services.

Packet traffic represents the bulk of the new demand placed on today's backbone routers. As packet traffic grows, massive numbers of wavelengths must be managed at two critical points of the network: the optical junction and the core packet switch. But even the largest carrier networks do not have huge numbers of points of presence (sites with hubs and switches); the largest has perhaps 80 such junctions. Thus the traffic is typically being transmitted to a fairly limited number of destinations.

In other words, to achieve multiterabit capacities, large numbers of optical channels are being deployed in parallel along relatively few routes. At hub sites where these parallel channels terminate, huge and complex crossconnects have grown up; crossconnects with 12,000 ports would not be uncommon in the near future!

All of these interconnections result in entries in routing tables and links that must be maintained by the control plane. Furthermore, from a management perspective, each of these parallel links must be independently provisioned and maintained. Even with core networks now migrating from a 2.5-Gbit/sec to a 10-Gbit/sec infrastructure, the transmission technology is not sufficient to create an efficient, manageable network as router port and wavelength counts continue to increase.

For the solution to this problem, we return to the fact that large numbers of these wavelengths are running in parallel across a small number of routes. Because of that, there is often no need to crossconnect them. Rather than creating huge numbers of pipes and ports, it would be far more efficient to create bigger, faster pipes and ports.

Bigger pipes, however, are not likely to come only from increased laser transmission rates. Historically, such increases have occurred very slowly, rising only from 1 Gbit/sec to 10 Gbits/sec in the 20 years since the first fiber-optic networks were installed. Much of the existing fiber will have difficulty supporting a move to the next increment, 40 Gbits/sec. And each jump in speed requires more precision in lasers, modulation, and error-correction techniques. Even with faster transmission techniques, there are significant tradeoffs in capacity, distance, and cost that will be challenging to overcome.

Router and switch port speeds, on the other hand, have increased steadily, providing a means to build the big pipes needed today. Creating these pipes will reduce both core router port count and optical signal routing requirements, allowing the industry to move forward with truly scalable networks without waiting for the next advances in transmission technology. Furthermore, the smaller the number of network elements and network objects in use, the easier a network is to manage. Carriers want simplicity, which they know comes from the smallest possible number of ports operating at the highest possible rates.

But how can these big pipes be created when packet router ports cannot currently operate at rates higher than the transmission rate of the WDM channel that carries them? The answer lies in a new architectural hierarchy of devices, all managed by a common platform, that provides for data aggregation and routing by utilizing progressive rates and capacities as traffic moves across the network. This architecture, called the Optical Data Network Hierarchy (ODNH), allows the bit rate to evolve along two dimensions: not just across a single time-concatenated optical channel with multiple time-division multiplexing (TDM) slices but also across the optical channels themselves.

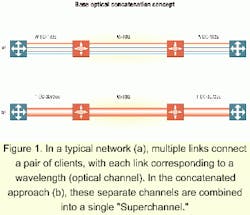

Achieving this goal involves a new standards-based technique, optical-channel concatenation (see Figure 1), that combines the capacity of multiple wavelengths into a single high-speed pipe (which we'll call by the trademarked term "Superchannel"). The technique involves a new framing protocol that breaks down wavelength "walls," sending bits across multiple optical channels simultaneously.
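The idea of spreading one payload across several optical channels can be illustrated with a toy example. The sketch below is only an assumption about the general principle (round-robin byte striping across lanes and reassembly at the far end); the article does not describe the actual framing protocol, and a real system would also have to handle framing overhead, lane skew, and lane failures. The names `stripe` and `reassemble` are hypothetical.

```python
from typing import List


def stripe(payload: bytes, n_channels: int) -> List[bytes]:
    """Distribute payload bytes round-robin over n_channels lanes.

    Lane i carries bytes i, i + n, i + 2n, ... of the payload.
    This is an illustrative scheme, not the ODNH framing protocol.
    """
    if n_channels < 1:
        raise ValueError("n_channels must be at least 1")
    return [payload[i::n_channels] for i in range(n_channels)]


def reassemble(lanes: List[bytes]) -> bytes:
    """Interleave the lanes back into the original byte order."""
    n = len(lanes)
    out = bytearray(sum(len(lane) for lane in lanes))
    for i, lane in enumerate(lanes):
        # Each lane fills every n-th position, starting at offset i.
        out[i::n] = lane
    return bytes(out)
```

With four lanes, `stripe(b"0123456789", 4)` yields `[b"048", b"159", b"26", b"37"]`, and `reassemble` restores the original ten bytes. Each lane carries roughly a quarter of the traffic, which is why the aggregate pipe can run faster than any single wavelength.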

With this approach, advances in packet switching are at last decoupled from advances in transmission technology, allowing each to be developed and implemented independently. The immediate benefits: OC-768 channel concatenated (cc) and OC-3072cc switch port outputs can be transported on today's installed service-provider fiber infrastructures. Sophisticated network topologies and adds/drops can be supported without a huge number of crossconnects.

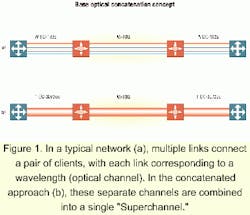

The ODNH specifies a set of five highly integrated architectural elements, spanning the metro area to the core (see Figure 2). The five device types coordinate with one another, under a common network-management umbrella, to deliver appropriate bandwidth and innovative optical data services. ODNH core devices (Type 4 and Type 5) provide restoration and transport of large volumes of data traffic. ODNH metro/access devices (Types 1, 2, and 3) multiplex data in the "last mile," aggregate data streams at the central office onto faster metro backbone pipes, and provide traffic distribution and bandwidth management across metro rings.

ODNH core devices. The next generation of routers and switches will for the first time generate payloads at rates beyond the transmission rate of individual wavelength channels. The transmission systems must therefore use new techniques to support these payloads.

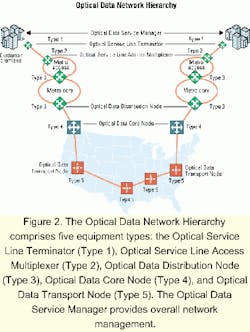

The Optical Data Transport Node (Type 5 in the ODNH scheme) provides ultra-high-capacity transport in the core of the network, transmitting signals over great distances without regeneration. That's possible partly because of a new modulation concept known as "waveband," made possible through the optical-channel concatenation methodology previously described.

A waveband is a data-unaware slice of the optical spectrum that supports multiple physically grouped optical payloads but is treated as a single unit, providing a flexible, scalable, high-bandwidth connection between source and destination (see Figure 3). Wavebands, which are the basis for creating Superchannels, are treated independently, optically added or dropped individually as needed at inline sites, and routed optically at fiber junction sites. The waveband's high granularity allows for superior transmission capability (payloads can have rates greater than the rate of any individual wavelength) and simplifies the network architecture because router port counts are minimized and fewer objects need to be managed.

The Type 5 devices support three basic types of network node. The simplest of these is the inline amplifier (ILA), which enables increased signal transmission distances by boosting the power of the transmission signals. Long-distance transmission capability means that many more ILAs can be deployed in series before regeneration of the wavebands is required.

At each ILA site, wavebands can be created or dropped as desired by transforming the site into the second node type, the waveband optical add/drop multiplexer (WB-OADM). Wavebands can be terminated or regenerated at these sites as required; those not dropped are unaffected and transmitted through the site optically.

The third node type, the multispoke hub, exists at network nodes where there is a fiber junction of three or more cables (typically at major switch sites). At these sites, some wavebands are created and wavebands arriving from remote locations are terminated; others pass through the sites optically en route to their final destinations, and still others are regenerated in the sites as required.

The most scalable, simple, and sustainable core architecture has been used for years: As demand increases, the port speed, and with it the throughput of the device, is increased. While today's terabit routers have approached this problem by using large numbers of lower-speed (OC-192) ports, the Optical Data Core Node (Type 4 device in the ODNH architecture) interfaces to a Superchannel of four, 16, or more 10-Gbit/sec (and in the future 40-Gbit/sec) streams that have been concatenated into one 40-Gbit/sec or 160-Gbit/sec pipe (OC-768cc or OC-3072cc, respectively) and beyond. Superchannel switch ports provide robust protection and restoration mechanisms. They are true high-capacity ports that use Multiprotocol Label Switching (MPLS) techniques to avoid the limitations and complexity of solutions that attempt to mimic high-speed performance. The scale of optical crossconnects is reduced by the factor of optical concatenation (e.g., n=16 for OC-3072cc).
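The rate and port-count arithmetic behind these figures can be checked with a few lines of code. The sketch below uses the standard SONET relationship that an OC-n signal runs at n times the OC-1 rate of 51.84 Mbit/sec; the function names are hypothetical, and the port-count helper simply assumes that every group of n parallel ports collapses into one concatenated port.

```python
import math

OC1_MBPS = 51.84  # SONET OC-1 line rate in Mbit/sec


def oc_rate_gbps(level: int) -> float:
    """Line rate of an OC-<level> signal in Gbit/sec."""
    return level * OC1_MBPS / 1000.0


def concatenated_ports(parallel_ports: int, n: int) -> int:
    """Ports needed if every n parallel ports become one concatenated port.

    Assumes all parallel ports follow the same route, so they can be grouped.
    """
    return math.ceil(parallel_ports / n)
```

Under these assumptions, `oc_rate_gbps(192)` is about 9.95 Gbit/sec, `oc_rate_gbps(768)` about 39.81, and `oc_rate_gbps(3072)` about 159.25, which are the nominal 10-, 40-, and 160-Gbit/sec rates. A 12,000-port crossconnect of OC-192 channels would shrink to `concatenated_ports(12_000, 16)`, or 750 ports, at OC-3072cc.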

Superchannels are mapped onto the wavebands to be routed across the long-haul network by the Type 5 devices previously described. In addition, standard interfaces are used to map signals into the waveband payload to provide compatibility with legacy systems and networks.

Restoration is handled through the intelligent distribution of packet traffic over dual diverse paths rather than over standby idle paths in the transport layer. For protection from failures in the physical layer, a graceful degradation mechanism responds to physical-layer changes and provides instantaneous traffic-engineering-based recovery. Carrier-class reliability designed into the Type 4 device can create a packet network capable of handling the most sensitive payloads. That obviates the need for optical-layer wavelength-granularity restoration.
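One way to picture restoration by traffic distribution, rather than by idle standby paths, is a capacity-weighted flow assignment. The sketch below is an illustration under stated assumptions, not the Type 4 device's mechanism: flows are hashed deterministically onto whichever diverse paths still have capacity, so losing part or all of one path shifts traffic to the other. The path names, the `pick_path` function, and the use of CRC-32 as the hash are all hypothetical choices.

```python
import zlib
from typing import Dict


def pick_path(flow_id: str, capacity_gbps: Dict[str, float]) -> str:
    """Map a flow onto one of the live paths, weighted by remaining capacity.

    A path with zero capacity is treated as failed and receives no traffic.
    """
    live = {path: cap for path, cap in capacity_gbps.items() if cap > 0}
    if not live:
        raise RuntimeError("no surviving path")
    total = sum(live.values())
    # Deterministic hash scaled to a point in [0, total).
    point = zlib.crc32(flow_id.encode("utf-8")) / 2**32 * total
    cumulative = 0.0
    chosen = None
    for path in sorted(live):
        chosen = path
        cumulative += live[path]
        if point < cumulative:
            break
    return chosen
```

With `{"east": 160.0, "west": 160.0}` flows split across both paths in proportion to capacity. If the east path degrades to 120 Gbit/sec (say, after some of its wavelengths are lost), its share drops to 120/280; if it fails outright (capacity 0), every flow lands on the west path. Both paths carry traffic in normal operation, so no capacity sits idle waiting for a failure.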

ODNH access/metro devices. Until now, aggregation of customer traffic has occurred in the central office or terminal site, resulting in long and inefficient loops with multiple parallel service-specific or limited connections. The availability of special services such as Gigabit Ethernet, dedicated wavelength services, or virtual private networks is usually very limited, and delivery to customers is often slow. Aggregation equipment is not integrated with access customer-premises equipment and may not even interoperate with it.

The Optical Service Line Access Multiplexer (OSLAM, Type 2 in the ODNH hierarchy) is a network element that supports efficient aggregation of data, TDM, and wavelength services at the central office onto faster backbone pipes. Located locally or remotely from terminals, the OSLAM supports Internet Protocol aggregation of up to 10-Gbit/sec Ethernet and hubbing for TDM and wavelength-based networking.

Currently, small and medium-sized businesses require multiple parallel facilities to support different communication needs. Both copper and fiber, or multiple fibers, may be involved. There may be several lines for videoconferencing, a T1 for local voice, another T1 or DSL for data, and still other private lines for intranet functions. Services may come from several providers, and the capacity available in the links cannot be shared across the services.

The Optical Service Line Terminator (OSLT, Type 1 in the ODNH hierarchy) is a customer-premises equipment platform that cost-effectively collapses legacy, Gigabit Ethernet, and OC-x facilities, multiplexing them onto a single fiber. It provides both large bandwidth and class of service (CoS)/quality of service (QoS) for data services, along with support for legacy services and equipment. The OSLT also provides flexible growth and carrier-class QoS support with sufficient bandwidth at a reasonable cost, permitting growth in both pure capacity and newer broadband services.

While the OSLAM acts as a service-management point for a particular customer of the service provider, supporting individual Gigabit Ethernet interfaces, the Optical Data Distribution Node (ODDN, Type 3 in the ODNH architecture) has interfaces to metro rings. Its much greater capacity and higher port speeds provide traffic aggregation across the entire metro network. Installed in the central office, the ODDN interfaces to the OSLAM, collects and distributes traffic between the access and distribution portions of the network, and provides provisioning and bandwidth-management services. It then hands off traffic to the Type 4 core device or other networking equipment.

It is essential for the Type 3 device to be "Superchannel-capable," so that as traffic levels grow on the access/metro side of the network, the metro-core ring can utilize the optical-channel concatenation framing developed for the Type 4 and 5 devices.

The infrastructure created by the five ODNH device types described above is designed to create high-margin revenue opportunities for service providers. Service creation, resource allocation, fairness assurance, and network optimization are handled by the Optical Data Service Manager (ODSM), a management-control system that comprises a rich set of service and network control software. Intelligent management of a suite of differentiated transport and packet-based service platforms leads to the creation and high-velocity delivery of services.

The ODSM is constructed from a refined set of functional blocks whose core is the ability to control the network topology. The platform can simultaneously support point-to-point, point-to-multipoint, and multipoint-to-multipoint implementations, accommodating carriers' frequent desire for a variety of topological solutions.

The ODSM manages the entire topology and the associated control plane. Operation is simplified through the elimination of Layer 2 segment management; network-level connections are enabled, collapsing Layer 2/Layer 3 manual management complexity and simplifying the control and management planes. Unlike current solutions, where services, network management, control, data routing, link management, and transport are independent, the ODSM network topology control integrates all of these functions.

The desired level of service reliability is selected by choosing from various topologies. The simplest topology provides connectivity over a single physical connection, a solution that is efficient and economical but provides relatively poor reliability due to single points of failure. More sophisticated topologies, such as dual homing from the Type 1 subscriber units to different Type 2s, provide significant improvements in reliability. Throughout the network, the control of both physical and logical path diversity allows the service provider to efficiently create the desired level of service reliability.
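The reliability gain from dual homing can be estimated with the usual series/parallel availability formulas. The sketch below assumes that failures are independent, which is only reasonable when the two paths are physically diverse; the availability figures in the usage note are illustrative, not measurements from any ODNH deployment, and the function names are hypothetical.

```python
def series_availability(*components: float) -> float:
    """Availability of elements that must all work (a single chain)."""
    result = 1.0
    for availability in components:
        result *= availability
    return result


def parallel_availability(*paths: float) -> float:
    """Availability when any one of several independent paths suffices."""
    all_down = 1.0
    for availability in paths:
        all_down *= 1.0 - availability
    return 1.0 - all_down
```

If a single access path (Type 1 unit to one Type 2) were available 99.9% of the time, `parallel_availability(0.999, 0.999)` gives roughly 99.9999% for a dual-homed subscriber, cutting expected downtime by about three orders of magnitude under the independence assumption.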

Within each of the customer-premises equipment connections, service-quality constructs are added based on customer parameters, including service classification, bandwidth traffic rate policing, and other QoS factors, all selected at the time of service creation. The ability to create the appropriate QoS is one of the key features to provide service differentiation.
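Traffic-rate policing is commonly implemented with a token bucket, shown here as a minimal sketch. The article does not say which policing algorithm the ODNH devices use, so the token bucket is an assumption chosen because it is a widely used technique; the class name and parameters are hypothetical. Time is passed in explicitly so that the behavior is deterministic.

```python
class TokenBucketPolicer:
    """Single-rate policer: packets conform while tokens are available."""

    def __init__(self, rate_bps: float, burst_bytes: float) -> None:
        self.rate_bytes_per_sec = rate_bps / 8.0
        self.burst_bytes = burst_bytes
        self.tokens = burst_bytes  # the bucket starts full
        self.last_time = 0.0

    def allow(self, size_bytes: int, now: float) -> bool:
        """Return True if a packet of size_bytes conforms at time `now`."""
        elapsed = now - self.last_time
        self.last_time = now
        # Refill for the elapsed time, capped at the configured burst size.
        self.tokens = min(self.burst_bytes,
                          self.tokens + elapsed * self.rate_bytes_per_sec)
        if size_bytes <= self.tokens:
            self.tokens -= size_bytes
            return True
        return False  # out of profile: drop or mark, depending on policy
```

For example, with `TokenBucketPolicer(rate_bps=8_000, burst_bytes=1500)` a 1,500-byte packet at time 0 conforms, a second one at 0.5 sec does not (only 500 bytes of tokens have accumulated), and a third at 1.5 sec conforms again. The rate and burst would be among the customer parameters chosen at service creation.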

Service manageability is also critical. The ODSM's high level of integration allows for control over every aspect of managing the network (services, links, and network elements), allowing network operations to be unified, centralized, and simplified. Service manageability includes several tasks. Policy management provides the ability to provision service-level specifications. Service-assurance features provide traffic-rate policing, per-flow-based counters, reporting, and network availability. Customer network-management tools provide multitier support, service provisioning, service assurance, fault management, and access control. Management tools monitor traffic; perform characterization functions, network optimization, and modification; and move traffic to new channels.

Data service flexibility is maximized in terms of both service format (light path, tunable TDM, and data can all be offered simultaneously) and delivery (services can be offered over optics, and a single physical port can support multiple virtual private networks, each sized independently).

Service security features are integrated into the management and control system, with usage roles defined for each user; user information is automatically replicated across all of the network elements. The network supports challenge/response authentication mechanisms for individual end users, third-party security servers, and differentiated levels of resource control for Internet infrastructure providers, virtual service providers, and end users.

As providers add services to their network, traffic volumes increase and links may fill to capacity. Real-time traffic statistics are collected and analyzed to suggest changes for traffic flow optimization.

These service creation features allow for the selection of CoS, multiple drop priorities, multiple topologies, pipe-and-hose service-level agreement (SLA) models, and protection. Resource allocation features allow for SLA specification, connection admission, over/undersubscription, bandwidth resizing, and traffic engineering. Fairness assurance features include differentiated services and multifield classification, ingress policing and egress shaping, advanced queuing and scheduling, and service assurance. Network optimization features allow deployment of multiple services on a single interface and per-flow performance guarantees.
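Connection admission with oversubscription can be sketched as a simple bookkeeping check. The example below is an assumption about how such a check might look, not a description of the ODSM: a link accepts a new SLA as long as the sum of committed rates stays within the link capacity multiplied by an operator-chosen oversubscription factor. The `Link` class and its fields are hypothetical.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Link:
    capacity_mbps: float
    oversubscription: float = 1.0  # 1.0 = none; below 1.0 = undersubscribed
    committed_mbps: List[float] = field(default_factory=list)

    def admit(self, requested_mbps: float) -> bool:
        """Admit the request if total commitments stay within the limit."""
        limit = self.capacity_mbps * self.oversubscription
        if sum(self.committed_mbps) + requested_mbps <= limit:
            self.committed_mbps.append(requested_mbps)
            return True
        return False
```

A 1,000-Mbit/sec link with `oversubscription=2.0` would accept commitments of 1,200 and 800 Mbit/sec and then refuse any further request. A factor below 1.0 models undersubscription, reserving headroom for the most sensitive services.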

William Szeto is founder and vice president, network technology and architecture, at Iris Labs Inc. (Plano, TX).