Fiber-optic Ethernet in the local loop

After proving its viability at the core, Gigabit Ethernet is poised to enter carriers' local distribution loops.

RUSS SHARER, Occam Networks Inc.

Gigabit Ethernet over fiber will become the standard for local distribution-loop deployment in five to 10 years. This migration to fiber-optic Ethernet is the result of three powerful forces: increasingly heterogeneous traffic as the access network moves to a converged network environment; the economic benefits of greater scalability and flexibility in supporting growing numbers of users who demand higher bandwidth; and technology extensions that put Gigabit Ethernet on par with ATM.

A networking protocol deployed worldwide in enterprise networks for nearly two decades, Ethernet is easy to install and manage and offers unequalled cost benefits. As such, the technology migrated from enterprise networks to carriers' backbone networks.

Gigabit Ethernet is deployed in the core as a result of two powerful trends: data makes up an increasing percentage of overall network traffic, and the capital and operating costs of Gigabit Ethernet technology are significantly lower than those of other network technologies.

Most industry analysts agree that three factors affect today's telecommunications infrastructure: data surpassing voice traffic in 1999 or 2000; voice traffic growing in the range of 6%-7% while data traffic doubles annually; and data traffic being almost exclusively IP-based, the majority of it originating on Ethernet LANs.

Assuming these growth rates continue on similar trajectories, more than 80% of public-network traffic will be data by 2005. And because this traffic originates in the local distribution loop, a similar statistical analysis is true for the access network.
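The projection can be checked with a few lines of arithmetic. The sketch below is only an illustration: it assumes voice and data volumes were equal in 2000, takes 6.5% as the midpoint of the quoted voice growth range, and uses a hypothetical helper name, `data_share`.

```python
def data_share(years, voice_growth=0.065, data_growth=1.0):
    """Fraction of total traffic that is data after `years` years.

    Assumes voice and data start at parity (an assumption, not a measured
    figure) and that both growth rates stay constant.
    """
    voice = (1 + voice_growth) ** years   # voice grows 6%-7% a year
    data = (1 + data_growth) ** years     # data doubles every year
    return data / (voice + data)


for year in range(2000, 2006):
    print(year, f"{data_share(year - 2000):.1%}")
```

Under these assumptions the data share passes 80% within three years of parity, so the 2005 figure quoted above is conservative.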

The transition from voice delivered over circuits to derived voice delivered over packets is another key development. Whether used for pair gain solutions or as a replacement for more expensive legacy technologies, packet voice is beginning to take traffic volume from circuit voice. Today, it makes sense to examine packet-oriented protocols when evaluating next-generation access network technologies.

The days of separate voice and data access networks are coming to an end. Whether separate physically or logically, two access networks cost more to operate than one. To reduce the expense of operating separate voice and data networks, a common infrastructure from the edge of the carrier network to the core must be created. Gigabit Ethernet, with its ability to deliver a mix of sporadic broadband data and its capacity to carry time-sensitive data, offers carriers a major advantage (see Figure 1).

In late 2000, The Dell'Oro Group and The Yankee Group analyzed Gigabit Ethernet in the telecommunications environment and determined that services delivered over Gigabit Ethernet cost one-fifth to one-twelfth as much annually as services delivered using traditional technologies. This analysis takes into consideration variances in capital and operational costs. Operational staff that understands Ethernet is readily available, trained, and ready to deploy it. Even the initial configuration of Gigabit Ethernet is significantly faster than that of traditional protocols.

Access networks are about to face previously unseen demands. Rather than legacy DS-0 (64-kbit/sec) traffic growth, broadband to the home and business will cause traffic volume to swell in the next 10 years. Since DSL and cable will demand connections with 100 times the bandwidth of a standard telephone call, access networks will have to scale quickly. This new traffic mix, with a high percentage of data, will also be less predictable and more "bursty," a normal issue with data traffic. While these new network characteristics are problematic for existing access network technologies, they play to the strengths of Gigabit Ethernet.

The traditional access solution is ATM over SONET or directly over optical, such as ATM passive optical networking. Demand for ATM grew primarily in WANs, where its circuit emulation capabilities fit well with existing circuit-based voice traffic. It then migrated to the access network.

Historically, ATM has had three key advantages over packet-oriented protocols such as Gigabit Ethernet. The debate between the two alternatives usually boils down to quality of service (QoS), constant bit-rate (CBR) support, and resiliency.

QoS is inherent in ATM, but not in Ethernet. Standards groups have been working on approaches to providing QoS over Ethernet. These approaches include resource reservation protocol and various class-of-service implementations, which are now broadly deployed. These techniques enable both the reservation of bandwidth, which provides a more predictable transport time, and traffic prioritization based on the type of data transmitted. Together, these techniques address the ability of Gigabit Ethernet to deliver real-time traffic by scheduling resources across the network, thus providing end-to-end congestion and flow control. Multiprotocol Label Switching is also being reviewed for use in Gigabit Ethernet networks, although Gigabit Ethernet will not be its first deployment area.
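Traffic prioritization of the kind described above can be pictured as a scheduler that always serves the highest class first. The following is a minimal sketch of strict-priority queuing, which is only one of several possible scheduling disciplines; the class name `PriorityScheduler` and the priority values are hypothetical and not taken from any standard.

```python
import heapq
import itertools


class PriorityScheduler:
    """Strict-priority frame queue: higher priority always leaves first."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()  # preserves arrival order within a class

    def enqueue(self, frame, priority):
        # heapq is a min-heap, so the priority is negated to pop the highest first.
        heapq.heappush(self._heap, (-priority, next(self._seq), frame))

    def dequeue(self):
        """Return the next frame to transmit, or None if the queue is empty."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


sched = PriorityScheduler()
sched.enqueue("data-1", priority=0)
sched.enqueue("voice-1", priority=5)
sched.enqueue("data-2", priority=0)
sched.enqueue("voice-2", priority=5)
order = [sched.dequeue() for _ in range(4)]
```

Here the two voice frames are transmitted before either data frame, even though a data frame arrived first.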

A Gigabit Ethernet feature known as rate limiting enables a carrier to set specific throughput limits on a port-by-port basis and, therefore, on a subscriber-by-subscriber basis. That enables the delivery of a variety of services over a common fiber at different price/performance points. Rate limiting, which addresses bit-rate issues, is easily adjusted (up or down) per subscriber, enabling user-adjustable provisioning of the service.
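The article does not say how equipment implements rate limiting. One common approach is a token bucket, sketched below purely as an illustration; the class name, the parameters, and the example rates are all assumptions.

```python
class TokenBucket:
    """Token-bucket limiter for one subscriber port (illustrative only)."""

    def __init__(self, rate_bps, burst_bytes):
        self.rate_bps = rate_bps          # provisioned rate in bits per second
        self.capacity = burst_bytes       # largest burst admitted at once
        self.tokens = float(burst_bytes)  # bucket starts full
        self.last = 0.0                   # time of the previous refill, in seconds

    def allow(self, frame_bytes, now):
        """Return True if a frame of `frame_bytes` may be forwarded at time `now`."""
        elapsed = now - self.last
        self.last = now
        # Credit the bytes earned since the last call, capped at the bucket size.
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate_bps / 8)
        if frame_bytes <= self.tokens:
            self.tokens -= frame_bytes
            return True
        return False


# One limiter per port; moving a subscriber to another tier is a one-line change.
ports = {1: TokenBucket(rate_bps=8_000_000, burst_bytes=3000)}
ports[1].rate_bps = 16_000_000
```

Keeping one limiter per port is what makes per-subscriber price/performance tiers possible on a shared fiber.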

There is also work underway to overcome Gigabit Ethernet's perceived lack of resiliency. In December 2000, the IEEE approved P802.17, a new standards development project within the IEEE 802 LAN/MAN standards committee, to create a media access control (MAC) layer standard for resilient packet rings (RPR). The IEEE 802.17 Resilient Packet Ring Working Group (RPRWG) is working to define an RPR access protocol for use in LANs, WANs, and MANs to transfer data packets at rates scalable to many gigabits per second. The new standard will use existing physical layer (PHY) specifications and develop new PHYs where appropriate.

Today, fiber-optic rings are widely deployed in MANs and WANs, implementing protocols such as SONET/SDH. These protocols are neither optimized for, nor scalable to, the demands of packet networks. The goal of the RPRWG is to define a protocol that will provide Gigabit Ethernet with SONET-like failover times of less than 50 msec, which will enable Gigabit Ethernet to carry interrupt-sensitive traffic such as voice with carrier-grade reliability.

What is the best application for each technology today? The enterprise and residential desktop will remain Ethernet. In the core of the network where high capacity is required, 10-Gigabit Ethernet will play the dominant role. ATM will continue to be used in bandwidth-limited links, such as those attaching enterprise networks to the core telecommunications network. However, the economic and technical realities of fiber pushed closer to the user will dictate that Ethernet ultimately dominates everywhere.

To successfully deploy Gigabit Ethernet in the local access network today requires traffic engineering. By definition, access networks are closed networks. All traffic that enters the network comes from a given number of subscriber end-points. Using available Gigabit Ethernet tools, the local distribution loop can be modeled, sized, and engineered to ensure voice without delay.
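Because the subscriber count is known, a first sizing pass can be very simple. The sketch below is a worst-case check under stated assumptions: every subscriber is on a call at once, each call consumes a fixed packetized rate, and only part of the link is set aside for voice. The function names, the 100-kbit/sec per-call figure, and the 50% voice share are hypothetical, not values from the article.

```python
def worst_case_voice_bps(subscribers, voice_bps_per_sub):
    """Voice load if every subscriber is on a call at the same time."""
    return subscribers * voice_bps_per_sub


def voice_fits(subscribers, voice_bps_per_sub,
               link_bps=1_000_000_000, voice_share=0.5):
    """True if worst-case voice load fits in the voice share of the link."""
    return worst_case_voice_bps(subscribers, voice_bps_per_sub) <= link_bps * voice_share


def max_subscribers(voice_bps_per_sub, link_bps=1_000_000_000, voice_share=0.5):
    """Largest subscriber count that still passes the worst-case check."""
    return int(link_bps * voice_share // voice_bps_per_sub)


print(voice_fits(2_000, 100_000))
print(max_subscribers(100_000))
```

A real engineering exercise would also account for call statistics, packet overhead, and data bursts; this check only bounds the voice load.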

New access equipment that enables Gigabit Ethernet feeds from both the customer premises and the remote terminal to the central office is quickly becoming available. This equipment (integrated access devices on the premises and broadband loop carriers in remote terminals) aggregates the voice and data traffic and, in many cases, converts the circuit-based voice to derived or packetized voice (see Figure 2). Testing shows that with this type of equipment, users cannot tell the difference between circuit and packet-oriented voice in the local distribution-loop network.

In March 1996, the IEEE announced the formation of the 802.3z Gigabit Ethernet standards project. In May of that year, 11 companies formed the Gigabit Ethernet Alliance to promote interoperability and development of the standard. A draft 802.3z standard was issued by the IEEE in July 1997, with the final standard ratified approximately a year later.

The PHY of Gigabit Ethernet uses a mixture of proven technologies from the original Ethernet and the ANSI X3T11 Fibre Channel specification. Gigabit Ethernet supports four physical media types. Those media are defined in 802.3z (1000Base-X) and 802.3ab (1000Base-T). In the access network, fiber is the medium of choice.

Today, an Ethernet network is typically wired in a star configuration with a switch at the center. That enables a choice of either copper or fiber and the delivery of the total available bandwidth (10, 100, or 1,000 Mbits/sec) to each node on the network. Inherently, switches provide the ability to avoid data collisions that were part of the original carrier sense multiple access/collision detection (CSMA/CD) definition. If two switch ports attempt to transmit data to the same port, the data enters a queue and is then transmitted serially. Switches also support data transmission in both half- and full-duplex mode. A full-duplex Fast Ethernet connection transmits data at rates of 100 Mbits/sec in each direction to provide a total data transmission rate of 200 Mbits/sec.

The 1000Base-X standard is based on the Fibre Channel PHY. Fibre Channel is an interconnection technology for connecting workstations, supercomputers, storage devices, and peripherals. It has a four-layer architecture. The lowest two layers, FC-0 (interface and media) and FC-1 (encode/decode), are used in Gigabit Ethernet. Because Fibre Channel is a proven technology, reusing its PHY greatly reduced the development time of the Gigabit Ethernet standard. The 1000Base-X standard supports three types of media:

- 1000Base-SX (short-wavelength) 850-nm laser on multimode fiber.

- 1000Base-LX (long-wavelength) 1,300-nm laser on singlemode and multimode fiber.

- 1000Base-CX short-haul copper "twin ax" shielded twisted pair cable.

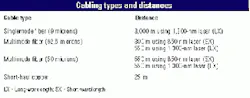

The Table on page 100 lists the cabling distances the standard supports.

The MAC layer of Gigabit Ethernet uses the same CSMA/CD protocol as Ethernet. The maximum length of a cable segment used to connect stations was traditionally limited by the CSMA/CD protocol. If two stations simultaneously detected an idle medium and started transmitting, a collision occurred. Switches and fiber remove these limitations.

Ethernet has a minimum frame size of 64 bytes to prevent a station from completing the transmission of a frame before the first bit has reached the far end of the cable, where it may collide with another frame, and before the resulting collision signal has returned. Therefore, the minimum time to detect a collision is the time it takes for the signal to propagate from one end of the cable to the other and back. This minimum time is called the slot time. A more useful metric is slot size: the number of bytes that can be transmitted in one slot time. In Ethernet, the slot size is 64 bytes, the minimum frame length.
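The relationship between slot size and slot time is simple arithmetic: the slot time is the slot size in bits divided by the bit rate. A short sketch follows, with a hypothetical helper name `slot_time_us`.

```python
def slot_time_us(bit_rate_bps, slot_bytes=64):
    """Time, in microseconds, needed to transmit one slot at the given bit rate."""
    return slot_bytes * 8 / bit_rate_bps * 1e6


for name, rate in [("Ethernet", 10e6), ("Fast Ethernet", 100e6), ("Gigabit Ethernet", 1e9)]:
    print(f"{name}: {slot_time_us(rate):.3f} microseconds for a 64-byte slot")
```

A 64-byte slot lasts 51.2 microseconds at 10 Mbits/sec but only about half a microsecond at 1,000 Mbits/sec, which is why the collision window shrinks as the bit rate rises.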

The original maximum cable length permitted in Ethernet was 2.5 km with a maximum of four repeaters on any path. As the bit rate increases, the sender transmits the frame faster. As a result, if the same frame sizes and cable lengths are maintained, then a station may transmit a frame too fast and not detect a collision at the other end of the cable. Reliably detecting collisions requires one of two things, or both: keeping the maximum cable length and increasing the slot time (and therefore the minimum frame size), or keeping the slot time the same and decreasing the maximum cable length. Fast Ethernet reduces the maximum cable length to only 100 m but leaves the minimum frame size and slot time intact.

Gigabit Ethernet maintains the minimum and maximum frame sizes of Ethernet. Because Gigabit Ethernet is 10 times faster than Fast Ethernet, keeping the same slot size requires the maximum cable length be reduced to approximately 10 m, which is not very useful. Thus, Gigabit Ethernet uses a bigger slot size of 512 bytes. To maintain compatibility with Ethernet, the minimum frame size is not increased; instead, the "carrier event" is extended. If the frame is shorter than 512 bytes, it is padded with extension symbols. These are special symbols that cannot occur in the payload. This process is called carrier extension (see Figure 3).
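The padding rule can be written down directly. The sketch below uses the 64-byte minimum frame and 512-byte slot size given in the text; the function names are hypothetical, and preamble and inter-packet gap are ignored for simplicity.

```python
MIN_FRAME_BYTES = 64      # minimum Ethernet frame size
GIGABIT_SLOT_BYTES = 512  # Gigabit Ethernet slot size


def carrier_extension_bytes(frame_len, slot=GIGABIT_SLOT_BYTES):
    """Number of extension symbols appended so the carrier event fills one slot."""
    if frame_len < MIN_FRAME_BYTES:
        raise ValueError("frame is shorter than the Ethernet minimum")
    return max(0, slot - frame_len)


def payload_fraction(frame_len):
    """Share of the carrier event that is real frame data rather than extension."""
    return frame_len / (frame_len + carrier_extension_bytes(frame_len))


print(carrier_extension_bytes(64), payload_fraction(64))
print(carrier_extension_bytes(1518), payload_fraction(1518))
```

A minimum-size frame needs 448 extension symbols, so only one-eighth of its time on the wire carries frame data, while frames of 512 bytes or more are not extended at all.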

For carrier-extended frames, the non-data extension symbols are included in the collision window. That is, a collision anywhere in the extended frame, including the extension symbols, causes the entire frame to be dropped. However, the frame check sequence (FCS) is calculated only on the original frame (without extension symbols). The extension symbols are removed before the FCS is calculated and checked by the receiver, so the logical link control layer is not aware of the carrier extension.

Packet bursting is carrier extension plus a burst of packets. When a station has a number of packets to transmit, the first packet is padded to the slot time using carrier extension, if necessary. Subsequent packets are transmitted back-to-back, with the minimum inter-packet gap, until a burst timer of 1,500 bytes expires. Packet bursting substantially increases Gigabit Ethernet data throughput.
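The throughput gain from bursting can be estimated with a simplified model. The sketch below assumes a 12-byte inter-packet gap, ignores the preamble, and treats the 1,500-byte burst timer quoted in the text as an adjustable parameter; the function name `burst` is hypothetical.

```python
def burst(frame_len, queued, burst_limit=1500, slot=512, ifg=12):
    """Simulate one burst and return (frames_sent, byte_times_used).

    The first frame is carrier-extended to the slot size if it is shorter.
    Further frames follow back-to-back, each preceded by an inter-packet
    gap, and a new frame may start only while the burst timer is running.
    """
    if queued < 1:
        return 0, 0
    used = max(frame_len, slot)   # first frame, extended if necessary
    sent = 1
    while sent < queued and used < burst_limit:
        used += ifg + frame_len   # gap plus the next frame
        sent += 1
    return sent, used


single = burst(64, queued=1)      # carrier extension only
bursty = burst(64, queued=100)    # carrier extension plus bursting
print(single, 64 * single[0] / single[1])
print(bursty, 64 * bursty[0] / bursty[1])
```

In this model a lone 64-byte frame uses 12.5% of its time on the wire for frame data, while a burst of 64-byte frames reaches roughly 60%.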

Any organization planning its architecture for next-generation access networks should consider Gigabit Ethernet.

Russ Sharer is vice president of marketing at Occam Networks Inc. (Santa Barbara, CA). He can be reached via the company's Website, www.occamnetworks.com.