Controlling opex, availability in packet networks

by Sam Lisle

Service providers are offering new high-bandwidth, high-quality, packet-based services. As the network delivers more bandwidth and these new services become increasingly sensitive to availability and performance, the fault tolerance and management simplicity of the metro aggregation and transport infrastructure become critical to enabling service providers to control operational costs while delivering the required service performance.

Optical networking systems, the building blocks of metro aggregation and transport infrastructures, have sophisticated fault-tolerance capabilities and enable service providers to build manageable, scalable, and profitable networks. As traditional optical elements evolve toward packet optical networking platforms (packet ONPs) that integrate reconfigurable optical add/drop multiplexing (ROADM), SONET, and packet processing capabilities, these new elements must apply successful optical fault-tolerant approaches across a broader range of technologies.

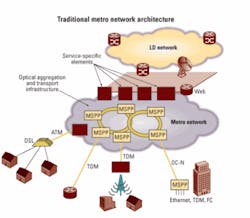

Figure 1 shows a classical metro architecture where a distributed aggregation and transport infrastructure connects widely dispersed end users to centralized service elements.

The service elements (Frame Relay and ATM switches, TDM voice and private-line platforms, and Internet access routers) are higher-cost, feature-rich elements that provide unique service functionality. Since the elements are few in number and centralized in location, more complex element operations can be tolerated.

By contrast, the SONET multiservice provisioning platform (MSPP) infrastructure elements provide geographically distributed, protected connectivity between end users and service elements for best-effort services and services requiring five-nines availability. These MSPPs are deployed in very large numbers, which means these elements must be low cost and simple to manage. To meet five-nines service availability requirements, the individual elements of this infrastructure are themselves five-nines elements able to endure hardware and software faults and undergo maintenance activities with no impact to availability.
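"Five nines" means 99.999% availability. The short calculation below is an illustration added here, not a figure from the article: it converts that target into a yearly downtime budget and shows why the infrastructure elements themselves must be five-nines, since the availabilities of elements in series multiply. The six-element path and the assumption of independent failures are hypothetical simplifications.

```python
import math
from typing import Iterable

# Minutes in an average year (365.25 days).
MINUTES_PER_YEAR = 365.25 * 24 * 60

def downtime_minutes_per_year(availability: float) -> float:
    """Expected unavailable minutes per year for an availability between 0 and 1."""
    return (1.0 - availability) * MINUTES_PER_YEAR

def series_availability(availabilities: Iterable[float]) -> float:
    """End-to-end availability of elements in series, assuming independent
    failures: the path is up only when every element on it is up."""
    return math.prod(availabilities)

five_nines = 0.99999
print(f"{downtime_minutes_per_year(five_nines):.2f} min/yr")  # about 5.26 min/yr

# Hypothetical path crossing six five-nines elements.
path = series_availability([five_nines] * 6)
print(f"{downtime_minutes_per_year(path):.1f} min/yr")  # about 31.6 min/yr
```

Even when every element meets five nines, a path through several of them accumulates downtime, which is why the per-element target cannot be relaxed.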

To provide distributed, low-cost aggregation and transport in the new packet-centric environment, optical elements are evolving to incorporate a significantly higher degree of technology integration and sophistication in packet processing. This new class of optical element, known as the packet ONP, provides the distributed aggregation and transport that optical elements have always delivered but with the following innovations:

- Chassis-level integration of ROADM, SONET, and Ethernet made possible by a universal TDM and packet-based switching fabric.

- Connection-oriented packet aggregation and multiplexing capabilities that provide network resource reservations for Ethernet connections, made more scalable by an integrated control plane.

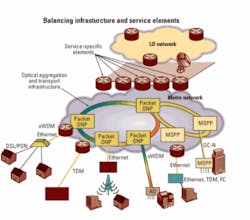

As Fig. 2 shows, the next-generation metro architecture resembles the classical architecture: service elements and infrastructure elements still balance one another. The service elements (routers) continue to be feature rich, specialized for each service, higher cost, and centrally deployed; greater operational complexity can be tolerated, and duplicate equipment is deployed to achieve fault tolerance. The packet ONP infrastructure elements continue to be general purpose and widely distributed with a lower cost per bit, and, rather than relying on duplicate equipment, they are themselves five-nines elements that are simple to manage.

This natural evolution of optical elements enables service providers to enjoy the same manageable scalability benefits that optical networking has always provided, only now service providers can:

- Provide a higher degree of service availability and quality of service (QoS) for Ethernet circuits.

- Continue to support the still-growing quantity of TDM-based services.

- Economically scale both TDM and packet connections with modular ROADM/DWDM capabilities.

- Deploy this network as an interoperable behavioral extension of the existing optical network.

It is vital that these packet ONP elements deliver management simplicity by building on the fault-tolerant capabilities of previous optical equipment. They also must remain at the forefront of the latest technology advances in circuit, packet, and photonic layer fault tolerance.

Network element fault tolerance is resistance to downtime caused by hardware and software faults in the element itself, as well as to downtime inadvertently caused by human intervention over the life of the network or by an element responding poorly to network conditions or events.

Because the aggregation and transport infrastructure is highly geographically distributed and contains many elements, fault-tolerant requirements become especially stringent. For a small number of service elements, it may be possible to develop specialized procedures or deploy completely redundant sets of equipment. But for a highly distributed infrastructure deployment, a simpler, more scalable approach is vital.

The following paragraphs highlight the attributes of fault-tolerant aggregation and transport elements and indicate specific requirements and emerging technology advances.

Software upgrades. As general-purpose investments, aggregation and transport infrastructures have tremendous longevity in a metro network. The same deployment can provide aggregation and transport for several generations of services supported by several generations of service elements. This longevity and the sheer quantity of elements drive several requirements and highlight some emerging capabilities:

- In-service software upgrades: Network managers need to be able to upgrade a node’s software and firmware or an entire network without taking the node or network out of service. This requirement also applies to selectively upgrading specific software and firmware modules within a node.

- Network stability: Upgrade of system software should not cause rerouting or protection switching of traffic. The system should provide operations visibility for as long as possible during an upgrade.

- Backward compatibility: It should be possible to upgrade portions of the network while allowing other portions of the network to run on previous software without losing interoperability or feature capability.

- Upgrade timing and fallback: Operators must be able to control the timing of upgrades across a large number of nodes and to revert in service to the previous software version should something go wrong.
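The timing and fallback requirements can be illustrated with a minimal rolling-upgrade loop. This is a sketch under stated assumptions, not any vendor's upgrade procedure: `Node`, `rolling_upgrade`, the batch size, and the `healthy` check are all hypothetical, and changing a version string stands in for the real in-service upgrade and revert operations.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple

@dataclass
class Node:  # hypothetical network element record
    name: str
    version: str

def rolling_upgrade(
    nodes: List[Node],
    new_version: str,
    batch_size: int,
    healthy: Callable[[Node], bool],
) -> Tuple[List[str], List[str]]:
    """Upgrade nodes batch by batch (hypothetical controller).

    If any node in a batch fails its post-upgrade check, the whole batch is
    reverted to its previous version and the rollout halts. Returns the names
    of nodes left on the new version and the names of nodes that were reverted.
    """
    upgraded: List[str] = []
    for start in range(0, len(nodes), batch_size):
        batch = nodes[start:start + batch_size]
        previous = {n.name: n.version for n in batch}
        for n in batch:
            n.version = new_version           # stand-in for an in-service upgrade
        if not all(healthy(n) for n in batch):
            for n in batch:
                n.version = previous[n.name]  # stand-in for an in-service fallback
            return upgraded, [n.name for n in batch]
        upgraded.extend(n.name for n in batch)
    return upgraded, []

# Usage: five nodes, batches of two, and a simulated failure on ne3.
nodes = [Node(f"ne{i}", "5.0") for i in range(5)]
done, reverted = rolling_upgrade(nodes, "5.1", 2, healthy=lambda n: n.name != "ne3")
print(done, reverted)              # ['ne0', 'ne1'] ['ne2', 'ne3']
print([n.version for n in nodes])  # ['5.1', '5.1', '5.0', '5.0', '5.0']
```

A halted rollout leaves the network running two software versions side by side, which is exactly the situation the backward-compatibility requirement is meant to make safe.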

In addition to these capabilities, packet ONPs are employing warm-standby management complex processors. By using warm-standby management processors that are already booted and have access to a portion of the network element database, loss of operations visibility during a software upgrade can be reduced by a factor of 10 over previous approaches.

Graceful restart extensions for IP-, MPLS-, and GMPLS-based control-plane protocols enable packet ONPs to maintain uninterrupted packet service during software upgrades. With graceful restart the data plane on a network element remains operational while the control-plane protocols reset and recover their state machines from their peers.
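The core idea of graceful restart, a data plane that keeps forwarding on retained state while the control plane relearns that state from its peers, can be sketched as below. This is a simplified model written for illustration, not the behavior of any specific protocol extension: the class name, the keys, and the single "recovery complete" step (which real protocols tie to timers and end-of-update markers) are assumptions.

```python
from typing import Dict, Optional, Set

class GracefulRestartFib:
    """Hypothetical forwarding table that keeps forwarding across a control-plane restart."""

    def __init__(self) -> None:
        self.fib: Dict[str, str] = {}  # destination or label -> next hop (data plane)
        self.stale: Set[str] = set()

    def forward(self, key: str) -> Optional[str]:
        # The data plane consults the table whether or not the control plane is up.
        return self.fib.get(key)

    def control_plane_restart(self) -> None:
        # Keep every entry, but mark it stale until a peer confirms it.
        self.stale = set(self.fib)

    def relearn(self, key: str, next_hop: str) -> None:
        # State recovered from a peer refreshes (or replaces) the entry.
        self.fib[key] = next_hop
        self.stale.discard(key)

    def recovery_complete(self) -> None:
        # Entries no peer re-advertised are assumed obsolete and removed.
        for key in self.stale:
            del self.fib[key]
        self.stale.clear()

fib = GracefulRestartFib()
fib.relearn("10.1.0.0/16", "port-1")
fib.relearn("10.2.0.0/16", "port-2")
fib.control_plane_restart()
print(fib.forward("10.2.0.0/16"))  # port-2: still forwarding during the restart
fib.relearn("10.1.0.0/16", "port-1")
fib.recovery_complete()
print(sorted(fib.fib))             # ['10.1.0.0/16']
```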

Hardware replacements and configuration changes. The need to upgrade and replace hardware units, modify network element hardware configurations, and upgrade the capabilities of nodes drives important requirements and new innovations:

- Hitless fabric protection switches: Protection switching of centralized switching fabrics is vital, since centralized fabrics touch all the subwavelength traffic handled by the node. Packet ONPs enable manual switching of the fabric via software without interruption of any subwavelength traffic traversing the node. Automatic switching of fabrics without causing any hit on packet traffic also is provided.

- In-service interface card swapouts: The working card of a working/standby pair can be removed with a protection switch of less than 50 msec.

- Removable management complex: Removing and replacing all management complex hardware without affecting traffic is an important requirement.

- In-line-amplifier (ILA) to ROADM upgrades: The ability to add optical ROADM fabrics to ILA sites with no hit to wavelength traffic increases flexibility.
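The card-swapout behavior can be pictured with a simplified working/protect selector. This sketch is illustrative only: `ProtectionPair` and its methods are hypothetical, and the model ignores switching time, revertive operation, and command priorities. It shows the two paths the text implies: an operator can move traffic to the protect card before pulling the working card, and an unannounced removal triggers an automatic switch.

```python
from typing import Set

class ProtectionPair:
    """Hypothetical working/protect card pair with a traffic selector."""

    def __init__(self) -> None:
        self.failed: Set[str] = set()
        self.selected = "working"

    @staticmethod
    def _other(card: str) -> str:
        return "protect" if card == "working" else "working"

    def manual_switch(self, to: str) -> bool:
        # Operator moves traffic ahead of maintenance; refused if the target is failed.
        if to in self.failed:
            return False
        self.selected = to
        return True

    def signal_fail(self, card: str) -> None:
        # A pulled or failed card: switch away if it carried traffic and its mate is healthy.
        self.failed.add(card)
        if card == self.selected and self._other(card) not in self.failed:
            self.selected = self._other(card)

    def card_restored(self, card: str) -> None:
        self.failed.discard(card)

pair = ProtectionPair()
pair.signal_fail("working")           # working card pulled without warning
print(pair.selected)                  # protect
print(pair.manual_switch("working"))  # False: the working slot is still empty
pair.card_restored("working")
print(pair.manual_switch("working"))  # True
```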

Packet ONP systems can employ optical splitter designs to provide hitless ILA-to-ROADM configuration upgrades. For WDM-based infrastructures, it is often cost-effective to deploy a node as an ILA and then add a ROADM fabric later. At the time of upgrade, there may be dozens of working wavelengths passing through that ILA, making it desirable to insert a new fabric into that shelf without forcing even a protection switch of the traffic.

Network database backups. Since the aggregation and transport infrastructure supports all metro network services, a loss of database or provisioning information affects a large number of customers and potentially all services, therefore driving the following requirements:

- Multiple levels of database backup: The automatic on-element storage of more than one nonvolatile copy of the network element database and off-element storage and backup are important.

- Database integrity validation: Checksums can be used to distinguish clean database copies from corrupted ones.

- Manual database manipulation capabilities: These involve database transfer commands to manually move database copies from one location to another within a network element.
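Multiple backup levels and checksum validation work together: when a copy is needed, each stored copy is checked in order of preference and the first one that verifies is used. The sketch below assumes SHA-256 as the checksum (the article does not name an algorithm), and the location names and database contents are hypothetical.

```python
import hashlib
from typing import Any, Dict, List, Tuple

def checksum(data: bytes) -> str:
    # SHA-256 is an assumption; any checksum stored alongside the data works the same way.
    return hashlib.sha256(data).hexdigest()

def make_copy(data: bytes) -> Dict[str, Any]:
    """Store the database image together with the checksum computed at save time."""
    return {"data": data, "sha256": checksum(data)}

def is_valid(copy: Dict[str, Any]) -> bool:
    return checksum(copy["data"]) == copy["sha256"]

def restore(copies: List[Tuple[str, Dict[str, Any]]]) -> Tuple[str, bytes]:
    """Return (location, data) for the first copy, in order of preference, that verifies."""
    for location, copy in copies:
        if is_valid(copy):
            return location, copy["data"]
    raise RuntimeError("no valid database copy found")

db = b"crs sts1-1-1 <-> sts1-2-1\n"                     # hypothetical provisioning data
corrupted = {"data": db[:-4], "sha256": checksum(db)}  # simulated truncation on write
location, data = restore([
    ("nvm-primary", corrupted),                         # hypothetical location names
    ("nvm-secondary", make_copy(db)),
    ("off-element", make_copy(db)),
])
print(location)  # nvm-secondary
```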

Network management interface. Highly fault-tolerant network operation places an important requirement on the management interface. A “stateful” management interface enables the element to deny service-affecting management actions unless the network element is in the proper state.

Traditionally, optical networking gear has implemented a stateful management interface known as TL-1. TL-1 is stateful in that a command to add or delete a resource is only accepted if the network element is in the correct state or meets a set of conditions. This makes it difficult to inadvertently cause downtime through management actions. To add services, the operator enters commands in a rigid order and then removes services by a similar command sequence. There are no commands that simultaneously add and delete services.
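The stateful behavior described above can be sketched as a tiny state machine that refuses a delete unless the resource has first been taken out of service. The class, method names, and two-state model are simplified assumptions for illustration, not TL-1 syntax; only the COMPLD and DENY response strings echo TL-1 completion codes.

```python
from typing import Dict

class StatefulInterface:
    """Hypothetical management interface that checks state before service-affecting commands."""

    def __init__(self) -> None:
        self.state: Dict[str, str] = {}  # resource -> "IS" (in service) or "OOS"

    def enter(self, res: str) -> str:
        if res in self.state:
            return "DENY: resource already exists"
        self.state[res] = "IS"
        return "COMPLD"

    def remove(self, res: str) -> str:
        # Take an in-service resource out of service; deletion requires this first.
        if self.state.get(res) != "IS":
            return "DENY: resource not in service"
        self.state[res] = "OOS"
        return "COMPLD"

    def delete(self, res: str) -> str:
        if self.state.get(res) != "OOS":
            return "DENY: resource must be out of service"
        del self.state[res]
        return "COMPLD"

ui = StatefulInterface()
print(ui.enter("FAC-1-1"))   # COMPLD
print(ui.delete("FAC-1-1"))  # DENY: resource must be out of service
print(ui.remove("FAC-1-1"))  # COMPLD
print(ui.delete("FAC-1-1"))  # COMPLD
```

Because deleting takes two deliberate steps, a single mistyped command cannot tear down a live service.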

For packet services, packet ONPs leverage a transport command line interface (TCLI) approach that merges the stateful management behavior of TL-1 with the packet flexibility of a traditional (yet potentially error prone) command line interface.

Resiliency under optical layer phenomena. Metro networks use a flexible and dynamic photonic layer to provision wavelengths and make optimal use of electronic subwavelength switching resources. Infrastructure elements must react to changing photonic network conditions that include:

- Dynamic span loss adjustments: Amplifiers must react to sudden, significant increases in span loss on the incoming receive fiber and maintain the appropriate launch power downstream to avoid traffic loss.

- Dynamic transient response: Amplifiers also must adjust to sudden additions or removals of individual wavelengths or groups of wavelengths from an optical span.
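Both behaviors reduce to simple decibel arithmetic. The sketch below is an illustration with hypothetical power levels, and it ignores amplifier dynamics, gain tilt, and noise. A span-loss increase of a few dB lowers the amplifier input by the same amount, so gain must rise to match. When channels are dropped, an amplifier that holds its total output power constant pushes the surviving channels far above their target, while one that holds gain constant leaves them unchanged.

```python
import math

def total_power_dbm(per_channel_dbm: float, channels: int) -> float:
    """Aggregate power of N equal-power channels: P_ch + 10*log10(N)."""
    return per_channel_dbm + 10 * math.log10(channels)

def share_per_channel_dbm(total_dbm: float, channels: int) -> float:
    """Per-channel power when a total power is shared equally by N channels."""
    return total_dbm - 10 * math.log10(channels)

def required_gain_db(target_per_channel_dbm: float, input_per_channel_dbm: float) -> float:
    """Gain needed to restore the target per-channel launch power."""
    return target_per_channel_dbm - input_per_channel_dbm

# Span-loss change (hypothetical levels): 3 dB of extra fiber loss lowers the
# input from -20 to -23 dBm per channel, so the gain must rise from 20 to 23 dB.
print(required_gain_db(0.0, -20.0), required_gain_db(0.0, -23.0))  # 20.0 23.0

# Transient: 40 channels at 0 dBm each, then 36 are dropped at once.
total = total_power_dbm(0.0, 40)
constant_power = share_per_channel_dbm(total, 4)  # amplifier holding total output constant
print(f"{constant_power:.1f} dBm per surviving channel")  # 10.0 dBm, a 10 dB excursion
# An amplifier holding per-channel gain constant leaves the survivors at 0 dBm.
```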

Troubleshooting and fault sectionalization. Rapid troubleshooting is vital for reducing downtime. If a network failure or outage does occur, the duration of simplex time or downtime is directly proportional to the time required to troubleshoot the root defect. Several key requirements and emerging capabilities follow:

- Deterministic data plane: Packet connections must flow over a known route in the network and the route must not change on a packet-by-packet basis.

- Precision fault sectionalization: The operator must be able to determine on a hop-by-hop basis the origin of a connection defect.

- Multilayer fault sectionalization: The operator must be able to apply hop-by-hop fault sectionalization at photonic, circuit, Ethernet, and Ethernet transport tunnel layers.
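These three requirements combine naturally: because the route is deterministic, probing each hop in order locates the defect between the last hop that answers and the first that does not, and repeating the search from the lowest layer upward identifies the layer where the fault originates. In the sketch below the probe functions are hypothetical stand-ins for OAM tests such as loopbacks, and the node names are illustrative.

```python
from typing import Callable, List, Optional, Sequence, Tuple

Probe = Callable[[str], bool]  # hypothetical per-hop test: True if the hop answers

def sectionalize(path: Sequence[str], probe: Probe) -> Optional[Tuple[str, str]]:
    """Return (last_good, first_bad) along a fixed path, or None if every hop answers."""
    last_good = path[0]  # the local end is assumed reachable
    for hop in path[1:]:
        if not probe(hop):
            return last_good, hop
        last_good = hop
    return None

def sectionalize_layers(
    path: Sequence[str], probes: List[Tuple[str, Probe]]
) -> Optional[Tuple[str, Tuple[str, str]]]:
    """Check layers from lowest to highest; the first faulty layer is reported."""
    for layer, probe in probes:
        result = sectionalize(path, probe)
        if result is not None:
            return layer, result
    return None

path = ["A", "B", "C", "D"]
probes = [
    ("photonic", lambda hop: True),
    ("circuit", lambda hop: hop != "D"),             # simulated fault between C and D
    ("ethernet", lambda hop: hop not in ("C", "D")),
]
print(sectionalize_layers(path, probes))  # ('circuit', ('C', 'D'))
```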

Circuit monitoring capabilities such as tandem connection monitoring (TCM) in the ITU Optical Transport Network (OTN) standards allow packet ONPs to define multiple segments of sectionalized performance monitoring on point-to-point big-pipe OTN payloads. This capability enables operators to precisely diagnose the origin of defects even in circuits that traverse a number of carriers or geographic regions.

Ethernet OAM standards such as IEEE 802.1ag and ITU Y.1731 enable native Ethernet service layer monitoring, and MPLS OAM standards such as VCCV promote MPLS service layer monitoring. These capabilities allow packet ONPs to provide detailed fault sectionalization for packet traffic.

Packet ONP elements can implement a significant number of optical power monitoring points throughout a DWDM/ROADM node. This is vital in isolating power-level defects that may occur between optical fabrics and mux/demux hardware, across dispersion compensation modules, and other places within the system.
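With power monitors at successive points in a node, isolating a power-level defect amounts to comparing the measured drop between neighboring points against the expected loss of the component between them. In this sketch the monitoring-point names, expected losses, and 1-dB tolerance are hypothetical values chosen for illustration.

```python
from typing import List, Sequence, Tuple

def find_excess_loss(
    readings: Sequence[Tuple[str, float]],
    expected_loss_db: Sequence[float],
    tolerance_db: float = 1.0,  # hypothetical alarm threshold
) -> List[Tuple[str, str, float]]:
    """readings: (monitor_point, measured_dBm) in signal order.
    expected_loss_db: expected loss between consecutive points.
    Returns (from_point, to_point, excess_dB) for each segment over tolerance."""
    faults = []
    for (a, pa), (b, pb), expected in zip(readings, readings[1:], expected_loss_db):
        excess = (pa - pb) - expected
        if excess > tolerance_db:
            faults.append((a, b, round(excess, 2)))
    return faults

readings = [("preamp-out", 5.0), ("dcm-out", -2.0), ("fabric-out", -9.5), ("demux-out", -14.0)]
expected = [7.0, 6.0, 4.5]  # hypothetical DCM, fabric, and demux losses
print(find_excess_loss(readings, expected))  # [('dcm-out', 'fabric-out', 1.5)]
```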

As service providers deploy high-bandwidth, high-quality packet-based services, they require an aggregation and transport infrastructure that is highly fault tolerant, simple to manage, and an incremental extension of today’s network. This new infrastructure requires the individual elements to be highly fault tolerant for carriers to control operational costs while delivering the required service performance.

Packet ONPs achieve these requirements by providing five-nines availability. They do so by embracing several historical and emerging capabilities in the areas of software and hardware upgrades, element database management, network management interface robustness, resiliency under optical layer phenomena, and troubleshooting and fault sectionalization. Packet ONPs deliver these capabilities across circuit, packet, and ROADM technology sets and enable service providers to build large-scale, low-cost aggregation infrastructures for the emerging generation of services and beyond.

Sam Lisle is market development director at Fujitsu Network Communications (http://us.fujitsu.com/telecom).