Video-distribution markets look to ATM technology as transport vehicle

David Pecorella

Artel Video Systems

Services such as Internet access, video on demand, digital TV, and voice over Internet protocol (IP) are starting to converge on the same core infrastructures at a furious pace. These "rich-media" infrastructures consist of an array of technologies capable of supporting all communication types. As convergence takes place, competitive local-exchange carriers (CLECs) and last-mile service providers (cable television, telecommunications, fiber-to-the-curb, and wireless) are looking for ways to capture and distribute new content, in conjunction with legacy content, over their existing delivery media. The ultimate solution must combine flexibility, scalability, and sophisticated bandwidth utilization.

The need to leverage proven technologies is also evident. Thus, many providers are appraising the technical merits of Asynchronous Transfer Mode (ATM) technology for video-content distribution (see Figure 1).

The ATM standard was defined in 1988 by the International Telecommunication Union (ITU) as a vehicle for the transport of voice, video, and data over the broadband Integrated Services Digital Network (B-ISDN). While the majority of traffic ported over the ATM infrastructure today is voice and data, video will soon occupy the same space and drive the need for higher-capacity ATM networks.

The basis for ATM technology is a high-efficiency, low-latency switching and multiplexing mechanism ideally suited to an environment where there are specific bandwidth limitations. ATM facilitates the allocation of bandwidth on demand by constructing virtual channels and paths between source and destination points on the ATM network boundaries. These channels are not dedicated, physical connections, but rather permanent or switched virtual connections; switched connections are torn down when no longer needed. ATM transport uses a fixed-length packet construct referred to as a cell. Each cell carries a 48-byte data payload plus 5 bytes of cell overhead, making the overall fixed cell length 53 bytes.
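As a rough illustration of what the fixed cell format costs in overhead, the following minimal sketch computes how many cells a message needs and how many bytes reach the wire. The function names are hypothetical, and padding the final cell is a simplifying assumption; only the 48-byte and 5-byte figures come from the text.

```python
ATM_PAYLOAD_BYTES = 48  # fixed payload per cell (from the text)
ATM_HEADER_BYTES = 5    # fixed overhead per cell (from the text)
ATM_CELL_BYTES = ATM_PAYLOAD_BYTES + ATM_HEADER_BYTES  # 53 bytes


def cells_needed(message_bytes: int) -> int:
    """Cells required to carry a message; the last cell is padded (assumption)."""
    return -(-message_bytes // ATM_PAYLOAD_BYTES)  # ceiling division


def wire_bytes(message_bytes: int) -> int:
    """Total bytes placed on the link, counting headers and padding."""
    return cells_needed(message_bytes) * ATM_CELL_BYTES


def payload_efficiency() -> float:
    """Fraction of every cell that carries payload rather than overhead."""
    return ATM_PAYLOAD_BYTES / ATM_CELL_BYTES


if __name__ == "__main__":
    print(cells_needed(1000), wire_bytes(1000), round(payload_efficiency(), 3))
```

Under these assumptions roughly 90% of each cell carries payload, before any adaptation-layer overhead is counted.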

The proliferation of the ATM core network over the past decade has been astronomical. ATM switch suppliers such as Lucent Technologies, Nortel Networks, FORE, Cisco, and Newbridge have carved multibillion-dollar markets out of both the core and enterprise edge switch business. The growth of private ATM networks with respect to public networks is also significant. It's clear that the footprint of the core ATM network infrastructure is growing closer to the metro-access markets, a fact not lost on network providers.

ATM ensures quality of service (QoS), which is difficult for traditional IP routing technology to guarantee. It is also a complementary protocol for technologies such as Synchronous Optical Network (SONET) and Synchronous Digital Hierarchy (SDH), enabling integration with nonswitched long-haul transports.

While a cursory look at the use of the ATM backbone to support video transport seems ideal, it is important to consider some profound aspects of high-bandwidth, streaming-video transfer. Video can't be treated like a typical data stream. It comprises a series of tightly clocked frames of color, luminance, audio, and data information. Video data is extremely sensitive to inconsistent or variable propagation delay. Once it is pulled off the network, reassembling the frame with the correct timing is an arduous task if consistent propagation times cannot be guaranteed.

Unfortunately, the means by which ATM puts video data on the network doesn't help matters. ATM uses statistical multiplexing, also referred to as asynchronous time-division multiplexing (TDM), to efficiently manage inconsistent traffic patterns over the network. ATM will only allow access to the network if there is sufficient bandwidth available to support the transport stream requesting access. If the demand for links exceeds the speed of the available channels constructed by ATM, the network multiplexer has to buffer packets, further exacerbating the delay variability.

There is no error-correction mechanism that is effective on real-time streaming video. Higher-layer protocols have the ability to retransmit lost or damaged packets. Unfortunately, because of the real-time nature of the video streams, there is no opportunity to initiate a session to resend lost or damaged packets. This problem is worsened by the high-bandwidth demands of the video feeds. Bandwidth restrictions make high-speed uncompressed digital video difficult to transmit through the ATM carrier networks. While the speed and reliability of the ATM switched networks are unparalleled in comparison to other widely deployed wide-area network (WAN) technologies, the nuances and peculiarities of digital video make it impractical to transport real-time video in its native uncompressed format. Sophisticated compression techniques have to be employed within ATM networks to alleviate technical roadblocks when it comes to managing these large, super-fast video streams. As a result, many are turning to MPEG-2 (Moving Picture Experts Group) compression technology.

MPEG-2 technology is now the de facto compression standard for distributed entertainment-quality video. It can efficiently compress full-motion video data for transmission over ATM networks. Full-motion, digitized, and uncompressed National Television System Committee (NTSC)-quality video requires a data-transfer rate of roughly 240 Mbits/sec. With little perceived degradation, MPEG-2 can crunch that down to 16 Mbits/sec for contribution-quality video and to 4 or 5 Mbits/sec for distribution-quality video.
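The compression ratios implied by these figures are easy to work out. The short sketch below uses only the approximate rates quoted above; the function name is hypothetical.

```python
UNCOMPRESSED_MBPS = 240.0  # approximate NTSC-quality rate quoted in the text


def compression_ratio(compressed_mbps: float) -> float:
    """Ratio of the uncompressed rate to a given compressed rate."""
    return UNCOMPRESSED_MBPS / compressed_mbps


if __name__ == "__main__":
    for label, rate in [("contribution", 16.0), ("distribution", 5.0), ("distribution", 4.0)]:
        print(f"{label} at {rate} Mbits/sec: about {compression_ratio(rate):.0f}:1")
```

On these numbers, contribution-quality video is compressed about 15:1 and distribution-quality video between 48:1 and 60:1.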

An intrinsic quality of video is that its utility to the end user is the visual aesthetics of the picture, not a bit-for-bit reconstruction of a data stream. Information contained in the original video not discernable to the human eye or ear is not important and can be discarded without detriment to the pleasure of the end user. MPEG-2 exploits this factor through several compression techniques.

Video is a series of frames; in the case of television video, there are 30 frames/sec. Each frame contains all of the color and luminance information necessary to display a picture on the screen as well as other ancillary information associated with the program like audio and data.

The retina of the human eye senses visual information with two different sets of cells: rod cells and cone cells. Rod cells are more adept at detecting small changes to detail within a picture. These cells are only sensitive to the intensity or luminance of the light. Cone cells, only sensitive to color information, perceive less change in detail.

MPEG-2 compression technology takes advantage of this fact by applying chroma sub-sampling to the video. Because the eye does not effectively discern small changes in color, or chrominance, the chrominance can be sampled at a lower resolution than the luminance without perceptible picture degradation.

Sampling ratios are written as three numbers: a luminance term followed by two chrominance terms. Sampling the chrominance at the same resolution as the luminance is referred to as 4:4:4 sampling, which is generally not used. Typically, 4:2:2 sampling is used to store content video that may be recompressed and redistributed, while 4:2:0 sampling, which requires about half the total bandwidth of 4:4:4 sampled video, is used exclusively for distribution-quality video.
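The bandwidth savings can be checked with a simple sample count. The sketch below assumes the common J:a:b reading of the ratio, which the article does not spell out: J is the width of a reference block two rows high, a is the number of chrominance samples in the first row, and b is the number of additional chrominance samples in the second row. The function names are hypothetical.

```python
def samples_per_block(j: int, a: int, b: int) -> int:
    """Total samples in a J-wide, 2-row block under the assumed J:a:b convention."""
    luma = 2 * j            # one luminance sample per pixel, two rows
    chroma = 2 * (a + b)    # two chrominance components, a + b samples each
    return luma + chroma


def relative_bandwidth(scheme: str) -> float:
    """Sample count of a scheme such as '4:2:0' relative to 4:4:4."""
    j, a, b = (int(part) for part in scheme.split(":"))
    return samples_per_block(j, a, b) / samples_per_block(4, 4, 4)


if __name__ == "__main__":
    for scheme in ("4:4:4", "4:2:2", "4:2:0"):
        print(scheme, round(relative_bandwidth(scheme), 3))
```

By this count, 4:2:2 carries two-thirds of the samples of 4:4:4 and 4:2:0 carries half, before any further compression is applied.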

Another algorithmic compression technique is called motion-vector estimation. In the case of video, many of the frames are very similar to the content of neighboring frames. For example, if a video feed were stepped from a VCR to a television set frame by frame, the information from one frame to the next wouldn't change that much. This phenomenon is referred to as temporal redundancy.

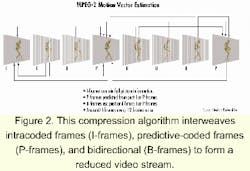

MPEG-2 uses this phenomenon to its advantage by sampling all of the information on a frame every so often. These frames are referred to as intracoded frames or I-frames. I-frames contain information about the full picture and are generally only placed in the video stream twice per second or every 15 frames.

Predictive-coded frames (P-frames) are typically 30% to 50% the size of I-frames. P-frames are decoded by using information from previous I-frames or other P-frames. Information that cannot be borrowed is coded in a similar way to I-frames.

The third frame type is called a bidirectional frame (B-frame). B-frames use information from previous frames in much the same way as P-frames. However, B-frames can also use information from future pictures. That is possible because when these frames are coded, the encoder can access some of the frames ahead of it. B-frames can use either past or future information from both I-frames and P-frames and are typically 50% the size of P-frames. These frames are interwoven to form a greatly reduced overall video stream (see Figure 2).
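To get a feel for how much the frame mix reduces the stream, the sketch below averages relative frame sizes over one 15-frame group. The frame ordering "IBBPBBPBBPBBPBB" and the 40% midpoint for P-frames are illustrative assumptions, not figures from any particular encoder; only the 30% to 50% and 50% ranges come from the text.

```python
def average_relative_size(pattern: str, p_ratio: float = 0.4, b_ratio: float = 0.5) -> float:
    """Average frame size relative to an I-frame.

    pattern  -- string of 'I', 'P', 'B' frame types (ordering is an assumption)
    p_ratio  -- P-frame size as a fraction of an I-frame (text: 0.3 to 0.5)
    b_ratio  -- B-frame size as a fraction of a P-frame (text: about 0.5)
    """
    sizes = {"I": 1.0, "P": p_ratio, "B": p_ratio * b_ratio}
    return sum(sizes[frame] for frame in pattern) / len(pattern)


if __name__ == "__main__":
    gop = "IBBPBBPBBPBBPBB"  # one I-frame every 15 frames, as in the text
    print(f"average frame is {average_relative_size(gop):.0%} of an I-frame")
```

With these assumed values, the average frame is a little under a third the size of an I-frame.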

The discrete cosine transform (DCT) converts spatial information into frequency information. The human eye is less sensitive to the higher-frequency content, so the encoder is free to discard those frequencies less visible to the human eye. Quantization and Huffman encoding are other mathematical constructs used to reduce the overall size of the video content by coding commonly grouped DCT coefficients.
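The idea can be sketched in one dimension. MPEG-2 actually applies a two-dimensional DCT to 8x8 blocks and quantizes coefficients rather than simply zeroing them; the version below is a deliberate simplification that transforms a single row of eight samples, drops the highest frequencies, and reconstructs the row. All function names and the sample values are illustrative.

```python
import math


def dct(samples):
    """Orthonormal 1-D DCT-II: spatial samples to frequency coefficients."""
    n = len(samples)
    coeffs = []
    for k in range(n):
        scale = math.sqrt((1 if k == 0 else 2) / n)
        total = sum(v * math.cos(math.pi / n * (i + 0.5) * k) for i, v in enumerate(samples))
        coeffs.append(scale * total)
    return coeffs


def idct(coeffs):
    """Inverse of dct(): frequency coefficients back to spatial samples."""
    n = len(coeffs)
    samples = []
    for i in range(n):
        total = 0.0
        for k, c in enumerate(coeffs):
            scale = math.sqrt((1 if k == 0 else 2) / n)
            total += scale * c * math.cos(math.pi / n * (i + 0.5) * k)
        samples.append(total)
    return samples


def drop_high_frequencies(coeffs, keep):
    """Zero every coefficient above the first `keep` (a crude stand-in for quantization)."""
    return coeffs[:keep] + [0.0] * (len(coeffs) - keep)


if __name__ == "__main__":
    row = [52.0, 55.0, 61.0, 66.0, 70.0, 61.0, 64.0, 73.0]  # illustrative pixel values
    approx = idct(drop_high_frequencies(dct(row), keep=4))
    print([round(v, 1) for v in approx])
```

Keeping only half the coefficients still yields a row close to the original for smooth content, which is the property the encoder exploits.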

MPEG-2 is also flexible in the degree to which information is coded. When the content is rapidly changing, the eye is less sensitive to detail, so less detailed content has to be coded. When the change in content is slower, the encoder engine injects more detail and thus more data.

As noted earlier, the innate time-sensitive nature of video makes managing the clocking of the video stream every bit as important as managing its content, if not more so. MPEG-2 injects into the stream timestamps called program clock references (PCRs), which are samples of the encoder's free-running 27-MHz timing clock. The system time clock in the decoder is initialized by the first transmitted PCR. When the next timestamp arrives, the decoder's clock time should be the exact value of this timestamp. If not, the decoder clock has to be adjusted based on the difference between the internal clock and the PCR value.
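The adjustment described above can be modeled in a few lines. This is a minimal sketch, not a real decoder: it snaps the local clock to each arriving PCR, whereas practical decoders typically steer their oscillator gradually, and it ignores PCR wraparound. The class and method names are hypothetical.

```python
PCR_HZ = 27_000_000  # 27-MHz clock rate from the text


class DecoderClock:
    """Toy model of a decoder system time clock, counted in 27-MHz ticks."""

    def __init__(self):
        self.ticks = None  # unset until the first PCR arrives

    def advance(self, seconds: float, drift_ppm: float = 0.0) -> None:
        """Let the local oscillator run, optionally fast or slow by drift_ppm."""
        if self.ticks is not None:
            self.ticks += round(seconds * PCR_HZ * (1 + drift_ppm / 1e6))

    def on_pcr(self, pcr_ticks: int) -> int:
        """Handle an arriving PCR; return the error (PCR minus local clock)."""
        if self.ticks is None:
            self.ticks = pcr_ticks  # the first PCR initializes the clock
            return 0
        error = pcr_ticks - self.ticks
        self.ticks = pcr_ticks      # hard correction (simplifying assumption)
        return error


if __name__ == "__main__":
    clock = DecoderClock()
    clock.on_pcr(1_000)
    clock.advance(0.1, drift_ppm=100)           # local clock runs 100 ppm fast
    print(clock.on_pcr(1_000 + PCR_HZ // 10))   # negative: local clock was ahead
```

Note that any delay variation added by the network shows up in this error term exactly as oscillator drift would, which is why jitter matters so much to the decoder.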

MPEG-2 video is extremely sensitive to variances in propagation delay. Data encoded in an MPEG-2 packet is not associated with a particular pixel in a specific frame. Because the encoding process places vital information about past frames, as well as future frames, in each packet, lost or corrupted data can result in noticeable blocking artifacts.

MPEG-2 has stringent QoS parameters. For a video stream of 1.5 to 20 Mbits/sec, bit-error rates (BERs) of less than 1 in 10^10, packet/cell loss rates of less than 1 in 10^8, and packet/cell delay variations of less than 1 microsec must be maintained.

While MPEG-2 streams do need careful attention and consideration over ATM networks, the compression scheme's value in delivering multiple streams of high-bandwidth information cannot be overlooked. For example, assume a cable-TV service provider has a 1-GHz coaxial cable that services a particular neighborhood and the full bandwidth of the cable is used for MPEG-2 video transport. Using 256-quadrature amplitude modulation (QAM), data streams of 39 Mbits/sec can be hauled over a 6-MHz channel. Up to eight MPEG-2 streams can occupy each 39-Mbit/sec channel, and there are approximately 150 such channels per 1-GHz cable. A quick calculation reveals that, in theory, a cable-TV provider could transport up to 1,200 simultaneous video feeds per cable.
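The quick calculation can be written out as follows, using only the figures in the example above. The function name is hypothetical, and the per-stream rate assumes the channel is shared evenly.

```python
def cable_capacity(channels: int = 150,
                   channel_mbps: float = 39.0,
                   streams_per_channel: int = 8):
    """Return (total video feeds, Mbits/sec available per feed)."""
    total_feeds = channels * streams_per_channel
    per_feed_mbps = channel_mbps / streams_per_channel  # even split (assumption)
    return total_feeds, per_feed_mbps


if __name__ == "__main__":
    feeds, rate = cable_capacity()
    print(f"{feeds} feeds at about {rate:.2f} Mbits/sec each")
```

The per-feed rate of just under 5 Mbits/sec lines up with the 4 to 5 Mbits/sec quoted earlier for distribution-quality video.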

That reveals the most important aspect of MPEG-2 encoding, the ability to provide multiple channels of digitized high-quality video over existing last-mile transmission infrastructures. MPEG-2's ability to utilize bandwidth inherent in current infrastructures is crucial to the success of its use as a means for video-content distribution.

One of the greatest synergies between MPEG-2 encoded video and the ATM transport network is that each bit structure is based on a fixed length. MPEG-2 transport streams consist of fixed 188-byte packets (a 184-byte payload and a 4-byte header), which makes mapping MPEG-2 transport onto ATM straightforward. The mapping of data onto ATM networks is a process called segmentation and reassembly (SAR). In the case of MPEG-2 video, the ATM edge device's ability to mitigate cell-delay variation is of the utmost importance. ATM must cope with the needs of the data being transported and provision some function to manage each video stream's requirements. The mechanism responsible for this function is referred to as the ATM adaptation layer (AAL). The AAL sits above the ATM layer in the ATM reference model. The function of these two layers is analogous to that of the data link layer in the Open Systems Interconnection (OSI) reference model defined by the International Organization for Standardization (ISO).

The two AALs that typically transport MPEG-encoded video are AAL1 and AAL5. The AAL1 convergence sub-layer was designed for constant bit-rate real-time applications. At the expense of some additional overhead, AAL1 provides for a forward-error-correction scheme. AAL1 services also have the ability to contend with time-sensitive cell streams through constant bit-rate emulation. AAL5 was originally developed for connection-oriented data transfer but has evolved to address MPEG-2 video. AAL5 does not provide for forward error correction, nor does it have an inherent ability to cope with timing relationships or mis-sequenced ATM cells. It is, however, an extremely efficient protocol with very little overhead attached to its payload. One convenient aspect of mapping compressed video onto ATM with AAL5 is that two 188-byte MPEG-2 packets, with 8 trailer bytes, map exactly onto eight 48-byte ATM payloads.
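The arithmetic behind that mapping is 2 x 188 + 8 = 384 = 8 x 48. The sketch below builds such a unit and slices it into cell payloads. It assumes the usual AAL5 trailer layout of two single-byte fields, a 2-byte length, and a 4-byte CRC; the CRC is left as a zero placeholder rather than computed, and the function names are hypothetical.

```python
from typing import List

TS_PACKET_BYTES = 188    # MPEG-2 transport packet size (from the text)
AAL5_TRAILER_BYTES = 8   # trailer size (from the text)
ATM_PAYLOAD_BYTES = 48   # cell payload size (from the text)


def build_pdu(ts_a: bytes, ts_b: bytes) -> bytes:
    """Concatenate two transport packets and append an 8-byte trailer."""
    if len(ts_a) != TS_PACKET_BYTES or len(ts_b) != TS_PACKET_BYTES:
        raise ValueError("each transport packet must be exactly 188 bytes")
    payload = ts_a + ts_b
    trailer = (
        bytes(2)                          # two 1-byte fields, left at zero here
        + len(payload).to_bytes(2, "big") # payload length
        + bytes(4)                        # CRC placeholder, NOT a real checksum
    )
    return payload + trailer


def segment(pdu: bytes) -> List[bytes]:
    """Slice a PDU into 48-byte cell payloads (the segmentation half of SAR)."""
    if len(pdu) % ATM_PAYLOAD_BYTES:
        raise ValueError("PDU length must be a multiple of 48 bytes")
    return [pdu[i:i + ATM_PAYLOAD_BYTES] for i in range(0, len(pdu), ATM_PAYLOAD_BYTES)]


if __name__ == "__main__":
    cells = segment(build_pdu(bytes(188), bytes(188)))
    print(len(cells), "cells, no padding required")
```

Because the two packets and the trailer fill eight cells exactly, no padding bytes are wasted.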

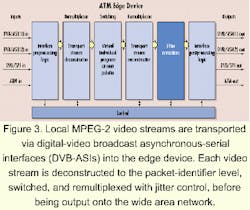

Getting MPEG-2 onto ATM networks and then picking it off in good order takes some care. The ATM network edge device must be particularly adept at handling MPEG switching and jitter management to compensate for propagation delays in the network. Jitter management must include a combination of buffering, fixed delay queuing, timestamping, and delivering output at a steady rate.

Local MPEG-2 video streams are typically transported via an interface known as digital-video broadcast asynchronous-serial interface (DVB-ASI). ATM edge devices deconstruct either an MPEG-2 multiprogram transport stream (MPTS) or single-program transport stream (SPTS) to the program level and ultimately to the packet identifier (PID) level. At the PID level, different program streams can be reordered and multiplexed back into another MPTS, a process known as remultiplexing. Each packet of MPEG-2 data is tagged with a PID, a 13-bit field that identifies which stream, and thus which program, a particular transport packet is associated with. A PID can also reveal what type of information (program association tables, video, or audio) is contained in the payload. The streams can then be segmented and placed on an ATM OC-3 (155-Mbit/sec) or OC-48 (2.5-Gbit/sec) WAN transport.
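Sorting packets by PID is the first step of the remultiplexing described above. The sketch below relies on details of the MPEG-2 transport packet header that the article does not give: a sync byte of 0x47, with the 13-bit PID held in the low five bits of the second byte and all of the third byte. The function names are hypothetical.

```python
TS_PACKET_BYTES = 188  # transport packet size (from the text)
SYNC_BYTE = 0x47       # first byte of every transport packet (not from the text)


def parse_pid(packet: bytes) -> int:
    """Extract the 13-bit PID from a 188-byte transport packet."""
    if len(packet) != TS_PACKET_BYTES:
        raise ValueError("transport packet must be exactly 188 bytes")
    if packet[0] != SYNC_BYTE:
        raise ValueError("missing sync byte")
    return ((packet[1] & 0x1F) << 8) | packet[2]


def group_by_pid(stream: bytes) -> dict:
    """Split a byte stream into packets and group them by PID."""
    groups = {}
    usable = len(stream) - len(stream) % TS_PACKET_BYTES  # ignore a partial tail
    for offset in range(0, usable, TS_PACKET_BYTES):
        packet = stream[offset:offset + TS_PACKET_BYTES]
        groups.setdefault(parse_pid(packet), []).append(packet)
    return groups


if __name__ == "__main__":
    video = bytes([0x47, 0x01, 0x00, 0x10]) + bytes(184)  # PID 0x100 (arbitrary)
    table = bytes([0x47, 0x40, 0x00, 0x10]) + bytes(184)  # PID 0 carries the program association table
    print(sorted(group_by_pid(table + video + video).items(), key=lambda kv: kv[0])[0][0])
```

A real edge device would go on to rewrite tables and restamp timing when it builds the new MPTS; this sketch covers only the sorting step.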

On the receiving end of the ATM boundary, another edge device manages the ATM cell stream, SAR operations, and finally, the reconstruction of either an SPTS or MPTS. The local service-distribution networks can then distribute the video (see Figure 3).

It's clear that ATM will be implemented for video distribution. While ATM is a well-respected and proven network architecture, intelligent video-aware edge devices need to manage video content at the periphery of the ATM cloud to contend with jitter-management issues. Powerful compression algorithms like MPEG-2, coupled with these devices, will allow last-mile service providers to leverage a growing and well-established core network to distribute more content and interactive services.

This network infrastructure offers many elements that service providers yearn for, such as cost-effective, scalable, proven technologies that implement sophisticated bandwidth utilization. It can be accomplished without massive cash outlays for proprietary long-haul transport systems. In the end, the consumer will benefit as last-mile service providers vie to win their business with a new generation of value-added services.

David Pecorella is a product-marketing manager at Artel Video Systems Inc. (Marlborough, MA). The company's Website is www.artel.com.