MPLS, quality of service, and the next-generation network

MPLS promises to enable carriers to enforce quality- and class-of-service guidelines on Internet Protocol traffic.

BY JEFF LAWRENCE, Trillium Digital Systems Inc.

The next-generation network is evolving toward an infrastructure built on packet- and cell-based communications. As it does, throughput, delay, jitter, and potential information loss become serious issues that must be addressed to ensure that the appropriate quality of service (QoS) is available to satisfy the expectations of a wide range of network users and to enable the adoption of new advanced services.

As defined by the International Telecommunication Union in the E.800 recommendation, QoS is "the collective effect of service performance, which determines the degree of satisfaction of a user of the service." Interestingly, this definition creates a need for quantifiable measurements that can be tied to the user's subjective perception of QoS. Ensuring an appropriate QoS that meets user expectations will become increasingly challenging as the number of users and the volume of traffic continue to increase in the network.

Recent usage studies have found that traffic patterns within the Internet exhibit the characteristics of fractals, meaning that traffic patterns will look the same whether traffic is observed at a macroscopic or a microscopic level (the scale of the axis simply changes). Variations in traffic patterns arise because of varying user patterns and the variations in the traffic itself (traffic can be in the form of short e-mail messages, video streams, long file transfers as part of Web browsing, or real-time voice). Understanding these patterns and building an infrastructure that can provide high throughput, low delay, low jitter, high availability, and scalability regardless of the variations will be critical to ensuring the successful adoption and deployment of new services offered over the next-generation network infrastructure.

The simplest way to ensure a high QoS is to engineer the network so it has sufficient capacity (in the form of processors, buffers, and high-speed links) to process, store, and move packets through the network. If the capacity is sufficiently large, then by implication, information will move quickly and with minimal delay and jitter through the network. The downside of this approach is that it may be inefficient and potentially expensive to build.

Some recently deployed networks are initially relying on their excess capacity to provide fairly high levels of QoS; but as their traffic increases, these networks will have to depend on additional mechanisms to maintain the same level of QoS. These supplemental mechanisms fall into the realm of "traffic engineering," which is based on the concept that the traffic and information flowing between applications can be differentiated (e.g., voice, video, e-mail, and Web browsing) and moved through the network with different levels of service.

Traffic engineering uses either reservation-based or reservationless mechanisms. Reservation-based mechanisms such as the integrated services model of the Internet Engineering Task Force (IETF) assume there is a certain amount of capacity available for each type of service. Capacity is reserved on an "as needed" basis from processors, buffers, and links. Reservationless mechanisms such as the differentiated services model of the IETF do not reserve capacity but instead assign different priorities to traffic and information flows. As capacity is used up, service to higher-priority traffic flows is maintained, and service to lower-priority traffic flows is degraded. In both cases, if the network cannot provide the required level of service, then it may use admission control mechanisms to prevent additional traffic from entering the network so as not to affect the already-existing traffic and flows.
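The difference between the two approaches can be illustrated with a small sketch. It is a toy model only, not the IETF integrated services or differentiated services machinery: the class `ReservedLink`, the function `serve_by_priority`, and all capacity figures are hypothetical names and numbers chosen for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ReservedLink:
    """Reservation-based model: capacity is set aside per flow before it sends."""
    capacity_kbps: int
    reservations: dict = field(default_factory=dict)

    def admit(self, flow_id: str, kbps: int) -> bool:
        # Admission control: refuse a new flow rather than degrade existing ones.
        if sum(self.reservations.values()) + kbps > self.capacity_kbps:
            return False
        self.reservations[flow_id] = kbps
        return True


def serve_by_priority(capacity_kbps: int, demands: list) -> dict:
    """Reservationless model: nothing is reserved in advance; when capacity
    runs short, higher-priority traffic is served first.

    `demands` holds (priority, name, kbps) tuples; a lower number means a
    higher priority (an assumption of this sketch).
    """
    served = {}
    remaining = capacity_kbps
    for _priority, name, kbps in sorted(demands):
        granted = min(kbps, remaining)
        served[name] = granted
        remaining -= granted
    return served


link = ReservedLink(capacity_kbps=1000)
voice_ok = link.admit("voice", 600)   # fits within the link
video_ok = link.admit("video", 600)   # would exceed capacity, so it is refused

shares = serve_by_priority(
    1000, [(2, "email", 300), (0, "voice", 600), (1, "video", 600)]
)
```

In the reservation-based case the second flow is turned away outright; in the reservationless case every flow is accepted, but the lowest-priority traffic absorbs the shortfall.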

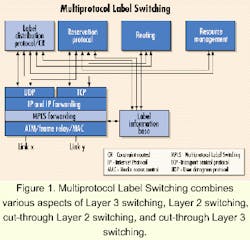

The "nuts and bolts" of supporting different QoS mechanisms occur at the level of the packet routing, switching, and forwarding mechanisms. There are a number of solutions that address the throughput, latency, and scalability issues previously mentioned. These solutions include Layer 3 switching, Layer 2 switching, cut-through Layer 2 switching, and cut-through Layer 3 switching. The differences among these approaches result from whether the routing and forwarding occur at the data-link level (Layer 2) or packet level (Layer 3), and whether a flow is identified locally or end-to-end. In general, Layer 3 switching involves routing and forwarding (hop-by-hop) or switching and forwarding (source routing).

Each approach has advantages and disadvantages. Hop-by-hop routers perform significant processing, as packets are received, examined, and forwarded on to the next "hop" toward the destination. Unfortunately, as the traffic increases, these routers can become bottlenecks, and it becomes difficult to scale them to large networks. On the other hand, source routing presents other challenges because it introduces additional complexity and increased delay to recover from route failure. The various approaches address the same challenges from different angles.

Multiprotocol Label Switching (MPLS) is a protocol, specified by the IETF, that provides for the efficient classification, routing, forwarding, and switching of traffic flows through the network. MPLS is becoming the mechanism of choice to manage traffic flows in Internet Protocol-, ATM-, and frame relay-based networks. MPLS combines various aspects of Layer 3 switching, Layer 2 switching, cut-through Layer 2 switching, and cut-through Layer 3 switching to successfully provide efficient and scalable routing of traffic flows (see Figure 1).

The forwarding equivalence class (FEC) identifies traffic flows. An FEC is a representation of a group of packets or cells (i.e., a traffic flow) that share the same requirements for their transport. MPLS can manage traffic flows of various granularities, such as flows between different pieces of hardware or even flows between different applications. Traffic flows from different sources traversing the network toward the same destination can be merged by using a common label. This process is known as "flow aggregation" or "stream merging" and provides a means to efficiently transport large amounts of data over the equivalent of virtual trunks.
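A minimal sketch of classification and flow aggregation follows. It assumes, purely for illustration, that an FEC is keyed on a destination prefix plus a service class; the prefixes, the `FEC` and `LabelAllocator` names, and the starting label value are all hypothetical.

```python
import ipaddress
from dataclasses import dataclass


@dataclass(frozen=True)
class FEC:
    """Hypothetical FEC key: destination prefix plus a service class."""
    dest_prefix: str
    service_class: str


# Hypothetical destination prefixes known at the network edge.
PREFIXES = [
    ipaddress.ip_network("10.1.0.0/16"),
    ipaddress.ip_network("10.2.0.0/16"),
]


def classify(dst_ip: str, service_class: str) -> FEC:
    """Map a packet to its FEC. The source address plays no part here, so
    packets from different sources can fall into the same class."""
    addr = ipaddress.ip_address(dst_ip)
    for net in PREFIXES:
        if addr in net:
            return FEC(str(net), service_class)
    raise LookupError(f"no FEC for {dst_ip}")


class LabelAllocator:
    """Hands out one label per FEC, so every flow in the FEC shares it."""

    def __init__(self, first_label: int = 100):  # arbitrary starting value
        self._next = first_label
        self._by_fec = {}

    def label_for(self, fec: FEC) -> int:
        if fec not in self._by_fec:
            self._by_fec[fec] = self._next
            self._next += 1
        return self._by_fec[fec]


alloc = LabelAllocator()
# (source, destination, service class) for three hypothetical packets
packets = [
    ("192.0.2.10", "10.1.4.7", "voice"),
    ("198.51.100.3", "10.1.200.9", "voice"),
    ("192.0.2.10", "10.1.4.7", "email"),
]
labels = [alloc.label_for(classify(dst, cls)) for _src, dst, cls in packets]
```

The first two packets come from different sources but share a destination prefix and service class, so they receive the same label and are merged; the third has different transport requirements and gets its own.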

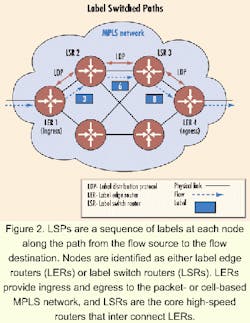

MPLS is independent of the Layer 2 and Layer 3 protocols and provides a means to map Internet Protocol (IP) addresses to simple fixed-length labels (also known as "tags") used by different packet-forwarding and packet-switching technologies. The flows are transported on label switched paths (LSPs), which are a sequence of labels at each node along the path from the flow source to the flow destination. Nodes are identified as either label edge routers (LERs) or label switch routers (LSRs). The LERs provide ingress and egress to the packet- or cell-based MPLS network, and the LSRs are the core high-speed routers that interconnect the LERs (see Figure 2).
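The roles of the LERs and LSRs along an LSP can be sketched as a chain of table lookups. The node names, label values, and table layout below are hypothetical; the point is only that each node maps an incoming label to an outgoing label and a next hop, so the path is the resulting sequence of labels.

```python
# FEC -> (first label, first hop) at the ingress LER.
INGRESS_LER = {"10.1.0.0/16": (17, "LSR-A")}

# Per-LSR tables: incoming label -> (outgoing label, next hop).
LSR_TABLES = {
    "LSR-A": {17: (42, "LSR-B")},
    "LSR-B": {42: (99, "LER-OUT")},
}

EGRESS_LER = "LER-OUT"


def trace_lsp(fec: str) -> list:
    """Return (node, label sent by that node) pairs along the path."""
    label, node = INGRESS_LER[fec]          # ingress LER attaches the first label
    path = [("LER-IN", label)]
    while node != EGRESS_LER:
        out_label, next_hop = LSR_TABLES[node][label]  # exact match on the label
        path.append((node, out_label))      # core LSR swaps the label
        label, node = out_label, next_hop
    path.append((EGRESS_LER, None))         # egress LER removes the label
    return path


lsp = trace_lsp("10.1.0.0/16")
```

Each core node consults only its own table, which is why a label needs to be unique on a single link rather than across the whole network.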

The Layer 3 protocol forwards the first few packets of a flow. As the flow is identified and classified (based on various QoS or class-of-service requirements), a series of Layer 2 high-speed switching paths are set up between the routers along the path between the source and destination of the flow. The Layer 2 switching paths are established by assigning a label to each flow traversing a link that connects two LSRs or a link that connects an LSR and an LER.

The binding of the FEC to the labels is maintained in the label information base. Labels only have local significance and are encapsulated in the Layer 2 header in the form of virtual-path identifiers/virtual-channel identifiers for ATM, data-link connection identifiers for frame relay, and shim headers for IP (see Figure 3).
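For the shim-header case, the encoding can be sketched as follows. The article does not give the field layout; the sketch assumes the 32-bit entry later standardized by the IETF in RFC 3032 (20-bit label, 3 experimental bits, a bottom-of-stack flag, and an 8-bit time-to-live). The function names are hypothetical.

```python
import struct


def pack_shim(label: int, exp: int, bottom: bool, ttl: int) -> bytes:
    """Encode one 32-bit shim entry in network byte order.

    Assumed layout (per RFC 3032, not stated in the article):
    label (20 bits) | exp (3 bits) | bottom-of-stack (1 bit) | ttl (8 bits)
    """
    if not (0 <= label < 2**20 and 0 <= exp < 8 and 0 <= ttl < 256):
        raise ValueError("field out of range")
    word = (label << 12) | (exp << 9) | (int(bottom) << 8) | ttl
    return struct.pack("!I", word)


def unpack_shim(data: bytes) -> tuple:
    """Decode the first four bytes into (label, exp, bottom, ttl)."""
    (word,) = struct.unpack("!I", data[:4])
    return word >> 12, (word >> 9) & 0x7, bool((word >> 8) & 1), word & 0xFF


shim = pack_shim(label=42, exp=5, bottom=True, ttl=64)
fields = unpack_shim(shim)
```

Because the entry has a fixed size and sits ahead of the IP header, a forwarding engine can read the label from a known offset without parsing the rest of the packet.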

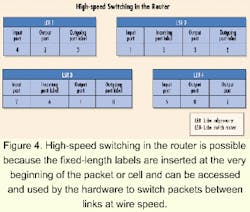

High-speed switching in the router is possible because the fixed-length labels are inserted at the very beginning of the packet or cell and can be accessed and used easily by the hardware to quickly switch packets between links at wire speed (see Figure 4). As the processing depth into a packet increases for classification, routing, and switching, the performance requirements of the router increase. These performance requirements can be achieved through a combination of content-addressable memory, ASICs, application-specific service processors, or microprocessors.
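The contrast between deep packet processing and label switching can be made concrete with a sketch that compares a Layer 3 longest-prefix match against an exact-match label lookup. The routes, labels, and port names are hypothetical, and the linear scan stands in for the tries or content-addressable memory a real router would use.

```python
import ipaddress

# Hypothetical Layer 3 routing table: prefix -> output port.
ROUTES = {
    ipaddress.ip_network("10.0.0.0/8"): "port0",
    ipaddress.ip_network("10.1.0.0/16"): "port1",
    ipaddress.ip_network("10.1.2.0/24"): "port2",
}

# Hypothetical label table: incoming label -> output port.
LABELS = {17: "port1", 42: "port2"}


def longest_prefix_match(dst_ip: str):
    """Layer 3 lookup: every candidate prefix must be compared, and the
    most specific match wins."""
    addr = ipaddress.ip_address(dst_ip)
    best = None
    for net, port in ROUTES.items():
        if addr in net and (best is None or net.prefixlen > best[0].prefixlen):
            best = (net, port)
    return best[1] if best else None


def label_lookup(label: int):
    """Label lookup: a single exact-match access on a fixed-length key."""
    return LABELS.get(label)


by_prefix = longest_prefix_match("10.1.2.3")
by_label = label_lookup(42)
```

Both lookups reach the same output port here, but the label lookup does so with one indexed access on a short fixed-length key, which is the property that lends itself to hardware.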

Label assignment can be topology driven (e.g., between source and destination devices), flow driven (e.g., by the Resource Reservation Protocol, RSVP), or control driven (e.g., policy based). Associating these labels with an FEC and binding them to each other across the entire path of the flow is performed by either a routing protocol or a label distribution protocol. The Border Gateway Protocol and RSVP have been enhanced to support this management of the MPLS label space. The Label Distribution Protocol (LDP) is a new protocol that has also been designed to manage the MPLS label space and can support explicit routing based on QoS and class-of-service requirements. MPLS can also support the Open Shortest Path First routing protocol.

MPLS offers a great deal of flexibility and, even today, allows what is known as "ships in the night operation." That is, MPLS can be introduced into a network without affecting the existing operation of other routing, switching, and forwarding protocols within the network. This allows MPLS to be deployed gradually, without replacing the network infrastructure all at once. In the future, MPLS will be able to support transparent and nontransparent wavelength (i.e., lambda) switching in optical networks. Enhancement of the MPLS specifications is underway in the MPLS and Routing Area working groups of the IETF and in the ATM-IP Collaboration and Traffic Management working groups of the ATM Forum. Additional activities are underway in other industry forums to enhance MPLS for efficient operation in optical networks.

Future applications and services must be aligned with developments in the underlying network infrastructure to take full advantage of its capabilities. MPLS and its associated protocols will define, to some extent, what those capabilities are. Understanding them will ensure that advanced services, as they are developed and deployed, behave as expected and present no unwelcome surprises to users and service providers. In the future, applications running on the network will be able to identify "flow types" and take maximum advantage of the network's ability to manage its resources efficiently and cost-effectively.

Jeff Lawrence is co-founder, president, and CEO of Trillium Digital Systems Inc. (Los Angeles).

This article appeared in the July 2000 issue of Integrated Communications Design, a sister publication.