Switch fabric requirements for optical transport systems

By Gary Lee

The details of switch chip implementation can vary widely depending upon the capabilities the design is intended to support and the switch chip chosen.

Carrier-class optical transport systems (OTS) have a backplane fabric based on a legacy of carrying TDM traffic that has been maintained in some form even into this age of IP-oriented data traffic. As we move toward a completely packet-based world, traditional TDM-based OTS are evolving to more efficient backplane architectures using high-bandwidth Ethernet switch chips, where TDM data is transported across a packet fabric.

Designers, then, must know how OTS requirements map into Ethernet switch chip features. This includes interface protocols, port count, traffic classification, congestion management, and how to choose from the existing traffic tunneling methods that include the use of proprietary headers to identify unique traffic flows.

These switch chips must support a wide range of features while also minimizing system costs. On the customer side, a variety of interfaces and protocols must be supported, including Ethernet and storage protocols such as Fibre Channel or Fibre Channel over Ethernet. The customer traffic must be efficiently aggregated for transport over the optical carrier network while maintaining service-level guarantees. On the carrier network side, the switch chips must provide high-bandwidth connections for Optical Transport Network (OTN) or SONET/SDH transport.

Ethernet switch chip requirements

Traditional OTS backplanes have used cell-based fabrics where a limited amount of packet traffic was segmented into cells on ingress and reassembled into packets on egress. As packet traffic and link bandwidth have increased over time, segmentation and reassembly for TDM backplane fabrics has become a very costly approach, leading to the use of both a TDM fabric and a packet fabric in the system backplane.

Most system designers agree that this fabric combination is a temporary solution, as emerging Ethernet fabrics have the ability to transport both packet and TDM traffic, reducing system cost and complexity. But such Ethernet fabrics are just now starting to support these requirements.

If the OTS includes Ethernet aggregation capabilities, it must combine multiple lower-rate ports into high-bandwidth links that support optical transport protocols, such as OTN. For example, 64 Gigabit Ethernet (GbE) ports may be aggregated into active and protection 40G OTN links through an OTU3 mapper. In this case, using a single high-port-count Ethernet switch can save system cost compared to a multiple-chip approach. Because the 40G link can be over-subscribed, the switch must also support advanced classification capabilities and provide scheduling features that enable minimum bandwidth guarantees along with traffic policing and shaping.
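The over-subscription in this example can be checked with a few lines of arithmetic. The sketch below uses nominal rates only (1 Gbps per GbE port, 40 Gbps for the OTN link) and ignores framing overhead; the function name is illustrative and not taken from any switch vendor's software.

```python
from fractions import Fraction

def oversubscription_ratio(port_count, port_rate_gbps, uplink_rate_gbps):
    """Ratio of aggregate ingress capacity to uplink capacity (nominal rates)."""
    return Fraction(port_count * port_rate_gbps, uplink_rate_gbps)

# 64 GbE ports feeding one nominal 40G link, as in the example above.
ratio = oversubscription_ratio(64, 1, 40)
print(float(ratio))  # 1.6, so the link can be offered 60% more than it can carry
```

Any ratio above 1 means the link can be offered more traffic than it can carry, which is why classification, policing, and minimum bandwidth guarantees are needed.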

To support TDM flows, Ethernet fabrics need to support lossless operation with bounded latency. This is because frames cannot be dropped as they are in traditional LAN switches, and egress jitter-removal FIFOs must be sized based on maximum latency bounds.
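As a rough illustration of how the latency bound drives FIFO size, the sketch below assumes the egress FIFO must absorb the full difference between the minimum and maximum fabric latency at the flow's line rate. The numbers are hypothetical, and a real design would add margin for clock tolerance and frame granularity.

```python
def jitter_fifo_bytes(flow_rate_bps, min_latency_ns, max_latency_ns):
    """Bytes that can accumulate over the worst-case latency variation.

    Integer arithmetic is used so the result is exact.
    """
    if max_latency_ns < min_latency_ns:
        raise ValueError("max latency must not be below min latency")
    variation_ns = max_latency_ns - min_latency_ns
    bits = flow_rate_bps * variation_ns        # units: bit-nanoseconds per second
    denom = 8 * 10**9                          # convert to bytes and seconds
    return -(-bits // denom)                   # ceiling division

# Hypothetical 10 Gbps TDM flow with fabric latency between 1 us and 5 us.
print(jitter_fifo_bytes(10_000_000_000, 1_000, 5_000))  # 5000
```

The tighter the fabric's latency bound, the smaller this buffer can be.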

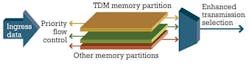

Surprisingly, solutions for these requirements can be borrowed from the data center market, as shown in Figure 1. By using IEEE Priority Flow Control (PFC) frames, TDM traffic can be isolated using special memory partitions within the switch and flow controlled separately, guaranteeing no loss for TDM traffic. IEEE Enhanced Transmission Selection (ETS) specifies egress schedulers that can give minimum bandwidth allocations to traffic classes. By providing a minimum bandwidth guarantee to TDM traffic, maximum latency can also be guaranteed. In addition, traffic shaping can be applied to non-TDM traffic to minimize packet fabric congestion.
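To make the minimum bandwidth idea concrete, here is a minimal sketch of a deficit weighted round robin scheduler, one common way to give traffic classes a guaranteed share of an egress link. Whether a particular switch implements ETS this way is an assumption; the queue names, frame sizes, and quanta are all hypothetical.

```python
from collections import deque

def dwrr(queues, quanta, rounds):
    """Serve queues of frame sizes (bytes) with deficit weighted round robin.

    queues: dict of class name -> deque of frame sizes
    quanta: dict of class name -> bytes of credit added per round
    Returns the bytes sent per class.
    """
    sent = {name: 0 for name in queues}
    deficit = {name: 0 for name in queues}
    for _ in range(rounds):
        for name, q in queues.items():
            if not q:
                deficit[name] = 0          # idle classes do not bank credit
                continue
            deficit[name] += quanta[name]
            while q and q[0] <= deficit[name]:
                size = q.popleft()
                deficit[name] -= size
                sent[name] += size
    return sent

# Both classes stay backlogged with 100-byte frames; TDM gets 3x the quantum.
queues = {"tdm": deque([100] * 100), "data": deque([100] * 100)}
result = dwrr(queues, {"tdm": 300, "data": 100}, rounds=10)
print(result)  # {'tdm': 3000, 'data': 1000}
```

With both classes backlogged, the TDM class receives three quarters of the bytes sent. A guaranteed service rate for a bounded queue is what turns a bandwidth guarantee into a latency bound.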

The switch memory architecture also affects TDM performance. A true output-queued shared-memory switch provides low latency jitter along with predictable queuing delays. Some Ethernet switches employ a combined input/output queued (CIOQ) architecture due to limitations on internal memory performance. This type of architecture suffers from high latency and internal congestion, leading to higher latency jitter, which in turn requires larger egress timing recovery FIFOs and thus higher system cost.

Traffic tunneling methods

Until recently, SPI 4.2 was the standard channelized interface for 10G TDM traffic. But this interface is too wide for high-port-count devices and cannot be directly connected across backplanes. Interlaken has emerged as a replacement for SPI 4.2, but large Ethernet backplane fabrics do not have Interlaken interfaces. As a result, TDM mapper and framer vendors have developed enhancements to the XAUI standard, such as SPAUI or Extended XAUI, that include in-band channel information and channelized flow control. These approaches are well suited to Ethernet fabrics, which usually contain XAUI physical interfaces.

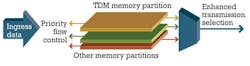

Ingress traffic will contain a channel number somewhere in the header. Typically, the Ethernet preamble will be used to minimize bandwidth overhead. The Ethernet switch must be able to parse this channel number and, along with the ingress port number, use it to forward the frame to the correct egress port.

As can be seen in Figure 2, each switch ingress port can use an independent set of channel numbers. In the case illustrated in the figure, both ingress port A and B use channel number 4, but the switch uses this plus the ingress port number to map these to different destinations.
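The lookup described here amounts to a table keyed on the pair (ingress port, channel number). The sketch below is illustrative only: the port labels A and B and channel 4 follow the text, while the egress port names and the extra entry are hypothetical.

```python
# Hypothetical provisioning table: (ingress port, channel number) -> egress port.
FORWARDING_TABLE = {
    ("A", 4): "egress_1",
    ("B", 4): "egress_2",   # same channel number, different ingress port
    ("A", 7): "egress_2",
}

def lookup_egress(ingress_port, channel):
    """Return the egress port for a channelized frame, or None if unprovisioned."""
    return FORWARDING_TABLE.get((ingress_port, channel))

print(lookup_egress("A", 4))
print(lookup_egress("B", 4))
```

Because the key includes the ingress port, each mapper can number its channels independently without coordinating with the others.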

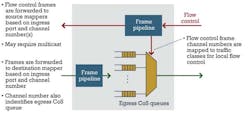

A next-generation OTS switch must also support channelized flow control. Figure 3 shows that frames are not only forwarded to an egress port based on channel number, but the channel number is also assigned to traffic classes in the frame pipeline, which are mapped into egress queues. By using ETS, each channel can be assigned a different minimum bandwidth.

The egress mapper can send channelized flow control information back to the switch. By using parsing features similar to the ones used for PFC, frames can be paused based on channel number in the egress class-of-service (CoS) queues. The frame pipeline can also be used to forward these flow control frames to the proper ingress mapper based on channel and port information. If multiple channels must be flow controlled, the flow control frames can be multicast to the proper ingress switch ports.
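A minimal model of per-channel pausing at an egress port might look like the sketch below. The class and method names are hypothetical, and the scheduler is a simple first-match scan rather than the ETS scheduling a real switch would apply.

```python
from collections import deque

class EgressPort:
    """Toy egress port with one queue per channel and per-channel pause state."""

    def __init__(self, channels):
        self.queues = {ch: deque() for ch in channels}
        self.paused = set()

    def enqueue(self, channel, frame):
        self.queues[channel].append(frame)

    def flow_control(self, channel, pause):
        """Apply a pause (True) or resume (False) request from the egress mapper."""
        if pause:
            self.paused.add(channel)
        else:
            self.paused.discard(channel)

    def dequeue(self):
        """Return (channel, frame) from the first unpaused, non-empty queue."""
        for ch, q in self.queues.items():
            if ch not in self.paused and q:
                return ch, q.popleft()
        return None
```

Pausing one channel holds its frames in place while other channels on the same port continue to drain, which is the behavior that distinguishes channelized flow control from pausing the whole link.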

In optical aggregation systems, it may be desirable to label flows for identification across the optical link. This can be done with traditional methods, such as MPLS or virtual private wire services (VPWS). Proprietary interswitch link (ISL) tags can be used as well. If similar transport systems are used on each side of the link, global address fields in these tags can be used to create a large virtual Ethernet switch encompassing two separate physical locations.

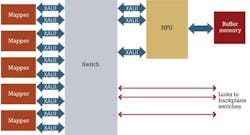

Merged TDM and packet fabric example

Figure 4 shows an example 100G line card for an optical transport system. Mappers are available today with single, dual, and quad 10G interfaces to optical modules. On the other side, they may contain SPAUI or Extended XAUI interfaces to a line card switch chip. This switch chip contains high-bandwidth uplinks to multiple switch cards in the backplane, providing system scalability up to tens of terabits. Some frames can be diverted to an attached NPU for buffering and fine-grained traffic management. This card can forward both TDM and packet traffic to the backplane.

The system may contain legacy SONET/SDH cards, as well, which must also contain a mapper chip connected to the protocol processor for tunneling TDM flows through the packet fabric.

The system may also contain Ethernet access cards that include an Ethernet switch with high-bandwidth backplane connections. These cards forward standard Ethernet frames to the OTS line cards and can use a different memory partition in the switch, effectively acting as a separate virtual fabric.

When packets are received by the line card, as shown in Figure 4, the mapper can add an OTU wrapper on the frame for optical transport. On the receive side, the mapper can remove the OTU wrapper and insert the frame back into the Ethernet virtual fabric for forwarding to an access card as a standard Ethernet frame.

The NPU can be used for buffering, fine-grained traffic management, and exception processing. In most cases, software can properly provision TDM traffic across the OTS fabric. When traffic temporarily exceeds the mapper bandwidth or the fabric bandwidth, it can be diverted to the NPU buffer memory.

In other cases, it may be desirable to provide finer-grained traffic management than can be configured in the mapper and switch. In these instances, the switch can classify these traffic types and divert them to the NPU, which can support thousands of CoS queues.

Finally, special packets can be classified by the mapper and/or switch and diverted to the NPU for processing. These include Ethernet operations, administration, and maintenance (OAM) packets for connectivity checking and performance monitoring, along with frames that may need special security processing as they enter or leave the access network.
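The three NPU diversion cases above (temporary overload, finer traffic management, and special packets) can be summarized as a simple classification rule. This is only a sketch: the function and flag names are hypothetical, and the only real-world constant is the EtherType 0x8902 used by IEEE 802.1ag connectivity fault management frames.

```python
CFM_ETHERTYPE = 0x8902  # IEEE 802.1ag connectivity fault management (Ethernet OAM)

def select_path(ethertype, needs_fine_tm=False, needs_security=False,
                fabric_congested=False):
    """Return "npu" if the frame should be diverted, otherwise "fabric".

    All flags are hypothetical stand-ins for results a real switch
    classifier would derive from frame headers and queue state.
    """
    if ethertype == CFM_ETHERTYPE:
        return "npu"        # OAM connectivity check or performance monitoring
    if needs_security:
        return "npu"        # special security processing
    if needs_fine_tm:
        return "npu"        # traffic needing more CoS queues than the switch has
    if fabric_congested:
        return "npu"        # temporary overflow into NPU buffer memory
    return "fabric"

print(select_path(0x0800))
print(select_path(0x8902))
```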

Optical transport systems are migrating to packet-based switch fabrics due to changing traffic demands and cost. Advanced Ethernet switch fabrics can fill this role by providing features that support both packet and TDM traffic. To support this, efficient OTS line cards can be developed that include direct connection between mappers, NPUs, and switches.

Gary Lee is director of product marketing for Fulcrum Microsystems. He has been working in the semiconductor industry for over 29 years.