SDN moves from hype to reality

by JIM THEODORAS

Networking has witnessed an endless succession of over-hyped technologies over the years. After all, something must feed the marketing monster an endless supply of headlines, blogs, speaking ops, conferences, and, yes, bylines (guilty as charged).

Software-defined networking (SDN) might seem like another example of this phenomenon, given that the hype surrounding this technology may seem more suited to a season of "American Idol" than to the calendar of fiber-optic conferences. However, it's important to note that there is a lot of serious work being done by a lot of very smart people to make the SDN dream a reality – and their efforts are beginning to bear fruit.

So let's look at what SDN is, where it came from, and where it's headed.

Defining SDN

SDN is such an all-encompassing term that it's difficult to define. To some, SDN is a way of switching networks globally in the most efficient way possible. To others, it's a way of letting packet-based networks better handle today's predominantly flow-based traffic such as over-the-top (OTT) video. To others still, SDN promises the commoditization of switching/routing hardware, similar to what has occurred with servers. Finally, to the data-center managers who are driving its rapid adoption, SDN offers a vendor-agnostic approach to data-center virtualization – initially within a site, eventually between geographical sites, and ultimately worldwide to create a global data center that acts as a single entity.

But when boiled down to its essential element, SDN is nothing more than the separation of the control plane from the data plane. The data plane focuses on its job of moving data, and it's a slave to a controller that may or may not be physically separate and/or centralized, though often SDN implies external centralized control.

The general concept itself is not new. The use of centralized, separate control to make more efficient decisions about the flow of something is almost as old as humanity itself, and there are more examples than this article has space to cover. Early traffic lights merely timed red-green cycles, each one independent of the others; now, centralized control of synchronized traffic lights greatly improves the throughput of roads. Early airports made local decisions on scheduling the landing and takeoff of planes; now, centralized air traffic control has dramatically increased the efficiency of air travel.

SDN in networking is not new either. We could argue that AT&T's Intelligent Call Processing system that sends messages over the Signaling System 7 network to route 800 calls was an example of voice-call SDN. On the router front, AT&T's Route Control Platform efforts presaged the benefits of centralized control of routers. In fact, there is little argument that separation of the control plane will improve a network's efficiency. The argument typically is more about how to best implement it.

OpenFlow

SDN can't be discussed without mentioning OpenFlow, though there are other competing protocols (I2RS, XMPP, etc.). Please note the word "protocol." By itself, OpenFlow does nothing. It's like the BIOS on a PC. It's the ambassador between hardware (the motherboard) and higher-layer software (the operating system). Higher-layer software applications (MS Office, Adobe, etc., in our analogy) must be written on top of OpenFlow to realize its value.

So why all the fuss? For the first time, a protocol exists to directly access the packet forwarding engine inside a router, which is a router's primary value-add, a source of billions of dollars in revenue over the years, and the last remaining hurdle in "virtualizing" the functionality.

The general concept is that, in addition to MAC and IP tables, an OpenFlow table is also populated as a router does its job. The router no longer makes decisions. It just accepts packets and fills in the table, then does what an external controller tells it to do, based on simple if/then/else rules. Using wildcards that act on packet flows enables a finer granularity of routing than has been available until now.
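To make the if/then/else idea concrete, here is a minimal sketch of a flow table with wildcard matching. It is an illustration only, not the OpenFlow wire format or any vendor's implementation; the names `FlowRule`, `lookup`, and the action strings are hypothetical, and the choice to hand unmatched packets to the controller is an assumption made for the example.

```python
from dataclasses import dataclass
from typing import Optional

WILDCARD = None  # a field left as None matches any value


@dataclass(frozen=True)
class FlowRule:
    """One hypothetical table entry: match fields plus an action."""
    priority: int
    action: str
    src_ip: Optional[str] = WILDCARD
    dst_ip: Optional[str] = WILDCARD
    dst_port: Optional[int] = WILDCARD

    def matches(self, packet: dict) -> bool:
        # IF every non-wildcard field equals the packet's value, the rule matches.
        for name in ("src_ip", "dst_ip", "dst_port"):
            wanted = getattr(self, name)
            if wanted is not WILDCARD and packet.get(name) != wanted:
                return False
        return True


def lookup(table, packet, default="send_to_controller"):
    """Return the action of the highest-priority matching rule.

    ELSE (no rule matches) fall back to `default`; here we assume the
    packet is handed to the external controller for a decision.
    """
    for rule in sorted(table, key=lambda r: r.priority, reverse=True):
        if rule.matches(packet):
            return rule.action  # THEN do what the controller installed
    return default


# Rules an external controller might have pushed down (illustrative values).
table = [
    FlowRule(priority=10, action="output:2", dst_ip="10.0.0.5"),
    FlowRule(priority=20, action="output:3", dst_ip="10.0.0.5", dst_port=443),
    FlowRule(priority=1, action="drop", src_ip="192.0.2.66"),
]

https_packet = {"src_ip": "10.0.0.1", "dst_ip": "10.0.0.5", "dst_port": 443}
print(lookup(table, https_packet))
```

The more specific rule (destination plus port) carries the higher priority, so HTTPS traffic to the same host can take a different path than everything else sent there. That per-flow distinction is the granularity wildcards make possible.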

Again, identifying packet flows is nothing new – NetFlow agents and tables have been around for years. But monitoring flows and intelligently switching them are two entirely different things.

SDN inside and outside the data center

While SDN means different things to different people, and its benefits are far reaching, the disparate fields of study have been gradually coalescing into two distinct disciplines: inside and outside the data center.

"Inside the data center" refers to virtualizing the switching and routing function, commoditizing the hardware, and having all vendors' hardware managed by a common external controller so the entire data center behaves as a single entity. "Outside the data center" refers to the physical transport networks that interconnect them. While both disciplines contain all network layers, routers, switches, fiber optics, etc., their requirements are very different primarily due to link distance and the latency it creates.

It's somewhat ironic that a protocol that looks to revolutionize the management of global networks and turn router architectures inside out had its catalyst inside the data center. But data centers have grown so large that their internal networks look a lot like global networks. In trying to manage these massive internal networks and improve their efficiency, data-center operators turned to SDN and OpenFlow to control them. And why not? Their computing, server, and storage resources are already virtualized. The only thing within the walls of data centers that has not been virtualized is the network itself.

As data centers have outgrown their walls and more are springing up all over the globe, their operators are now exploring ways of extending the use of OpenFlow beyond individual sites to between the data centers themselves. Doing so will require more than just a larger controller because now the physical transport layer of the network becomes involved.

That's a different job than the one for which OpenFlow was conceived. Today's networks that link data centers are interconnected with fiber optics. The physical transport layer of the network has become a complex switching element – just like packet switches – with directions, fibers, and colors as degrees of freedom. Moving forward, superchannels and variable baud rate modulation formats will add two more degrees of freedom.

The beauty of SDN though is that it doesn't care what a switching element looks like. Whether a router, switch, OTN switch, ROADM, etc., all are abstracted to be a simple switching element.
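One way to picture that abstraction is a common interface that every element implements in its own terms. The sketch below is a toy, assuming hypothetical class and method names (`SwitchingElement`, `cross_connect`, `provision`); it is not taken from any SDN controller.

```python
from abc import ABC, abstractmethod


class SwitchingElement(ABC):
    """What the controller sees: something that connects an ingress to an egress."""

    @abstractmethod
    def cross_connect(self, ingress: str, egress: str) -> str:
        ...


class PacketSwitch(SwitchingElement):
    def cross_connect(self, ingress: str, egress: str) -> str:
        return f"forward packets from port {ingress} to port {egress}"


class Roadm(SwitchingElement):
    def cross_connect(self, ingress: str, egress: str) -> str:
        # For an optical element the "ports" are directions, fibers, and colors.
        return f"route wavelength from {ingress} to {egress}"


def provision(path):
    """Apply the same call to every element on a path, whatever its type."""
    return [element.cross_connect(ingress, egress) for element, ingress, egress in path]


steps = provision([
    (PacketSwitch(), "1", "7"),
    (Roadm(), "east/1550.12nm", "west/1550.12nm"),
])
for step in steps:
    print(step)
```

The controller code in `provision` never checks what kind of device it is talking to, which is the point of the abstraction.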

Software-defined optical networking

The European Commission's "OpenFlow in Europe – Linking Infrastructure and Applications" (OFELIA) collaborative project includes a prototype implementation of SDN concepts in the optical wavelength-switched domain. In the prototype, a common OpenFlow-based umbrella integrated within a reconfigurable optical add/drop multiplexer (ROADM) provides integrated control of the network's circuit-based (optical) and packet-based switching layers. Additions to OpenFlow adapt the protocol to the strict switching constraints of optical networking, allowing OpenFlow to bridge layers. SDN is effectively transformed into "SDON" – software-defined optical networking.

SDON based on OpenFlow or some other protocol could one day support end-to-end orchestration of both IT and network resources with a single stroke. Configuration, management, and scaling of infrastructures could be drastically simplified, as holistic control of cloud computing, storage, and networking resources is delivered via a single API. That would potentially enable new opportunities at the network control layer for large-scale macroscopic network applications not yet envisioned. True network virtualization and associated capabilities such as capacity on demand, adaptive infrastructure, and dynamic service automation could be enabled as bandwidth, latency, and power consumption are optimized per application.

SDN challenges

The author of OpenFlow said he created it to improve security for a government application, which is ironic since security is often touted as OpenFlow's chief weakness. Indeed, having a central controller that commands an entire network would seem akin to aiming at a single bull's eye.

Conversely, having distributed control has proven even more problematic. With a network full of routers making independent decisions without visibility into what is happening elsewhere or across the network, it's relatively easy to devise attack strategies. A single rogue router can bring an entire network to its knees.

Meanwhile, there are two Achilles' heels in extending SDN architecture to the transport layer. One is the transport time from controller to "controlee." Simply put, the time it takes for control messages to travel from a centralized controller to a router elsewhere might be longer than the duration of the flow being controlled. The result is that the flows identified for rerouting have changed characteristics before the central controller can optimize them; the controller is working on dated information and always playing catch-up.
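A back-of-the-envelope calculation shows the scale of the problem. The sketch below assumes light travels through fiber at the vacuum speed divided by a refractive index of about 1.468 (a typical figure, used here as an assumption), and it treats controller processing time as a made-up 1 ms. The function names are hypothetical.

```python
C_KM_PER_S = 299_792.458   # speed of light in vacuum
FIBER_INDEX = 1.468        # assumed typical refractive index of the fiber


def one_way_delay_ms(distance_km: float) -> float:
    """Propagation delay over fiber, ignoring queuing and equipment latency."""
    return distance_km / (C_KM_PER_S / FIBER_INDEX) * 1000.0


def control_loop_ms(distance_km: float, processing_ms: float = 1.0) -> float:
    """Report to the controller, decide, and push a command back."""
    return 2 * one_way_delay_ms(distance_km) + processing_ms


def can_react_in_time(flow_duration_ms: float, distance_km: float,
                      processing_ms: float = 1.0) -> bool:
    """True if the control loop completes before the flow has ended."""
    return control_loop_ms(distance_km, processing_ms) < flow_duration_ms


for flow_ms in (5.0, 500.0):
    verdict = "in time" if can_react_in_time(flow_ms, 1000) else "too late"
    print(f"{flow_ms} ms flow, controller 1,000 km away: {verdict}")
```

With the controller 1,000 km away, propagation alone costs roughly 5 ms each way, so any flow shorter than about 10 ms is over before a command can arrive.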

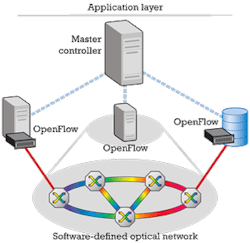

To solve this issue, the overall network has been divided into layers (sound familiar?). At the top sits an orchestration layer where global decisions are made at a coarse granularity. A master controller acts like a conductor of an orchestra (see Figure). These decisions are then passed to a network control layer, like the sections of an orchestra, to continue the analogy. The network controller runs the part of the network it's responsible for and makes finer granularity decisions. Finally, each device on the network gets commands. Whether the network controller directly manages each network device (instruments) in a flattened model or talks to another SDN agent (section lead) in an overlay model remains to be decided.
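The layering can be sketched in a few lines. This toy follows the flattened model, in which each network controller commands its devices directly; every class name, domain name, and command string is hypothetical and chosen only for illustration.

```python
class Device:
    """An instrument: it simply records the commands it is given."""

    def __init__(self, name: str):
        self.name = name
        self.commands = []

    def apply(self, command: str) -> None:
        self.commands.append(command)


class NetworkController:
    """A section of the orchestra: fine-grained decisions for one domain."""

    def __init__(self, domain: str, devices):
        self.domain = domain
        self.devices = list(devices)

    def handle(self, intent: str) -> None:
        # Translate one coarse intent into a command for each local device.
        for hop, device in enumerate(self.devices):
            device.apply(f"{intent} (hop {hop} in {self.domain})")


class Orchestrator:
    """The conductor: coarse, global decisions only."""

    def __init__(self, controllers):
        self.controllers = {c.domain: c for c in controllers}

    def request(self, intent: str, domains) -> None:
        # Decide which domains are involved; leave the details to each one.
        for domain in domains:
            self.controllers[domain].handle(intent)


metro = NetworkController("metro", [Device("roadm-1"), Device("roadm-2")])
core = NetworkController("core", [Device("router-1")])
conductor = Orchestrator([metro, core])
conductor.request("connect dc-a to dc-b", ["metro", "core"])

for controller in (metro, core):
    for device in controller.devices:
        print(device.name, device.commands)
```

Because only the coarse intent crosses the long distance between layers, the latency-sensitive per-device decisions stay close to the devices they affect.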

The second weakness and potential pitfall of centralized control of transport networks is that control plane messages often ride in the same physical fiber as the data itself. That creates a chicken-and-egg situation where the data can't flow until the control plane is up, but the control plane cannot function until the data flows. The simplest solution is to maintain physically separate control and data networks.

There's at least one large enterprise that owns and runs a massive data network, yet leases connectivity from a service provider for control plane traffic under the stipulation that it can't run in the same right-of-way as the company's network. However, with control plane bandwidth being such a small percentage of the total transported bandwidth, the temptation is great to simply run both control and data over the same fiber and rely on quality/class of service marking to guarantee priority.

Where SDN is headed

As part of its efforts to develop and standardize SDN and OpenFlow, the Open Networking Foundation (ONF) is shaping the protocol for the optical domain. But such is the potential of SDN that nearly everyone wants a piece of the action – not necessarily a bad thing since the challenge is huge and diverse.

Again, efforts appear to be splitting along the inside/outside-data-center divide. Inside efforts include reference designs for generic OpenFlow switches and their controllers as well as device drivers for the hardware and software for the various layers in the controller. Outside efforts include transport vendors updating their products with OpenFlow control sockets in the near term, with dedicated products to follow. Transport extensions are being worked on for the OpenFlow table, and alternatives to OpenFlow are starting to come out of the woodwork.

At the orchestration layer, standards bodies are working toward a common definition of a connectivity table so that all network devices at all layers can communicate basic information to a master-master controller.

All these activities are leading to demonstrations galore, including government, research, education, industry, and privately funded projects.

You name it, and it's happening in SDN.

JIM THEODORAS is senior director of technical marketing at ADVA Optical Networking.

About the Author

Jim Theodoras

Jim Theodoras is vice president, R&D at HG Genuine USA.