Choosing the right QoS solution for the network

Presenting the case for a dual-ended QoS solution as a win-win scenario for the enterprise customer and the service provider.

By Naim Malik

Aplion Networks

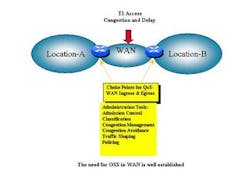

Quality-of-service (QoS) is beneficial whenever there is the possibility of link congestion. Points of substantial speed mismatch--such as Ethernet on the enterprise side, and T1 (1.544 Mbits/sec) access on the WAN side--and points of aggregation are congestion candidates.

These choke points--like the wide-area network (WAN) access link--need some mechanism for prioritizing traffic and enforcing the business policies of the enterprise. These policies include providing prioritized access to mission-critical applications, and ensuring packetized voice gets through the congestion point with sufficient bandwidth and within acceptable bounds of jitter, latency, and packet loss.

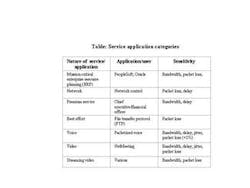

Buffering alone is not a good enough solution because, while buffering reduces packet loss, it adds delay that has a negative impact on the quality of certain time-sensitive applications. The table shows some sample service/application categories. For larger enterprises, the common open policy service (COPS) policy server/service mapping may be more appropriate.
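The delay cost of buffering can be estimated with simple arithmetic: a full buffer takes its size in bits divided by the link rate to drain. The sketch below assumes the T1 rate quoted above and a few hypothetical buffer sizes. It ignores framing overhead and packetization, so the figures are rough.

```python
# Rough estimate of the queuing delay a full buffer adds on a T1 access link.
# Buffer sizes are hypothetical; the link rate is the T1 figure quoted in the article.
T1_BITS_PER_SEC = 1_544_000


def queuing_delay_ms(buffered_bytes: int, link_bps: int = T1_BITS_PER_SEC) -> float:
    """Return the time, in milliseconds, the link needs to drain the buffer."""
    return buffered_bytes * 8 / link_bps * 1000


if __name__ == "__main__":
    for size_kb in (8, 32, 64):
        delay = queuing_delay_ms(size_kb * 1024)
        print(f"{size_kb} KB buffer -> {delay:.1f} ms")
```

Under these assumptions, a 64 KB buffer alone adds more delay than the roughly 250 ms bound the article cites later for audio and video.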

Despite promises to the contrary, the access network, where the data has to ride the copper line, is still the choke point for most businesses. At this point, bandwidth is neither plentiful nor free (see Figure). For nearly all business customers, there is more packet and data traffic being handled than their WAN bandwidth allows. In addition, packet data is increasingly carrying voice and video conferencing that, unlike much IP traffic, requires management of latency, packet loss, and jitter within certain tight limits.

Some method must be used to prioritize this traffic, ensuring that each application, user group, or user gets priority access to its requisite level as determined by the requirements of the business. This is where QoS comes in.
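One simple way to picture prioritization at the choke point is a strict-priority scheduler: the link always sends the highest-priority packet that is waiting. The sketch below is illustrative only. The class names and their ranking are assumptions, not categories taken from the article's table, and real devices usually combine strict priority with weighted or rate-limited queues to avoid starving low-priority traffic.

```python
import heapq
from itertools import count

# Hypothetical traffic classes; a lower number means higher priority.
PRIORITY = {"voice": 0, "mission_critical": 1, "best_effort": 2}


class PriorityScheduler:
    """Strict-priority queue: always dequeue the highest-priority packet first."""

    def __init__(self):
        self._heap = []
        self._seq = count()  # tie-breaker keeps FIFO order within a class

    def enqueue(self, traffic_class, packet):
        entry = (PRIORITY[traffic_class], next(self._seq), packet)
        heapq.heappush(self._heap, entry)

    def dequeue(self):
        """Return the next packet to send, or None if nothing is queued."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]


if __name__ == "__main__":
    sched = PriorityScheduler()
    sched.enqueue("best_effort", "web page")
    sched.enqueue("voice", "voice frame 1")
    sched.enqueue("mission_critical", "order entry")
    sched.enqueue("voice", "voice frame 2")
    while (pkt := sched.dequeue()) is not None:
        print(pkt)
```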

There are three approaches for providing a QoS solution:

• QoS via some type of customer premises equipment (CPE), such as an integrated access device (IAD) or router

• QoS provided by an edge switch, such as an IP service switch (IPSS)

• Dual-ended QoS, with CPE and intelligence at the network edge

Traditional QoS approach

The traditional approach is to use an enterprise CPE (enterprise router or IAD) to provide QoS in the upstream direction--towards the core of the network through the choke point of the network or the WAN access link. This assumes the CPE can support QoS via support for the Internet Engineering Task Force (IETF) differentiated services (DiffServ) standard.
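In DiffServ, each packet carries a six-bit differentiated services code point (DSCP) in the upper bits of the IP header's former type-of-service byte, and devices along the path treat packets according to that marking. The sketch below shows how a CPE might map traffic classes to code points. The code point values are the standard ones for EF, AF41, AF21, and best effort; the mapping from traffic class to code point is an assumption made for illustration, not a recommendation from the article.

```python
# Standard DSCP values (six-bit code points).
DSCP = {"EF": 46, "AF41": 34, "AF21": 18, "BE": 0}

# Hypothetical policy: which code point each traffic class receives.
CLASS_TO_DSCP = {
    "voice": "EF",
    "video_conferencing": "AF41",
    "mission_critical": "AF21",
    "best_effort": "BE",
}


def tos_byte(traffic_class: str) -> int:
    """Return the DS/ToS byte value for a traffic class.

    The DSCP occupies the upper six bits of the byte, so it is shifted
    left by two. Unknown classes fall back to best effort.
    """
    name = CLASS_TO_DSCP.get(traffic_class, "BE")
    return DSCP[name] << 2


if __name__ == "__main__":
    for cls in CLASS_TO_DSCP:
        print(f"{cls}: DSCP {tos_byte(cls) >> 2}, ToS byte 0x{tos_byte(cls):02X}")
```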

The CPE would also have to support sophisticated policy management tools for admission control, classification, congestion management, congestion avoidance, traffic shaping, and policing. Most CPE configurations do not support these tools. This approach has some nice features--it prevents certain traffic from making it onto the access link and allows for basic QoS. However, this approach also has some drawbacks.

First, unless the CPE contains a Stateful Packet Inspection (SPI) engine, which most IADs and enterprise routers do not support, application-level QoS cannot be easily integrated into the QoS policy of the enterprise. Second, there must be a simple way to link the SPI engine to the QoS engine. Most CPE does not support this linkage, even if it supports the SPI capability. As with most purchases, this is a situation of buyer beware.

The optimal architecture for delivering and administering QoS in the WAN uplink direction involves interaction (a bi-directional control flow) between the SPI engine and the QoS engine, under control of the services management system. What the IAD/router approach does not offer is QoS in the reverse direction, on traffic that is inbound over the WAN access link, since the provider edge switch has to play a role in this scenario. The situation becomes more complicated with network address translation (NAT), which obscures the IP addresses of the servers (or hosts) and users from the service provider and the Internet. Most businesses use the NAT capability if their IAD or router supports it.

Edge switch approach

A second approach to QoS involves an edge switch, such as an IP service switch, that enforces the QoS policies. In this case, a "dumb" device is used on the customer premises.

A major drawback to this approach is that the point at which traffic is shaped and admission control policies are enforced lies beyond the WAN access link, at the IP service switch, so upstream traffic has already crossed the congested link before any policy is applied. This approach can be characterized as the centralized intelligence model. Applying SPI--inspecting each IP packet for every customer--at the IP service switch is too resource intensive to be offered in that platform.

Dual-ended QoS

The third approach is to use an intelligent enterprise CPE, combined with a multi-service application switch, to provide QoS in both the upstream and downstream directions. This intelligent enterprise CPE should support sophisticated policy management tools for admission control, classification, congestion management, congestion avoidance, traffic shaping, and policing. It should also provide an SPI engine and services management tools that let the service provider simplify the configuration and administration of QoS policies. This approach has some nice features--it prevents certain traffic from making it onto the access link and allows for the prioritization of traffic per the business's policies and service application requirements.
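Of the policy tools listed above, policing is the easiest to illustrate. A common technique is the token bucket: tokens accumulate at the contracted rate up to a burst limit, and a packet is admitted only if enough tokens are available. The sketch below is a minimal, assumption-laden version. The class name, parameters, and rates are hypothetical, time is passed in explicitly to keep the example deterministic, and a shaper would queue non-conforming packets rather than reject them.

```python
class TokenBucket:
    """Minimal token-bucket policer (illustrative sketch, not a product feature)."""

    def __init__(self, rate_bps: float, burst_bytes: int):
        self.rate = rate_bps / 8       # refill rate in bytes per second
        self.capacity = burst_bytes    # maximum burst size in bytes
        self.tokens = float(burst_bytes)
        self.last = 0.0                # time of the previous check, in seconds

    def allow(self, packet_bytes: int, now: float) -> bool:
        """Return True if the packet conforms to the rate at time `now`."""
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.rate)
        self.last = now
        if packet_bytes <= self.tokens:
            self.tokens -= packet_bytes
            return True
        return False  # non-conforming: a policer would drop or re-mark it


if __name__ == "__main__":
    # Hypothetical contract: 8 kbit/s sustained, 1,500-byte burst.
    bucket = TokenBucket(rate_bps=8_000, burst_bytes=1_500)
    for t in (0.0, 0.5, 2.0):
        print(t, bucket.allow(1_500, now=t))
```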

Most existing networks have been designed for data applications that do not require real-time performance. Nor do most networks offer the sophisticated SPI-QoS interaction required for optimal use of the expensive WAN link. Because audio and video data must be received in near real-time (typically < 250 ms delay), QoS is a critical element of a successful converged network architecture. Otherwise, packetized voice and videoconferencing applications cannot be made business class.

The traditional over-provisioning of the network used to provide QoS in the core of the network is not a viable business option for the narrow WAN link, particularly when this option may call for an additional WAN circuit. QoS is an architectural issue that involves many devices, and it can initially be complex to configure. Only an integrated approach that provides a solution for the customer premises and the provider edge is a viable solution. The win-win scenario for the enterprise customer and the service provider is to architect the network for a dual-ended QoS solution.

Naim Malik is director of product management at Aplion Networks, headquartered in Edison, NJ. He may be reached via the company's Web site at www.aplion.com.