Multipath traffic distribution in the era of SDN

Our love affair with all things digital continues. We are, as a result, experiencing a disruptive scaling in network services. Simply put, we need to connect an ever-increasing number of things at higher and higher speeds. At the same time, we are seeing massive uptake of cloud-based services. Some of these services are pushing the current cloud paradigm — the centralization of information and compute resources — towards a more distributed cloud architecture. This move will require an evolution to new wide-area network (WAN) architectures and technologies, such as 5G and software-defined networking (SDN), to support edge-cloud deployments. But how will these changes affect the ways in which we distribute traffic in this more chaotic and dynamic environment?

Where we’re heading

In the land of classic multipath traffic distribution our goals are simple: survive failures, keep the network stable, and spread the load as evenly as possible across the whole network. Achieving this was never easy but, traditionally, access and subscriber networks grew steadily and usage patterns were predictable. However, today’s and tomorrow’s applications and services, such as 5G mobile, SD-WAN, video, augmented and virtual reality, and internet of things (IoT), impose different usage patterns that can only be met by a more agile and dynamic architecture based on network functions virtualization (NFV) and SDN.

Traditional networks have long deployed Layer 2 link aggregation and/or bundling and equal-cost multipath (ECMP) mechanisms to increase the available bandwidth capacity between the ingress and egress of a link or network demarcation points. However, this way of handling multipath traffic distribution is no longer good enough, especially with the dominance of cloud services today and the way that they are evolving beyond the traditional data center.

Hyperscale data centers initially had little interaction with the network outside of them. They accepted the network much as it had been originally designed and supplemented it, in the case of video, with content distribution networks. Within the data center, however, there was a need to deploy a different approach to network routing. Here the focus was on the benefits of centralizing both information and compute resources (increased reliability, security, and operational excellence), and bandwidth resources inside the data center were relatively inexpensive. SDN and virtualization gave data center operators the agility and scale they needed, but the optimization of traffic distribution was low on the list.

However, under localized legislation, or driven by operator and enterprise network architectures, workloads that normally run within hyperscale cloud services are moving towards smaller, workload-tailored, more distributed data centers or edge clouds. These workloads might run at premises owned by the service provider or the enterprise, or even at the subscriber level. These distributed cloud services empower ultra-performing, ultra-resilient, and ultra-reliable workloads, all of which need to be seamlessly connected (Figure 1).

Figure 1. The implementation of SDN can provide significant benefits.

To properly support these distributed cloud applications, the SDN-empowered network needs to connect everything together, from access to cloud services, via the most operationally optimal, scalable, reliable, and future-proof architecture. Underlying connectivity operations must match the agility and scalability of the NFV/SDN technologies to be most profitable.

Getting to NFV/SDN

Most current network implementations involve either the deployment of Layer 2 link aggregation/bundling or Layer 3 ECMP. However, these traditional technologies do not make use of all available bandwidth resources, which results in stranded network bandwidth. Nor do they provide sufficient mechanisms to upsell network resources by introducing flow constraints such as delay, reliability, link costs, or packet loss. These mechanisms also do not allow the network to be used as a consumable resource, which requires differentiating between the various options available and offering them as different services based upon attributes such as reliability, latency, and bandwidth.
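To make the stranded-bandwidth point concrete, here is a minimal Python sketch of hash-based ECMP. It is an illustration only, not any vendor's implementation: the hash function, the path names, and the flow rates are all assumptions. What it shows is that the path choice depends only on the packet header fields, so the size of a flow and the current load on a path play no part in the decision.

```python
import hashlib

def ecmp_pick(five_tuple, paths):
    """Pick a path by hashing the 5-tuple; flow size and path load are ignored."""
    key = "|".join(map(str, five_tuple)).encode()
    digest = hashlib.sha256(key).digest()
    return paths[int.from_bytes(digest[:4], "big") % len(paths)]

def load_per_path(flows, paths):
    """Sum the offered rate (Mb/s) that the hash places on each path."""
    load = {path: 0 for path in paths}
    for five_tuple, rate_mbps in flows:
        load[ecmp_pick(five_tuple, paths)] += rate_mbps
    return load

# Hypothetical equal-cost paths and flows:
# ((src_ip, dst_ip, protocol, src_port, dst_port), rate in Mb/s)
PATHS = ["path-A", "path-B", "path-C", "path-D"]
FLOWS = [
    (("10.0.0.1", "10.1.0.1", 6, 40001, 443), 900),
    (("10.0.0.2", "10.1.0.1", 6, 40002, 443), 850),
    (("10.0.0.3", "10.1.0.2", 17, 40003, 4789), 5),
    (("10.0.0.4", "10.1.0.3", 6, 40004, 22), 2),
    (("10.0.0.5", "10.1.0.4", 6, 40005, 80), 40),
    (("10.0.0.6", "10.1.0.5", 17, 40006, 53), 1),
]

if __name__ == "__main__":
    for path, mbps in load_per_path(FLOWS, PATHS).items():
        print(f"{path}: {mbps} Mb/s")
```

Because the two large flows are placed without regard to their rate, nothing prevents them from sharing one path while others sit nearly idle. The mechanism has no feedback loop to correct this.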

In contrast, SDN requires traffic to be optimized in real time based upon the network state and the required service levels of a flow. SDN controllers harvest real-time topology, network state, and business rules to optimally program the distribution of traffic across the network. At its foundation, SDN provides the toolset to distribute traffic more efficiently and, potentially, even deliver multi-tiered transport services (see Figure 2).

Figure 2. Data from a variety of sources helps SDN technology optimize network performance.

To provide the contracted traffic distribution behavior when programming a path for any given flow through the network, the SDN controller must be informed about the state of the core links (e.g., bandwidth utilization, latency) and about the programmatic SDN capabilities of the individual network components.

Network state can be harvested using streaming telemetry, which is commonly carried over gRPC (the Google-developed remote procedure call framework). With these kinds of technologies, the SDN controller can learn latency, used bandwidth, traffic queuing, and so on in real time.

The specific programmatic capabilities of network devices are often harvested by means of routing protocol extensions to BGP, IS-IS, or OSPF. This is important information for the SDN controller because these capabilities determine the complexity of the paths that the controller can program into the operator network. The main relevant capabilities are Maximum SID Depth (MSD), Entropy Label Capability (ELC), and Entropy Readable Label Depth (ERLD). MSD defines the number of segment identifiers (SIDs), in effect the number of segments or “hops”, that a network device can impose upon a flow. ELC informs the SDN controller that, when a flow is tagged with an entropy label, the device can act upon this value, helping to spray traffic fairly within the core network. ERLD informs the SDN controller how deep in the packet headers the network device can look to retrieve the entropy label.

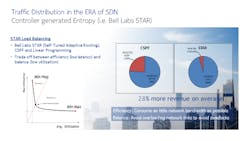
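The way these capabilities constrain path programming can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not a description of any controller's logic: the `NodeCapabilities` record, the function names, and the SID values are hypothetical. The entropy label is modeled as two extra labels (an entropy label indicator followed by the entropy label itself) placed below the segment list.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class NodeCapabilities:
    """Hypothetical record of what a controller learned about one node."""
    name: str
    msd: int    # maximum number of labels (SIDs) the node can impose
    elc: bool   # node can load-balance on an entropy label
    erld: int   # how many labels deep the node can read to find it

ENTROPY_PAIR = 2  # entropy label indicator + entropy label

def can_impose(ingress, segment_list, with_entropy=False):
    """True if the ingress node can push the whole label stack."""
    depth = len(segment_list) + (ENTROPY_PAIR if with_entropy else 0)
    return depth <= ingress.msd

def entropy_label_readable(node, labels_above_indicator):
    """True if the node supports entropy labels and can read deep enough.

    Simplification: the entropy label sits directly below its indicator,
    so its depth is the number of labels above the indicator plus two.
    """
    depth = labels_above_indicator + ENTROPY_PAIR
    return node.elc and depth <= node.erld

if __name__ == "__main__":
    ingress = NodeCapabilities("pe1", msd=5, elc=True, erld=4)
    transit = NodeCapabilities("p1", msd=10, elc=True, erld=4)
    segments = [16001, 16002, 16003, 16004]  # hypothetical SIDs

    print(can_impose(ingress, segments))                     # 4 labels fit in MSD 5
    print(can_impose(ingress, segments, with_entropy=True))  # 6 labels do not
    print(entropy_label_readable(transit, 4))                # depth 6 exceeds ERLD 4
    print(entropy_label_readable(transit, 2))                # depth 4 is readable
```

A controller that finds a path infeasible under these checks would have to shorten the segment list or place the entropy label higher in the stack; how it does so is outside the scope of this sketch.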

Once the network controller has harvested (in real time) the most recent network state and learned the programmatic capabilities of the network devices, it needs to program the paths through the core network accordingly. It is in the service provider’s best interest (for service and cost optimization) to spray the traffic across the network in such a manner that bandwidth, latency, and quality of service (QoS) resources are consumed most efficiently. The algorithms used to load balance traffic flows over the greatest number of paths depend upon the capabilities of the SDN controller. For example, the Bell Labs STAR (Self-Tuned Adaptive Routing) algorithm provides on average about 28% more traffic efficiency compared to classic non-SDN load balancing (Figure 3).

Figure 3. Algorithms such as Self-Tuned Adaptive Routing (STAR) leverage the SDN controller capabilities for network load balancing.
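As a rough illustration of state-aware path programming, the Python sketch below picks a path for a flow from harvested link state. It is not the STAR algorithm, whose internals are not described here. It is a simple stand-in heuristic: discard paths that violate the flow's latency bound or lack free bandwidth, then choose the path whose busiest link would end up least utilized. The `Link` record, the function names, and all numbers are assumptions.

```python
from dataclasses import dataclass

@dataclass
class Link:
    """Hypothetical link state as a controller might hold it."""
    name: str
    capacity_mbps: float
    used_mbps: float
    latency_ms: float

def path_latency(path):
    return sum(link.latency_ms for link in path)

def bottleneck_utilization(path, demand_mbps):
    """Utilization of the busiest link if the flow were placed on this path."""
    return max((link.used_mbps + demand_mbps) / link.capacity_mbps for link in path)

def select_path(paths, demand_mbps, max_latency_ms):
    """Return the feasible path with the lowest bottleneck utilization, or None."""
    feasible = [
        path for path in paths
        if path_latency(path) <= max_latency_ms
        and all(link.capacity_mbps - link.used_mbps >= demand_mbps for link in path)
    ]
    if not feasible:
        return None
    return min(feasible, key=lambda path: bottleneck_utilization(path, demand_mbps))

def place_flow(path, demand_mbps):
    """Record the flow's bandwidth on every link of the chosen path."""
    for link in path:
        link.used_mbps += demand_mbps

if __name__ == "__main__":
    short = [Link("a-b", 1000, 800, 5), Link("b-d", 1000, 700, 5)]
    long_ = [Link("a-c", 1000, 200, 12), Link("c-d", 1000, 300, 12)]

    best_effort = select_path([short, long_], demand_mbps=100, max_latency_ms=30)
    premium = select_path([short, long_], demand_mbps=100, max_latency_ms=15)
    print([link.name for link in best_effort])  # the lightly loaded, longer path
    print([link.name for link in premium])      # the only path within 15 ms
```

The same call with different constraints yields different paths, which is the basis for offering tiered transport services from one network.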

Putting it all together

Let’s look at how this kind of multipath traffic distribution might be used to offer SD-WAN services. SD-WAN services possess many of the desired capabilities for interconnecting the telco cloud: programmability, virtualization, agility, automation, and security. Because SD-WAN uses an overlay service model, it is independent of the underlying transport layer and takes advantage of any IP transport option available. Underlay network transparency is a key advantage when connecting remote, out-of-region clients and small enterprise branch offices over a commodity internet service. However, the use of commodity services also makes SD-WAN unable to support deterministic performance requirements such as those available to carrier-grade cloud services.

In contrast, managed IP/MPLS network services do support deterministic QoS guarantees with mission-critical reliability. The combination of MPLS, segment routing, and carrier SDN control adds scalable and efficient traffic engineering capabilities with tremendous control granularity and flexibility. The superior instrumentation of carrier transport services sets the bar on reliability and quality of service. However, the reach of typical managed services is limited and doesn’t extend beyond the WAN perimeter into the telco cloud data centers. Moreover, communication service providers also want to virtualize the WAN in logical network slices that can be optimized and shared for multiple applications.

The ideal solution for telco cloud connectivity is a hybrid WAN service that combines the qualities of software-defined overlay services and managed underlay transport services — without inheriting the shortfalls of each. It is a service that maintains the functional decoupling between IP overlay and underlay network services, but permits the coordination of routing and transport policies between layers. Management and control extend end-to-end: from connected clients in the access network, to distributed virtual network functions (VNFs) in the multi-service cloud edge, and VNFs in centralized telco cloud data centers and potentially even the public internet cloud.

Summary

SDN controllers bring value to existing core networks and introduce new ways to differentiate flow handling and traffic distribution. They will help an operator to steer flows along optimal paths and provide a competitive service offering for best-effort and premium traffic, as well as for any other type of traffic in between.

Looking ahead, platforms are in development that will harness the traffic distribution characteristics of SDN to enable telco-cloud automation or, in other words, cloud-optimized services with deterministic guarantees. They will enable agile and seamless connectivity for distributed network functions in telco edge and core data centers to support the many exciting but demanding applications and services that are coming.

Gunter Van De Velde is regional EMEA product line manager for IP & SDN technologies at Nokia. In this role, he is responsible for SDN, service chaining, and service provider routing. He has been founding Chairman of the Belgian IPv6 Task-Force since 2010, Secretary of the IETF Operational Directorate since 2013, and Chair at the IETF for the OPSEC (Operational Security) working group since 2011. Most recently, in 2018 he became Chair for the LSVR (Link State Vector Routing) working group.