Take control of your link-loss budget

Since the 1980s, network speeds and bandwidths have steadily increased to keep up with data, voice, and video applications. As a result, successive IEEE standards have had to define adequate link-loss budgets to ensure the specified channel would work. (For a primer on link-loss budgets, see the “Link-loss basics” sidebar at the end of this article.) Unfortunately for network planners, as speeds have grown, loss budgets have shrunk.

For example, when fiber Ethernet systems were running at a data rate of 10 Mbits/sec in the early 1980s over conventional multimode fiber (MMF), the standardized cable plant loss budget was 12.5 dB to support a maximum length of 2 km. When Ethernet speeds increased to 100 Mbits/sec, the loss budget tightened to a still generous 11 dB, using long-wavelength (1,300-nm) transceivers. Ten years later, when Gigabit Ethernet (1,000 Mbits/sec or 1 Gbit/sec) MMF specifications were developed for use with low-cost short-wavelength (850-nm) transceivers, loss budgets decreased significantly to 3.9 dB.

As today’s bandwidth-hungry applications creep upward of 10 Gbits/sec, IEEE 802.3ae has tightened the link-loss budget further to a low 1.8 dB for standard 50-µm MMF.

To compound the challenge high-speed networks present, the link-loss budget can be greatly affected by both the quality of fiber in the cable and the insertion loss of the connectors used. Fortunately, the advent of laser-optimized multimode fiber (LOMF) paired with low-cost, 850-nm vertical-cavity surface-emitting lasers (VCSELs) provides the opportunity to improve the system link-loss budget. Altering the characteristics of the 50-µm LOMF, defined as OM3 within ISO/IEC 11801, allows a portion of the loss penalty to be reassigned to the channel’s total allowable cable and connection loss budget.

SANs and data centers are some of the leading adopters of 10-Gbit/sec systems. Today’s primary link-loss concern in these applications stems from high-density connection requirements. A conventional 50/125-µm (500 MHz·km) channel 82 m long (or shorter) could work when using standard LC or SC connectors. Conventional LC or SC connectors have a maximum allowed loss of 0.75 dB per mated pair. When combining two mated-pair connections in a data center (one mated pair at each end) with the maximum allowed cable attenuation of 3.5 dB/km, the maximum link loss is an acceptable 1.79 dB, just inside the 1.8-dB budget.
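The arithmetic behind the 1.79-dB figure can be reproduced in a few lines. This is an illustrative sketch only: the function name `link_loss_db` and its parameters are our own, and the inputs are the values quoted above (82 m, 3.5 dB/km, 0.75 dB per mated pair).

```python
def link_loss_db(length_m, attenuation_db_per_km, connection_losses_db):
    """Worst-case passive link loss: cable attenuation plus all connection losses."""
    cable_loss = attenuation_db_per_km * length_m / 1000.0
    return cable_loss + sum(connection_losses_db)


# Values quoted in the text: 82 m of conventional 50/125-µm fiber,
# with one 0.75-dB mated pair at each end of the channel.
loss = link_loss_db(82, 3.5, [0.75, 0.75])
print(f"{loss:.2f} dB")  # 0.287 dB of cable + 1.50 dB of connectors -> 1.79 dB
```

Nearly all of the loss comes from the two connections; the 82 m of cable contributes less than 0.3 dB.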

But because multifiber push-on (MPO) connectors and cassettes have become popular in data center environments (due to their high-density format), problems have arisen with the number of possible mated connections. Each MPO cassette contains two mated connections, for a total loss of 1.50 dB per cassette. With a cassette at each end of the same 82-m link and the maximum allowed cable attenuation of 3.5 dB/km, the maximum link loss reaches 3.29 dB. This far exceeds the allowable 1.8-dB budget when using conventional 500 MHz·km 50/125-µm fiber.
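Another way to see the problem is to ask how much cable the 1.8-dB budget leaves room for once the connections are paid for. The sketch below is a rough illustration, not a design tool. It assumes one cassette at each end (which is what the 3.29-dB figure implies) and considers attenuation only; the actual supportable length also depends on fiber bandwidth. The function name `max_length_m` is hypothetical.

```python
def max_length_m(budget_db, attenuation_db_per_km, connection_losses_db):
    """Longest cable run (in meters) that keeps total loss within the budget.

    Returns 0.0 when the connections alone use up the whole budget.
    Attenuation only: bandwidth limits are NOT modeled here.
    """
    remaining_db = budget_db - sum(connection_losses_db)
    if remaining_db <= 0:
        return 0.0
    return remaining_db / attenuation_db_per_km * 1000.0


BUDGET_DB = 1.8              # budget quoted for standard 50-µm MMF
ATTENUATION_DB_PER_KM = 3.5  # maximum allowed cable attenuation

lc_or_sc = max_length_m(BUDGET_DB, ATTENUATION_DB_PER_KM, [0.75, 0.75])
cassettes = max_length_m(BUDGET_DB, ATTENUATION_DB_PER_KM, [1.50, 1.50])
print(round(lc_or_sc, 1), cassettes)  # 85.7 0.0
```

With two LC or SC mated pairs, roughly 86 m of cable still fits in the budget on attenuation alone. With two cassettes, the connections consume 3.0 dB before any cable is added, so no length of conventional fiber can satisfy the 1.8-dB limit.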

Table 1 shows the maximum link-loss budget when using conventional 50-µm fiber. The data demonstrate that an MTP/MPO cassette (which contains an MTP/MPO connector, a small loop of fiber within the cassette, and an LC mated-pair connection) would not meet the link-loss parameter per IEEE 802.3ae.

So how does one use MPO technology within a data center? The answer is to deploy a fiber constructed with glass properties superior to the minimum requirements established by IEEE 802.3ae. Using a higher-performance fiber than standard 50-µm MMF will either extend the supportable length or allow an increase in the link-loss budget.

The fiber is characterized by its differential mode delay (DMD). The DMD is the difference in time between the first arriving pulse and the last arriving pulse at the receiver. The lower the DMD, the lower the intersymbol interference (ISI) within the system. Improved fiber manufacturing processes can greatly reduce DMD. Lower ISI translates to higher bandwidth and corresponding increases in fiber channel length.

The 10-Gigabit Ethernet performance model is based on a non-laser-optimized, overfilled launch condition and conventional 50/125-µm multimode fiber (500/500 MHz·km). However, the IEEE recognized that the superior DMD performance of the OM3 fiber, which is 50-µm LOMF with an effective modal bandwidth (EMB) of 2,000 MHz·km or better, could support a distance guarantee of 300 m. As a result, when using VCSEL technology, OM3-grade fiber can support a Gigabit Ethernet channel to 1,000 m and 10-Gbit/sec Ethernet up to 300 m. A cable plant loss budget associated with the OM3 300-m length was conservatively calculated as 2.6 dB at 850 nm.
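To get a feel for what the bandwidth figures mean over a given run, a common first-order approximation treats modal bandwidth as the bandwidth-length product divided by the length. The sketch below applies that approximation to the two cases mentioned in the text. It is a rough illustration under that assumption, not the IEEE link model, and the function name is hypothetical. Note also that 500 MHz·km is an overfilled-launch figure while 2,000 MHz·km is an effective modal bandwidth, so the two are not strictly like-for-like.

```python
def modal_bandwidth_mhz(bandwidth_length_mhz_km, length_m):
    """Approximate modal bandwidth of a run, assuming it scales inversely with length."""
    return bandwidth_length_mhz_km / (length_m / 1000.0)


conventional = modal_bandwidth_mhz(500, 82)  # conventional 50/125-µm fiber at 82 m
om3 = modal_bandwidth_mhz(2000, 300)         # OM3 fiber at 300 m
print(round(conventional), round(om3))       # 6098 6667
```

Under this simple scaling, OM3 at 300 m offers about as much modal bandwidth as conventional fiber does at 82 m.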

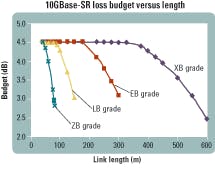

Alternatively, the low-DMD properties of LOMF cables enable systems with run lengths shorter than 300 m to realize increased link-loss budgets of up to 4.5 dB. This benefit is especially critical for data center and SAN applications, where short channel lengths and high connector densities are typical.

Network planners can derive even greater benefits from LOMFs that exceed the performance level of OM3 fiber. The figure shows the link-loss values and distances per IEEE 10GBase-SR (short reach) for a selection of 50-µm LOMFs.

BICSI has identified nine steps network planners should take to analyze a fiber-optic system’s attenuation and determine if the fiber they’re considering will meet their requirements. The nine manual steps are:

- 1. Calculate the fiber loss.

- 2. Calculate the connector loss.

- 3. Calculate the splice loss.

- 4. Calculate other component losses (e.g., bypass switches, couplers, splitters).

- 5. Calculate the total passive cable system attenuation by adding the results of Steps 1 through 4.

- 6. Calculate the system gain.

- 7. Determine the power penalties.

- 8. Calculate the link-loss budget by subtracting the power penalties from the system gain.

- 9. To verify the performance, subtract the passive cable system attenuation (result of Step 5) from the link-loss budget (Step 8). If this result is a negative number, the system will not operate.
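The nine steps map directly onto a short calculation. The following is a minimal sketch, assuming that system gain (Step 6) is the transmitter output power minus the receiver sensitivity; that definition, the function name `analyze_link`, and every input value in the example are assumptions made for illustration and are not taken from any standard or data sheet.

```python
def analyze_link(length_km, fiber_db_per_km, connector_losses_db,
                 splice_losses_db, other_losses_db,
                 tx_power_dbm, rx_sensitivity_dbm, power_penalties_db):
    """Follow the nine steps; return (passive attenuation, budget, margin) in dB."""
    fiber_loss = length_km * fiber_db_per_km                          # Step 1
    connector_loss = sum(connector_losses_db)                         # Step 2
    splice_loss = sum(splice_losses_db)                               # Step 3
    other_loss = sum(other_losses_db)                                 # Step 4
    passive = fiber_loss + connector_loss + splice_loss + other_loss  # Step 5
    system_gain = tx_power_dbm - rx_sensitivity_dbm                   # Step 6 (assumed definition)
    penalties = sum(power_penalties_db)                               # Step 7
    budget = system_gain - penalties                                  # Step 8
    margin = budget - passive                                         # Step 9
    return passive, budget, margin


# Hypothetical inputs, chosen only to exercise the calculation.
passive, budget, margin = analyze_link(
    length_km=0.1, fiber_db_per_km=3.5,
    connector_losses_db=[0.75, 0.75], splice_losses_db=[], other_losses_db=[],
    tx_power_dbm=-4.0, rx_sensitivity_dbm=-10.0, power_penalties_db=[3.0],
)
print(f"attenuation {passive:.2f} dB, budget {budget:.2f} dB, margin {margin:.2f} dB")
if margin < 0:
    print("Negative margin: the system will not operate")
```

Keeping each loss category as its own list makes it easy to add a connection or splice and see immediately whether the margin stays positive.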

An easier way to determine your budget is to use a link-loss calculator. This Microsoft Excel-based tool prompts the user to select the type of connector and the number of mated pairs, and then to enter the link length of the system. The calculator performs the necessary calculations and outputs the resulting cable plant loss budget.

This calculator is the only tool available that will actually allow you to trade off fiber cable bandwidth for more connection loss. It was developed after extensive system modeling and testing of different connector options at the Data Communications Competence Center (DCCC) at Nexans Inc.

The NetClear model behind the calculator expands upon the original IEEE 10-Gigabit Ethernet model by accounting for the variability in individual connector modal noise when more than two connectors are used. Modal noise is caused by the interference of the wavefronts of different modes within the fiber at the connector interface. The IEEE model assumes a constant modal noise penalty of 0.3 dB, even as the connector loss increases. However, the DCCC found that modal noise steadily increases with connector loss, which makes the NetClear model a more accurate benchmark for links with higher-than-normal link loss. In fact, for a 50-m test length, the IEEE model gave a maximum usable length of 60 m, whereas the more detailed NetClear model shows the link reaching only 15 m (see highlighted row in Table 2).

When system designers and consultants develop networks to support Gigabit Ethernet applications for today, with the intention of migrating to 10-Gigabit Ethernet in the near future, the use of LOMFs is mandatory to ensure a viable migration path. Using the link-loss calculator enables designers to consider various configurations and confirm their designs will support 10-Gigabit Ethernet operations. For further information on the test procedures and to download the link-loss calculator, refer to the white paper on link loss at www.netclear-channel.com.

Carol Everett Oliver, RCDD, is the marketing analyst of Berk-Tek, a Nexans Company. Lisa Huff is the technical manager of the Advanced Design and Applications (ADA) group in the Nexans Data Communications Competence Center.

Link-loss basics

A fiber-optic channel is only as good as the fiber installed beneath the sheath. The fiber-optic channel distance and capacity are characterized by the cable’s bandwidth combined with its attenuation, both of which are defined by the IEEE standards. Under these specifications, the channel must adhere to link-loss budget limits that are defined by the performance characteristics of the optical cable and the electronic equipment.

Bandwidth is the information-carrying capacity of the system and is expressed as a frequency-length product, in megahertz-kilometers (MHz·km). Attenuation is the insertion loss, measured as the difference between the power coupled into the fiber and the output power at the other end of the cable. The channel insertion loss varies with the cabling system and its associated optoelectronics. Together these two basic parameters create the link-loss budget, which is the maximum allowable signal loss from transmitter to receiver within a channel. Link loss is expressed in decibels (dB).
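Because loss is quoted in decibels, the "difference" between input and output power is really a ratio on a logarithmic scale. A small sketch of the conversion follows; the function name and the sample power values are hypothetical.

```python
import math


def insertion_loss_db(power_in_mw, power_out_mw):
    """Loss in dB between the power coupled into the fiber and the power at the far end."""
    return 10.0 * math.log10(power_in_mw / power_out_mw)


# Hypothetical measurement: half of the launched power arrives at the far end.
print(round(insertion_loss_db(1.0, 0.5), 2))  # 3.01
```

Losing half the optical power costs about 3 dB, which shows how little light a 1.8-dB budget allows a channel to lose.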