Merchant silicon eases MSPP development

Once limited to the transport of voice traffic over long distances, SONET/SDH networks now play an expanded role in today's service-provider networks. They combine voice, video, and data services over a single robust transport mechanism as they evolve to provide increased bandwidth, flexibility, manageability, and efficiency.

As part of this evolution, service providers are requesting next-generation multiservice-provisioning-platform (MSPP) equipment with ever-increasing feature sets at falling price points. Fortunately, once-rare telecommunications semiconductors have become more commonly available, and the industry is now mature enough to evolve to an open MSPP architecture.

Such an open architecture would lead to modular MSPPs featuring open internal interfaces. That will enable manufacturers to focus efforts on their core competencies while using off-the-shelf components to build the platform's hardware. This approach will shorten the development time of leading-edge, cost-effective MSPPs. An open architecture should also enable manufacturers to reuse modular cards and software across multiple platforms.

The availability of standard components targeted at the critical MSPP functions underpins this open architecture movement. In the area of backplane design, proprietary interfaces are giving way to higher-speed standard backplane technology. In the area of line cards, standard electrical components have long been available and have evolved along with the optical components to enable the design of very-high-bandwidth line cards. But with this increase in bandwidth, the line-card design challenge has shifted from increasing the line interface speed or density to managing the hefty aggregated traffic and the recovery from error conditions. Lastly, off-the-shelf ICs are now available for scalable monolithic switch fabrics that maximize the reuse potential of subsystems within a product line.

For years, backplane-based systems evolved by moving to wider buses and faster signal clock rates. However, that evolution stopped when designs reached the 1-Gbit/sec range. It then became impossible to pass data reliably over parallel buses because signal skew and load problems increased. Designers were forced to shift from parallel buses to serial interconnects.

Backplane designers must solve new problems as data rates evolve beyond 1 Gbit/sec. The signal integrity of these high-speed serial links is affected by reflections due to impedance mismatches along the signal path, signal attenuation from backplane materials, added noise due to crosstalk, and intersymbol interference. As networking applications increase in speed and density, line-card throughput requirements are now commonly 20 Gbits/sec; fabric-card throughput requirements are rarely less than 160 Gbits/sec and sometimes more than 640 Gbits/sec. Ensuring signal integrity thus becomes more critical than ever.

The selection of a backplane technology can be a daunting task, and the temptation to use a proprietary solution tailored to the problem can be powerful. However, system designers should consider that the selection of a standard backplane interface technology is the foundation of any successful open architecture strategy. Fortunately, the rewards of using standard technology are also very attractive, as designers have access to the prolific results of the numerous companies joined under the umbrella of the Optical Internetworking Forum (OIF).

The OIF is creating an interoperable specification for higher-speed backplanes. It previously produced the TFI-5 specification to address SONET/SDH backplane designs at 2.5 Gbits/sec from the physical layer to the framing and management layers. OIF member companies offer a wide range of products compliant with the TFI-5 specification, both as standalone SerDes products (see Figure 1) and as MSPP-specific components with integrated SerDes technology. Such devices are usually equipped with integrated termination resistors, programmable output swing, transmit pre-emphasis, and receive equalization, resulting in improved signal integrity and a low bit-error rate.

While today's systems typically run data rates of 2.5 Gbits/sec across 40 inches of backplane trace, next-generation high-end systems will be looking at rates of 5 Gbits/sec and beyond. OIF members are already offering next-generation technologies that meet the OIF's CEI-6 specification to address the requirements for 4.9–6.4 Gbits/sec (see Figure 2).

Improvements in fiber-optic technology enable the creation of networks with greater capacity. In parallel, carriers are deploying new services and collapsing their network hierarchies, thus increasing the system density as well as adding new service requirements like Ethernet over SONET and transmultiplexing functions. While standard products solve the great majority of the hardware challenges of building these new line cards, the software layer must now deal with an ever-increasing number of paths, each requiring performance monitoring and management.

Ultimately, the increase of the line-card bandwidth translates into the aggregation of data from thousands of customers onto a single fiber. Therefore, the loss of a single high-capacity optical path could affect a large region and disrupt crucial services. Networks must be designed to avoid such disruptions—hence, the automatic protection-switching (APS) schemes available in SONET/SDH, such as unidirectional path-switched ring/subnetwork connection protection (UPSR/SNCP) and bidirectional line-switched ring/multiplex section shared protection ring (BLSR/MSSPRING), which define a strict restoration time of <50 msec.

The traditional APS implementation relies heavily on software. The line-card framer monitors the path for alarms and defects and generates an interrupt when it detects such a condition. The companion microprocessor receives the interrupts, keeps a filtered status for each path, and sends a summary status report to the fabric through the management channel. At the fabric, a microprocessor receives the summary status from all the line cards and must compute the fabric-switch map based on the status of each path.

As the line-card and system density increases, bottlenecks can appear: The line-card processor can experience difficulties processing all the performance monitoring and alarms required to generate the consequential actions within the allocated time; the management plane can have insufficient bandwidth to carry all the information quickly between the line cards and switch cards; or the switch-fabric-processing burden could grow enough to overwhelm even the most powerful processors.

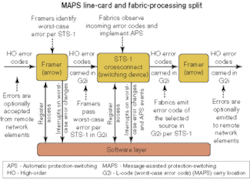

This problem is not new, and system designers have conceived a number of solutions, all involving some form of proprietary signaling within the system. Standard-product designers have likewise conceived solutions to this problem for open architectures. An algorithm such as message-assisted protection-switching (MAPS) addresses the challenge of completing a large number of protection switches, all triggered by a single failure event such as a cut in a DWDM fiber, within the prescribed APS time constraints.

The MAPS algorithm is designed to provide hardware support for APS (see Figure 3). The ingress framer monitors the incoming alarms and defects as well as the K-byte information to generate the worst-case error code for each incoming STS-1/VC-3 or VT1.5/TU-11. The worst-case error for each high-order or low-order path is then transmitted to the fabric card using an in-band communication channel composed of unused overhead bytes on the data serial links. Using MAPS greatly reduces the line-card processor load, which enables the card to better respond to the remaining tasks. In addition, the system communication channels are freed from the burden of exchanging the time-sensitive information from the line cards to the fabric cards.

The switch fabric also requires hardware assistance to meet the APS restoration time requirements. When the MAPS algorithm is used for UPSR or SNCP protection, the switch fabric receives an error code for each path, high-order or low-order, and can then autonomously select which protection path best meets service needs. When MAPS is used for BLSR or MSSPRING protection, the switch fabric acts as a focal point for evaluating the error codes and reports the results to software, which in turn controls the switch settings.
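The division of labor described above, where the ingress framer reduces each path's conditions to one worst-case code and the fabric picks between the two copies of a UPSR/SNCP path, can be sketched roughly as follows. The severity scale, the function names, and the tie-breaking rule are all assumptions made for illustration; the article does not define the actual MAPS code values or selection policy.

```python
from enum import IntEnum


class ErrorCode(IntEnum):
    """Hypothetical severity scale (not from any specification); higher is worse."""
    OK = 0
    SIGNAL_DEGRADE = 1
    SIGNAL_FAIL = 2


def worst_case(codes):
    """Ingress-framer step: collapse every condition seen on one path into one code."""
    return max(codes, default=ErrorCode.OK)


def select_path(working, protect, current="working"):
    """Fabric step for UPSR/SNCP: choose the copy with the lower error code.

    On a tie the current selection is kept, an assumed policy that avoids
    needless switching.
    """
    if working < protect:
        return "working"
    if protect < working:
        return "protect"
    return current


# One path: the working copy reports a failure, the protect copy is clean.
working_code = worst_case([ErrorCode.OK, ErrorCode.SIGNAL_FAIL])
protect_code = worst_case([ErrorCode.OK])
selected = select_path(working_code, protect_code)  # "protect"
```

Because each decision depends only on two small codes, it is the kind of per-path comparison that hardware can repeat for every path without involving the fabric processor.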

SONET/SDH MSPPs are built around single-stage or multistage switch-fabric architectures. Such switch fabrics are often created using a monolithic single-stage switching device, and standard off-the-shelf ICs exist that switch up to 240 Gbits/sec at a granularity of STS-1/VC-3 or up to 40 Gbits/sec at a granularity of VT1.5/TU-11 with or without MAPS support.

However, different approaches must be considered when the application requires a larger switch fabric, since the maximum bandwidth of single-stage fabrics is set by the available semiconductor technology. A multistage approach enables designers to scale the bandwidth beyond the capabilities of a monolithic switch but at the expense of increased device count, power, and software complexity. As an example, building a 640-Gbit/sec fabric out of 160-Gbit/sec switching elements requires 12 elements organized in a Clos architecture of three columns of four elements. The multistage fabric therefore consumes 12 times the power of a single element for a fourfold increase in bandwidth.
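The arithmetic behind that example can be written out as a short sketch. It mirrors only the simplified counting used in the text (one column of elements per stage, each column sized to carry the full fabric bandwidth) and assumes every element draws the same power; it is not a general Clos sizing rule, and the function name is illustrative.

```python
def multistage_elements(target_gbps, element_gbps, stages=3):
    """Count elements when each stage is a column wide enough for the target bandwidth."""
    per_stage, remainder = divmod(target_gbps, element_gbps)
    if remainder:
        per_stage += 1  # round up to a whole element
    return per_stage * stages


elements = multistage_elements(640, 160)  # 3 columns of 4 elements = 12
power_ratio = elements                    # relative to one element, assuming equal power each
bandwidth_ratio = 640 // 160              # 4
```

Under these assumptions the fabric needs 12 elements, so power grows three times faster than bandwidth.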

In addition, because the different path flows compete for switching resources, multistage fabrics can block and require rearrangement to complete new traffic selection. This rearrangement incurs a time penalty for completing a connection-setting algorithm for each affected path. The computation required to complete the full switch map can become excessive and performance-limiting, especially when dealing with multiple simultaneous path failures.

Bit-sliced architectures offer a simpler alternative to multistage fabrics. Sliced architectures distribute the data over parallel switching elements of a single-stage fabric, thus taking one data flow (e.g., an STS-1) and spreading it over multiple parallel devices. Each element, while switching the same bandwidth, will switch smaller and incomplete data grains, thereby increasing its overall switching potential.

In the same example (creating a 640-Gbit/sec fabric with 160-Gbit/sec switching elements) in a sliced architecture, each byte from each STS-1 on each link will be spread across four links, each carrying 2 bits of that byte. Consequently, the four links carry the same aggregate bandwidth, but each link now has visibility over the entire traffic; this technique is called "di-bit slicing." The 640-Gbit/sec fabric can now be built using four parallel 160-Gbit/sec elements in a single-stage fabric. Each element, while having the same bandwidth, switches the smaller grains and therefore acts on the entire system's traffic. The resulting power consumption of the single-stage fabric is four times that of an individual element. The single-stage fabric is also strictly nonblocking and requires no software rearrangement. It is therefore better suited to provide deterministic switching time, which simplifies the design of traffic protection protocols with stringent time requirements.
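As a rough illustration of di-bit slicing, the sketch below splits one byte into four 2-bit slices, one per switching element, and reassembles it on the far side. The bit ordering (slice 0 carries the most significant bits) and the function names are assumptions; real devices may map bits to links differently.

```python
def slice_byte(value, slices=4):
    """Split one byte into equal-width slices, most significant bits first (assumed order)."""
    if slices not in (1, 2, 4, 8):
        raise ValueError("slices must divide 8 evenly")
    bits = 8 // slices
    mask = (1 << bits) - 1
    return [(value >> (8 - bits * (i + 1))) & mask for i in range(slices)]


def merge_slices(parts):
    """Reassemble a byte from slices produced by slice_byte."""
    bits = 8 // len(parts)
    value = 0
    for part in parts:
        value = (value << bits) | part
    return value


dibits = slice_byte(0b11100100)   # [3, 2, 1, 0]: one 2-bit grain per element
restored = merge_slices(dibits)   # 0b11100100 again
```

Each element sees a 2-bit grain of every byte, which is why all four elements can apply the same switch map to the full set of paths.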

An additional advantage of a bit-sliced fabric is system scalability. Using the same line cards and backplane, system designers can implement different switch-fabric options by using the same switch element in different tiled configurations. For example, a 160-Gbit/sec switch element could be used on its own in byte mode (8-bit); two elements could produce a 320-Gbit/sec single-stage fabric in nibble mode (4-bit); or, as explained above, four elements could produce a 640-Gbit/sec single-stage fabric in di-bit mode (2-bit). All these configurations would have the same interfaces, the same latency, and nearly identical software and would only differ by the connections between the switch-fabric-card backplane connector and the switch elements.
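The three tiled configurations follow one pattern: the fabric bandwidth scales with the number of elements while the slice width shrinks by the same factor. A minimal sketch of that relationship, with a hypothetical helper name and the 160-Gbit/sec element from the example as the default:

```python
MODE_NAMES = {8: "byte", 4: "nibble", 2: "di-bit"}


def tiled_config(elements, element_gbps=160):
    """Describe a single-stage sliced fabric built from 1, 2, or 4 identical elements."""
    if elements not in (1, 2, 4):
        raise ValueError("the text describes only 1, 2, or 4 elements")
    bits_per_slice = 8 // elements
    return {
        "fabric_gbps": elements * element_gbps,
        "bits_per_slice": bits_per_slice,
        "mode": MODE_NAMES[bits_per_slice],
    }


configs = [tiled_config(n) for n in (1, 2, 4)]
```

Running it for one, two, and four elements reproduces the 160-, 320-, and 640-Gbit/sec options in byte, nibble, and di-bit mode.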

The industry has evolved greatly since MSPPs were first proposed and is today more prepared than ever to deliver on the promise of these platforms. Service providers are now realizing the benefits of such equipment in reducing their operational expenses. At the same time, these providers continue to pressure manufacturers to reduce pricing in an attempt to get the most from their capital expenditures.

Unfortunately, the post-bubble years have put equipment manufacturers in a bind since they lack the manpower needed to fulfill all the service providers' demands. But those same post-bubble conditions have made leading-edge telecommunications silicon readily available, enabling equipment manufacturers to slash time-to-market and drastically cut development time in offering new industry-standard platforms.

The silicon making all that possible includes SerDes devices, framers, virtual tributaries, pointer processors, high-order fabrics, low-order fabrics, Ethernet over SONET/SDH chips, and FPGAs. These elements are making it possible to build complete MSPP systems using merchant vendor silicon when the base system architecture is compatible with industry standards.

Furthermore, advances in backplane throughput, line-card throughput, hardware-assisted APS protocols, and advanced switch-fabric architectures have converged to make MSPPs viable, scalable, affordable, and the de facto platform of choice for future equipment deployments.

Pierre Vaillancourt is a product manager at PMC-Sierra's Service Provider Division (Burnaby, British Columbia). He can be reached at www.pmc-sierra/networking.