Designing, manufacturing, testing 10-GbE for the real world

Although the IEEE 802.3ae standard was ratified in June 2002, many 10-Gigabit Ethernet (10-GbE) optical component and transceiver companies are still defining the steps they must take to ensure their products are standards-compliant and perform at peak levels in the real world. Traditional SONET/SDH devices must be modified to meet the new standard, and so must the test methodologies used in their design and manufacturing.

The two most important tests are transmitter eye-mask and stressed receiver sensitivity (SRS). To guarantee system and vendor interoperability (the goal of most standards), IEEE 802.3ae raises the bar by requiring not only that each transmitter have a clean eye but also that each receiver work reliably with a stressed signal.

In the well-known transmitter eye-mask test, a high-speed sampling oscilloscope superimposes sampled data bits to create an eye diagram. The transmitted eye must be clear of samples in the central masked region to guarantee a good transmitted signal. However, contributors to the IEEE 802.3ae standard knew that clean signals could be degraded before they reached the receivers. Consequently, the SRS test was created to subject receivers to the same worst-case conditions they may encounter in actual deployments.
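The pass/fail logic of a mask test can be sketched as counting how many sampled points land inside a keep-out polygon. The sketch below assumes a hexagonal center mask on an eye normalized to one unit interval (UI) horizontally and to the 0-to-1 logic levels vertically. The parameter names `x1`, `x2`, `y1` and the values in the usage lines are hypothetical placeholders, not the mask coordinates specified in IEEE 802.3ae, and a real mask also has keep-out regions above and below the eye that this sketch omits.

```python
from typing import List, Tuple

# (time in UI from 0 to 1, amplitude normalized so logic 0 = 0.0 and logic 1 = 1.0)
Point = Tuple[float, float]

def hexagon_mask(x1: float, x2: float, y1: float) -> List[Point]:
    """Vertices of a hypothetical center-eye hexagon, listed counter-clockwise."""
    return [
        (x1, 0.5),          # left point
        (x2, y1),           # bottom-left
        (1 - x2, y1),       # bottom-right
        (1 - x1, 0.5),      # right point
        (1 - x2, 1 - y1),   # top-right
        (x2, 1 - y1),       # top-left
    ]

def inside_convex(p: Point, poly: List[Point]) -> bool:
    """True if p lies strictly inside a convex, counter-clockwise polygon:
    p must be on the left of every edge (positive cross product)."""
    n = len(poly)
    for i in range(n):
        ax, ay = poly[i]
        bx, by = poly[(i + 1) % n]
        cross = (bx - ax) * (p[1] - ay) - (by - ay) * (p[0] - ax)
        if cross <= 0:
            return False
    return True

def mask_hits(samples: List[Point], mask: List[Point]) -> int:
    """Number of sampled points that violate (fall inside) the center mask."""
    return sum(inside_convex(s, mask) for s in samples)

# Placeholder mask and three example samples: eye center, near the top rail, at the crossing.
mask = hexagon_mask(x1=0.25, x2=0.40, y1=0.25)
samples = [(0.5, 0.5), (0.5, 0.95), (0.0, 0.5)]
print("mask hits:", mask_hits(samples, mask))
```

Only the sample at the eye center violates this placeholder mask; a compliant transmitter would show zero hits over the full acquisition.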

The SRS test applies a signal to the receiver that simultaneously has a poor extinction ratio, added horizontal (timing) jitter, vertical (amplitude) jitter, slowed rise and fall times, and a low optical modulation amplitude. Under these conditions, the system must still operate with a bit-error rate (BER) better than 10⁻¹². Compliance with the IEEE 802.3ae standard makes it more likely that systems will interoperate and be ready for plug-and-play operation.

This contrasts with SONET/SDH testing, where near-perfect signals are typically used to characterize systems and margins are then added to accommodate possible conditions in the field. As experience has demonstrated, taking shortcuts on SRS testing, such as adding a penalty to the results of SONET/SDH tests, can lead to components and transceivers that do not meet the 802.3ae specifications.

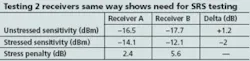

Because the optical world has traditionally used unstressed sensitivity as a figure of merit for receiver quality, most people would assume that a receiver that performs well in unstressed sensitivity testing would perform similarly in stressed sensitivity testing, just with some offset. When people first looked at the IEEE 802.3ae spec, they thought they could make an unstressed sensitivity measurement and simply add a fixed penalty of x dB to account for the stressed condition. As the Table illustrates, that proved incorrect.

The Table compares the performance of two receivers tested with the same signal for both unstressed and stressed sensitivity. Under the unstressed condition, receiver B performed better than receiver A by 1.2 dB; surprisingly, however, the stressed sensitivity of receiver B was 2 dB worse than that of receiver A. Additionally, the stress penalties (the difference between stressed and unstressed sensitivity) of the two receivers showed no correlation. These data show that the initial assumption, that one can test with only an unstressed signal and add a fixed offset, is not valid, and that it is easy to be misled without SRS testing.
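The two differences quoted above are enough to quantify how badly a fixed offset fails. The sketch below uses only those relative figures; it does not reproduce the Table's absolute values. Sensitivities are in dB, referenced to receiver A's unstressed value, with lower meaning more sensitive, and receiver A's stressed value `s_a` is a hypothetical placeholder. Whatever `s_a` is, the stress penalties differ by 1.2 + 2 = 3.2 dB, so an offset derived from one receiver mispredicts the other by the same 3.2 dB.

```python
from typing import Tuple

def stress_penalty(unstressed_db: float, stressed_db: float) -> float:
    """Stress penalty: stressed minus unstressed sensitivity (positive = worse)."""
    return stressed_db - unstressed_db

def compare_receivers(s_a: float) -> Tuple[float, float]:
    """Relative sensitivities in dB, referenced to receiver A unstressed (0 dB).
    s_a (receiver A stressed) is an arbitrary placeholder value."""
    u_a = 0.0
    u_b = u_a - 1.2   # B is 1.2 dB better than A, unstressed
    s_b = s_a + 2.0   # B is 2.0 dB worse than A, stressed

    penalty_gap = stress_penalty(u_b, s_b) - stress_penalty(u_a, s_a)

    # Fixed-offset shortcut: predict B's stressed value by adding A's penalty.
    predicted_s_b = u_b + stress_penalty(u_a, s_a)
    prediction_error = s_b - predicted_s_b
    return penalty_gap, prediction_error

for s_a in (1.0, 2.5, 4.0):
    gap, err = compare_receivers(s_a)
    print(f"s_a={s_a}: penalty gap={gap:.1f} dB, fixed-offset error={err:.1f} dB")
```

The shortcut would rank receiver B as 1.2 dB better under stress when it is actually 2 dB worse.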

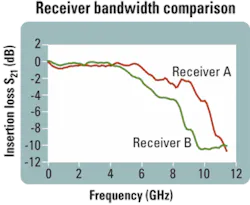

The Figure shows the bandwidths of receivers A and B. Receiver A has a wider bandwidth than receiver B. Since both receivers operate at 10 Gbits/sec, is more bandwidth good or bad?

When optimizing for SONET/SDH, the goal is to minimize noise, which means using lower bandwidth. That is why receiver B performed better than receiver A in the unstressed sensitivity measurement. In the 10-GbE world, the concerns include both noise and distortion. More bandwidth is bad in terms of noise but good in terms of distortion. A 10-GbE device is more sensitive to distortion because its eye is already at the limit in terms of bandwidth, so any additional distortion tends to close the eye even further. Higher bandwidth is more effective at limiting distortion. As shown in the Table, receiver A, with the wider bandwidth, indeed had better sensitivity when tested with the SRS signal.

Plenty of companies still have not incorporated SRS testing into their manufacturing process, running the risk of having unhappy customers. There is enough lot-to-lot and device-to-device variability that testing is a requirement.
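The noise-versus-distortion tradeoff described above for receivers A and B can be illustrated with a deliberately simple model. The sketch below treats each receiver as a single-pole low-pass filter and emulates the SRS signal's slowed rise and fall times with one extra pole in front of it. For a monotonic step response g(t) sampled one bit period T after an edge, the worst-case vertical eye opening is 2g(T) - 1, and white-noise RMS scales with the square root of bandwidth. The 8-GHz and 6-GHz receiver bandwidths and the 4-GHz stress pole are hypothetical values, not read from the Figure, and real receivers and SRS signals are far more complex.

```python
import math

LINE_RATE = 10.3125e9      # 10GBASE-R serial line rate, bits/s
T_BIT = 1.0 / LINE_RATE    # one unit interval (UI), seconds

def step_at_one_ui(poles_hz):
    """Step response, one UI after the edge, of one or two cascaded
    single-pole low-pass sections with the given 3-dB frequencies."""
    x = [2 * math.pi * f * T_BIT for f in poles_hz]
    if len(x) == 1:
        return 1 - math.exp(-x[0])
    if len(x) != 2:
        raise ValueError("sketch supports one or two poles only")
    a, b = x
    if math.isclose(a, b):
        return 1 - (1 + a) * math.exp(-a)   # repeated pole
    return 1 - (b * math.exp(-a) - a * math.exp(-b)) / (b - a)

def worst_case_eye(poles_hz):
    """Worst-case vertical eye opening (1.0 = full swing), sampling one UI
    after the edge; valid because these step responses are monotonic."""
    return 2 * step_at_one_ui(poles_hz) - 1

def relative_noise(bw_hz, ref_bw_hz):
    """RMS white-noise ratio between two single-pole filters (~ sqrt of BW)."""
    return math.sqrt(bw_hz / ref_bw_hz)

RX_A, RX_B = 8e9, 6e9   # hypothetical receiver bandwidths (A wider)
STRESS_POLE = 4e9       # hypothetical pole emulating slowed SRS edges

for label, extra in (("clean", []), ("stressed", [STRESS_POLE])):
    eye_a = worst_case_eye(extra + [RX_A])
    eye_b = worst_case_eye(extra + [RX_B])
    print(f"{label:8s} eye A={eye_a:.3f}  eye B={eye_b:.3f}  gap={eye_a - eye_b:.3f}")
print(f"noise B/A = {relative_noise(RX_B, RX_A):.3f}")
```

Under these assumptions the narrower receiver B has less noise, but the eye-opening gap between the two receivers more than doubles once the input edges are slowed, mirroring the argument that a 10-GbE eye already near its bandwidth limit is hurt most by further distortion.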

Variability can come from different lots of components that individually show only minor variations but together can degrade the final device's sensitivity. Although there is often a tradeoff between driving that variation toward zero and lowering costs, engineers must find a middle ground that still lets products meet the standard's performance requirements.

Additionally, component designs are often modified over a product’s lifetime, which might cause performance to change. Without adequate testing during the manufacturing process, these problems would not be caught.

In the past, implementing an SRS test system caused many headaches, let alone building one that agreed with a system built by someone else. Leading SRS test equipment vendors have since resolved these challenges. Today, SRS testing takes no more time than unstressed testing, so why would you not test per the standard? In fact, it is also beneficial to screen all component vendors to ensure compliance.

The key benefit of SRS testing per the standard is that it acts as an insurance policy: it verifies that equipment will operate under worst-case conditions, reducing the number of products returned for poor performance.

Jan Peeters Weem is an RF/optics design engineer at Intel (Santa Clara, CA, www.intel.com).