Photonic integration diverges down two paths

by Stephen Hardy

The need to lower cost, power consumption, and package size has led to significant advancements in photonic integration. Infinera (www.infinera.com) has made the biggest splash in this area with its monolithically integrated InP photonic integrated circuits (see sidebar). Emerging companies such as Luxtera (www.luxtera.com) and Laser-wire (www.laserwire.com) claim to have harnessed the promise of silicon photonics. Unfortunately for the industry at large, the business models of these three companies call for them to hoard their successes for use in their own products. This fact means the mainstream component and subsystem vendors must create their own strategies for photonic integration. Central to the construction of these battle plans is the question of which functions should be integrated monolithically and which are best handled in some other fashion.

Optical communications components and subsystems traditionally have comprised discrete components: separate lasers, modulators, and control elements, or separate filters and waveguides. By definition, each of these components was made separately and then somehow strung together, usually through increasingly expensive manual labor. The transfer of manufacturing operations to lower-wage Asian countries is a result of this trend.

Monolithic integration places functions that discrete elements previously performed onto a single wafer. "Hybrid" integration is a step in between discrete and monolithic; it implies a closer bonding of functions than just co-packaging discrete components, perhaps involving the joining of different wafers together.

The most obvious path toward monolithic integration involves functions that can be created with the same materials process, or multiple instances of the same function, as in the case of laser or modulator arrays. This implies, of course, that it's physically possible and desirable to create the functions you want in the chosen material. For example, since InP is a common material for lasers, most monolithic integration efforts for transmitters begin with that material. Since silica works better for filters and waveguides, those functions generally won't be attempted in InP. Arrayed waveguide gratings (AWGs) can be created in either material. However, InP AWGs tend to be more temperature-sensitive; the intended application will determine whether this drawback is important.
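
To put rough numbers on the AWG temperature-sensitivity point, the following Python sketch applies the first-order estimate dλ/dT ≈ (λ/n_g)·dn/dT. The group indices and thermo-optic coefficients are representative assumed values, not data from any vendor quoted here, and the sketch ignores the athermal designs and active temperature control that real devices may use.

```python
# Rough estimate of AWG passband drift with temperature, using the
# first-order relation d(lambda)/dT ~ (lambda / n_g) * dn/dT.
# Material numbers are representative assumptions, not vendor data.

LAMBDA_NM = 1550.0        # operating wavelength (C-band)
GRID_SPACING_NM = 0.8     # ~100-GHz ITU grid spacing near 1550 nm

MATERIALS = {
    # name: (group index n_g, thermo-optic coefficient dn/dT per kelvin)
    "silica": (1.47, 1.0e-5),
    "InP": (3.4, 2.0e-4),
}


def drift_nm_per_k(n_g: float, dn_dt: float, wavelength_nm: float = LAMBDA_NM) -> float:
    """First-order drift of an AWG passband, in nm per kelvin."""
    return wavelength_nm * dn_dt / n_g


def drift_over_range(material: str, delta_t_k: float) -> float:
    """Total passband shift (nm) for a temperature swing of delta_t_k kelvin."""
    n_g, dn_dt = MATERIALS[material]
    return drift_nm_per_k(n_g, dn_dt) * delta_t_k


if __name__ == "__main__":
    swing = 70.0  # assumed uncontrolled 0-70 C operating range
    for name, (n_g, dn_dt) in MATERIALS.items():
        shift = drift_over_range(name, swing)
        print(f"{name:6s}: {drift_nm_per_k(n_g, dn_dt):.3f} nm/K, "
              f"{shift:.2f} nm over {swing:.0f} K "
              f"({shift / GRID_SPACING_NM:.1f} channel spacings)")
```

Under these assumptions an InP AWG drifts nearly ten times faster than a silica one, roughly 0.09 versus 0.01 nm/K, so an uncontrolled InP filter can walk across several 100-GHz channels while a silica one stays within about one.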

Equally, just because monolithic integration appears possible doesn't necessarily mean that it makes sense. "Often, high levels of integration come at a performance price," says Graeme Maxwell, vice president, hybrid integration, at CIP Technologies (www.ciphotonics.com). "Monolithic integration especially can suffer from this if you're trying to do very large numbers of integration on InP."

For example, there's a limit to how closely you can integrate lasers in an array, Maxwell says, because of optical, thermal, and potentially electrical cross-talk issues. Getting each laser to operate consistently at a unique wavelength can also prove challenging, particularly if you use thermal control.
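
The following Python sketch shows, in rough numbers, why thermal crosstalk complicates wavelength control in an array. It assumes a temperature tuning coefficient of about 0.1 nm/K (a typical order of magnitude for DFB lasers) and a hypothetical coupling factor describing how much of one laser's temperature change reaches its neighbor; neither figure comes from CIP.

```python
# Rough numbers behind thermal tuning in a laser array.
# Assumptions (not from the article): ~0.1 nm/K temperature tuning and a
# hypothetical fraction of one laser's temperature change leaking to its neighbor.

C_M_PER_S = 299_792_458.0  # speed of light in vacuum


def grid_spacing_nm(delta_f_ghz: float, wavelength_nm: float = 1550.0) -> float:
    """Convert a frequency spacing to a wavelength spacing: dl = l^2 * df / c."""
    lam_m = wavelength_nm * 1e-9
    return lam_m ** 2 * (delta_f_ghz * 1e9) / C_M_PER_S * 1e9


def heater_swing_k(shift_nm: float, tuning_nm_per_k: float = 0.1) -> float:
    """Temperature change needed to move one laser by shift_nm."""
    return shift_nm / tuning_nm_per_k


def neighbor_shift_nm(heater_delta_k: float, coupling: float,
                      tuning_nm_per_k: float = 0.1) -> float:
    """Unwanted wavelength shift of an adjacent laser when a fraction
    `coupling` of one laser's temperature change reaches it."""
    return tuning_nm_per_k * coupling * heater_delta_k


if __name__ == "__main__":
    one_channel = grid_spacing_nm(100.0)            # ~0.80 nm at 1550 nm
    swing = heater_swing_k(one_channel)             # ~8 K to tune one channel
    error = neighbor_shift_nm(swing, coupling=0.2)  # hypothetical 20% leakage
    print(f"100 GHz = {one_channel:.2f} nm; heater swing {swing:.1f} K; "
          f"neighbor moves {error:.2f} nm")
```

With 20 percent coupling, moving one laser by a full 100-GHz channel would drag its neighbor by about 0.16 nm, a fifth of the channel spacing, so the heater settings across the array cannot be chosen independently of one another.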

Monolithic integration may also make it difficult for a device to address a wide range of applications, says Ed Murphy, group CTO, Transmission and Integrated Photonics Business Unit, JDSU (www.jdsu.com). "You tend to have significant performance trade-offs when you integrate because you end up making compromises between material choices or process choices. So you can't necessarily get the ideal behavior out of the device," he explains. One obvious corollary to monolithic integration is that if you're starting in InP and making a transmitter, for example, the modulator will be made in the same material. That means it won't match the performance that LiNbO3 modulators can provide, which, for all practical purposes, shuts the device out of conventional high-performance long-haul applications.

Manufacturing yield also could take a hit with a monolithic device; a bad modulator means the entire transmitter chain is no good, for example. And since monolithic devices take a long time to design and perfect, "the difficulty is that you really need to anticipate the market over a fairly long period of time, with a specialized chip," Murphy says.
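
The yield penalty Murphy describes compounds multiplicatively. The toy model below, in Python, uses hypothetical per-element yields; it assumes failures are independent and that hybrid parts are screened before assembly, so only the joining steps can fail. It deliberately leaves out the cost of the parts that screening throws away and of the assembly itself, which is where hybrid integration pays its own price.

```python
# Toy comparison: monolithic chip yield vs. a hybrid assembly of pre-tested parts.
# All yields are hypothetical; failures are assumed independent.
from math import prod
from typing import Sequence


def monolithic_yield(element_yields: Sequence[float]) -> float:
    """Every element must work on the same die, so the yields multiply."""
    return prod(element_yields)


def hybrid_assembly_yield(joint_yield: float, n_joints: int) -> float:
    """Parts are screened before assembly (known-good parts), so only the
    joining steps can fail; each joint succeeds with probability joint_yield."""
    return joint_yield ** n_joints


if __name__ == "__main__":
    laser, modulator, monitor = 0.90, 0.85, 0.98  # hypothetical element yields
    mono = monolithic_yield([laser, modulator, monitor])
    hybrid = hybrid_assembly_yield(0.97, n_joints=2)
    print(f"monolithic: {mono:.3f}  hybrid (after screening): {hybrid:.3f}")
```

With these made-up numbers, roughly one monolithic transmitter in four is scrapped along with its working laser and monitor, while the screened hybrid assembly loses about 6 percent. Ten elements at 90 percent each would leave only about a third of monolithic dies usable.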

The bottom line is not that monolithic integration should be avoided, but that it should be approached carefully with a clear goal in mind that matches the benefits expected. "Where the driver is footprint and faceplate density and real estate, and there is some latitude on the specification, then monolithic is a strong play," says Chris Clarke, vice president of strategy and chief engineer at Bookham (www.bookham.com). "Where the footprint isn't the primary consideration and the performance and other things are, or even time to market because it generally takes longer to do the monolithic than you can do with hybrid, then the hybrid is the [choice]."

Pairing monolithic with hybrid

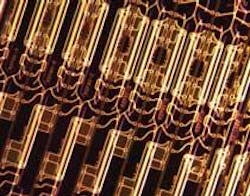

Thus all three companies are looking at both monolithic and hybrid approaches toward component design. Both JDSU and Bookham are working on monolithically integrating tunable lasers and Mach-Zehnder modulators to enable tunability in small packages, such as XFP transceivers. Andy Carter, vice president, technology, at Bookham, also points to his company's recently announced 40-Gbit/sec tunable transmitter assembly. The device includes two nested Mach-Zehnder modulators, another Mach-Zehnder modulator, and "a whole string" of control and setup electrodes and monitor detectors monolithically integrated into a single 7-mm chip (see photo above).

JDSU, meanwhile, has integrated 480 discrete functions monolithically into five planar lightwave circuits (PLCs) for its two-degree reconfigurable optical add/drop multiplexer. It has now turned its attention to amplifiers. At ECOC in Brussels last October, the company announced a "planar integrated amplifier." Besides PLC technology, the amplifier includes integrated photodetector arrays that combine nine devices into one and an integrated isolator design that replaces up to eight isolators with a single component. The amplifier, slated to be available next year, will be half the size of current EDFAs, JDSU says. The company also has a monolithically based tunable laser and laser arrays, Murphy adds.

CIP used last October's ECOC to unveil its new HyBoard integration platform, which combines monolithically integrated InP actives and passives, monolithically integrated planar waveguides and related functions, and micromachined silica submounts in a hybrid fashion.

"Our view is that you want to use the highest level of monolithic integration that is cost-effective. But you don't want to be religious about only going down one approach. You want to be able to bring in the most appropriate technology and integrate it into a platform to make it the highest performance at the lowest cost," Maxwell says.

This combination of hybrid and monolithic appears to be the common path toward upcoming 40- and 100-Gbit/sec components and subsystems, the sources agree. While Murphy says that his company hasn't come to any conclusions on the exact path it will take in this area, he says that PLCs and LiNbO3 modulators for such applications are currently under development at JDSU. Theoretically, for 100-Gbit/sec dual-polarization QPSK applications, one could see nested modulators monolithically integrated, polarization rotation and combination functions monolithically integrated into a PLC, and the two integrated together in a hybrid fashion.

However, such chips, particularly on the receive side, would be extremely complex, he cautions. Bookham's Carter echoes these sentiments. "Generally, things like polarization are quite hard to handle in a monolithic approach. I'm not saying it can't be done; it can be done, but it's more at the research level," he says.
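
Murphy's dual-polarization QPSK example also shows why nested modulators appear in these designs: QPSK carries 2 bits per symbol, typically generated by an I/Q pair of Mach-Zehnder modulators nested inside a larger one, and polarization multiplexing doubles the capacity again. The Python sketch below computes the resulting symbol rate; the overhead figure is a hypothetical parameter, not any standard's value.

```python
# Back-of-envelope symbol rate for a dual-polarization QPSK channel.
# The FEC/framing overhead is an assumed parameter; 0 means payload only.

BITS_PER_SYMBOL_QPSK = 2  # QPSK: four phase states -> 2 bits per symbol
POLARIZATIONS = 2         # polarization multiplexing doubles the capacity


def required_gbaud(line_rate_gbps: float, overhead: float = 0.0) -> float:
    """Symbol rate (GBaud) needed to carry line_rate_gbps of payload."""
    gross_gbps = line_rate_gbps * (1.0 + overhead)
    return gross_gbps / (BITS_PER_SYMBOL_QPSK * POLARIZATIONS)


if __name__ == "__main__":
    print(f"{required_gbaud(100):.1f} GBaud (payload only)")
    print(f"{required_gbaud(100, overhead=0.12):.1f} GBaud (hypothetical 12% overhead)")
```

Payload alone works out to 25 GBaud, a quarter of the 100-Gbit/sec line rate; any FEC or framing overhead raises that figure proportionally.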

Despite the challenges, photonic integration, be it hybrid or monolithic, seems to be the best approach for meeting future optical communications requirements. The trick will be matching the right integration strategy to each application.

Infinera speeds PIC development

Infinera, widely credited with boosting monolithic photonic integration from lab curiosity to commercial reality, is thinking along the same lines as the component and subsystem suppliers quoted in this article when it comes to supporting speeds of 40 and 100 Gbits/sec. Not surprisingly, however, the company is also thinking more aggressively.

The heart of the systems company's DTN platforms is a pair of photonic integrated circuits (PICs): active and passive monolithically integrated devices that together combine 60 discrete devices onto two chips, 50 of them on the transmit PIC. The devices currently support a total of 100 Gbits/sec per chip (10 channels of 10 Gbits/sec each) and have surpassed 100 million hours in the field without failure, the company recently announced.

Infinera has embarked on the next generation of PIC, with a goal to support 400 to 500 Gbits/sec per device. David Welch, chief marketing and strategy officer at Infinera, reports that the new PICs will support phase-modulated transmission and therefore will have to integrate a higher number of devices per chip, likely more than 200. The company has already demonstrated that it can support QPSK or DQPSK modulation formats. On the passive side, company engineers have developed the ability to accommodate polarization combining and "polarization diverse" technologies, Welch reports.

The current efforts reflect a very aggressive roadmap toward higher speed and greater functional complexity per PIC. "[What] we always come back to is being able to double the bandwidth on a chip every three years, to go from our 100-gigabit to 400-gigabit to nominally 1 terabit, 2 terabits, or 4 terabits in future generations," Welch says. To accomplish these goals, research must advance in three areas, according to Welch: increasing spectral efficiency through phase modulation capabilities, increasing speed per channel, and increasing the number of channels per PIC.

These goals are quite compatible with an essentially monolithic approach, Welch believes. "Usually what people I think are referring to with [the term 'hybrid integration'] is to have the optical wave go in and out of a couple of platforms. It might have an InP laser going to a silica bench to come back out on some other connection. That type of packaging, we don't see the value of it," he says.
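
Welch's three levers multiply together. The Python sketch below models per-PIC capacity as channels × symbol rate × bits per symbol × polarizations and computes how long a strict doubling every three years would take to reach each target he names. The configurations are hypothetical illustrations, not Infinera's designs; the first simply reproduces today's 10 × 10-Gbit/sec PIC, assuming on-off keying at 10 GBaud.

```python
# How the three roadmap levers multiply into per-PIC capacity, plus the
# timeline implied by a strict "double every three years" rule.
# Example configurations are hypothetical, not Infinera's actual designs.
from dataclasses import dataclass
from math import log2


@dataclass
class PicConfig:
    channels: int           # wavelengths per PIC
    gbaud: float            # symbol rate per channel
    bits_per_symbol: int    # 1 for on-off keying, 2 for (D)QPSK
    polarizations: int = 1  # 2 if polarization multiplexing is used

    def capacity_gbps(self) -> float:
        return self.channels * self.gbaud * self.bits_per_symbol * self.polarizations


def years_to_reach(target_gbps: float, start_gbps: float = 100.0,
                   doubling_period_years: float = 3.0) -> float:
    """Years needed if capacity doubles every doubling_period_years."""
    return doubling_period_years * log2(target_gbps / start_gbps)


if __name__ == "__main__":
    today = PicConfig(channels=10, gbaud=10, bits_per_symbol=1)
    # Hypothetical: same channel count and symbol rate, QPSK on two polarizations.
    phase_mod = PicConfig(channels=10, gbaud=10, bits_per_symbol=2, polarizations=2)
    print(today.capacity_gbps(), phase_mod.capacity_gbps())
    for target in (400, 1000, 2000, 4000):
        print(f"{target} Gbit/s: ~{years_to_reach(target):.1f} years")
```

Under a strict three-year doubling, 400 Gbits/sec would arrive six years after today's 100 Gbits/sec and 4 Tbits/sec roughly 16 years after. In this toy model, quadrupling capacity without adding channels or raising the symbol rate takes both QPSK and polarization multiplexing.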